The Ice Lake Benchmark Preview: Inside Intel's 10nm

by Dr. Ian Cutress on August 1, 2019 9:00 AM EST

Posted in: CPUs, Intel, GPUs, 10nm, Core, Ice Lake, Cannon Lake, Sunny Cove, 10th Gen Core

Section by Andrei Frumusanu

SPEC2017 and SPEC2006 Results (15W)

SPEC2017 and SPEC2006 are suites of standardized tests used to probe overall performance across different systems, architectures, microarchitectures, and setups. The code has to be compiled, and the results can then be submitted to an online database for comparison. The suites cover a range of integer and floating point workloads, and can be heavily optimized for each CPU, so it is important to check how the benchmarks are being compiled and run.

We run the tests in a harness built through Windows Subsystem for Linux, developed by our own Andrei Frumusanu. WSL has some odd quirks, with one test not running due to a WSL fixed stack size, but it is good enough for like-for-like testing. SPEC2006 is deprecated in favor of 2017, but remains an interesting comparison point in our data. Because our scores aren’t official submissions, as per SPEC guidelines we have to declare them as internal estimates on our part.

For compilers, we use LLVM for both the C/C++ and Fortran tests, and for Fortran we’re using the Flang compiler. The rationale for using LLVM over GCC is better cross-platform comparisons to platforms that only have LLVM support, as well as future articles where we’ll investigate this aspect more. We’re not considering closed-source compilers such as MSVC or ICC.

clang version 8.0.0-svn350067-1~exp1+0~20181226174230.701~1.gbp6019f2 (trunk)

clang version 7.0.1 (ssh://git@github.com/flang-compiler/flang-driver.git 24bd54da5c41af04838bbe7b68f830840d47fc03)

-Ofast -fomit-frame-pointer

-march=x86-64

-mtune=core-avx2

-mfma -mavx -mavx2

Our compiler flags are straightforward, with basic -Ofast and relevant ISA switches to allow for AVX2 instructions. Despite ICL supporting AVX-512, we have not currently implemented it, as it requires a much greater level of finesse with instruction packing. The best AVX-512 software uses hand-crafted intrinsics to provide the instructions, as per our 3DPM AVX-512 test later in the review.
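As a rough illustration of how the flags listed above fit together on a command line, here is a minimal Python sketch that assembles a compile invocation. The flags are the ones quoted in this section; the compiler name passed in, the `-c`/`-o` usage, and the file names are illustrative assumptions and not a description of the actual harness.

```python
import shlex

# Flags as quoted in the article: aggressive optimization, a generic
# x86-64 baseline tuned for AVX2-era cores, with FMA/AVX/AVX2 enabled.
COMMON_FLAGS = [
    "-Ofast",
    "-fomit-frame-pointer",
    "-march=x86-64",
    "-mtune=core-avx2",
    "-mfma",
    "-mavx",
    "-mavx2",
]


def compile_command(compiler, source, output):
    """Build an argv list for compiling one source file to an object file.

    `compiler`, `source`, and `output` are hypothetical placeholders.
    """
    return [compiler, *COMMON_FLAGS, "-c", source, "-o", output]


if __name__ == "__main__":
    # Hypothetical file names, for illustration only.
    print(shlex.join(compile_command("clang", "example.c", "example.o")))
```

Note that no AVX-512 switch appears in the list, which matches the text: the binaries are limited to AVX2-class vector instructions.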

For these comparisons, we will be picking out CPUs from across our dataset to provide context. Note that some of these may be higher-power processors.

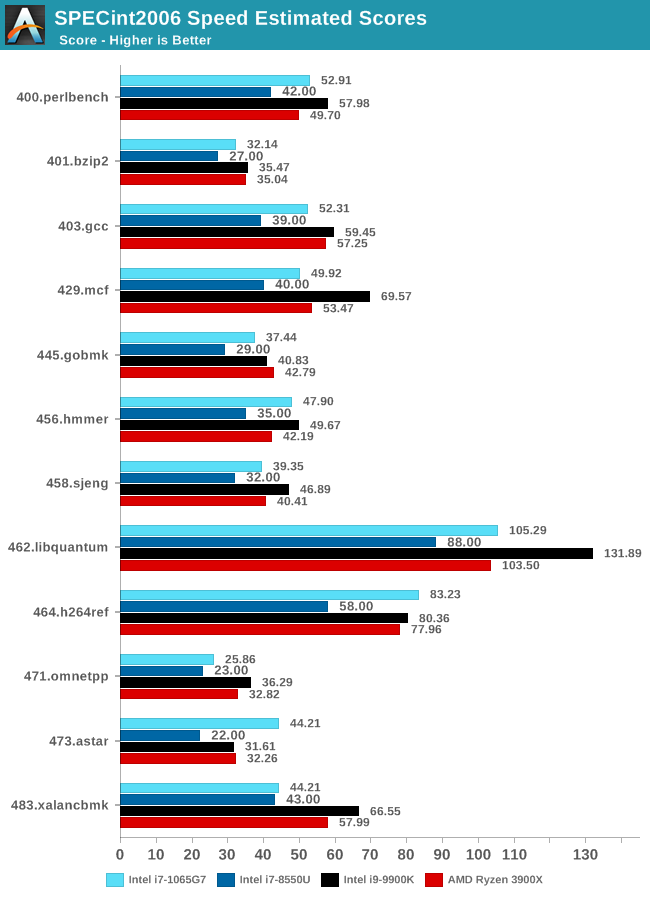

SPECint2006

Amongst SPECint2006, the one benchmark that really stands out beyond all the rest is 473.astar. Here the new Sunny Cove core is showcasing some exceptional IPC gains, nearly doubling the performance over the 8550U even though it’s clocked 100MHz lower. The benchmark is extremely sensitive to branch mispredictions, and the only conclusion we can reach to rationalise this increase is that the new branch predictors on Sunny Cove are doing an outstanding job and represent a massive improvement over Skylake.

456.hmmer and 464.h264ref are very execution bound and have the highest actual instructions per clock metrics in this suite. Here it’s very possible that Sunny Cove’s vastly increased out-of-order window is able to extract a lot more ILP out of the program and thus gain significant increases in IPC. It’s impressive that the 3.9GHz core here manages to match and outpace the 9900K’s 5GHz Skylake core.

Other benchmarks here which are limited by other µarch characteristics show various increases depending on the workload. Sunny Cove’s doubled L2 cache should certainly help with workloads like 403.gcc and others. However, because we’re also memory latency limited on this platform, the increases aren’t quite as large as we’d expect from a desktop variant of ICL.

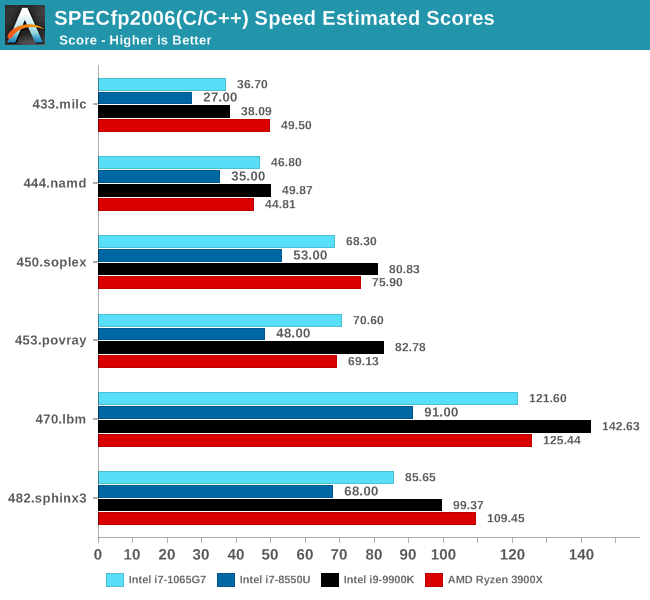

In SPECfp2006, Sunny Cove’s wider out-of-order window can again be seen in tests such as 453.povray, as the core is posting some impressive gains over the 8550U at similar clocks. 470.lbm is heavy on both the instruction window and data stores, and the core’s doubled store bandwidth certainly helps it here.

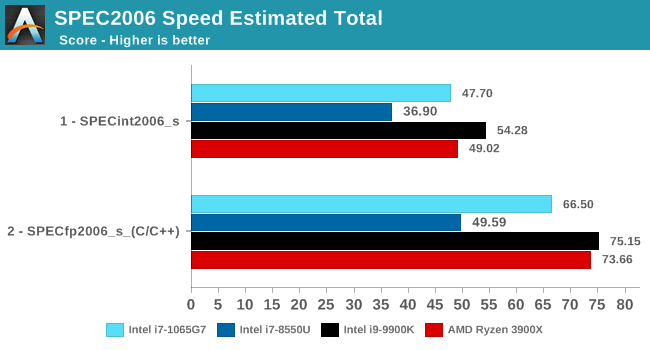

Overall in SPEC2006, the new i7-1065G7 beats a similarly clocked i7-8550U by a hefty 29% in the int suite and 34% in the fp suite. Of course this performance gap will be a lot smaller against 9th gen mobile H-parts at higher clocks, but these are also higher TDP products.
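SPEC's official composite metrics are geometric means of per-benchmark ratios. Assuming the overall figures quoted above are aggregated the same way (the article does not spell this out), a suite-level uplift can be sketched as follows. The benchmark names and scores in the example are made-up placeholders, not measured data.

```python
import math


def geometric_mean(values):
    """Geometric mean of a non-empty sequence of positive numbers."""
    values = list(values)
    if not values:
        raise ValueError("need at least one value")
    return math.exp(sum(math.log(v) for v in values) / len(values))


def suite_uplift(new_scores, old_scores):
    """Geometric-mean ratio of new over old across the shared benchmarks."""
    shared = sorted(set(new_scores) & set(old_scores))
    return geometric_mean(new_scores[name] / old_scores[name] for name in shared)


if __name__ == "__main__":
    # Hypothetical scores for three placeholder benchmarks.
    new = {"bench_a": 30.0, "bench_b": 45.0, "bench_c": 20.0}
    old = {"bench_a": 20.0, "bench_b": 40.0, "bench_c": 18.0}
    print(f"suite uplift: {suite_uplift(new, old) - 1.0:.1%}")
```

A geometric mean keeps one outlier, such as the near-doubling in 473.astar, from dominating the suite total the way it would in an arithmetic mean.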

The 1065G7 comes quite close to the fastest desktop parts, however it’s likely it’ll need a desktop memory subsystem in order to catch up in total peak absolute performance.

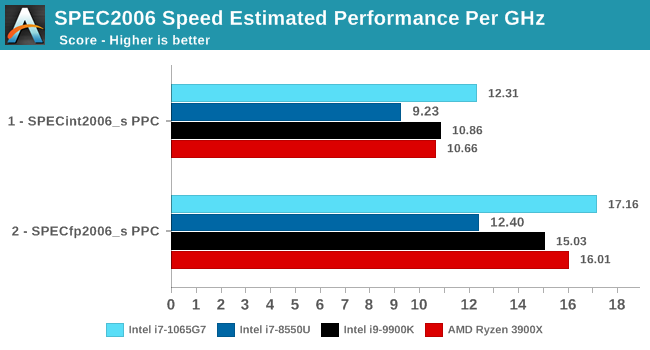

Performance per clock increases on the new Sunny Cove architecture are outstandingly good. IPC increases against the mobile Skylake are 33% and 38% in the integer and fp suites, though we also have to keep in mind that these figures go beyond just the Sunny Cove architecture and also include improvements through the new LPDDR4X memory controllers.
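The per-clock figures can be roughly reproduced from the numbers already quoted in this section. The sketch below assumes the scores are normalized by peak single-core clocks of 3.9GHz for the i7-1065G7 and 4.0GHz for the i7-8550U (the latter inferred from the "100MHz lower" remark), and uses the rounded 29% and 34% suite-level gains as inputs.

```python
def per_clock_uplift(score_ratio, new_ghz, old_ghz):
    """Per-clock (IPC-style) gain implied by a score ratio and two clock speeds.

    score_ratio is new_score / old_score; the result is a fraction (0.10 = 10%).
    """
    return score_ratio * (old_ghz / new_ghz) - 1.0


if __name__ == "__main__":
    # Inputs taken from the text: +29% int, +34% fp, 3.9GHz vs 4.0GHz.
    int_uplift = per_clock_uplift(1.29, new_ghz=3.9, old_ghz=4.0)
    fp_uplift = per_clock_uplift(1.34, new_ghz=3.9, old_ghz=4.0)
    print(f"int: {int_uplift:.1%}")  # int: 32.3%
    print(f"fp:  {fp_uplift:.1%}")   # fp:  37.4%
```

The results land within a point of the 33% and 38% quoted above; the remaining gap is presumably down to rounding in the input percentages.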

Against a 9900K, although apples and oranges, we’re seeing 13% and 14% IPC increases. These figures likely would be higher on an eventual desktop Sunny Cove part.

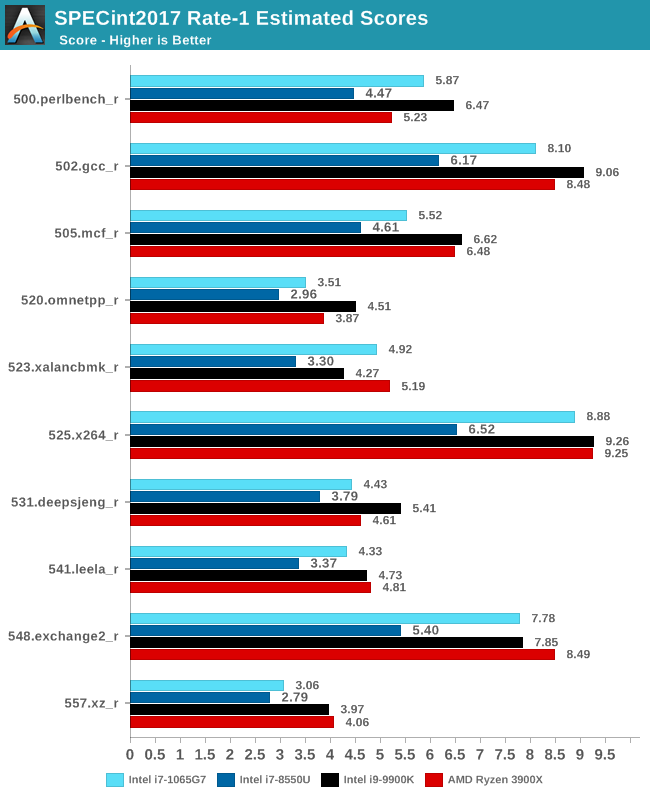

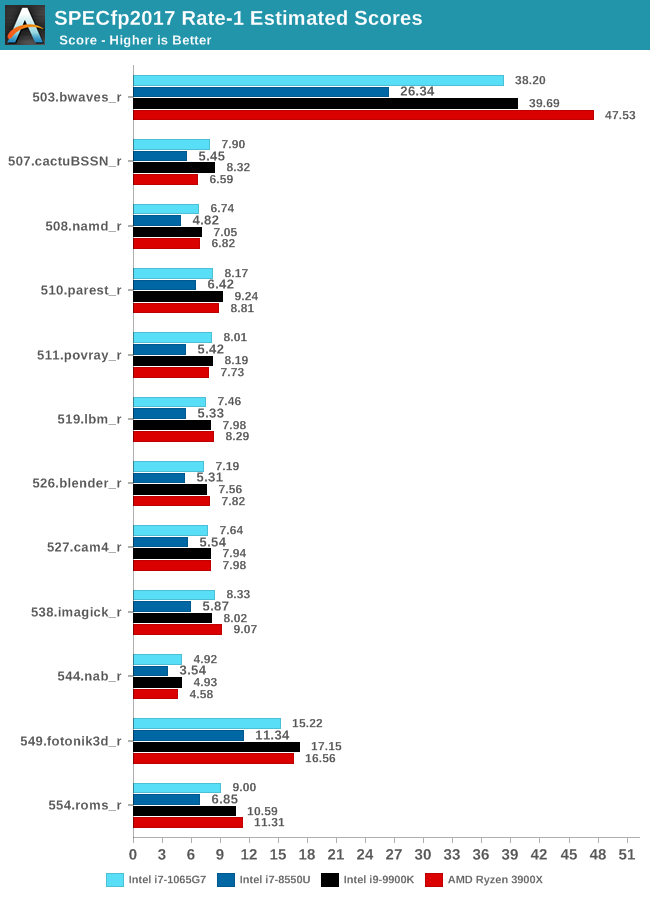

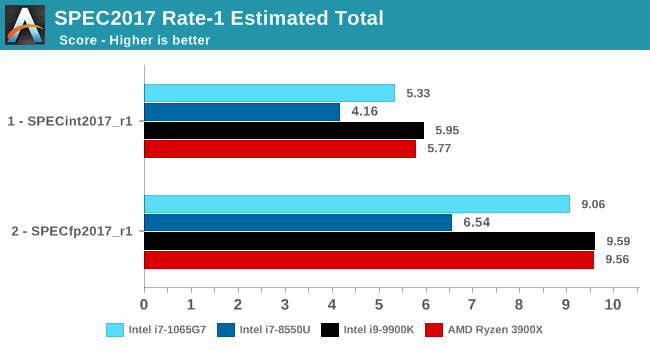

SPEC2017

The SPEC2017 results look similar to the 2006 ones. Against the 8550U, we’re seeing grand performance uplifts, just shy of the best desktop processors.

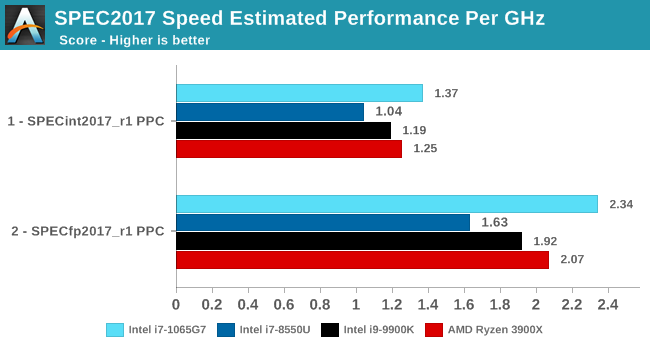

Here the IPC increases also look extremely solid. In the SPECint2017 suite the Ice Lake part achieves a 14% increase over the 9900K, however we also see a very impressive 21% increase in the fp suite.

Overall in the 2017 suite, we’re seeing a 19% increase in IPC over the 9900K, which roughly matches Intel’s advertised metric of 18% IPC increase.

261 Comments


Ian Cutress - Thursday, August 1, 2019

I'm encouraging the behaviour. I've been on at Intel to do something like this for a while. I'm giving credit where credit is due. As always with events like this companies like Intel have PR saying they want to do something, and the legal side of the equation resisting. If we get more opportunities like this in the future, it helps us provide a richer content base in advance of a product launch - people get to prepare in case they're in the mood for a purchase.

Also, tell me if you say the same things on our Qualcomm QRD testing. Please.

brakdoo - Thursday, August 1, 2019

You are just trying to get an edge over other sites but you are just being played.

It's the same BS with Qualcomm and their mmWave BS that has been spread by sites like this. Now 5G is just sub-6 and the only important part is massive MIMO

brakdoo - Thursday, August 1, 2019

"and are for the best part thermally unconstrained"

Just wait for the real release and do real testing. Don't you think Intel can release SPEC and Cinebench benchmarks themselves?

They just want you to do cheap advertisement months in advance.

Ian Cutress - Thursday, August 1, 2019

We do our own validation of the platform to remove as much Intel involvement as possible. Also, if Intel went ahead and provided a system that performed vastly different, when it comes time to testing the actual systems, we'll be beating them over the head with the data and making a big stink. Everyone would.

The question is, do you trust AnandTech to accurately and fairly test a reference system as if it were an OEM sample? So far your answers would seem to suggest no.

brakdoo - Thursday, August 1, 2019

What I'm asking myself: Did we really learn something new from this piece? Not really would be my answer.

Intel explained most of it before and we have seen enough leaks of the XPS 13 2-in-1 and the HP Spectre. Even Geekbench showed us that these CPUs have roughly the speed of 8565u on average (higher IPC, lower freq).

A journalist should always have a professional distance to these companies. This type of conflict of interest used to be most prominent in automobile magazines but the tech/PC companies are stepping up their marketing game.

Moizy - Thursday, August 1, 2019

Dude. Before release, before we would have to wait for products on shelves, Ian got a chance to go inside Intel and test an upcoming product. And he wasn't handed benchmark results, he wasn't told what to report, he was allowed to run largely independently his own standardized benchmark suite. So now, 3-6 months before laptops will be available, we have a preview of what performance will look like. I learned a ton from Ian's preview article, and from this benchmarking piece. I'm not an Intel fan, I'm an industry fan, and this was awesome. Please take your toxicity elsewhere, or better yet, cure yourself of it.

Ian, you're a good sport to participate in the comments section, but please don't let "brakdoo" or others pull you down. This was stellar work that I truly appreciate, as I'm sure most readers here do. I come to Anandtech specifically because of the high capabilities, understanding, and integrity of its writers and editors. Have been doing so for over 10 years. Please keep it up.

Dennis Travis - Thursday, August 1, 2019

Well said Moizy. I was about to post the same basic thing. Good job Ian.

eastcoast_pete - Thursday, August 1, 2019

+1 on that comment. If company X lets you take a close first look AND bring your own tools, i.e. test suite, Ian (and Andrei) would be fools not to take company X up on that offer. Yes, of course Intel has an agenda here, as does any other business, but we know that. In this case, I suspect that Intel wanted to show that Ice Lake actually exists and all parts are working, including the iGPU, unlike Whiskey Lake.

MrSpadge - Thursday, August 1, 2019

Well said Moizy, I totally agree! And would like to add: "haters gonna hate!"

PreacherEddie - Thursday, August 1, 2019

+1