Intel's Xeon Cascade Lake vs. NVIDIA Turing: An Analysis in AI

by Johan De Gelas on July 29, 2019 8:30 AM EST

Testing Notes

As the market stands, it is clear that alongside AMD and ARM, NVIDIA's professional offerings are a real threat to Intel's dominance in the datacenter and beyond. So for our testing today, we're going to focus on machine learning, and see just how Intel's new DL Boosted wares fare against the competition in the ML space.

On the Intel side of matters, of course, we're looking at the company's new Cascade Lake Xeon Scalable CPUs. The company provided two of their 28 core models, with the 165 Watt Xeon Platinum 8176, as well as the even faster 205 Watt Xeon Platinum 8280.

As for Cascade Lake's GPU competition, we've tapped NVIDIA's latest "Turing" Titan RTX card. While these aren't truly datacenter cards, the fact that they're based on Turing means that they offer NVIDIA's very latest features. At the university that I work for, our deep learning researchers use these GPUs for training AI models, as the Titan cards are affordable and have a lot of GPU memory available.

As an added bonus, Titan RTX cards can be used for both training (hybrid FP32/FP16) and inference (FP16 and INT8). The current Tesla is still based on NVIDIA's Volta architecture, which does not have INT8 available for inference.
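To make the INT8 inference mode mentioned above a little more concrete, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is only an illustration of the general idea, not the scheme used by any particular framework or by our benchmarks; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127].

    Illustrative assumption: one scale for the whole tensor, chosen so that
    the largest absolute value lands on 127.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the INT8 range we use.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the integers back to approximate floating-point values."""
    return [q * scale for q in quantized]


# Hypothetical weights, purely for illustration.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per value is bounded by roughly half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

The trade-off is the usual one: each value shrinks from 32 or 16 bits to 8, at the cost of a small rounding error per value.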

Finally, not to be excluded, we've also included AMD's first-generation EPYC platform in all of our testing. AMD doesn't have a hardware strategy quite like Intel – or specific instructions like VNNI – but as of late the company has offered all sorts of surprises.
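For readers unfamiliar with VNNI, the sketch below emulates in plain Python what one 32-bit lane of the non-saturating `VPDPBUSD` instruction computes: four unsigned 8-bit values are multiplied with four signed 8-bit values, and the sum of the products is added to a 32-bit accumulator. A 512-bit register holds 16 such lanes. The helper names `vpdpbusd_lane` and `int8_dot` are hypothetical, and this is a model of the arithmetic only, not of performance.

```python
def vpdpbusd_lane(acc, a_bytes, b_bytes):
    """Emulate one 32-bit lane: acc += sum of four (u8 * s8) products."""
    assert len(a_bytes) == len(b_bytes) == 4
    assert all(0 <= a <= 255 for a in a_bytes)      # unsigned 8-bit inputs
    assert all(-128 <= b <= 127 for b in b_bytes)   # signed 8-bit inputs
    total = acc + sum(a * b for a, b in zip(a_bytes, b_bytes))
    # Wrap around to a signed 32-bit value (the non-saturating form).
    total &= 0xFFFFFFFF
    return total - (1 << 32) if total >= (1 << 31) else total


def int8_dot(activations, weights):
    """Dot product of two equal-length vectors, four elements per step.

    Assumes the length is a multiple of four, as a real kernel would pad.
    """
    acc = 0
    for i in range(0, len(activations), 4):
        acc = vpdpbusd_lane(acc, activations[i:i + 4], weights[i:i + 4])
    return acc


# Hypothetical inputs: unsigned activations, signed weights.
result = int8_dot([1, 2, 3, 4, 5, 6, 7, 8], [1, -1, 1, -1, 2, 2, -2, -2])
```

Without VNNI, the same multiply-accumulate on AVX-512 takes a sequence of three instructions, which is where the claimed INT8 inference speedup comes from.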

Benchmark Configuration and Methodology

All of our testing was conducted on Ubuntu Server 18.04 LTS. You will notice that the DRAM capacity varies among our server configurations. This is because Xeons have six memory channels per socket while EPYC CPUs have eight, and each channel is populated with one 32 GB DIMM. As far as we know, all of our tests fit in 128 GB, so DRAM capacity should not have much influence on performance. It will, however, have an impact on total energy consumption, which we will discuss.
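The relationship between channel count and capacity can be checked with some quick arithmetic. The sketch below uses the DIMM counts and memory speeds listed in the configuration tables of this article; the function name `dram_summary` is hypothetical, and the bandwidth figure is only the theoretical peak (8 bytes per transfer per 64-bit channel), not a measured number.

```python
def dram_summary(dimms, dimm_gb, channels_per_socket, sockets, mega_transfers):
    """Return (capacity in GB, DIMMs per channel, theoretical peak GB/s)."""
    total_channels = channels_per_socket * sockets
    capacity_gb = dimms * dimm_gb
    dimms_per_channel = dimms / total_channels
    # A 64-bit DDR4 channel moves 8 bytes per transfer.
    peak_gb_s = total_channels * mega_transfers * 8 / 1000
    return capacity_gb, dimms_per_channel, peak_gb_s


# Dual Xeon: 12 x 32 GB DDR4-2666, six channels per socket.
xeon = dram_summary(12, 32, 6, 2, 2666)
# Dual EPYC 7601: 16 x 32 GB running at DDR4-2400, eight channels per socket.
epyc = dram_summary(16, 32, 8, 2, 2400)

print("Xeon:", xeon)
print("EPYC:", epyc)
```

Both dual-socket servers end up with exactly one DIMM per channel, which is why the capacities differ (384 GB versus 512 GB) even though the DIMMs are the same size.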

Last but not least, we want to note how the performance graphs have been color-coded. Orange is AMD's EPYC, dark blue is Intel's best (Cascade Lake/Skylake-SP), and light blue is the previous generation Xeons (Xeon E5-v4). Gray has been used for the soon-to-be-replaced Xeon v1.

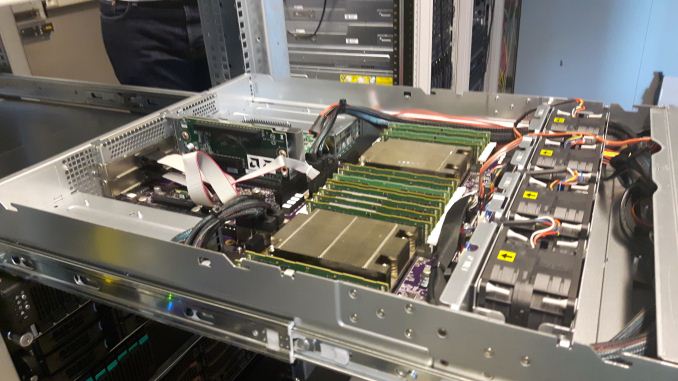

Intel's Xeon "Purley" Server – S2P2SY3Q (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon Platinum 8280 (2.7 GHz, 28c, 38.5MB L3, 205W) or two Intel Xeon Platinum 8176 (2.1 GHz, 28c, 38.5MB L3, 165W) |
| RAM | 384 GB (12x32 GB) Hynix DDR4-2666 |
| Internal Disks | SAMSUNG MZ7LM240 (boot disk), Intel SSD3710 800 GB (data) |
| Motherboard | Intel S2600WF (Wolf Pass baseboard) |
| Chipset | Intel Wellsburg B0 |
| PSU | 1100W PSU (80+ Platinum) |

We enabled hyper-threading and Intel virtualization acceleration.

Xeon - NVIDIA Titan RTX Workstation

With some diplomacy, our AI researcher Pieter Bovijn at MCT was kind enough to run the tests on his deep learning workstation. Below you can find the specs.

| Component | Specification |
| --- | --- |
| CPU | Intel Xeon Gold 6152 (2.1 GHz, 22c, 30.25MB L3, 140W) |
| RAM | 192 GB (6x32 GB) Samsung DDR4-2666 |
| Internal Disks | SAMSUNG MZ7LM240 (boot disk), Intel SSD3710 800 GB (data) |
| Motherboard | Supermicro SYS-7049A-T (Intel C621 chipset) |
| GPU | PNY TITAN RTX 24 GB GDDR6 |
| PSU | PWS-865-PQ |

This is the only server in the test with a discrete GPU.

AMD EPYC 7601 – (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two EPYC 7601 (2.2 GHz, 32c, 8x8MB L3, 180W) |
| RAM | 512 GB (16x32 GB) Samsung DDR4-2666 @2400 |
| Internal Disks | SAMSUNG MZ7LM240 (boot disk), Intel SSD3710 800 GB (data) |
| Motherboard | AMD Speedway |
| PSU | 1100W PSU (80+ Platinum) |

Other Notes

Both servers are fed by a standard European 230V (16 Amps max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs.

56 Comments

C-4 - Monday, July 29, 2019 - link

It's interesting that optimizations did so much for the Intel processors (but relatively less for the AMD ones). Who made these optimizations? How much time was devoted to doing this? How close are the algorithms to being "fully optimized" for the AMD and nVidia chips?

quorm - Monday, July 29, 2019 - link

I believe these optimizations largely take advantage of AVX512, and are therefore intel specific, as amd processors do not incorporate this feature.

RSAUser - Monday, July 29, 2019 - link

As quorm said, I'd assume it's due to AVX512 optimizations. The next generation of AMD Epyc CPUs should support it, and I am hoping for closer to 3GHz clock speeds on the 64 core chips, since it seems the new ceiling is around the 4GHz mark for 16 all-core. It will be an interesting Q3/Q4 for Intel in the server market this year.

SarahKerrigan - Monday, July 29, 2019 - link

Next generation? You mean Rome? Zen2 doesn't have any AVX512.

HStewart - Tuesday, July 30, 2019 - link

I believe AMD AVX 2 is dual-128 bit instead of 256bit - so AVX 512 would probably be quad 128bit.

jospoortvliet - Tuesday, July 30, 2019 - link

That’s not really how it works, in the sense that you explicitly need to support the new instructions... and amd doesn’t (plan to, as far as we know).

Qasar - Tuesday, July 30, 2019 - link

from wikipedia :" AVX2 is now fully supported, with an increase in execution unit width from 128-bit to 256-bit. "

" AMD has increased the execution unit width from 128-bit to 256-bit, allowing for single-cycle AVX2 calculations, rather than cracking the calculation into two instructions and two cycles."

which is from here : https://www.anandtech.com/show/14525/amd-zen-2-mic...

looks like AVX2 is single 256 bit :-)

name99 - Monday, July 29, 2019 - link

Regarding the limits of large batches: while this is true in principle, the maximum size of those batches can be very large, is hard to predict (at least right now) and there is on-going work to increase the sizes. This link describes some of the issues and what’s known: http://ai.googleblog.com/2019/03/measuring-limits-...

I think Intel would be foolish to pin many hopes on the assumption that batch scaling will soon end the superior performance of GPUs and even more specialized hardware...

brunohassuna - Monday, July 29, 2019 - link

Some information about energy consumption would be very useful in comparisons like that

ozzuneoj86 - Monday, July 29, 2019 - link

My first thought when clicking this article was how much more visibly-complex CPUs have gotten in the past ~35 years. Compare the bottom of that Xeon to the bottom of a CLCC package 286:

https://en.wikipedia.org/wiki/Intel_80286#/media/F...

And that doesn't even touch the difference internally... 134,000 transistors to 8 million and from 16Mhz to 4,000Mhz. The mind boggles.