Arm's New Cortex-A77 CPU Micro-architecture: Evolving Performance

by Andrei Frumusanu on May 27, 2019 12:01 AM EST

The Cortex-A77 µarch: Going For A 6-Wide* Front-End

The Cortex-A76 represented a clean-sheet microarchitecture design, with Arm applying the knowledge and lessons of years of CPU design from scratch. This allowed the company to design a new core that was forward-thinking in terms of its microarchitecture. The A76 was meant to serve as the baseline for the next two designs from the Austin family: today's new Cortex-A77 as well as next year's "Hercules" design.

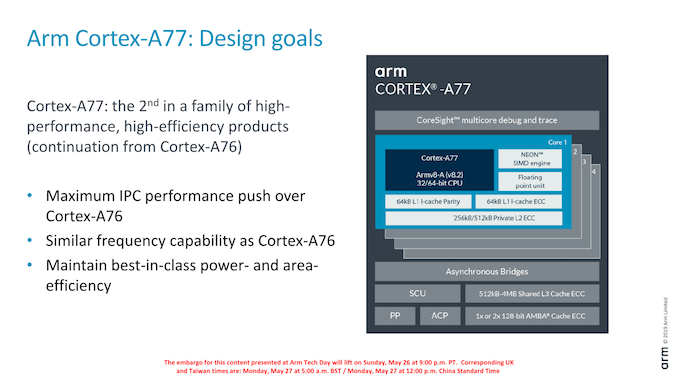

The A77 introduces new features with the primary goal of increasing the IPC of the microarchitecture. Arm's goal this generation continues its focus on delivering the best PPA in the industry, meaning the designers aimed to increase the performance of the core while maintaining the excellent energy efficiency and area characteristics of the A76.

In terms of frequency capability, the new core remains in the same frequency range as the A76, with Arm targeting 3GHz peak frequencies in optimal implementations.

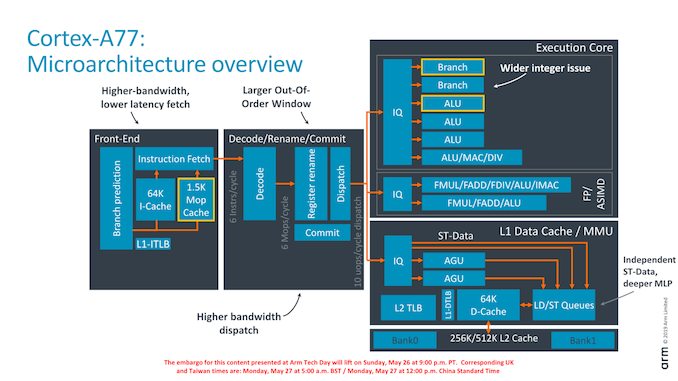

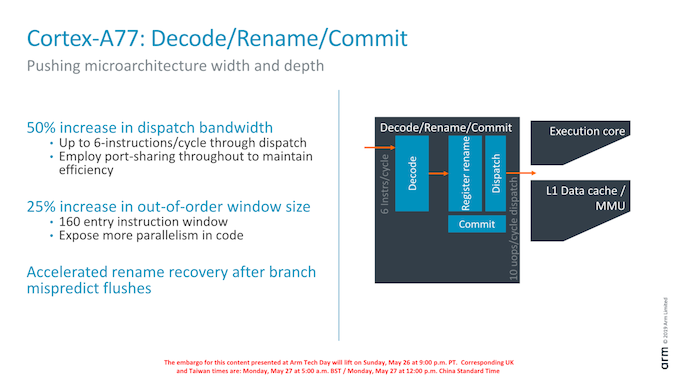

As an overview of the microarchitectural changes, Arm has touched almost every part of the core. Starting from the front-end we’re seeing a higher fetch bandwidth with a doubling of the branch predictor capability, a new macro-OP cache structure acting as an L0 instruction cache, a wider middle core with a 50% increase in decoder width, a new integer ALU pipeline and revamped load/store queues and issue capability.

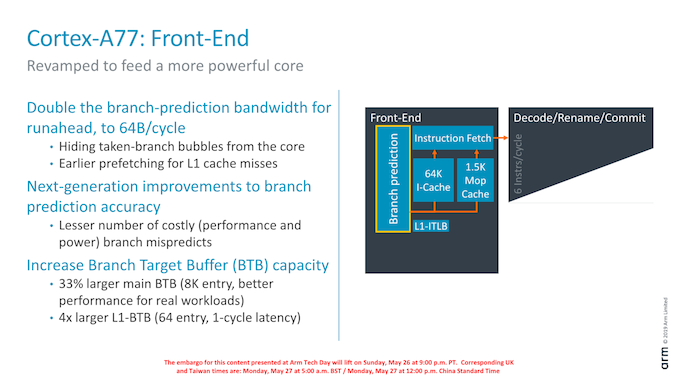

Delving deeper into the front-end, a major change in the branch predictor is that its runahead bandwidth has doubled from 32B/cycle to 64B/cycle. The reason for this increase is the wider and more capable front-end: the branch predictor's speed needed to improve in order to keep feeding the middle-core sufficiently. Arm instructions are 32 bits wide (16 bits for Thumb), which means the branch predictor can fetch up to 16 instructions per cycle. This is a higher bandwidth (2.6x) than the width of the middle core, and the reason for this imbalance is to allow the front-end to catch up as quickly as possible whenever there are branch bubbles in the core.
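The bandwidth figures in the paragraph above reduce to simple arithmetic. The sketch below assumes fixed 32-bit (A64/A32) instructions and compares against the 6-wide middle-core figure; the constant names are illustrative, not Arm terminology. The exact ratio is about 2.67x, which the text truncates to 2.6x.

```python
# Branch-predictor runahead bandwidth expressed in instructions per cycle.
# Assumption: every instruction is 32 bits (no 16-bit Thumb encodings).
ARM_INSTR_BYTES = 4
A76_BP_BYTES_PER_CYCLE = 32
A77_BP_BYTES_PER_CYCLE = 64
A77_MIDDLE_CORE_WIDTH = 6  # rename/dispatch width quoted in the article


def instrs_per_cycle(bytes_per_cycle: int, instr_bytes: int = ARM_INSTR_BYTES) -> int:
    """Whole instructions covered by one cycle of predictor runahead."""
    return bytes_per_cycle // instr_bytes


a76 = instrs_per_cycle(A76_BP_BYTES_PER_CYCLE)
a77 = instrs_per_cycle(A77_BP_BYTES_PER_CYCLE)
ratio = a77 / A77_MIDDLE_CORE_WIDTH

print(a76, a77, round(ratio, 2))  # 8 16 2.67
```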

The branch predictor's design has also changed, reducing branch mispredicts and increasing its accuracy. Although the A76 already had a very large Branch Target Buffer with 6K entries, Arm has increased this by another 33% to 8K entries in the new design. Arm also seems to have dropped a level of the BTB hierarchy: the A76 had a 16-entry nanoBTB and a 64-entry microBTB, while on the A77 these look to have been replaced by a single 64-entry L1 BTB with 1-cycle latency.
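To illustrate what a two-level BTB hierarchy does in general, here is a minimal sketch using the capacities quoted above (a 64-entry L1 BTB in front of an 8K-entry main BTB; 8K/6K is the quoted +33%). It is not Arm's design: the fully associative LRU organisation, the promote-on-hit policy, and the class and method names (`LRUTable`, `TwoLevelBTB`, `predict`, `update`) are all hypothetical, and latencies are not modelled, only which level answered.

```python
from collections import OrderedDict
from typing import Optional, Tuple


class LRUTable:
    """Fully associative table with least-recently-used replacement (an assumption)."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.entries: "OrderedDict[int, int]" = OrderedDict()

    def get(self, pc: int) -> Optional[int]:
        if pc not in self.entries:
            return None
        self.entries.move_to_end(pc)  # mark as most recently used
        return self.entries[pc]

    def put(self, pc: int, target: int) -> None:
        self.entries[pc] = target
        self.entries.move_to_end(pc)
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used entry


class TwoLevelBTB:
    """Hypothetical small, fast L1 BTB backed by a large main BTB."""

    def __init__(self, l1_entries: int = 64, main_entries: int = 8 * 1024):
        self.l1 = LRUTable(l1_entries)
        self.main = LRUTable(main_entries)

    def predict(self, pc: int) -> Optional[Tuple[str, int]]:
        target = self.l1.get(pc)
        if target is not None:
            return ("L1", target)
        target = self.main.get(pc)
        if target is not None:
            self.l1.put(pc, target)  # promote into the small, fast level
            return ("main", target)
        return None  # no prediction: fall through to sequential fetch

    def update(self, pc: int, target: int) -> None:
        # Resolved taken branches go into the main BTB; refresh a stale L1 copy if present.
        self.main.put(pc, target)
        if pc in self.l1.entries:
            self.l1.put(pc, target)


btb = TwoLevelBTB()
btb.update(0x1000, 0x2000)
print(btb.predict(0x1000))  # ('main', 8192)
print(btb.predict(0x1000))  # ('L1', 8192)
```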

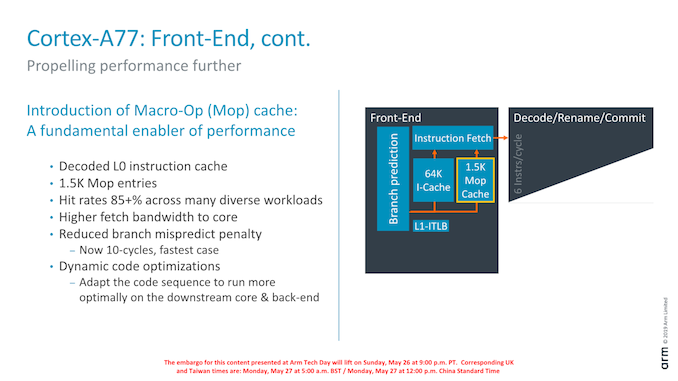

Another major feature of the new front-end is the introduction of a Macro-Op cache structure. Readers familiar with AMD's and Intel's x86 processor cores will recognise it as akin to the µOP/MOP cache structures in those cores, and one would indeed be correct in assuming they serve similar functions.

In effect, the new Macro-OP cache serves as an L0 instruction cache, containing already decoded and fused instructions (macro-ops). In the A77's case the structure has 1.5K entries, which, assuming macro-ops have a density similar to 32-bit Arm instructions, would equate to about 6KB.
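The 6KB figure is a byte-equivalent estimate rather than the physical size of the array. The sketch below just spells out that arithmetic; the 4-bytes-per-macro-op density is the assumption stated in the text, not a known property of the A77's MOP encoding.

```python
MOP_ENTRIES = 1536            # "1.5K entries"
ASSUMED_BYTES_PER_MOP = 4     # assumption: same density as a 32-bit Arm instruction

size_bytes = MOP_ENTRIES * ASSUMED_BYTES_PER_MOP
size_kib = size_bytes / 1024

print(size_bytes, size_kib)  # 6144 6.0
```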

The peculiarity of Arm's implementation is that the cache is deeply integrated with the middle-core. It is filled after the decode stage (in a decoupled manner), after instruction fusion and optimisations. On a cache hit, the front-end feeds directly from the macro-op cache into the rename stage of the middle-core, shaving a cycle off the effective pipeline depth of the core. As a result, the core's branch-mispredict latency has been reduced from 11 cycles to 10 cycles, even though it has the frequency capability of a 13-cycle design (+1 decode, +1 branch/fetch overlap, +1 dispatch/issue overlap). While we don't have direct figures for the newest cores, Arm's figure here is outstandingly good, as other cores have significantly worse mispredict penalties (Samsung M3, Zen1, Skylake: ~16 cycles).
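The cycle accounting above can be checked directly: 10 cycles plus the three listed overlaps gives the 13-cycle figure. As a rough extension, the sketch also blends hit and miss penalties using the 85% MOP-cache hit-rate target Arm quotes. That blend is an assumption, not an Arm figure: it supposes a MOP-cache miss after a redirect costs the extra decode cycle (11 cycles) and that the average hit rate applies to post-mispredict refetches. `expected_mispredict_penalty` is a hypothetical helper.

```python
# Figures quoted in the article
A77_MISPREDICT_CYCLES = 10
A76_MISPREDICT_CYCLES = 11

# Overlaps listed in the article; adding them back recovers the "13-cycle design"
EXTRA_STAGES = {
    "decode (bypassed on a MOP-cache hit)": 1,
    "branch/fetch overlap": 1,
    "dispatch/issue overlap": 1,
}
equiv_depth = A77_MISPREDICT_CYCLES + sum(EXTRA_STAGES.values())


def expected_mispredict_penalty(mop_hit_rate: float,
                                hit_penalty: int = A77_MISPREDICT_CYCLES,
                                miss_penalty: int = A76_MISPREDICT_CYCLES) -> float:
    """Weighted redirect penalty.

    ASSUMPTION: a MOP-cache miss pays the decode cycle (11 cycles) and the
    average hit rate also holds for refetches after a mispredict.
    """
    if not 0.0 <= mop_hit_rate <= 1.0:
        raise ValueError("mop_hit_rate must be within [0, 1]")
    return mop_hit_rate * hit_penalty + (1.0 - mop_hit_rate) * miss_penalty


print(equiv_depth)                                  # 13
print(round(expected_mispredict_penalty(0.85), 2))  # 10.15
```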

Arm's rationale for the 1.5K-entry cache size is that they were aiming for an 85% hit-rate across their test-suite workloads. Less capacity would reduce the hit-rate more significantly, while a larger cache would have diminishing returns. Against a 64KB L1 cache, the 1.5K-entry MOP cache takes about half the area.
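The "diminishing returns" argument can be illustrated with a toy simulation. The sketch below measures the hit rate of a fully associative LRU cache at several capacities on a synthetic, Zipf-like access trace. Everything here is hypothetical: the trace, the skew parameter, and the LRU policy are stand-ins, not Arm's workloads or the A77's replacement policy, so the numbers it prints say nothing about the real 85% target. Because LRU is a stack algorithm, the hit rate can only stay flat or rise as capacity grows; with a skewed trace, each extra step of capacity typically buys less.

```python
import random
from collections import OrderedDict


def lru_hit_rate(trace, capacity):
    """Hit rate of a fully associative LRU cache with `capacity` entries."""
    cache = OrderedDict()
    hits = 0
    for block in trace:
        if block in cache:
            hits += 1
            cache.move_to_end(block)       # refresh recency on a hit
        else:
            cache[block] = True            # fill on a miss
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used entry
    return hits / len(trace)


def synthetic_trace(n_accesses, n_blocks, skew=1.1, seed=42):
    """HYPOTHETICAL Zipf-like trace: a few hot code blocks and a long cold tail."""
    rng = random.Random(seed)
    weights = [1.0 / rank ** skew for rank in range(1, n_blocks + 1)]
    return rng.choices(range(n_blocks), weights=weights, k=n_accesses)


capacities = [256, 512, 1024, 1536, 2048, 4096]
trace = synthetic_trace(100_000, 20_000)
rates = [lru_hit_rate(trace, c) for c in capacities]
for c, r in zip(capacities, rates):
    print(f"{c:5d} entries: {r:.3f}")
```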

The MOP cache also allows for higher bandwidth into the middle-core. The structure is able to feed the rename stage with 64B/cycle, again significantly higher than the rename/dispatch capacity of the core, and again this imbalance with a "fatter" front-end allows the core to quickly hide branch bubbles and pipeline flushes.
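Why an over-provisioned supply path helps can be shown with a toy queue model: a supplier fills a buffer in front of a 6-wide rename stage and periodically stalls. When supply merely matches rename width, every stall cycle is lost; when supply is wider, the buffer refills between stalls and drains during them. All parameters except the 6-wide rename width (the 16-per-cycle supply, which assumes 4-byte macro-ops at 64B/cycle, the 32-entry buffer, and the stall pattern) are hypothetical, and `sustained_rate` is an illustrative helper, not a model of the real pipeline.

```python
def sustained_rate(supply_width, rename_width=6, queue_cap=32,
                   bubble_every=20, bubble_len=2, cycles=2000):
    """Average ops renamed per cycle when the supplier stalls periodically."""
    queue = 0
    renamed = 0
    for cycle in range(cycles):
        stalled = (cycle % bubble_every) < bubble_len  # front-end bubble this cycle
        if not stalled:
            queue = min(queue_cap, queue + supply_width)  # refill the buffer
        taken = min(queue, rename_width)                  # rename drains up to its width
        queue -= taken
        renamed += taken
    return renamed / cycles


narrow = sustained_rate(supply_width=6)   # supply only matches rename width
fat = sustained_rate(supply_width=16)     # assumed 64B/cycle of 4-byte macro-ops
print(f"{narrow:.2f} {fat:.2f}")  # 5.40 5.99
```

In this toy model the matched supplier loses the full 10% of cycles spent in bubbles, while the wider supplier only loses throughput during the very first stall, before its buffer has had a chance to fill.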

Arm talked a bit about "dynamic code optimisations": here the core will rearrange operations to better suit the back-end execution pipelines. Note that "dynamic" doesn't mean the behaviour is programmable (akin to Nvidia's Denver code translation); the logic is fixed in the design of the core.

Finally getting to the middle-core, we see a big uplift in the bandwidth of the core. Arm has increased the decoder width from 4-wide to 6-wide.

Correction: The Cortex-A77's decoder remains 4-wide. The increased middle-core width lies solely at the rename stage and afterwards; the core still fetches 6 instructions per cycle, but this bandwidth is only achieved on a MOP-cache hit, which bypasses the decode stage. On MOP-cache misses, the limiting factor is still the decoder, which remains at 4 instructions per cycle.
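Given the correction, the width the middle-core actually sees depends on whether the MOP cache hits. A naive way to express that is to weight the 6-wide hit path and the 4-wide decode path by a hit rate; the sketch below does so using Arm's 85% target as an example input. It is an upper bound under assumptions, not a measured figure: it ignores every other stall and treats the hit rate as applying uniformly per cycle. `naive_effective_width` is a hypothetical helper.

```python
def naive_effective_width(mop_hit_rate: float, hit_width: int = 6, miss_width: int = 4) -> float:
    """Upper-bound average supply width: 6-wide on MOP-cache hits, 4-wide via the decoder.

    ASSUMPTION: no other stalls, and the hit rate applies uniformly per cycle.
    """
    if not 0.0 <= mop_hit_rate <= 1.0:
        raise ValueError("mop_hit_rate must be within [0, 1]")
    return mop_hit_rate * hit_width + (1.0 - mop_hit_rate) * miss_width


print(round(naive_effective_width(0.85), 2))  # 5.7
```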

The increased width also warranted an increase of the core's reorder buffer, which has gone from 128 to 160 entries. Note that such a change was already present in Qualcomm's variant of the Cortex-A76, although we were never able to confirm the exact size employed. As Arm was still in charge of making the RTL changes, it wouldn't surprise me if it was the exact same 160-entry ROB.
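One first-order way to see why a wider core wants a bigger ROB is to divide ROB entries by width, giving roughly how many cycles of full-width work the window can hold (a Little's-law style rule of thumb, not an Arm metric). The sketch assumes 4-wide and 6-wide rename for the A76 and A77, per the article's 4-to-6 figures. Had the ROB stayed at 128 entries, the window would have shrunk to about 21 cycles; the 25% growth to 160 entries recovers part of that. This says nothing on its own about real-world performance.

```python
def window_cycles(rob_entries: int, width: int) -> float:
    """First-order estimate: cycles of full-width work the ROB can keep in flight."""
    return rob_entries / width


A76_ROB, A77_ROB = 128, 160
A76_WIDTH, A77_WIDTH = 4, 6  # assumed rename widths, per the article's 4-wide to 6-wide figures

a76_window = window_cycles(A76_ROB, A76_WIDTH)     # A76 as shipped
unchanged_rob = window_cycles(A76_ROB, A77_WIDTH)  # if the ROB had stayed at 128 entries
a77_window = window_cycles(A77_ROB, A77_WIDTH)     # A77 as described
rob_growth = A77_ROB / A76_ROB - 1

print(a76_window, round(unchanged_rob, 1), round(a77_window, 1), rob_growth)  # 32.0 21.3 26.7 0.25
```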

108 Comments


Valis - Thursday, May 30, 2019

Yeah, it's because he is a white male, probably hetero also. :P

raptormissle - Monday, May 27, 2019

So it looks like the SD 865 is finally going to break the 4000 Geekbench single-core score and probably score 12000+ in multi-core. ARM has finally leveled the playing field with Apple, as I really don't expect major gains from the A13; they've likely blown their wad.

GC2:CS - Monday, May 27, 2019

There were big gains from going from 16/14nm in 2016 to 7nm in 2018. While performance gains were impressive in that time, there's no shrink like that coming any time soon.

The A76 was a big performance jump, but that was after many regular releases where ARM had overestimated or even regressed on their performance metrics in reality (or so I think... am I right?).

And Apple does not make promises, but they are expected (based on like 5 (how many?!?) generations of big performance jumps) to deliver something crazy, which they could fail to do.

Honestly, I would happily take the same performance with lower power. And cut/cap the peak power as well... not planning to buy a fan-equipped phone.

Wilco1 - Monday, May 27, 2019

There is a 7+nm shrink coming this year and then another huge one with 5nm next year. So big shrinks are continuing, at least at TSMC. And performance has increased hugely with each generation since Cortex-A57. The smallest gain was with Cortex-A73, but that still improved sustained performance and efficiency considerably.

Many phones support battery saving modes which limit the frequency of the big cores (or switch them off). So you can already get what you want if battery life is your goal. I find these modes very useful but you clearly notice the performance loss while browsing.

Santoval - Monday, May 27, 2019

"or even regresed on their performance metrics in reality (Or so i think... Am I right ?)."

Indeed, the A73 core was usually slower than the A72 core, or at best just as fast, though it was supposed to be the successor core.

blu42 - Tuesday, May 28, 2019

CA73 was not exactly 'usually slower' than CA72. The former was usually slower at ASIMD workloads, but it did better at spaghetti code (even with the narrower front-end), of which there's more in this world. We now have CA57s, CA72s and CA73s all in affordable SBCs (finally!), so people can check for themselves.

BTW, CA73 was a successor, but it was not meant to be a performance improvement so much as an efficiency improvement, and there it delivered, IMO.

ZolaIII - Tuesday, May 28, 2019

The A73 is a two-instruction-wide OoO design vs the A72's three-instruction-wide one. While overall performance was the same, the gain in performance per W was 30~33% and per mm² 27~28%.

name99 - Tuesday, May 28, 2019

The A10 was based on 16nm, just like the A9, but it came with a substantial improvement... You guys are way too ignorant of the role of micro-architecture in performance. Apple's speed boosts so far (since the A7) are pretty much exactly split 50% micro-architecture and 50% frequency (so process).

The A13, based on 7nm, is still capable of large improvements: firstly from micro-architecture improvements, and secondly from having a chance to optimize what's already there (like, as I said, the A10 as a second pass through 16nm).

galdutro - Monday, May 27, 2019

Given their track record, I wouldn't be surprised if the A13 had the same performance as the A12X. YES, this is crazy! But it is what they have actually been able to achieve in the past couple of generations.