Upgrading from an Intel Core i7-2600K: Testing Sandy Bridge in 2019

by Ian Cutress on May 10, 2019 10:30 AM EST

Posted in: CPUs, Intel, Sandy Bridge, Overclocking, 7700K, Coffee Lake, i7-2600K, 9700K

Comparing the Quad Cores: CPU Tests

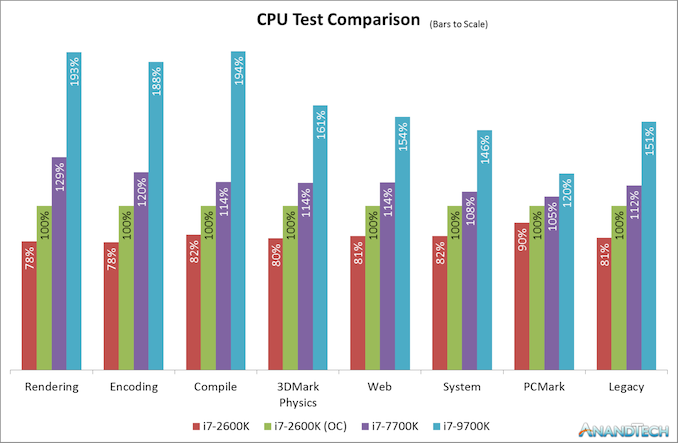

As a straight-up comparison between what Intel offered in terms of quad cores, here’s an analysis of all the results for the 2600K, the 2600K overclocked, and Intel’s final quad-core desktop chip with HyperThreading, the 7700K.

On our CPU tests, the Core i7-2600K when overclocked to a 4.7 GHz all-core frequency (and with DDR3-2400 memory) offers anywhere from a 10% to 24% increase in performance against the stock settings with memory at Intel’s maximum supported frequency. Users liked the 2600K because of this: there were sizable gains to be had, and Intel’s immediate replacements for the 2600K didn’t offer the same level of boost or difference in performance.

However, moving to the Core i7-7700K, Intel’s final quad-core processor with HyperThreading, gives users another 8-29% performance on top of that. Depending on the CPU workload, it is easy to see how a user could justify getting the latest quad-core processor and feel the benefits in more modern workloads, such as rendering or encoding, especially given how the gaming market has turned more into a streaming culture. For the more traditional workflows, such as PCMark or our legacy tests, only gains of 5-12% are seen, which is what we would have seen back when some of these newer tests were not yet so relevant.

As for the Core i7-9700K, which has eight full cores and now sits in the spot of Intel’s best Core i7 processor, performance gains are much more tangible: almost double in a lot of cases against an overclocked Core i7-2600K, and more than double against one at stock.

The CPU case is clear: Intel’s last quad core with HyperThreading is an obvious upgrade for a 2600K user, even before you overclock it, and the 9700K, which launched at almost the same price, is definitely an easy sell. The gaming side of the equation isn’t so rosy though.

Comparing the Quad Cores: GPU Tests

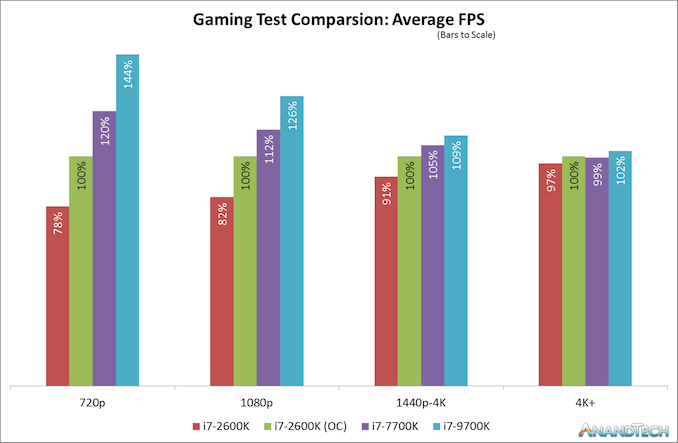

Modern games today are running at higher resolutions and quality settings than when the Core i7-2600K first launched, and they bring new physics features, new APIs, and new gaming engines that can take advantage of the latest advances in CPU instructions as well as CPU-to-GPU connectivity. For our gaming benchmarks, we test with four sets of settings on each game (720p, 1080p, 1440p-4K, and 4K+) using a GTX 1080, which is one of last generation’s high-end gaming cards, and something that a number of Core i7 users might own for high-end gaming.

When the Core i7-2600K was launched, 1080p gaming was all the rage. I don’t think I purchased a monitor bigger than 1080p until 2012, and before then I was clan gaming on screens that could have been as low as 1366x768. The point here is that with modern games at older resolutions like 1080p, we do see a sizeable gain when the 2600K is overclocked. A 22% gain in frame rates from a 34% overclock sounds more than reasonable to any high-end focused gamer. Intel only managed to improve on that by 12% over the next few years to the Core i7-7700K, relying mostly on frequency gains. It’s not until the 9700K, with more cores and running games that actually know what to do with them, that we see another jump up in performance.
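To put those percentages in context, here is a rough sketch of the arithmetic behind them. It uses only the figures quoted above (4.7 GHz, a 34% overclock, a 22% frame rate gain, and a further 12% for the 7700K). The function names are illustrative, and the compounding step assumes both percentages were measured over the same set of 1080p results, which the text implies but does not state outright.

```python
# Figures quoted in the article; everything else is derived from them.
OC_FREQ_GHZ = 4.7      # overclocked all-core frequency
OC_FREQ_GAIN = 0.34    # "34% overclock"
OC_FPS_GAIN = 0.22     # "22% gain in frame rates" at 1080p
NEXT_GEN_GAIN = 0.12   # 7700K over the overclocked 2600K


def implied_stock_freq(oc_freq_ghz, freq_gain):
    """Baseline frequency implied by an overclock quoted as a percentage."""
    return oc_freq_ghz / (1.0 + freq_gain)


def scaling_efficiency(fps_gain, freq_gain):
    """Fraction of the extra clock speed that shows up as extra frame rate."""
    return fps_gain / freq_gain


def compound(*gains):
    """Combine successive percentage gains multiplicatively."""
    total = 1.0
    for gain in gains:
        total *= 1.0 + gain
    return total - 1.0


print(f"Implied stock frequency: {implied_stock_freq(OC_FREQ_GHZ, OC_FREQ_GAIN):.2f} GHz")
print(f"Scaling efficiency: {scaling_efficiency(OC_FPS_GAIN, OC_FREQ_GAIN):.0%}")
print(f"7700K over a stock 2600K: {compound(OC_FPS_GAIN, NEXT_GEN_GAIN):.1%}")
```

Under those assumptions, roughly two thirds of the extra frequency turns into extra frame rate, and the 7700K ends up around 37% ahead of a stock 2600K at 1080p.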

However, all those gains are muted at higher resolution settings, such as 1440p. Going from an overclocked 2600K to a brand new 9700K only gives a 9% increase in frame rates for modern games. At an enthusiast 4K setting, the results across the board are almost equal. As resolutions get higher, even with modern physics and instructions and APIs, the bulk of the workload is still on the GPU, and even the Core i7-2600K is powerful enough for it. There is the odd title where having the newer chip helps a lot more, but it’s in the minority.

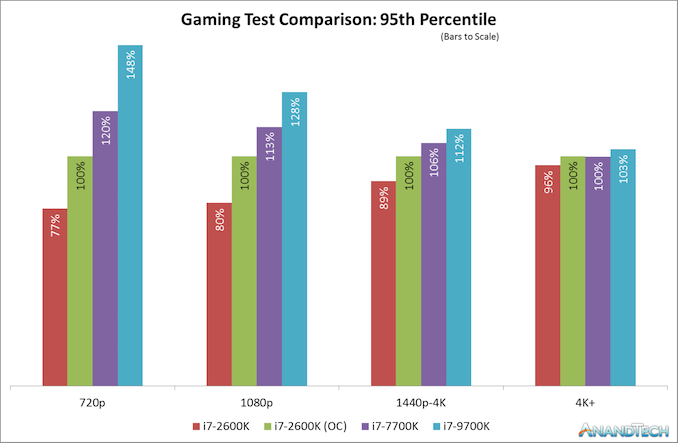

That is, at least on average frame rates. Modern games and modern testing methods now test percentile frame rates, and the results are a little different.

Here the results look a little worse for the Core i7-2600K and a bit better for the Core i7-9700K, but on the whole the broad picture is the same for percentile results as it is for average frame rate results. In the individual results, we see some odd outliers, such as Ashes of the Singularity, where a stock 2600K was 15% down on percentiles at 4K while the 9700K was only 6% higher than an overclocked 2600K. As with the average frame rates, it is really title dependent.
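For readers unfamiliar with the distinction, the sketch below shows one common way to derive an average frame rate and a percentile frame rate from a list of per-frame render times. It is an illustration only: the 95th percentile, the nearest-rank method, and the sample frame times are all assumptions made for the example, not a description of the exact method or data behind the charts above.

```python
import math


def average_fps(frame_times_ms):
    """Average frame rate: total frames divided by total time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_fps(frame_times_ms, pct=95):
    """Frame rate at the pct-th percentile frame time (nearest-rank method).

    A high percentile of frame *time* picks out the slow frames, so the
    resulting frame rate describes how the game feels during its dips.
    """
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return 1000.0 / ordered[rank - 1]


# Hypothetical run: 18 smooth frames at 10 ms and 2 stutters at 40 ms.
sample = [10.0] * 18 + [40.0] * 2

print(f"Average: {average_fps(sample):.1f} FPS")
print(f"95th percentile: {percentile_fps(sample):.1f} FPS")
```

In this made-up run the average is about 77 FPS, but the 95th percentile figure is only 25 FPS, which is why a CPU can look fine on averages while still producing noticeable stutter.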

213 Comments

StrangerGuy - Saturday, May 11, 2019 - link

One thing I want to point out that modern games are far less demanding relative to the CPU versus games in the 90s. If anyone thinks their 8 year old Sandy Bridge quad is having it sort of rough today, they are probably not around to remember running Half-Life comfortably above 60 FPS at least needed a CPU that was released 2 years later.

versesuvius - Saturday, May 11, 2019 - link

There is a point in every Windows OS user computer endeavors, that they start playing less and less games, and at about the same time start foregoing upgrades to their CPU. They keep adding ram and hard disk space and maybe a new graphic card after a couple of years. The only reason that such a person that by now has completely stopped playing games may upgrade to a new CPU and motherboard is the maximum amount of RAM that can be installed on their motherboard. And with that really comes the final PC that such a person may have in a long, long time. Kids get the latest CPU and soon will realize the law of diminishing returns, which by now is gradually approaching "no return", much faster than their parents. So, in perhaps ten years there will be no more "Tic", or "Toc" or Cadence or Moore's law. There be will computers, baring the possibility that dumb terminals have replaced PCs, that everybody knows what they can expect from. No serendipity there for certain.

Targon - Tuesday, May 14, 2019 - link

The fact that you don't see really interesting games showing up all that often is why many people stopped playing games in the first place. Many people enjoyed the old adventure games with puzzles, and while action appeals to younger players, being more strategic and needing to come up with different approaches in how you play has largely died. Interplay is gone, Bullfrog, Lionhead.... On occasion something will come out, but few and far between.

Games for adults (and not just adult age children who want to play soldier on the computer) are not all that common. I blame EA for much of the decline in the industry.

skirmash - Saturday, May 11, 2019 - link

I still have an i7-2600 in an old Dell based upon an H67 chipset. I was thinking about using it as a server and updating the board to get updated connectivity. updating the board and using it as a server. Z77 chipset would seem to be the way to go although getting a new board with this chipset seems expensive unless I go used. Anyone any thoughts on this - whether its worthwhile etc or a cost effective way to do it?

skirmash - Saturday, May 11, 2019 - link

Sorry for the typos but I hope you get the sentiment.

Tunnah - Saturday, May 11, 2019 - link

Oh wow this is insane timing, I'm actually upgrading from one of these and have had a hard time figuring out what sort of performance upgrade I'd be getting. Much appreciated!

Tunnah - Saturday, May 11, 2019 - link

I feel like I can chip in a perspective re: gaming. While your benchmarks show solid average FPS and all that, they don't show the quality of life that you lose by having an underpowered CPU. I game at 4K, 2700k (4.6ghz for heat&noise reasons), 1080Ti, and regularly can't get 60fps no matter the settings, or have constant frame blips and dips. This is in comparison to a friend who has the same card but a Ryzen 1700X.

Newer games like Division 2, Assassin's Creed Odyssey, and as shown here, Shadow Of The Tomb Raider, all severely limit your performance if you have an older CPU, to the point where getting a constant 60fps is a real struggle, and benchmarks aside, that's the only benchmark the average user is aiming for.

I also have 1333mhz RAM, which is just a whole other pain! As more and more games move into giant open world games and texture streaming and loading is happening in game rather than on loading screens, having slow RAM really affects your enjoyment.

I'm incredibly grateful for this piece btw, I'm actually moving to Zen2 when it comes out, and I gotta say, I've not been this excited since..well, Sandy Bridge.

Death666Angel - Saturday, May 11, 2019 - link

"I don’t think I purchased a monitor bigger than 1080p until 2012."

Wow, really? So you were a CRT guy before that? How could you work on those low res screens all the time?! :D I got myself a 1200p 24" monitor once they became affordable in early 2008 (W2408hH). Had a 1280x1024 19" before that and it was night and day, sooo much better.

PeachNCream - Sunday, May 12, 2019 - link

Still running 1366x768 on my two non-Windows laptops (HP Steam 11 and Dell Latitude e6320) and it okay. My latest, far less uses Windows gaming system has a 14 inch panel running 1600x900. Its a slight improvement, but I could live without it. The old Latitude does all my video production work so though I could use a few more pixels, it isn't the end of the world as is. The laptop my office issued is a HP Probook 640 G3 so it has a 14 inch 1080p panel which to have to scale at 125% to actually use so the resolution is pretty much pointless.

PeachNCream - Sunday, May 12, 2019 - link

Ugh, phone auto correct...I really need to look over anything I type on a phone more closely. I feel like I'm reading comment by a non-native English speaker, but its me. How depressing.