The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Compute & Synthetics

Shifting gears, we'll look at the compute and synthetic aspects of the GTX 1660 Ti.

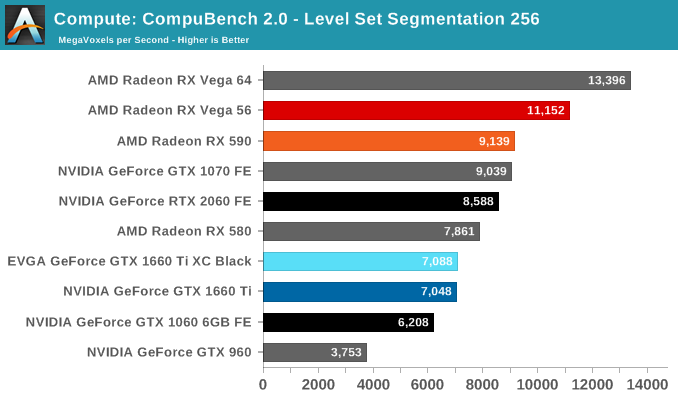

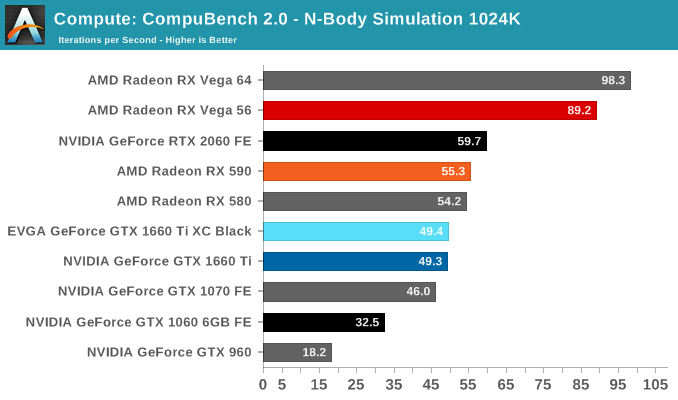

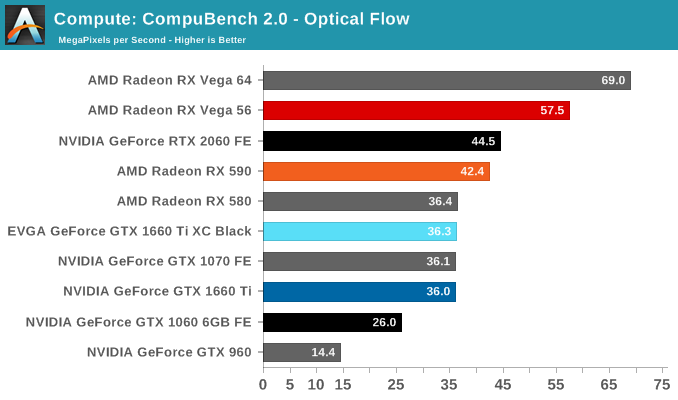

Beginning with CompuBench 2.0, the latest iteration of Kishonti's GPU compute benchmark suite offers a wide array of different practical compute workloads, and we’ve decided to focus on level set segmentation, optical flow modeling, and N-Body physics simulations.

On paper, the GTX 1660 Ti looks to provide around 85% of the RTX 2060's compute and shading throughput; in CompuBench, we see it achieving around 82% of the latter's performance.
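For readers who want to see where an on-paper figure like this comes from, here is a minimal sketch of the usual back-of-the-envelope calculation: shader cores times two FLOPs per clock (one fused multiply-add) times boost clock. The core counts and boost clocks below are assumed reference specifications and are not stated on this page; the function name is ours.

```python
# Back-of-the-envelope peak FP32 throughput: cores x 2 FLOPs (one FMA) x clock.
# Core counts and boost clocks are ASSUMED reference specs, not taken from this page.

def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak single-precision TFLOPS, counting one fused multiply-add as 2 FLOPs."""
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12

gtx_1660_ti = fp32_tflops(cuda_cores=1536, boost_mhz=1770)  # assumed specs
rtx_2060 = fp32_tflops(cuda_cores=1920, boost_mhz=1680)     # assumed specs
paper_ratio = gtx_1660_ti / rtx_2060

print(f"GTX 1660 Ti: {gtx_1660_ti:.2f} TFLOPS")
print(f"RTX 2060:    {rtx_2060:.2f} TFLOPS")
print(f"On-paper ratio: {paper_ratio:.0%}")
```

With these assumed inputs the ratio works out to roughly 84%, in line with the "around 85%" figure; actual cards boost differently in practice, so treat this as an estimate rather than a measurement.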

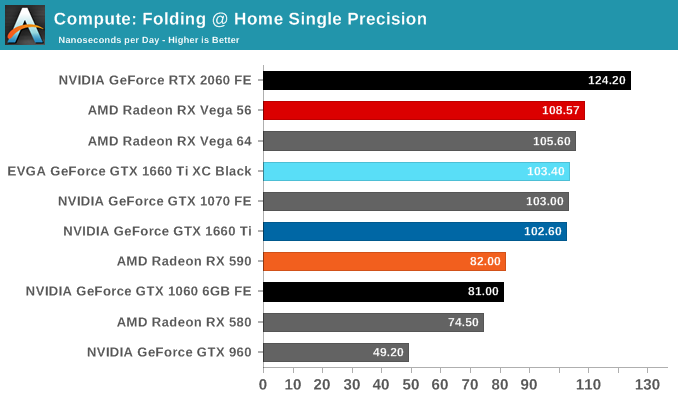

Moving on, we'll also look at single precision floating point performance with FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance.

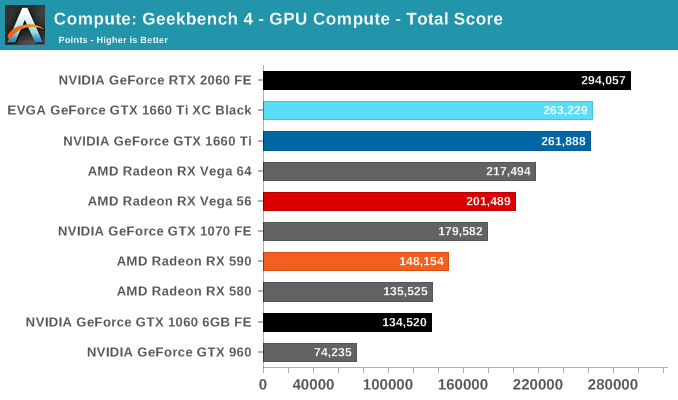

Next is Geekbench 4's GPU compute suite. A multi-faceted test suite, Geekbench 4 runs seven different GPU sub-tests, ranging from face detection to FFTs, and then averages out their scores via their geometric mean. As a result Geekbench 4 isn't testing any one workload, but rather is an average of many different basic workloads.
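To illustrate what averaging by geometric mean implies, here is a small sketch. It is not Geekbench's actual scoring code, and the sub-test scores are hypothetical values chosen only to make the arithmetic easy to follow.

```python
import math

def geometric_mean(scores):
    """Geometric mean computed via logarithms; every score must be positive."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty sequence of positive numbers")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))

# HYPOTHETICAL sub-test scores for illustration only; not measured results.
example_scores = [100.0, 400.0]

geo = geometric_mean(example_scores)
arith = sum(example_scores) / len(example_scores)

print(f"geometric mean:  {geo:.1f}")
print(f"arithmetic mean: {arith:.1f}")
```

Because the geometric mean works on ratios, a single unusually high sub-score pulls the composite up less than it would with a plain arithmetic average.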

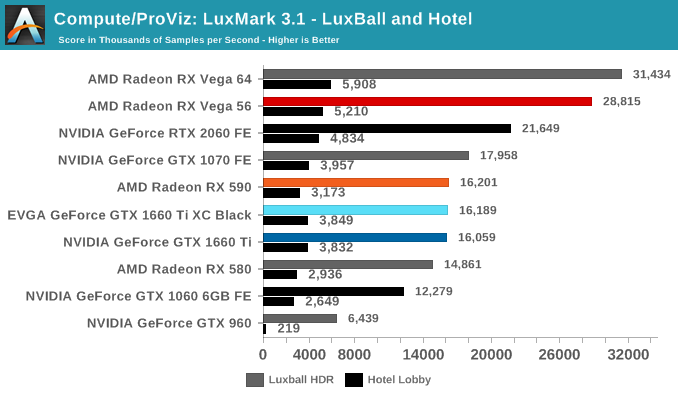

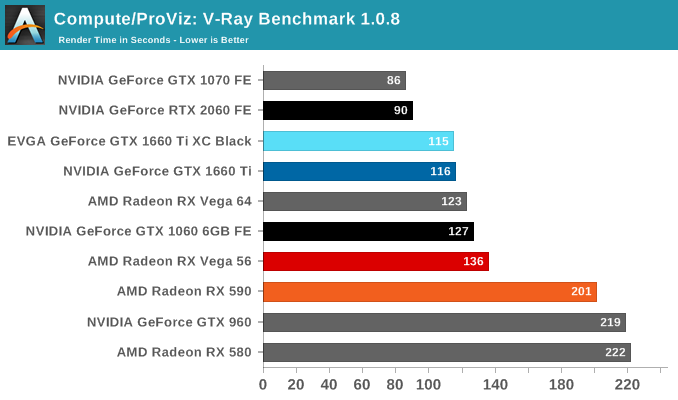

In lieu of Blender, which has yet to officially release a stable version with CUDA 10 support, we have the LuxRender-based LuxMark (OpenCL) and V-Ray (OpenCL and CUDA).

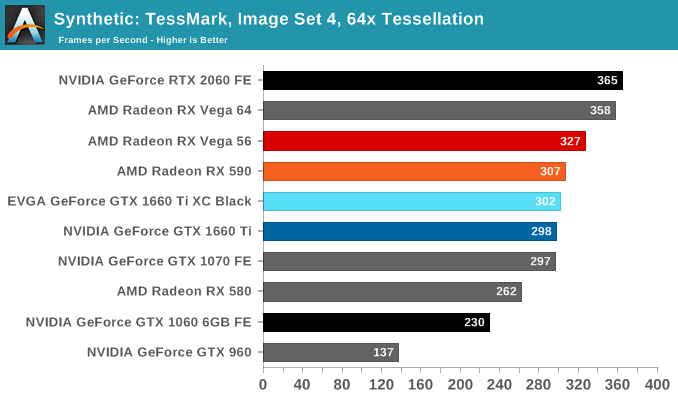

We'll also take a quick look at tessellation performance.

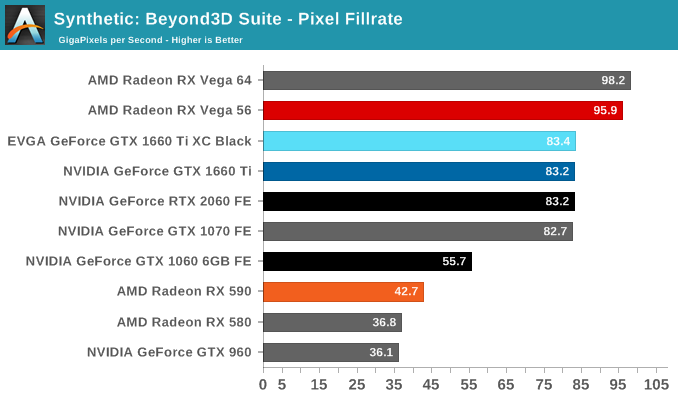

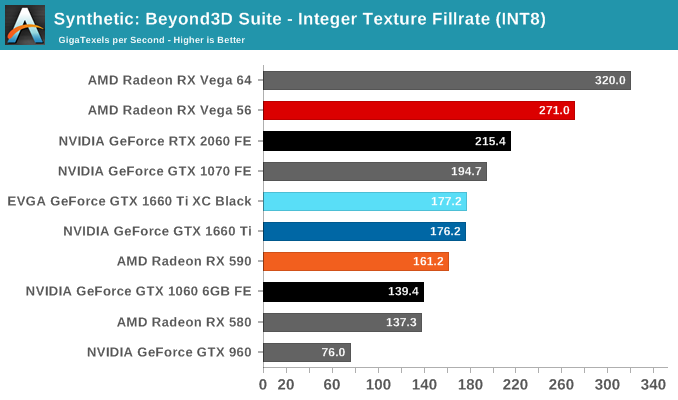

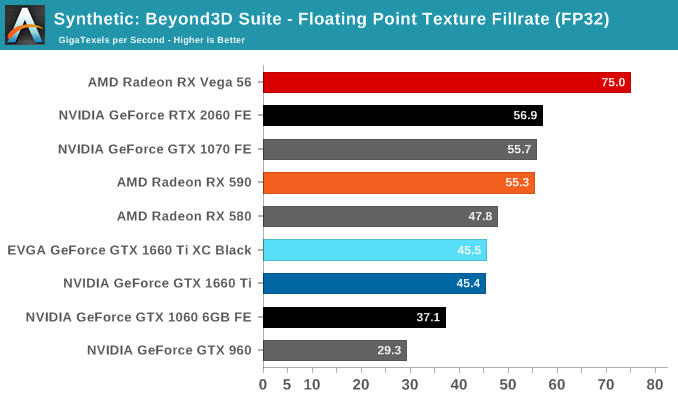

Finally, for looking at texel and pixel fillrate, we have the Beyond3D Test Suite. This test offers a slew of additional tests – many of which we use behind the scenes or in our earlier architectural analysis – but for now we’ll stick to simple pixel and texel fillrates.

The practically identical pixel fill rates for the GTX 1660 Ti and RTX 2060 might seem odd at first blush, but it is an entirely expected result as both GPUs have the same number of ROPs, similar clockspeeds, the same GPC/TPC setup, and similar memory configurations. And being the same generation/architecture, there aren't any changes or improvements to DCC. In the same vein, the RTX 2060 puts up a 25% higher texture fillrate over the GTX 1660 Ti as a consequence of having 25% more TMUs (120 vs 96).
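The relationship between unit counts and theoretical fillrate can be sketched as units times clock. In the sketch below, the TMU counts (96 and 120) come from the text; the ROP count of 48 and the shared 1700 MHz clock are assumptions, with the clock chosen only to isolate the effect of unit count.

```python
def fillrate_gunits(units: int, clock_mhz: float) -> float:
    """Theoretical fillrate in billions of pixels or texels per second."""
    return units * clock_mhz / 1000.0

# ASSUMPTIONS: identical clock for both cards (placeholder value) and 48 ROPs each.
ASSUMED_CLOCK_MHZ = 1700.0
ASSUMED_ROPS = 48

# TMU counts as given in the text.
texel_1660_ti = fillrate_gunits(96, ASSUMED_CLOCK_MHZ)
texel_2060 = fillrate_gunits(120, ASSUMED_CLOCK_MHZ)
texel_advantage = texel_2060 / texel_1660_ti - 1

# Same ROP count and same clock give the same theoretical pixel fillrate.
pixel_1660_ti = fillrate_gunits(ASSUMED_ROPS, ASSUMED_CLOCK_MHZ)
pixel_2060 = fillrate_gunits(ASSUMED_ROPS, ASSUMED_CLOCK_MHZ)

print(f"RTX 2060 texel fillrate advantage: {texel_advantage:.0%}")
print(f"Pixel fillrates equal: {pixel_1660_ti == pixel_2060}")
```

At equal clocks the texel advantage equals the TMU ratio, 120/96, while the pixel figures match; real cards run at slightly different clocks, so measured gaps will not land exactly on these numbers.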

157 Comments

Psycho_McCrazy - Tuesday, February 26, 2019 - link

Given that 21:9 monitors are also making great inroads into the gamer's purchase lists, can benchmark resolutions also include 2560.1080p, 3440.1440p and (my wishlist) 3840.1600p benchies??

eddman - Tuesday, February 26, 2019 - link

2560x1080, 3440x1440 and 3840x1600

That's how you right it, and the "p" should not be used when stating the full resolution, since it's only supposed to be used for denoting video format resolution.

P.S. using 1080p, etc. for display resolutions isn't technically correct either, but it's too late for that.

Ginpo236 - Tuesday, February 26, 2019 - link

a 3-slot ITX-sized graphics card. What ITX case can support this? 0.

bajs11 - Tuesday, February 26, 2019 - link

Why can't they just make a GTX 2080Ti with the same performance as RTX 2080Ti but without useless RT and dlss and charge something like 899 usd (still 100 bucks more than gtx 1080ti)?

i bet it will sell like hotcakes or at least better than their overpriced RTX2080ti

peevee - Tuesday, February 26, 2019 - link

Do I understand correctly that this thing does not have PCIe4?

CiccioB - Thursday, February 28, 2019 - link

No, they have not a PCIe4 bus.

Do you think they should have?

Questor - Wednesday, February 27, 2019 - link

Why do I feel like this was a panic plan in an attempt to bandage the bleed from RTX failure? No support at launch and months later still abysmal support on a non-game changing and insanely expensive technology.

I am not falling for it.

CiccioB - Thursday, February 28, 2019 - link

Yes, a "panic plan" that required about 3 years to create the chips.

3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have.

They didn't know that the concurrent would have played with the only weapon it was left to it to battle, that is prize as they could not think that the concurrent was not ready with a beefed up architecture capable of the sa functionalities.

So, yes, they panicked for sure. They were not prepared to anything of what is happening,

Korguz - Friday, March 1, 2019 - link

" that required about 3 years to create the chips.3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have. "

and where did you read this ? you do understand, and realize... is IS possible to either disable, or remove parts of an IC with out having to spend " about 3 years " to create the product, right ? intel does it with their IGP in their cpus, amd did it back in the Phenom days with chips like the Phenom X4 and X3....

CiccioB - Tuesday, March 5, 2019 - link

So they created a TU116, a completely new die without RT and Tensor Core, to reduce the size of the die and lose about 15% of performance with respect to the 2060 all in 3 months because they panicked?

You probably have no idea of what are the efforts to create a 280mm^2 new die.

Well, by this and your previous posts you don't have idea of what you are talking about at all.