The AMD Radeon VII Review: An Unexpected Shot At The High-End

by Nate Oh on February 7, 2019 9:00 AM EST

Radeon VII & Radeon RX Vega 64 Clock-for-Clock Performance

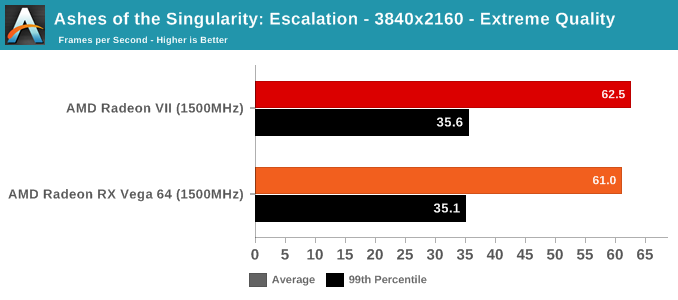

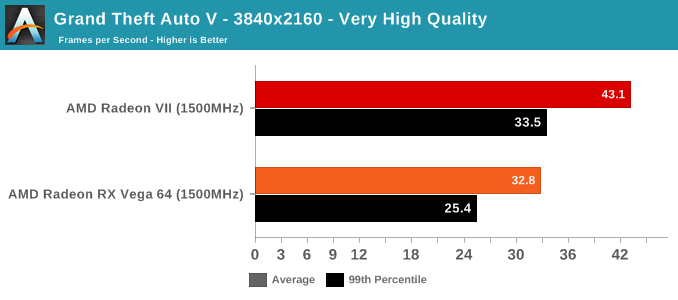

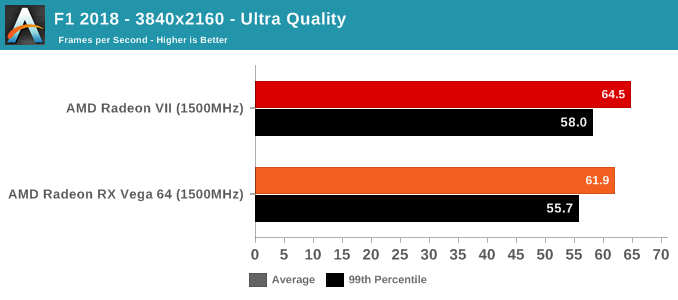

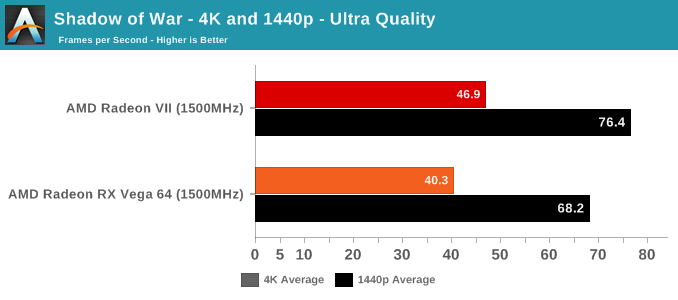

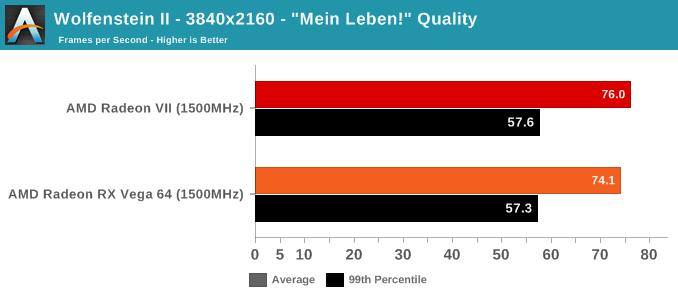

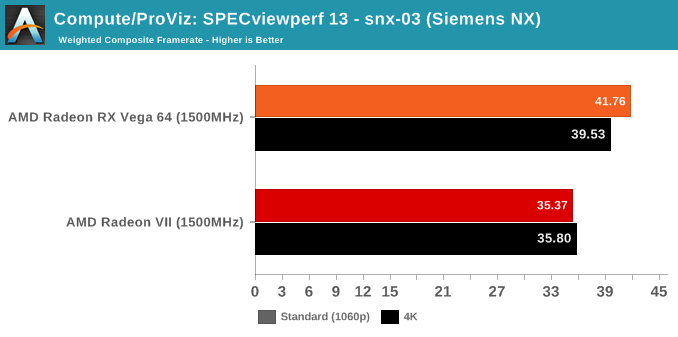

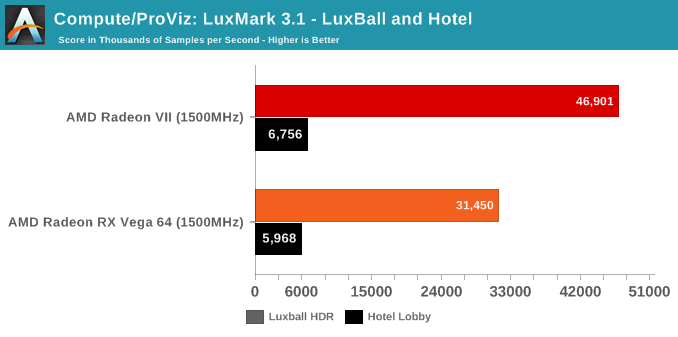

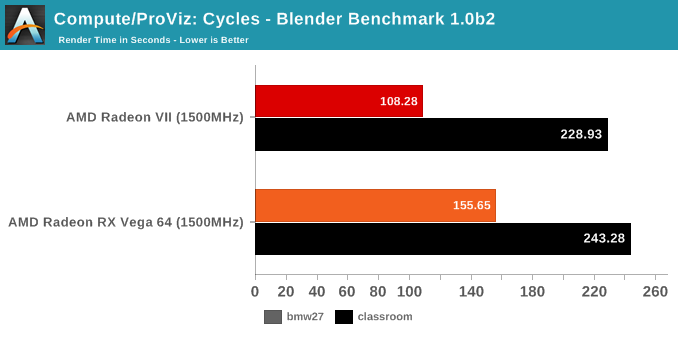

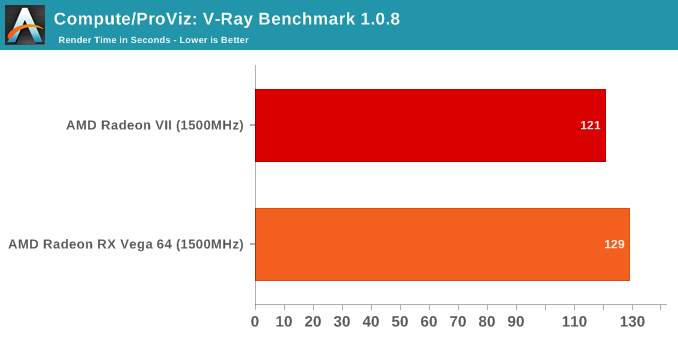

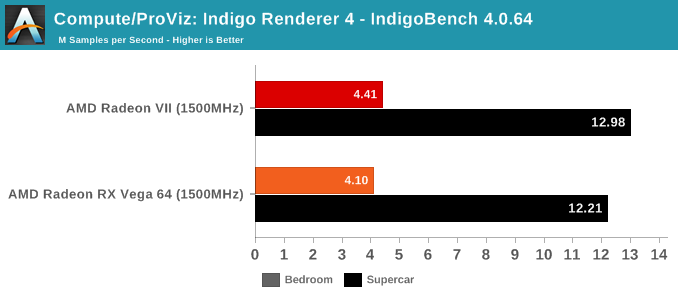

With the variety of changes from the Vega 10-powered RX Vega 64 to the new Radeon VII and its Vega 20 GPU, we wanted to look at gaming and compute performance while controlling for clockspeeds. This lets us peek at any substantial improvements or differences in pseudo-IPC. There are a couple of caveats here. First, because the RX Vega 64 has 64 CUs while the Radeon VII has only 60, the comparison is already inexact. Second, "IPC" is not exactly the metric measured here; what we are really measuring is how much graphics/compute work is done per clock cycle and how that might translate to performance. Isoclock GPU comparisons tend to be less useful across generations and architectures, because designers often add pipeline stages to enable higher clockspeeds (as was done with Vega), at the cost of reducing the work done per cycle and usually increasing latency as well.
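To put the CU caveat in numbers, the sketch below computes theoretical peak FP32 throughput for both cards at the 1500MHz test clock. It assumes the standard GCN layout of 64 stream processors per CU, with one fused multiply-add (counted as 2 FLOPs) per stream processor per clock; the function and constant names are our own, chosen for illustration. It is a paper figure, not a measurement.

```python
# Sketch: theoretical peak FP32 throughput at a fixed clock, assuming the
# GCN layout of 64 stream processors per CU and one FMA (2 FLOPs) per
# stream processor per clock. Names here are illustrative only.
SP_PER_CU = 64
FLOPS_PER_SP_PER_CLOCK = 2  # one fused multiply-add counts as 2 FLOPs


def peak_fp32_tflops(cus: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 rate in TFLOPS for a GCN-style GPU."""
    return cus * SP_PER_CU * FLOPS_PER_SP_PER_CLOCK * clock_mhz * 1e6 / 1e12


vega64 = peak_fp32_tflops(64, 1500)      # RX Vega 64: 64 CUs
radeon_vii = peak_fp32_tflops(60, 1500)  # Radeon VII: 60 CUs
print(f"RX Vega 64 @ 1500 MHz: {vega64:.3f} TFLOPS")
print(f"Radeon VII @ 1500 MHz: {radeon_vii:.3f} TFLOPS")
print(f"Radeon VII / Vega 64:  {radeon_vii / vega64:.4f}")
```

At identical clocks the Radeon VII starts with 60/64 = 0.9375 of the Vega 64's raw shader throughput, so merely matching the older card in a shader-bound test would already imply more work per CU per clock.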

For our purposes, the incremental nature of second-generation Vega allays some of those concerns. Unfortunately, Wattman was unable to downclock memory at this time, so we couldn't get a set of datapoints with both cards configured for comparable memory bandwidth. While Vega's GPU boost mechanics mean there's no static, pinned clockspeed, both cards were set to 1500MHz, and both fluctuated between 1490 and 1500MHz depending on the workload. Taken together, this means these results should be treated as approximations that lack granularity, but they are useful for spotting significant increases or decreases. It also makes interpreting the results trickier. At a high level, though, if the Radeon VII outperforms the RX Vega 64 in a given non-memory-bound workload despite having fewer CUs, then we can assume meaningful 'work per cycle' enhancements relatively decoupled from CU count.
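The interpretation rule above can be sketched as a small classifier. This is a hypothetical helper, not our actual analysis tooling: the 2% noise band is an assumed tolerance for clock wander and run-to-run variance, and the sample numbers are made up purely to show the call. Memory-bound tests are flagged as inconclusive because memory bandwidth could not be equalized.

```python
from dataclasses import dataclass

# CU counts from the text: Radeon VII has 60 CUs, RX Vega 64 has 64.
RADEON_VII_CUS = 60
VEGA64_CUS = 64

# Hypothetical tolerance: clocks wandered between 1490 and 1500 MHz,
# so small differences are treated as noise, not architectural change.
NOISE_BAND = 0.02


@dataclass
class IsoclockResult:
    name: str
    radeon_vii: float   # score at 1500 MHz, higher is better (e.g. average fps)
    vega64: float       # score at 1500 MHz on the RX Vega 64
    memory_bound: bool  # bandwidth could not be equalized, so flag it


def classify(result: IsoclockResult) -> tuple[float, float, str]:
    """Return (raw ratio, per-CU ratio, verdict) for one isoclock test."""
    raw = result.radeon_vii / result.vega64
    # Scale by CU count: work per Radeon VII CU per clock vs. a Vega 64 CU.
    per_cu = raw * VEGA64_CUS / RADEON_VII_CUS
    if result.memory_bound:
        verdict = "inconclusive: memory bandwidth not controlled"
    elif raw > 1 + NOISE_BAND:
        # Faster with fewer CUs at the same clock: points at per-cycle gains.
        verdict = "likely work-per-cycle gain"
    elif raw < 1 - NOISE_BAND:
        verdict = "slower at isoclock"
    else:
        verdict = "within noise"
    return raw, per_cu, verdict


# Made-up numbers purely to show the call; not results from this review.
print(classify(IsoclockResult("hypothetical compute test", 105.0, 100.0, False)))
```

The per-CU ratio is reported alongside the verdict because a result that is "slower at isoclock" can still exceed 1.0 per CU, given the Radeon VII's four-CU deficit.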

As mentioned above, we were not able to control for the doubled memory bandwidth. In terms of gaming, though, the only unexpected result is with GTA V. As an outlier, it's less likely to indicate increased gaming 'work per cycle' and more likely to be related to driver optimization and the memory bandwidth increase. GTA V has historically been a title where AMD hardware doesn't reach the expected level of performance, so there has been room for driver improvement regardless.
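For a sense of how large the uncontrolled bandwidth gap is, peak memory bandwidth is simply bus width times per-pin data rate, divided by 8 bits per byte. The bus widths and data rates below are assumed from AMD's published reference specifications (4096-bit HBM2 at 2.0Gbps for the Radeon VII, 2048-bit HBM2 at 1.89Gbps for the RX Vega 64), not measured in this test; the helper name is our own. Because Wattman couldn't downclock memory, this ratio holds at 1500MHz core as well.

```python
def hbm2_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) x per-pin rate (Gb/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# Assumed reference specs, not measurements from this review.
radeon_vii_bw = hbm2_bandwidth_gbs(4096, 2.0)  # four HBM2 stacks
vega64_bw = hbm2_bandwidth_gbs(2048, 1.89)     # two HBM2 stacks
print(f"Radeon VII: {radeon_vii_bw:.1f} GB/s")
print(f"RX Vega 64: {vega64_bw:.1f} GB/s")
print(f"Ratio:      {radeon_vii_bw / vega64_bw:.2f}x")
```

Any workload that leans on memory can therefore gain roughly 2x headroom at isoclock, which is why a lone outlier like GTA V is hard to attribute to per-cycle shader improvements.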

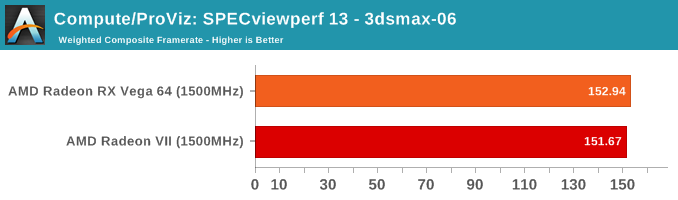

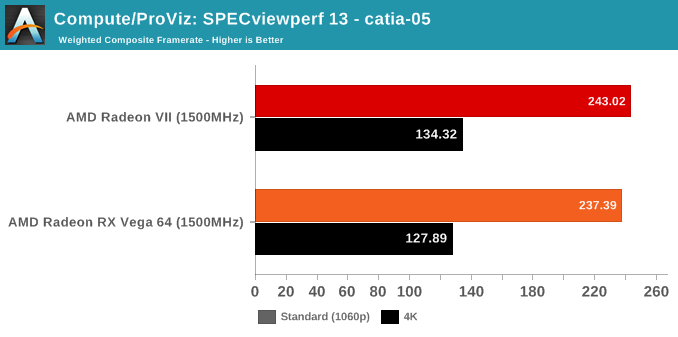

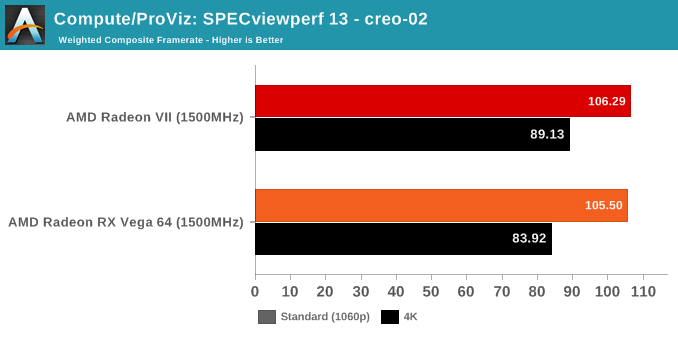

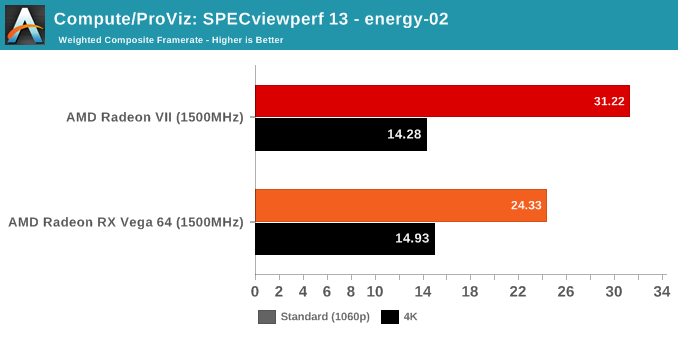

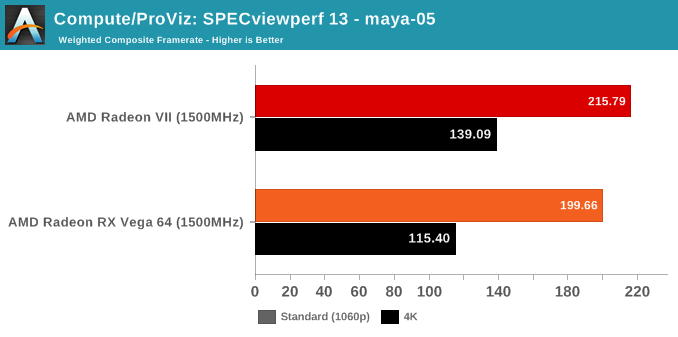

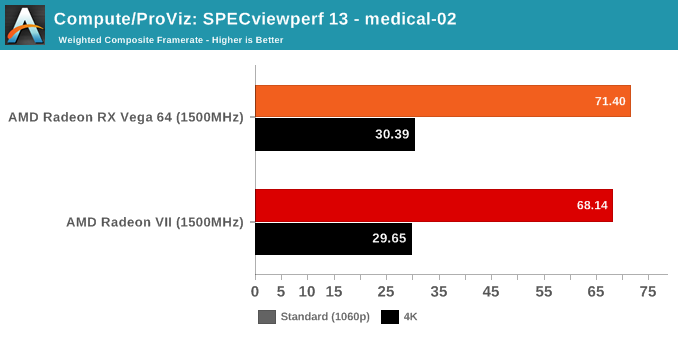

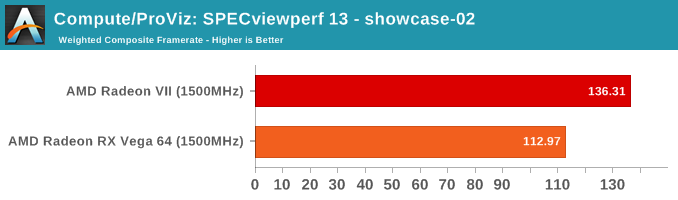

SPECviewperf is a slightly different story, though.

289 Comments


tipoo - Sunday, February 10, 2019

It's MI50

vanilla_gorilla - Thursday, February 7, 2019

As a linux prosumer user who does light gaming, this card is a slam dunk for me.

LogitechFan - Friday, February 8, 2019

and a noisy one at that

BaneSilvermoon - Thursday, February 7, 2019

Meh, I went looking for a 16GB card about a week before they announced Radeon VII because gaming was using up all 8gb of VRAM and 14gb of system RAM. This card is a no brainer upgrade from my Vega 64.

LogitechFan - Friday, February 8, 2019

lemme guess, you're playing sandstorm?

Gastec - Tuesday, February 12, 2019

I was beginning to think that the "money" was in cryptocurrency mining with video cards but I guess after the €1500+ RTX 2080Ti I should reconsider :)

eddman - Thursday, February 7, 2019

Perhaps but Turing is also a new architecture, so it's probable it'd get better with newer drivers too. Maxwell is from 2014 and still performs as it should.

As for GPU-accelerated gameworks, obviously nvidia is optimizing it for their own cards only, but that doesn't mean they actively modify the code to make it perform worse on AMD cards; not to mention it would be illegal. (GPU-only gameworks effects can be disabled in game options if need be)

Many (most?) games just utilize the CPU-only gameworks modules; no performance difference between cards.

ccfly - Tuesday, February 12, 2019

you joking right? 1st game they did just that is crysis (they hid models under water so ati cards would render these too and be slower)

and after that they cheat full time ...

eddman - Tuesday, February 12, 2019

No, I'm not. There was no proof of misconduct in crysis 2's case, just baseless rumors.

For all we know, it was an oversight on crytek's part. Also, DX11 was an optional feature, meaning it wasn't part of game's main code, as I've stated.

eddman - Tuesday, February 12, 2019

... I mean an optional toggle for crysis 2. The game could be run in DX9 mode.