AMD Reveals Radeon VII: High-End 7nm Vega Video Card Arrives February 7th for $699

by Ryan Smith on January 9, 2019 1:00 PM EST

As it turns out, the video card wars are going to charge into 2019 quite a bit hotter than any of us were expecting. Moments ago, as part of AMD’s CES 2019 keynote, CEO Dr. Lisa Su announced that AMD will be releasing a new high-end, high-performance Radeon graphics card. With the card, dubbed the Radeon VII (Seven), AMD has their eyes set on countering NVIDIA’s previously untouchable GeForce RTX 2080. And, if the card lives up to AMD’s expectations, then come February 7th it may very well do just that.

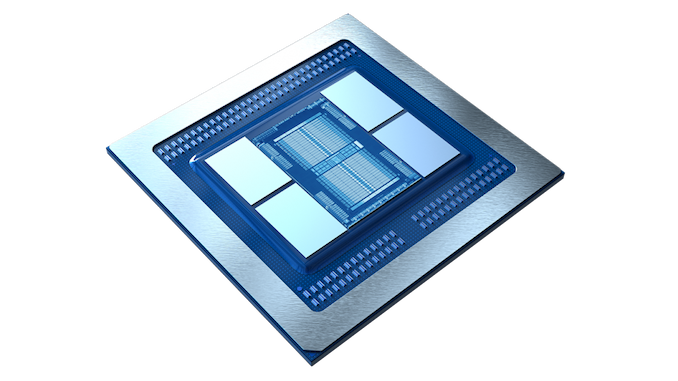

Today’s announcement is interesting in that it’s just as much about technology as it is about the 3D chess of the market positioning fight between AMD and NVIDIA. Technically AMD isn’t announcing any new GPUs here – regular readers will correctly guess that we’re talking about Vega 20 – but the situation in the high-end market has played out such that there’s now a window for AMD to bring their cutting-edge Vega 20 GPU to the consumer market, and this is a window AMD is looking to take full advantage of.

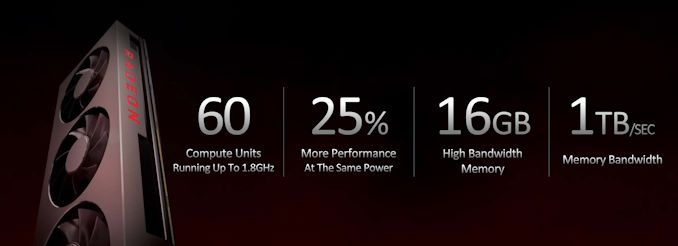

At a high level then, the Radeon VII employs a slightly cut down version of AMD’s Vega 20 GPU. With 60 of 64 CUs enabled, it actually has slightly fewer CUs than AMD’s previous flagship, the Radeon RX Vega 64, but it makes up for the loss with much higher clockspeeds and a much more powerful memory and pixel throughput backend. As a result, AMD says that the Radeon VII should beat their former flagship by anywhere between 20% and 42% depending on the game (with an overall average of 29%), which on paper would be just enough to put the card within spitting distance of NVIDIA’s RTX 2080 and make it a viable and competitive 4K gaming card.

AMD Radeon Series Specification Comparison

| | AMD Radeon VII | AMD Radeon RX Vega 64 | AMD Radeon RX 590 | AMD Radeon R9 Fury X |
|---|---|---|---|---|
| Stream Processors | 3840 (60 CUs) | 4096 (64 CUs) | 2304 (36 CUs) | 4096 (64 CUs) |
| ROPs | 64 | 64 | 32 | 64 |
| Base Clock | ? | 1247MHz | 1469MHz | N/A |
| Boost Clock | 1800MHz | 1546MHz | 1545MHz | 1050MHz |
| Memory Clock | 2.0Gbps HBM2 | 1.89Gbps HBM2 | 8Gbps GDDR5 | 1Gbps HBM |
| Memory Bus Width | 4096-bit | 2048-bit | 256-bit | 4096-bit |
| VRAM | 16GB | 8GB | 8GB | 4GB |
| Single Precision Perf. | 13.8 TFLOPS | 12.7 TFLOPS | 7.1 TFLOPS | 8.6 TFLOPS |
| Board Power | 300W? | 295W | 225W | 275W |
| Manufacturing Process | TSMC 7nm | GloFo 14nm | GloFo/Samsung 12nm | TSMC 28nm |
| GPU | Vega 20 | Vega 10 | Polaris 30 | Fiji |
| Architecture | Vega (GCN 5) | Vega (GCN 5) | GCN 4 | GCN 3 |
| Transistor Count | 13.2B | 12.5B | 5.7B | 8.9B |
| Launch Date | 02/07/2019 | 08/14/2017 | 11/15/2018 | 06/24/2015 |
| Launch Price | $699 | $499 | $279 | $649 |

Diving into the numbers a bit more, if you took AMD’s second-tier Radeon Instinct MI50 and made a consumer version of the card, the Radeon VII is almost exactly what it would look like. It has the same 60 CU configuration paired with 16GB of HBM2 memory. However the Radeon VII’s boost clock is a bit higher – 1800MHz versus 1746MHz – so AMD is getting the most out of those 60 CUs. Still, it’s important to keep in mind that from a pure FP32 throughput standpoint, the Vega 20 GPU was meant to be more of a sidegrade to Vega 10 than a performance upgrade; on paper the new card only has a 9% compute throughput advantage. So it’s not on compute throughput where Radeon VII’s real winning charm lies.
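
For readers who want to check the 9% figure, here is a minimal sketch of the arithmetic. It assumes the usual convention that each stream processor retires one fused multiply-add (counted as 2 FLOPs) per clock at the boost frequency; the function name is purely illustrative, and real-world clocks will vary with power and thermals.

```python
# Peak FP32 throughput, assuming 2 FLOPs (one fused multiply-add)
# per stream processor per clock. This is a paper number only.
def peak_fp32_tflops(stream_processors: int, boost_clock_mhz: float) -> float:
    flops_per_clock = 2  # assumption: one FMA per stream processor per clock
    return stream_processors * flops_per_clock * boost_clock_mhz * 1e6 / 1e12

# Stream processor counts and boost clocks taken from the spec table.
radeon_vii = peak_fp32_tflops(3840, 1800)
vega_64 = peak_fp32_tflops(4096, 1546)
advantage = radeon_vii / vega_64 - 1

print(f"Radeon VII: {radeon_vii:.1f} TFLOPS")
print(f"RX Vega 64: {vega_64:.1f} TFLOPS")
print(f"Paper advantage: {advantage:.1%}")
```

Under that assumption the results line up with the 13.8 and 12.7 TFLOPS figures in the table, and the ratio works out to roughly 9%: the higher boost clock more than offsets the four disabled CUs, but not by much.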

Instead, the biggest difference between the two cards is on the memory backend. Radeon Vega 64 (Vega 10) has 2 HBM2 memory channels running at 1.89Gbps each, for a total of 484GB/sec of memory bandwidth. Radeon VII (Vega 20) doubles this and then some to 4 HBM2 memory channels, which also means memory capacity has doubled to 16GB. And then there’s the clockspeed boost on top of this to 2.0Gbps for the HBM2 memory. As a result, Radeon VII has a lot of memory bandwidth to feed itself, from the ROPs to the stream processors. Given these changes and AMD’s performance estimates, I think this lends a lot of evidence to the idea that Vega 10 was underfed – it needed more memory bandwidth to keep its various processing blocks working at full potential – but that’s something we’ll save for the eventual review.
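
The bandwidth numbers fall out of simple arithmetic: bus width in bits times the per-pin data rate, divided by 8 to convert bits to bytes. The sketch below applies that to the bus widths and memory clocks from the spec table; the helper name is hypothetical, and these are theoretical peaks rather than measured figures.

```python
# Theoretical peak memory bandwidth in GB/sec:
# (bus width in bits) x (per-pin data rate in Gbps) / 8 bits per byte.
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    return bus_width_bits * data_rate_gbps / 8

# Bus widths and data rates taken from the spec table.
vega_64_bw = memory_bandwidth_gbs(2048, 1.89)
radeon_vii_bw = memory_bandwidth_gbs(4096, 2.0)
ratio = radeon_vii_bw / vega_64_bw

print(f"RX Vega 64: {vega_64_bw:.0f} GB/sec")
print(f"Radeon VII: {radeon_vii_bw:.0f} GB/sec")
print(f"Ratio: {ratio:.2f}x")
```

This reproduces the 484GB/sec figure for the Vega 64 and puts the Radeon VII at 1024GB/sec on paper, a little over twice as much, which is the "doubles this and then some" described above.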

Past that, as this is still a Vega architecture product, it’s the Vega we all know and love. There are no new graphical features here, so even if AMD has opted to shy away from putting Vega in the name of the product, it’s going to be comparable to those parts as far as gaming is concerned. The Vega 20 GPU does bring new compute features – particularly much higher FP64 compute throughput and new low-precision modes well-suited for neural network inferencing – but these features aren’t something consumers are likely to use. Meanwhile, AMD will be employing some mild product segmentation here to avoid having the Radeon VII cannibalize the MI50: the Radeon VII does not get PCIe 4.0 support, nor does it get Infinity Link support.

The other wildcard for the moment is TDP. The MI50 is rated for 300W, and while AMD’s event did not announce a TDP for the card, I fully expect AMD is running the Radeon VII just as hard here, if not a bit harder. Make no mistake: AMD is still having to go well outside the sweet spot on their voltage/frequency curve to hit these high clockspeeds, so AMD isn’t even trying to win the efficiency race. Radeon VII will be more efficient than Radeon Vega 64 – AMD is saying 25% more perf at the same power – but even if AMD hits RTX 2080’s performance numbers, there’s nothing here to indicate that they’ll be able to meet its efficiency. This is another classic AMD play: go all-in on trying to win on the price/performance front.

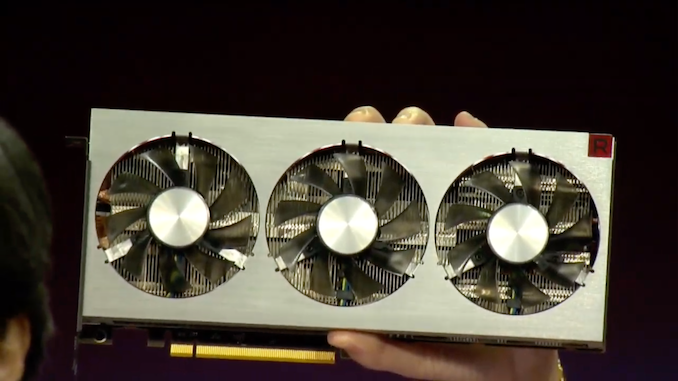

Accordingly, the Radeon VII is not a small card. The photos released show that it’s a sizable open-air triple fan cooled design, with a shroud that sticks up past the top of the I/O bracket. Couple that with the dual 8-pin PCIe power plugs on the rear of the card, and it’s clear AMD intends to remove a lot of heat. Both AMD and NVIDIA have now gone with open-air designs for their high-end cards on this most recent generation, so it’s an interesting development, and one that favors AMD given their typically higher TDPs.

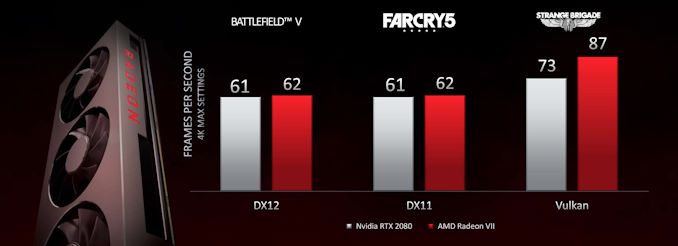

Vendor performance claims should always be taken with a grain of salt, but for the moment this is what we have. If AMD manages to reach RTX 2080 performance, then I expect this to be another case where the two cards are tied on average but are anything but equal; there will be games where AMD falls behind, games where they do tie the RTX 2080, and then even some games they pull ahead in. These scenarios are always the most interesting for reviewers, but they’re also a bit trickier for consumers since it means there’s no clear-cut winner.

All told then, the competitive landscape is going to be an interesting one. AMD’s own proposition is actually fairly modest; with a $699 price tag they’re launching at the same price as the RTX 2080, over four months after the RTX 2080. They are presumably not going to be able to match NVIDIA’s energy efficiency, and they won’t have feature parity since AMD doesn’t (yet) have its own DirectX Raytracing (DXR) implementation.

But what AMD does have, besides an at least competitive price and presumably competitive performance in today’s games, is a VRAM advantage. Whereas NVIDIA didn’t increase their VRAM amounts between generations, AMD is for this half-generation card, giving them 16GB of VRAM to RTX 2080’s 8GB. Now whether this actually translates into a performance advantage now or in the near future is another matter; AMD has tried this gambit before with the Radeon 390 series, where it didn’t really pay off. On the other hand, the fact that NVIDIA’s VRAM capacities have been stagnant for a generation means that AMD is delivering a capacity increase “on schedule” as opposed to ahead of schedule. So while far from guaranteed, it could work in AMD’s favor. Especially as, given the performance of the card, AMD intends for the Radeon VII to be all-in on 4K gaming, which will push memory consumption higher.

Finally on the gaming front, not content to compete on just performance and pricing, AMD will also be competing on gaming bundles. The Radeon VII will be launching with a 3 game bundle, featuring Resident Evil 2, Devil May Cry 5, and The Division 2. NVIDIA of course launched their own Anthem + Battlefield V bundle at the start of this week, so both sides are now employing their complete bags of tricks to attract buyers and to prop up the prices of their cards.

Speaking of pricing, perhaps the thing that surprises me the most is that we’re even at this point – with AMD releasing a Vega 20 consumer card. When they first announced Vega 20 back in 2018, they made it very clear it was going to be for the Radeon Instinct series only: the new features of the Vega 20 GPU were better suited for that market and, more importantly, as a relatively large chip (331mm²) for this early in the life of TSMC’s 7nm manufacturing node, yields were going to be poor.

So that AMD is able to sell what are admittedly defective/recovered Vega 20s in a $699 card, produce enough of them to meet market demand, and still turn a profit on all of this is a surprising outcome. I simply would not have expected AMD to get a 7nm chip out at consumer prices this soon. All I can say is that either AMD has pulled off a very interesting incident of consumer misdirection, or the competitive landscape has evolved slowly enough that Vega 20 is viable where it otherwise wouldn’t have been. Or perhaps it’s a case of both.

Shifting gears for a second, while I’ve focused on gaming thus far, it should be noted that AMD is going after the content creation market with the Radeon VII as well. This is still a Radeon card and not a Radeon Pro card, but as we’ve seen before, AMD has been able to make a successful market out of offering such cards with only a basic level of software developer support. In this case AMD is expecting performance gains similar to the gaming side, with performance improving the more a workload is pixel or memory bandwidth bound.

Wrapping things up, the Radeon VII will be hitting the streets on February 7th for $699. At this point AMD has not announced anything about board partners doing custom designs, so it looks like this is going to be a pure reference card launch. As always, stay tuned; we should know a bit more as we get closer to the video card’s launch date.

201 Comments


mode_13h - Wednesday, January 9, 2019

> there's nothing "magical" about the cores in Nvidia's RTX series that enable it - they're just optimized.

You got a source on that? I'm not aware of any public information about what's inside of their RT cores.

mode_13h - Wednesday, January 9, 2019

DLSS uses deep learning to infer what the upsampled output would look like. While I'm not aware of published information about the specific model + pre/post processing they're using, it's less magical than you make it sound.

Aside from adding integer support to their Tensor cores (which they were probably going to do anyhow), I think DLSS is more a case of them developing new software tech to utilize existing hardware engines (i.e. their Tensor cores, which they introduced in Volta).

mapesdhs - Thursday, January 10, 2019

I was surprised at how grud awful DLSS actually looks. GN's review of FFXV showed absolutely dreadful texture blurring, distance popping and even aliasing artefacts in some cases, the very thing AA tech is supposed to get rid of. It did help with hair rendering, though the effect still looked kinda dumb with the hair spikes clipping through the character's collar (gotta love that busted 4th wall).

KateH - Wednesday, January 9, 2019

maybe an edge case here, but i would. I do content creation as well as gaming; if a Radeon 7 can hit 2080 performance in DX11 and Vulkan /and/ render my videos faster I'd choose the Radeon. I downgraded from Win10 back to Win 8.1 (flame away, i am unbothered lol) and have no plans of going back up for at least a year or two so Win10-only DXR does nothing for me.

HollyDOL - Wednesday, January 9, 2019

That will be actually interesting to see - how much support these new cards can get on system that is year past end of support already... at absolutely worst case you might not be able to run a new card on that.

KateH - Wednesday, January 9, 2019

yeah :-/ I've a GTX 1070 in my gaming system right now (bought second-hand autumn 2018) and there's a distinct possibility that my only reasonable upgrade option will be a used 1080Ti. My notion is to stick with the 1070 till this winter or so when used 1080Ti prices hopefully hit ~$300, and then stick with that until DXR/Vulkan Raytracing becomes mainstream and by that time (late 2020?) i should be able to snag a used 2080Ti or whatever midrange cards are out at the time, whichever has better perf/$$.

Or I might just keep using Windows 8.1 forever like people are doing with Win7. I'll get security updates till 2023 IIRC and i don't care about bugfixes or feature upgrades as the OS has been completely stable for me for years now

Don't matter - Wednesday, January 9, 2019

Hey KateH, I signed up just to reply to you :)

I completely agree with you. As a 1070 owner that bought it last year for $300 was thinking the same thing. For us 1070 owners the only reasonable upgrade option is 1080 ti. or if you feeling fancy for twice the price RTX 2080 or Radeon 7. Wouldn't go for Titan RTX or 2080 TI even if I had the money for it, just not worth it, would rather donate to charity...

And 1080 ti would last for at least a couple more years. Since 1080 ti would be of a RTX 3070/3060 and RTX 4050 (at worst- judging by current trends) performance. Which means it's gonna be equal to a mid range GPU in around 2021-2024. and the performance would be between 1/2 and 1/3 of RTX 4080 ti. But by that time there is a possibility that a new fancy tech will arrive and RTX will mature... So it would be a worthwhile upgrade for RTX 4080/ 4070 for twice the performance boost from 1080 ti + new tech...

Can I ask you why you switched to Win 8.1 though? I don't get it...

KateH - Wednesday, January 9, 2019

do you mean why i downgraded at all, or why i chose to downgrade to Win 8.1 specifically?

I got tired of the feeling that Windows 10 is perpetually in Beta, or at least that it's perpetually a "Pre-Service Pack 1" version of Windows, from release up to 1703 when I gave up. Too many difficulties getting it to install on a wide variety of hardware. Too many driver issues (despite the fantastically wide range of drivers available automatically via Windows Update), too many instances of bizarrely high CPU usage at idle, BSODs that I couldn't troubleshoot, etc, again across a lot of hardware. And then if I got an install that worked and performed as expected, I had to deal with the weekly barrage of updates, the litany of crapware that MS bundles (and reinstalls without asking!), application incompatibility, utterly titanic install footprint (that just grows and grows and grows with each update) and so on. It's a shame, because i for the most part love the Win10 UX and appreciate its fast boot times and responsible RAM usage.

I went back to Windows 8.1 specifically because I unabashedly actually like it. It has many of the UX improvements that 10 has. I like the flat, clean GUI that feels responsive even on low-end hardware, a far cry from the bogged-down garishness of Aero on Vista and 7. I like the fast boot times- my gaming PC has a single 1TB HDD for OS and 2x 1TB RAID stripe for gamez and stripped-down 8.1 boots from pressing power button to ready at desktop in ~10 seconds, as fast as loaded-up 8.1 on my laptop's SSD (remember booting Win7 from spinning rust? Better bring a good book to read while you wait!). I like that Win 8.1 uses a tiny amount of memory- 800MB on my 8GB equipped gaming system, 200MB of which is for the Nvidia driver alone, and ~1GB on my 16GB laptop that I've loaded up with a silly amount of startup apps and utilities. The weird Start screen doesn't bother me- on my laptop I just hit the windows key and type the name of the application and on my gaming system I actually like the tile-based UI because it makes it feel more console-like.

Overall Win8.1 just feels like a stable, mature OS which is what I want. I know its features and I know they won't change. I download my weekly Windows Defender definitions and otherwise don't have to worry about updating other than once a month or so and I have full control over said updates out-of-box. 8.1 hasn't crashed for me in literally years aside from overclocking follies. It's fast. It just works. To me (and i know this is an unpopular opinion) Windows 8.1 is the ultimate Windows- most of the efficiency and UX advantages of 10 with the feeling of being in control of one's own computer that 7 had.

andrewaggb - Thursday, January 10, 2019

Windows 8.1 was my favorite version of windows and I've used all of them (server versions included) since 3.0. It was the fastest and most stable version of windows ever (for desktops). Windows 10 is less stable, slower, and more bloated.

But I use windows 10 on all my desktops now because it's the current version and works reasonably well and seems like it'll be supported forever if they don't eventually release a new version.

mode_13h - Wednesday, January 9, 2019

Content creation is actually one of the better use-cases for RTX. It doesn't require DXR - Nvidia has supported ray-tracing acceleration for content creation via a separate API (OptiX), which has been around for quite a while.