The Intel Xeon W-3175X Review: 28 Unlocked Cores, $2999

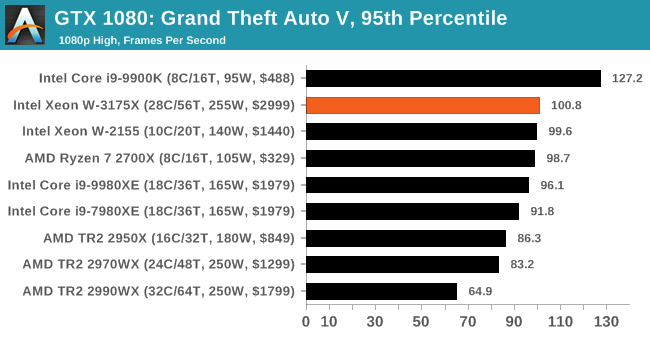

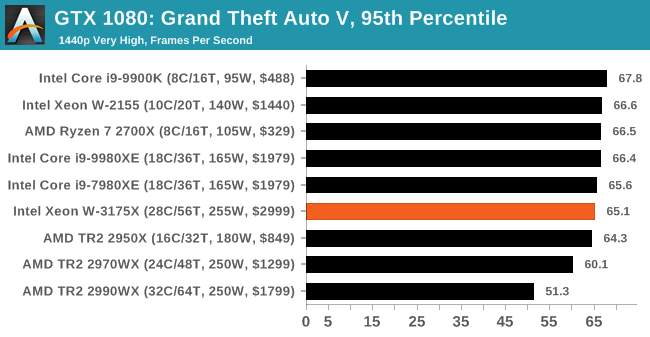

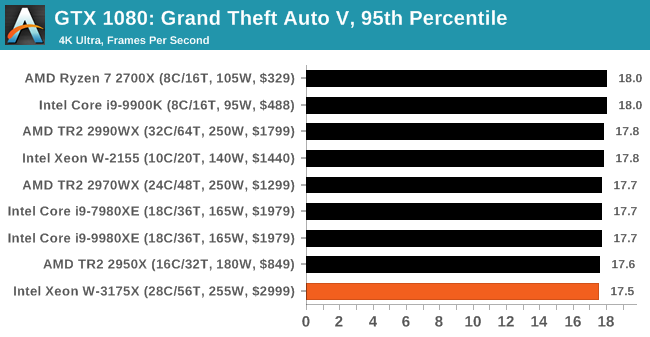

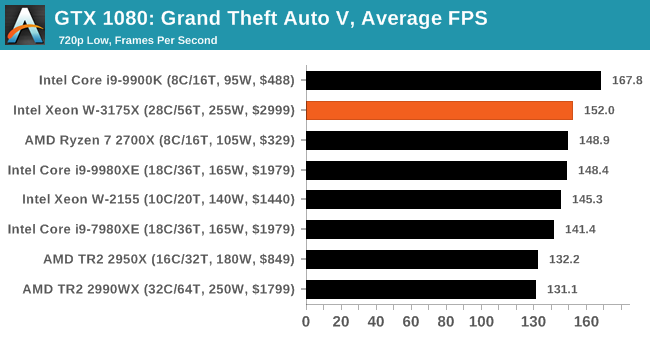

by Ian Cutress on January 30, 2019 9:00 AM EST

Gaming: Grand Theft Auto V

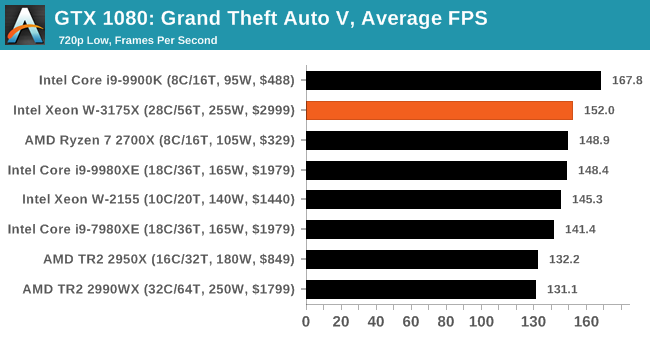

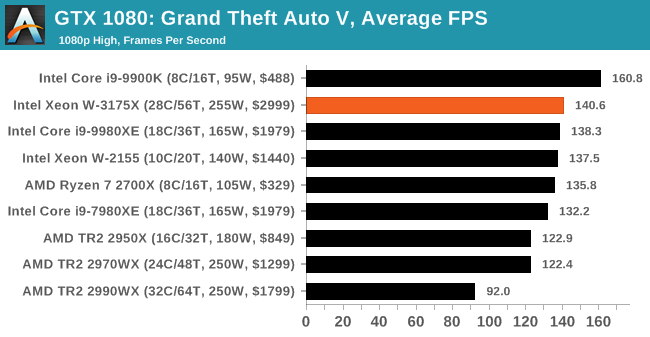

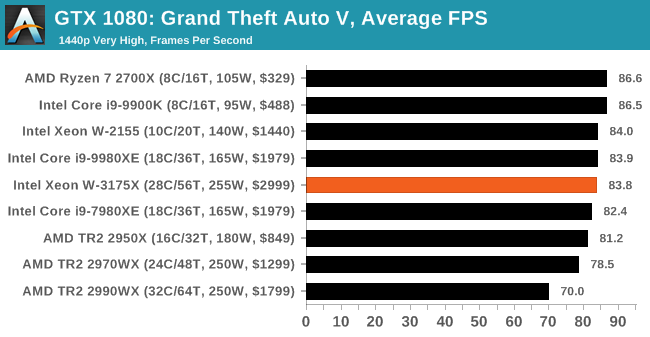

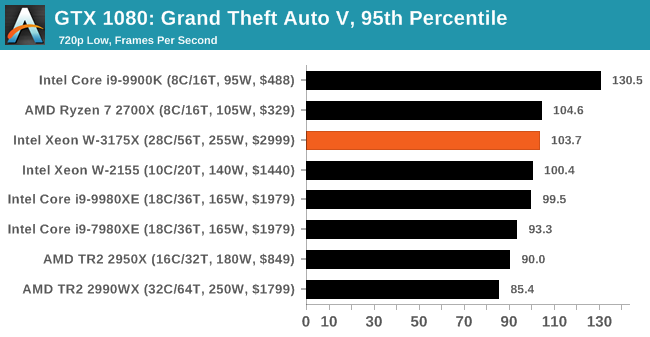

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, the game at maximum settings delivers stunning visuals but makes both the CPU and the GPU work hard.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner-city drive-by through several intersections, and finally the ramming of a tanker that explodes and causes other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.
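The text doesn't spell out how the Average FPS and 95th Percentile figures are derived from that frame time log. As a hedged sketch, assuming the log is a list of per-frame times in milliseconds and that the 95th percentile is reported as the FPS equivalent of the 95th-percentile frame time (nearest-rank method), the two numbers could be computed as below. The function names and the sample log are hypothetical, not AnandTech's actual tooling.

```python
import math
from typing import Sequence


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Average FPS over the run: total frames divided by total elapsed seconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms: Sequence[float], pct: float = 95.0) -> float:
    """FPS equivalent of the pct-th percentile frame time (nearest-rank method).

    Longer frame times mean lower FPS, so the 95th percentile frame time maps
    to a frame rate that at least 95% of frames meet or beat.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ordered = sorted(frame_times_ms)  # shortest (fastest) to longest (slowest)
    # 1-based nearest rank; multiply before dividing so whole-number cases stay exact.
    rank = max(1, math.ceil(pct * len(ordered) / 100.0))
    return 1000.0 / ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical log: 18 frames at 10 ms plus two stutters of 20 ms and 25 ms.
    times = [10.0] * 18 + [20.0, 25.0]
    print(f"Average FPS: {average_fps(times):.1f}")
    print(f"95th percentile: {percentile_fps(times):.1f} FPS")
```

Dividing total frames by total time, rather than averaging per-frame FPS values, keeps a handful of very fast frames from inflating the average; the percentile figure then exposes the stutters that the average smooths over.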

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Grand Theft Auto V | Open World | Apr 2015 | DX11 | 720p Low | 1080p High | 1440p Very High | 4K Ultra |

There are no presets for the graphics options on GTA. Some options, such as population density and distance scaling, are adjusted on sliders, while others, such as texture/shadow/shader/water quality, are set from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although nothing obvious flags the case of a low-end GPU with lots of video memory, like an R7 240 4GB).

All of our benchmark results can also be found in our benchmark engine, Bench.

[Charts: GTA V Average FPS and 95th Percentile results at IGP, Low, Medium and High settings]

136 Comments


Kevin G - Wednesday, January 30, 2019

For $3000 USD, a 28 core unlocked Xeon chip isn't terribly bad. The real issue is its incredibly low volume nature and that in effect only two motherboards are going to be supporting it. LGA 3647 is a widespread platform but the high 255W TDP keeps it isolated.

Oddly, I think Intel would have had better success if they had also simultaneously launched an unlocked 18 core part with even higher base/turbo clocks. This would have threaded the needle better in terms of per-thread performance and overall throughput. The six channel memory configuration would have helped it distinguish itself in performance from the high-end Core i9 Extreme chips.

The other aspect is that there is no clear upgrade path from the current chips: pretty much one chip per board for the lifetime of the product. There is a lot on the Xeon side Intel has planned, like on-package FPGAs, Omni-Path fabric and Nervana accelerators, which could stretch their wings with a 255 W TDP. The Xeon Gold 6138P is an example of this, as it comes with an Arria 10 FPGA inside but also a slightly reduced clock 6138 die at a 195 W TDP. At 255 W, that chip wouldn't have needed to compromise the CPU side. For the niche market Intel is targeting, an FPGA solution would be interesting if they pushed ideas like OpenCL and DirectCompute to run on the FPGA alongside the CPU. Doing something really bold like accelerating PhysX on the FPGA would have been an interesting demo of what that technology could do. Or leverage the FPGA for DSP audio effects in a full 3D environment. That'd give something for these users to look forward to.

Well, there is the opportunity to put other LGA 3647 parts into these boards, but starting off with a 28 core unlocked chip means that other offerings are a downgrade. With luck, Ice Lake-SP would be an upgrade, but Intel hasn't committed to it on LGA 3647.

Ultimately this looks like AMD's old 4x4/QuadFX efforts that'll be quickly forgotten by history.

Speaking of AMD, Intel missing the launch window by a few months places it closer to the imminent launch of new Threadripper designs leveraging Zen 2 and AMD's chiplet strategy. I wouldn't expect AMD to go beyond 32 cores for Threadripper, but the common IO die should improve performance overall on top of the Zen 2 improvements. Intel has some serious competition coming.

twtech - Wednesday, January 30, 2019

Nobody really upgrades workstation CPUs, but it sounds like getting a replacement in the event of failure could be difficult if the stock will be so limited.

If Dell and HP started offering this chip in their workstation lineup (which I don't expect to happen given the low-volume CPU production and the need for a custom motherboard), then I think it would have been a popular product.

DanNeely - Wednesday, January 30, 2019

Providing the replacement part (and thus holding back enough stock to do so) is on Dell/HP/etc. via the support contract. By the time it runs out in a few years, the people who buy this sort of prebuilt system will be upgrading to something newer and much faster anyway.

MattZN - Wednesday, January 30, 2019

I have to disagree re: upgrades. Intel has kinda programmed consumers into believing that they have to buy a whole new machine whenever they upgrade. In the old old days we actually did have to upgrade in order to get better monitor resolutions because the buses kept changing.

But in modern times that just isn't the case any more. For Intel, it turned into an excuse to get people to pay more money. We saw it in spades with offerings last year, where Intel forced people into a new socket for no reason (a number of people were actually able to get the CPU to work in the old socket with some minor hackery). I don't recall the particular CPU, but it was all over the review channels.

This has NOT been the case for Intel's commercial offerings. The Xeons have traditionally had a whole range of socket-compatible upgrade options. It's Intel's shtick, 'Scalable Xeon CPUs', for the commercial space. I've upgraded several 2S Intel Xeon systems by buying CPUs on eBay... it's an easy way to double performance on the cheap, and businesses will definitely do it if they care about their cash burn.

AMD has thrown cold water on this revenue source on the consumer side. I think consumers are finally realizing just how much money Intel has been squeezing out of them over the last decade and are kinda getting tired of it. People are happily buying new AMD CPUs to upgrade their existing rigs.

I expect that Intel will have to follow suit. Intel traditionally wanted consumers to buy whole new computers but now that CPUs offer only incremental upgrades over prior models consumers have instead just been sticking with their old box across several CPU cycles before buying a new one. If Intel wants to sell more CPUs in this new reality, they will have to offer upgradability just like AMD is. I have already upgraded two of my AM4 boxes twice just by buying a new CPU and I will probably do so again when Zen 2 comes out. If I had had to replace the entire machine it would be a non-starter. But since I only need to do a BIOS update and buy a new CPU... I'll happily pay AMD for the CPU.

Intel's W-3175X is supposed to compete against Threadripper, but while it supposedly supports ECC, I do not personally believe that the socket has any longevity, and I think it is a complete waste of money and time to buy into it versus buying into Threadripper's far more stable socket and far saner thermals. Intel took a Xeon design that is meant to run closer to the maximally efficient performance/power point on the curve and tried to turn it into a prosumer or small-business competitor to Threadripper by removing OC limits and running it hot, on an unstable socket. No thanks.

-Matt

Kevin G - Thursday, January 31, 2019

I would disagree with this. Workstations around here are being retrofitted with old server hand-me-downs from the data center as that equipment is quietly retired. Old workstations make surprisingly good developer boxes, especially considering that the cost is just moving parts from one side of the company to the other.

Though you do have a point that the major OEMs themselves are not offering upgrades.

drexnx - Wednesday, January 30, 2019

wow, I thought (and I think many people did) that this was just a vanity product, limited release, ~$10k price, totally a "just because we're chipzilla and we can" type of thing. Looks like they're somewhat serious with that $3k price.

MattZN - Wednesday, January 30, 2019

The word 'nonsensical' comes to mind. But setting aside the absurdity of pumping 500W into a socket and trying to pass it off as a usable workstation for anyone, I have to ask Anandtech... did you run with the scheduler fixes necessary to get reasonable results out of the 2990WX in the comparisons? Because it kinda looks like you didn't.

The Windows scheduler is pretty seriously broken when it comes to both the TR and EPYC chips, and I don't think Microsoft has pushed fixes for it yet. That's probably what is responsible for some of the weird results. In fact, your own article referenced Wendell's work here:

https://www.anandtech.com/show/13853/amd-comments-...

That said, of course I would still expect this insane monster of Intel's to put up better results. It's just that... it is impractical and hazardous to actually configure a machine this way and expect it to have any sort of reasonable service life.

And why would Anandtech run any game benchmarks at all? This is a 28-core Xeon... actually, it's two 14-core Xeons haphazardly pasted together (but that's another discussion). Nobody in their right mind is going to waste it by playing games that would run just as well on a 6-core cpu.

I don't actually think Intel has any intention of actually selling very many of these things. This sort of configuration is impractical with 14nm and nobody in their right mind would buy it with AMD coming out with 10nm high performance parts in 5 months (and Intel probably a bit later this year). Intel has no business putting a $3000 price tag on this monster.

-Matt

eddman - Thursday, January 31, 2019

"it's two 14-core Xeons haphazardly pasted together"

Where did you get that info? Last time I checked, each Xeon Scalable chip, be it LCC, HCC or XCC, is a monolithic die. There is no pasting together.

eddman - Thursday, January 31, 2019

Didn't you read the article? It's right there: "Now, with the W-3175X, Intel is bringing that XCC design into the hands of enthusiasts and prosumers."

Also, der8auer delidded it and confirmed it's an XCC die. https://youtu.be/aD9B-uu8At8?t=624

mr_tawan - Wednesday, January 30, 2019

I'm surprised you put the Duron 900 in the image. That made me expect test results from that CPU too!!