The Intel Xeon W-3175X Review: 28 Unlocked Cores, $2999

by Ian Cutress on January 30, 2019 9:00 AM EST

Gaming: Shadow of the Tomb Raider (DX12)

The latest instalment of the Tomb Raider franchise does less rising and lurks more in the shadows with Shadow of the Tomb Raider. As expected, this action-adventure follows Lara Croft, the main protagonist of the franchise, as she muscles through Mesoamerica and South America looking to stop a Mayan apocalypse she herself unleashed. Shadow of the Tomb Raider is the direct sequel to Rise of the Tomb Raider. It was developed by Eidos Montreal and Crystal Dynamics, published by Square Enix, and hit shelves across multiple platforms in September 2018. This title effectively closes the Lara Croft origins story and received critical acclaim upon its release.

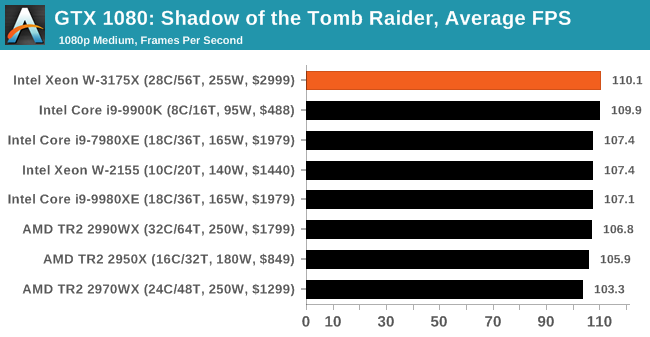

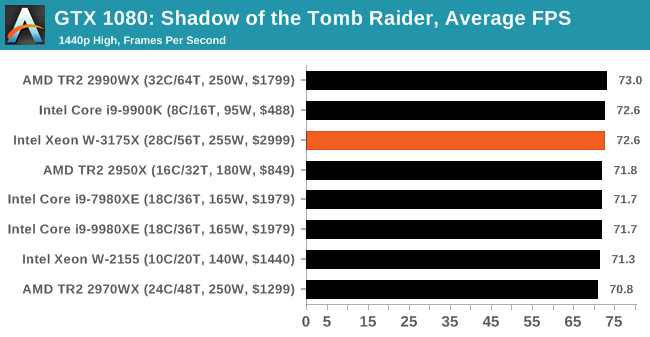

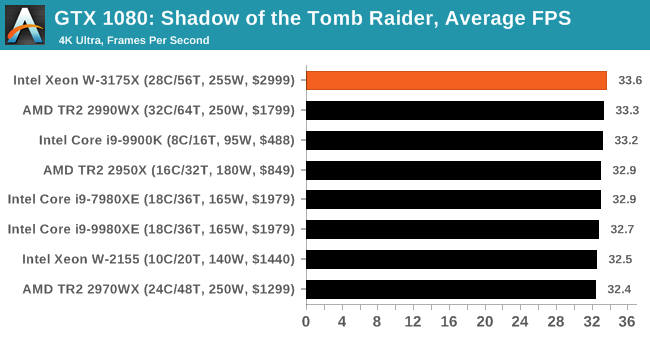

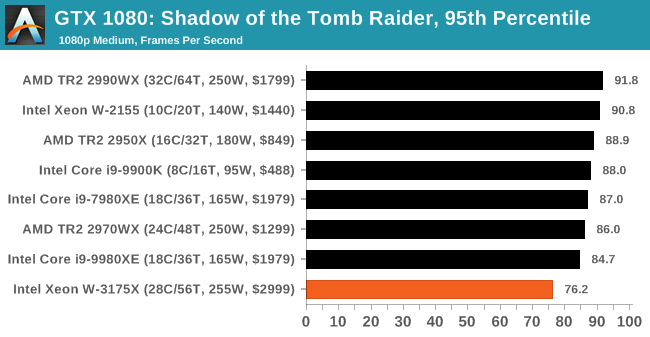

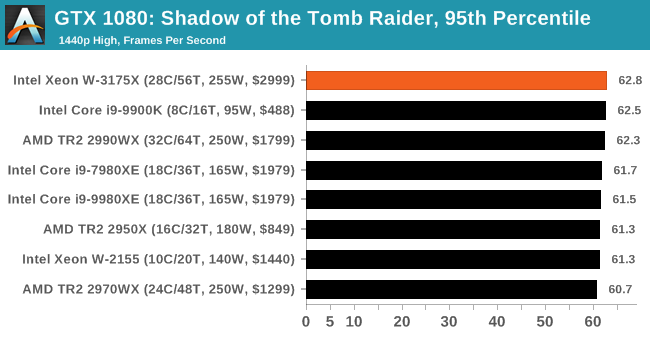

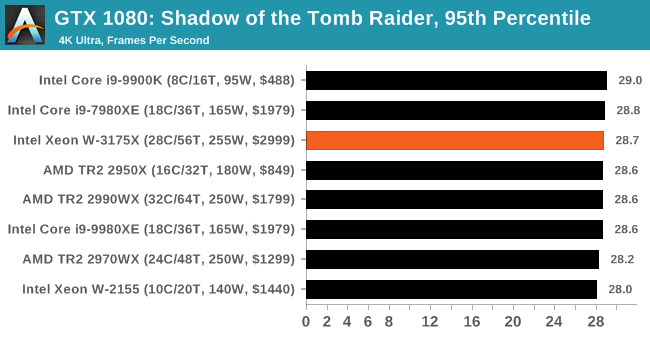

The integrated Shadow of the Tomb Raider benchmark is similar to that of the previous game Rise of the Tomb Raider, which we have used in our previous benchmarking suite. The newer Shadow of the Tomb Raider uses DirectX 11 and 12, with this particular title being touted as having one of the best implementations of DirectX 12 of any game released so far.

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Shadow of the Tomb Raider | Action | Sep 2018 | DX12 | 720p Low | 1080p Medium | 1440p High | 4K Highest |

All of our benchmark results can also be found in our benchmark engine, Bench.

| SOTR | Low | Medium | High |
|---|---|---|---|
| Average FPS | | | |
| 95th Percentile | | | |

136 Comments


SaturnusDK - Wednesday, January 30, 2019 - link

The price is the only big surprise here. At $3000 for the CPU alone and three times that in system price, it's actually pretty decently priced. The performance is as expected, but it will soon be eclipsed. The only question is what price AMD will charge for its upcoming Zen 2 based processors in the same performance bracket; we won't know until then whether the W-3175X is a worthwhile investment.

HStewart - Wednesday, January 30, 2019 - link

I thought the rumors were that this chip was going to be $8000. I am curious how the Covey version of this chip will perform and when it comes out.

But let's be honest, unless you are extremely rich or crazy, buying any processor with a large number of cores is crazy. To me it seems like the high-end gaming market is being taken for a ride with all this core war: buy a high-end chip now just to say you have the highest performance, and then next year purchase a new one. Of course there is all the ridiculous process stuff. It's just interesting to find that a 28-core beats an AMD 32-core with Skylake and 14nm on Intel.

As for the server side, I would think it is more cost effective to blade multiple lower-core-count units than fewer higher-core-count units.

jakmak - Wednesday, January 30, 2019 - link

It's not really surprising to see a 28-core Intel beating a 32-core AMD. After all, it is no mystery that the Intel chips not only have a small IPC advantage, but are also able to run at a higher clock rate (power draw notwithstanding). In this case, the Xeon W excels where these two advantages combine across 28 cores, so the four extra cores on the AMD side won't cut it.

It is also obvious that the massive advantage shows up mostly in those cases where clock rate is the most important factor.

MattZN - Wednesday, January 30, 2019 - link

Well, it depends on whether you care about power consumption or not, jakmak. Traditionally the consumer space hasn't cared so much, but it's a bit of a different story when whole-system power consumption starts reaching for the sky. And it's definitely reaching for the sky with this part.

The stock Intel part burns 312W on the Blender benchmark while the stock Threadripper 2990WX burns 190W. The OC'd Intel part burns 672W (that's right, 672W without a GPU) while the OC'd 2990WX burns 432W.

Now I don't know about you guys, but that kind of power dissipation in such a small area is not something I'm willing to put inside my house unless I'm physically there watching over it the whole time. Hell, I don't even trust my TR system's 330W consumption (at the wall) for continuous operation when some of the batches take several days to run. I run it capped at 250W.

And... I pay for the electricity I use. It's not cheap to run machines far away from their maximally efficient point on the curve. Commercial machines have lower clocks for good reason.

-Matt

joelypolly - Wednesday, January 30, 2019 - link

Do you not have a hair dryer or vacuum or oil heater? They can all push up to 1800W or more.

evolucion8 - Wednesday, January 30, 2019 - link

That is a terrible example if you ask me.

ddelrio - Wednesday, January 30, 2019 - link

lol How long do you keep your hair dryer going for?

philehidiot - Thursday, January 31, 2019 - link

Anything up to one hour. I need to look pretty for my processor.

MattZN - Wednesday, January 30, 2019 - link

Heh. That is a pretty bad example. People don't leave their hair dryers turned on 24x7, nor floor heaters (I suppose, unless it's winter). Big, big difference.

Regardless, a home user is not likely to see a large bill unless they are doing something really stupid like crypto-mining. There is a fairly large distinction between the typical home use of a computer and a beefy server like the one being reviewed here, let alone between a home user, a small business environment (such as popular YouTube tech channels), and a commercial setting.

Electricity costs depend on where you live and the time of day and can range from $0.08/kWh to $0.40/kWh or so, but let's just use an average of around $0.20/kWh.

For a gamer who is spending 4 hours a day burning 300W, the cost of operation winds up being around $7/month. Not too bad. Your average gamer isn't going to break the bank, so to speak. Mom and Dad probably won't even notice the additional cost. If you live in a cold environment, your floor heater will indeed cost more money to operate.

If you are a solo content creator you might be spending 8 to 12 hours a day in front of the computer. For the sake of argument, say you are running Blender or encoding jobs in the background. 12 hours of computer use a day @ 300W costs around $22/month.

If you are GN or Linus or some other popular YouTube channel and you are running half a dozen servers 24x7, plus workstations for employees, plus numerous batch encoding jobs on top of that, the cost will begin to become very noticeable. Now you are burning, say, 2000W 24x7 (pie in the sky rough average), costing around $290/month ($3480/year). That content needs to be making you money.
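As a rough check of the figures above, here is a minimal sketch of the arithmetic. It assumes a 30-day month and the flat $0.20/kWh rate; the function name `monthly_cost` and the scenario labels are illustrative only.

```python
def monthly_cost(watts, hours_per_day, rate_per_kwh=0.20, days=30):
    """Electricity cost in dollars for one month of use.

    Assumes a constant power draw and a flat rate, which is a
    simplification of real time-of-day pricing.
    """
    kwh = watts / 1000 * hours_per_day * days
    return kwh * rate_per_kwh


# The three scenarios described in the comment above.
scenarios = [
    ("gamer, 300W for 4 h/day", 300, 4),
    ("solo content creator, 300W for 12 h/day", 300, 12),
    ("small studio, 2000W 24x7", 2000, 24),
]

for label, watts, hours in scenarios:
    print(f"{label}: ${monthly_cost(watts, hours):.2f}/month")
```

Under these assumptions the three cases come to $7.20, $21.60 and $288.00 per month, which matches the rounded $7, $22 and $290 quoted in the thread.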

A small business or commercial setting can wind up spending a lot of money on energy if no care at all is taken with regards to power consumption. There are numerous knock-on costs, such as A/C in the summer, which has to take away all the equipment heat on top of everything else. If A/C is needed (in addition to human A/C needs), the cost is doubled. If you are renting colocation space then energy is the #1 cost and network bandwidth is the #2 cost. If you are using the cloud then everything has bloated costs (CPU, network, storage, and power).

In any case, this runs the gamut. You start to notice these things when you are the one paying the bills. So, yes, Intel is kinda playing with fire here trying to promote this monster. Gaming rigs that aren't used 24x7 can get away with high burns, but once you are no longer a kid in a room playing a game these costs can start to matter. As machine requirements grow, running the machines closer to their maximum point of efficiency (which is at far lower frequencies) begins to trump other considerations.

If that weren't enough, there is also the lifespan of the equipment to consider. A $7000 machine that remains relevant for only one year and has a $3000/year electricity bill is a big cost compared to a $3000 machine that is almost as fast and only has a $1500/year electricity bill. Or a $2000 machine. Or a $1000 machine. One has to weigh convenience of use against the total cost of ownership.
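The total-cost-of-ownership comparison can be sketched the same way. This is only an illustration: it assumes the one-year useful life mentioned above and ignores resale value, cooling, and maintenance, and the name `total_cost` is hypothetical.

```python
def total_cost(purchase_price, electricity_per_year, years=1):
    """Purchase price plus electricity over the machine's useful life."""
    return purchase_price + electricity_per_year * years


# The two machines from the comment, each kept for one year.
big_machine = total_cost(7000, 3000)
smaller_machine = total_cost(3000, 1500)

print(f"$7000 machine: ${big_machine} over one year")
print(f"$3000 machine: ${smaller_machine} over one year")
print(f"difference: ${big_machine - smaller_machine}")
```

With these inputs the larger machine costs $10000 over the year against $4500 for the smaller one, so the gap is larger than the difference in purchase price alone.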

When a person is cognizant of the costs then there is much less of an incentive to O.C. the machines, or even run them at stock. One starts to run them like real servers... at lower frequencies to hit the maximum efficiency sweet spot. Once a person begins to think in these terms, buying something like this Xeon is an obvious and egregious waste of money.

-Matt

808Hilo - Thursday, January 31, 2019 - link

Most servers run at idle speed. That is a sad fact. The sadder fact is that they have no discernible effect on business processes, because they are in fact planned and run by people in a corp who have a negative cost-to-benefit ratio. Most important apps still run on legacy mainframes or minicomputers. You know, the ones that keep the electricity flowing, planes up, ticketing running, aisles restocked, power plants from exploding, and ICBMs tracked. Only social constructivists need an overclocked server. Porn, YouTubers, traders, and data collectors come to mind. Not making much sense.