The AMD Threadripper 2 CPU Review: The 24-Core 2970WX and 12-Core 2920X Tested

by Ian Cutress on October 29, 2018 9:00 AM EST

HEDT Performance: Office Tests

The Office test suite focuses on industry standard tests that cover office workflows, system meetings, and some synthetics, and we also bundle compiler performance into this section. For users who have to evaluate hardware in general, these are usually the benchmarks that most consider.

All of our benchmark results can also be found in our benchmark engine, Bench.

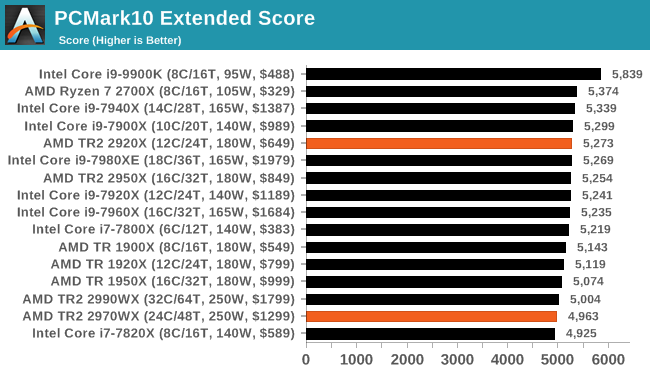

PCMark 10: Industry Standard System Profiler

Futuremark, now known as UL, has developed benchmarks that have become industry standards for around two decades. The latest complete system test suite is PCMark 10, upgrading over PCMark 8 with updated tests and more OpenCL invested into use cases such as video streaming.

PCMark splits its scores into about 14 different areas, including application startup, web, spreadsheets, photo editing, rendering, video conferencing, and physics. We post all of these numbers in our benchmark database, Bench; however, the key metric for the review is the overall score.

PCMark scores are broadly similar across almost every processor here, except for the 9900K, where the 5.0 GHz frequency really pushes the performance.

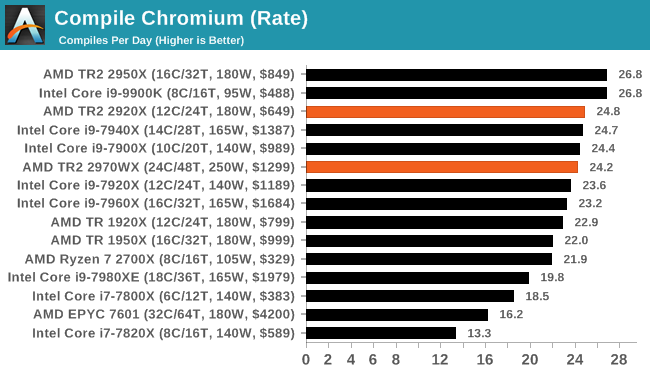

Chromium Compile: Windows VC++ Compile of Chrome 56

A large number of AnandTech readers are software engineers, looking at how the hardware they use performs. While compiling a Linux kernel is ‘standard’ for reviewers who often compile, our test is a little more varied: we use the Windows instructions to compile Chrome, specifically a Chrome 56 build from March 2017, as that was when we built the test. Google quite handily gives instructions on how to compile with Windows, along with a 400k file download for the repo.

In our test, using Google’s instructions, we use the MSVC compiler and ninja developer tools to manage the compile. As you may expect, the benchmark is variably threaded, with a mix of DRAM requirements that benefit from faster caches. Data procured in our test is the time taken for the compile, which we convert into compiles per day.
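The conversion from compile time to compiles per day is simple arithmetic, sketched below in Python. This is only an illustration: the function name and the example time are hypothetical and are not taken from our test harness or results.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds in a day


def compiles_per_day(compile_seconds: float) -> float:
    """Convert one compile's wall-clock time (seconds) into compiles per day.

    Hypothetical helper for illustration; assumes compiles run back to back
    with no idle time in between.
    """
    if compile_seconds <= 0:
        raise ValueError("compile time must be positive")
    return SECONDS_PER_DAY / compile_seconds


# Hypothetical example: a compile that takes exactly one hour.
print(compiles_per_day(3600.0))  # 24.0
```

Reporting the score this way means higher is better, which matches most of the other charts in the review.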

Our compile test is a variably threaded workload, and we can see that the 2950X and the 9900K are the best performers here. However, the 2920X at $649 and the 2700X are the best bang-for-buck performers.

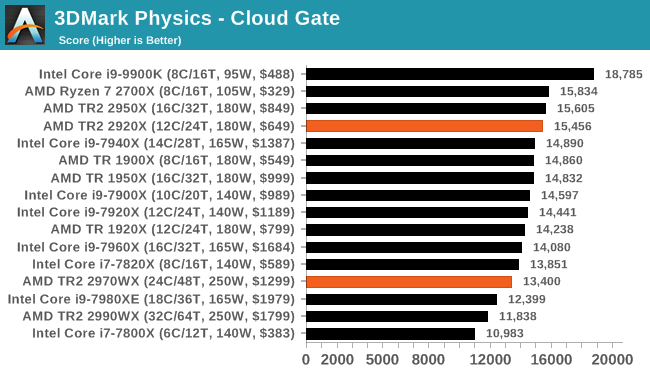

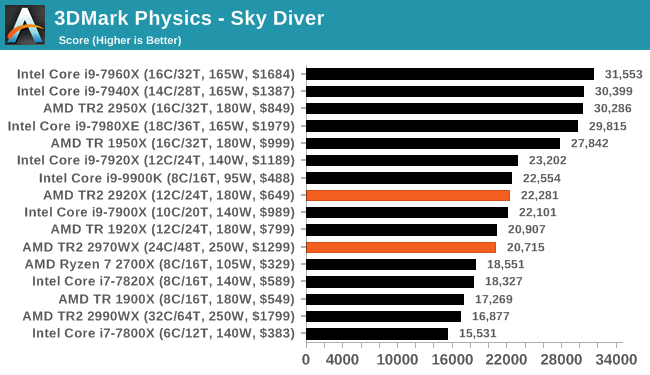

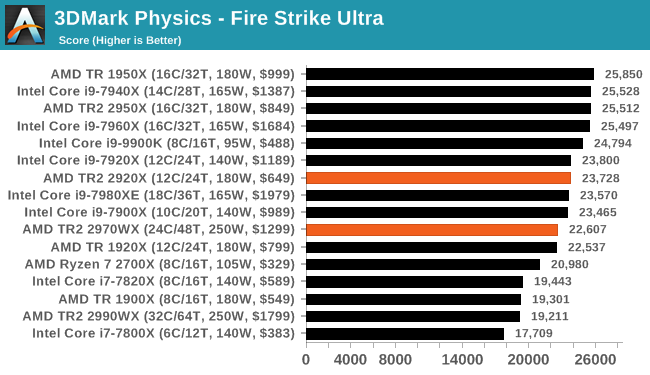

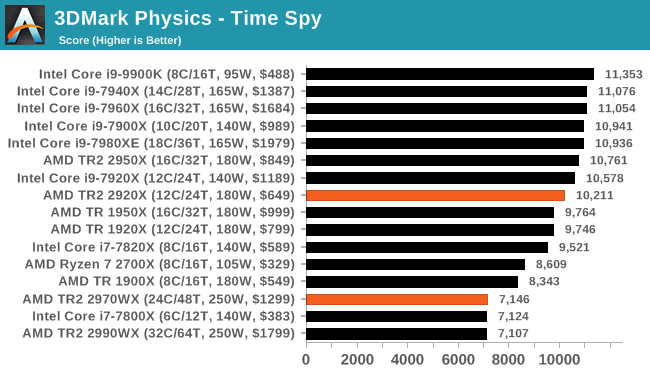

3DMark Physics: In-Game Physics Compute

Alongside PCMark is 3DMark, Futuremark’s (UL’s) gaming test suite. Each gaming test consists of one or two GPU heavy scenes, along with a physics test that is indicative of when the test was written and the platform it is aimed at. The main overriding tests, in order of complexity, are Ice Storm, Cloud Gate, Sky Diver, Fire Strike, and Time Spy.

Some of the subtests offer variants, such as Ice Storm Unlimited, which is aimed at mobile platforms with an off-screen rendering, or Fire Strike Ultra which is aimed at high-end 4K systems with lots of the added features turned on. Time Spy also currently has an AVX-512 mode (which we may be using in the future).

For our tests, we report in Bench the results from every physics test, but for the sake of the review we keep it to the most demanding of each scene: Cloud Gate, Sky Diver, Fire Strike Ultra, and Time Spy.

Graphics engines still have trouble scaling across large core counts, even in the latest tests, due to a lack of sufficient memory bandwidth. The large TR2 chips don't have the right balance of cores to memory to be able to compete.

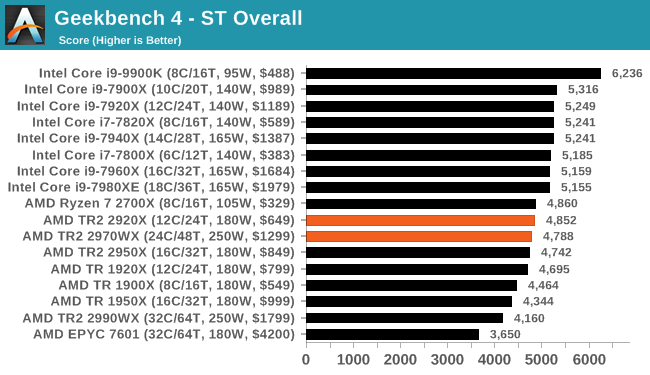

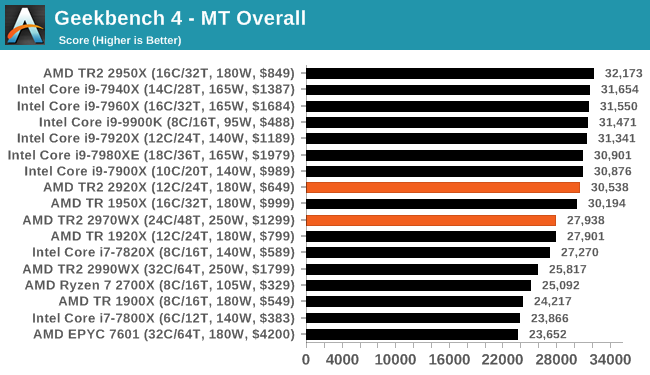

GeekBench4: Synthetics

A common tool for cross-platform testing between mobile, PC, and Mac, GeekBench 4 is an ultimate exercise in synthetic testing across a range of algorithms looking for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I’m including this test due to popular demand, although the results do come across as overly synthetic. A lot of users put a lot of weight behind the test because it is compiled across different platforms (although with different compilers).

We record the main subtest scores (Crypto, Integer, Floating Point, Memory) in our benchmark database, but for the review we post the overall single and multi-threaded results.

69 Comments


Ian Cutress - Monday, October 29, 2018 - link

EPYC 7601 is 2.2 GHz base, 3.2 GHz Turbo, at 180W, fighting against 4.2+ GHz Turbo parts at 250W. Also the memory we have to use is server ECC memory, which has worse latencies than consumer memory. I've got a few EPYC chips in, and will be testing them in due course.

mapesdhs - Monday, October 29, 2018 - link

Does the server memory for EPYC run at lower clocks as well?

GreenReaper - Wednesday, October 31, 2018 - link

ECC RAM typically runs slower, yes. It's correctness that you're looking for first and foremost, and high speeds are harder to guarantee against glitches, particularly if you're trying to calculate or transfer or compare parity at the same time.

iwod - Monday, October 29, 2018 - link

Waiting for Zen2

Boxie - Monday, October 29, 2018 - link

only Zen2? Psshh - it was announced ages ago... /me is waiting for Zen5 :P

wolfemane - Monday, October 29, 2018 - link

*nods in agreement* me too, I hear good things about Zen5. Going to be epyc!

5080 - Monday, October 29, 2018 - link

Why are there so many game tests with Threadripper? It should be clear by now that this CPU is not for gamers. I would rather see more tests with other professional software such as Autoform, Catia and other demanding apps.

DanNeely - Monday, October 29, 2018 - link

The CPU Suite is a standard set of tests for all chips Ian tests from a lowly atom, all the way up to top end Xeon/Epyc chips; not something bespoke for each article which would limit the ability to compare results from one to the next. The limited number of "pro level" applications tested is addressed in the article at the bottom of page 4.

"A side note on software packages: we have had requests for tests on software such as ANSYS, or other professional grade software. The downside of testing this software is licensing and scale. Most of these companies do not particularly care about us running tests, and state it’s not part of their goals. Others, like Agisoft, are more than willing to help. If you are involved in these software packages, the best way to see us benchmark them is to reach out. We have special versions of software for some of our tests, and if we can get something that works, and relevant to the audience, then we shouldn’t have too much difficulty adding it to the suite."

TL;DR: The vendors of the software aren't interested in helping people use their stuff for benchmarks.

Ninhalem - Monday, October 29, 2018 - link

ANSYS is terrible from a licensing standpoint even though their software is very nice for FEA. COMSOL could be a much better alternative for high-end computational software. I have found the COMSOL representatives to be much more agreeable to product testing and the support lines are much better, both in responsiveness and content help.

mapesdhs - Monday, October 29, 2018 - link

Indeed, ANSYS is expensive, and it's also rather unique in that it cares far more about memory capacity (and hence I expect bandwidth) than cores/frequency. Before x86 found its legs, an SGI/ANSYS user told me his ideal machine would be one good CPU and 1TB RAM, and that was almost 20 years ago.