The Intel 9th Gen Review: Core i9-9900K, Core i7-9700K and Core i5-9600K Tested

by Ian Cutress on October 19, 2018 9:00 AM EST - Posted in

- CPUs

- Intel

- Coffee Lake

- 14++

- Core 9th Gen

- Core-S

- i9-9900K

- i7-9700K

- i5-9600K

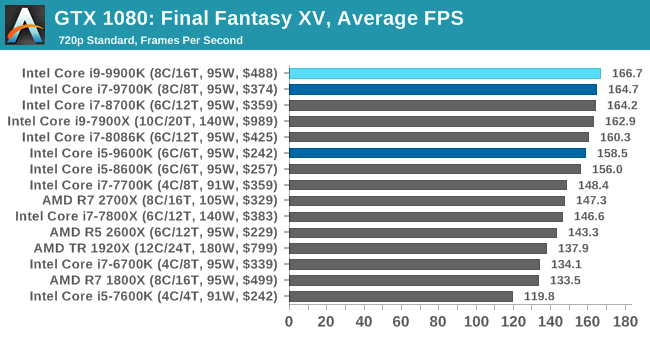

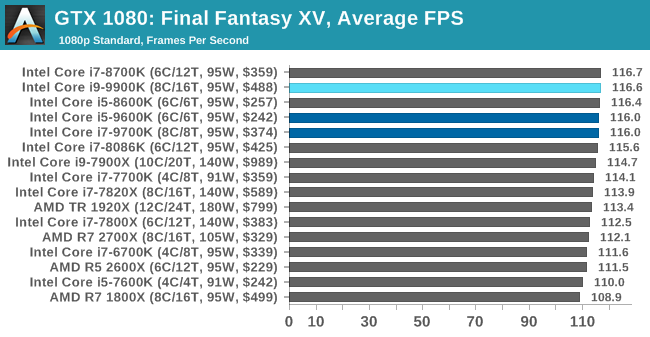

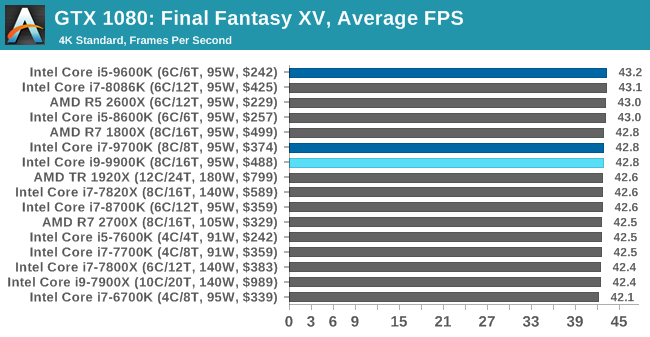

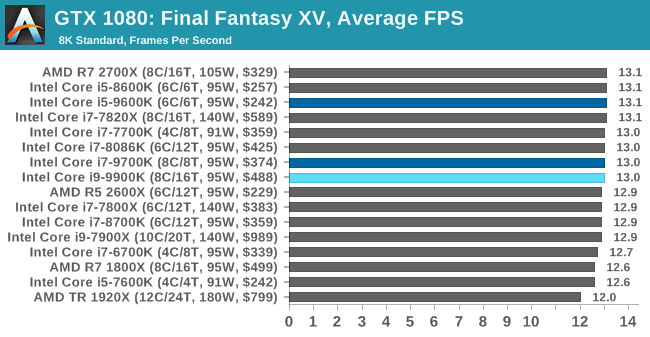

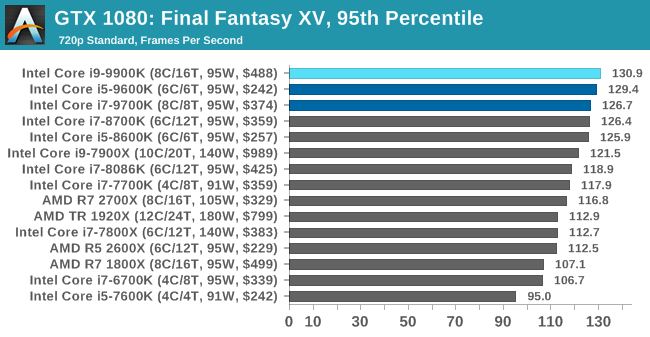

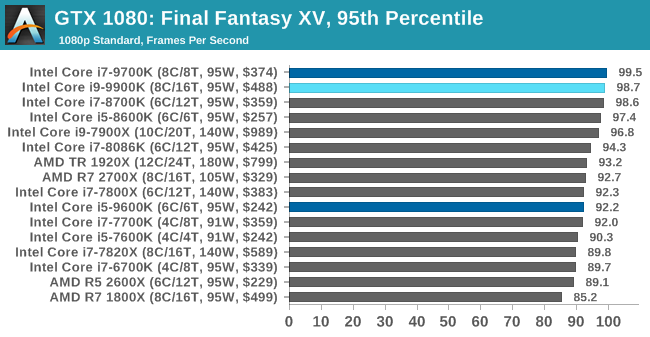

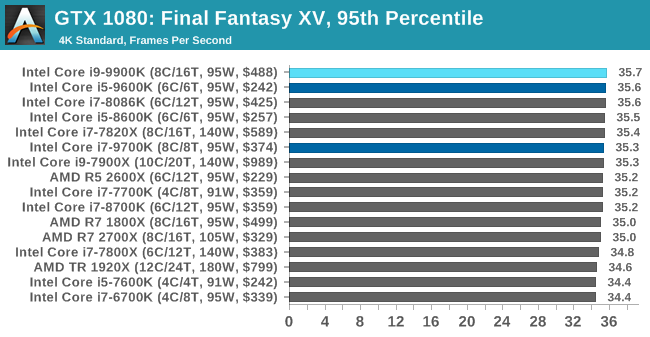

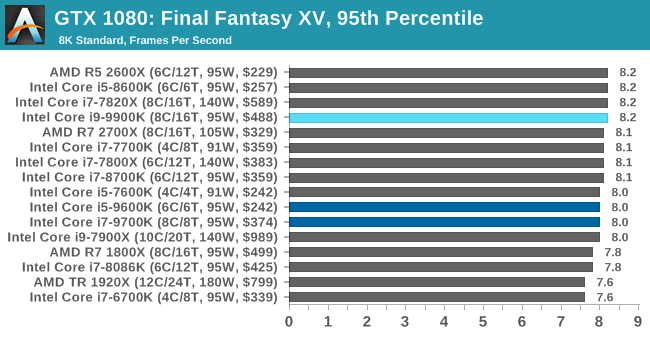

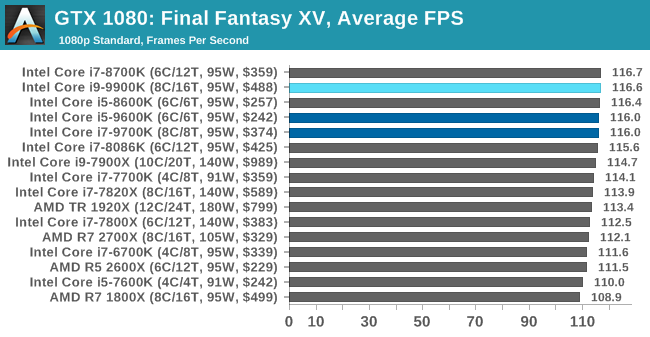

Gaming: Final Fantasy XV

Upon arriving on PC earlier this year, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruit of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to record, although it should be noted that its heavy use of NVIDIA technology means that the Maximum setting has problems: it renders items off screen. To get around this, we use the Standard preset, which does not have these issues.

Square Enix has patched the benchmark with custom graphics settings and bugfixes to be much more accurate in profiling in-game performance and graphical options. For our testing, we run the standard benchmark with a FRAPS overlay, taking a 6-minute recording of the test.

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Final Fantasy XV | JRPG | Mar 2018 | DX11 | 720p Standard | 1080p Standard | 4K Standard | 8K Standard |

All of our benchmark results can also be found in our benchmark engine, Bench.

[Charts: Final Fantasy XV, Average FPS and 95th Percentile at IGP, Low, Medium and High settings]

Unlike World of Tanks, Final Fantasy is never entirely CPU-limited at any point. Even on its Low settings, our entire collection of CPUs is within a 7% range. Only once we drop down to IGP-level settings (which are really meant more for IGP comparisons) do we tease out any kind of CPU difference. Still, in that scenario the 9900K does at least eke out a few more frames than prior Intel CPUs, with the 9700K taking second place. Past that, this is very clearly a game that is GPU-limited in almost all scenarios.
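The charts report two figures per setting, Average FPS and 95th Percentile, but the text does not spell out how they are derived from the recorded frames. As an illustration only, the Python sketch below assumes a per-frame log of frame times in milliseconds (the kind of log a frame-time capture tool produces), treats average FPS as total frames divided by elapsed seconds, and converts the 95th-percentile frame time (the threshold that only the slowest 5% of frames exceed) into a frame rate. The helper names `fps_metrics` and `spread_percent` are hypothetical, and measuring a "7% range" relative to the slowest CPU is likewise an assumed definition, not necessarily the method used in this review.

```python
import statistics


def fps_metrics(frame_times_ms):
    """Return (average_fps, p95_fps) for a list of per-frame times in milliseconds.

    Hypothetical helper. Assumed conventions: average FPS = frames / elapsed
    seconds; the 95th-percentile figure is the frame rate at the
    95th-percentile frame time (i.e. the boundary of the slowest 5% of frames).
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    elapsed_s = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / elapsed_s
    # quantiles(n=100) returns the 1st..99th percentile cut points; index 94 is the 95th.
    p95_frame_time_ms = statistics.quantiles(frame_times_ms, n=100, method="inclusive")[94]
    p95_fps = 1000.0 / p95_frame_time_ms
    return average_fps, p95_fps


def spread_percent(fps_results):
    """Gap between the fastest and slowest result, as a percentage of the slowest (assumed definition)."""
    slowest, fastest = min(fps_results), max(fps_results)
    return (fastest - slowest) / slowest * 100.0


# Hypothetical log: 90 frames at 10 ms plus 10 slow frames at 25 ms.
frames = [10.0] * 90 + [25.0] * 10
avg, p95 = fps_metrics(frames)
print(f"average {avg:.1f} fps, 95th percentile {p95:.1f} fps")
# prints: average 87.0 fps, 95th percentile 40.0 fps
```

In this made-up log the 95th-percentile figure sits far below the average, which is the kind of stutter an average alone would hide; when every CPU lands within a few percent on both metrics, as at the Low setting here, the GPU rather than the CPU is the likely limit.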

274 Comments


evernessince - Saturday, October 20, 2018

I'm sure for him money is a fixed resource; he is just really bad at managing it. You'd have to be crazy to blow money on the 9900K when the 8700K is $200 cheaper and the 2700X is half the price.

Dug - Monday, October 22, 2018

It's relative to how much you make or have. $200 isn't some life-threatening amount that makes them crazy because they spent it on a product that they will enjoy. We spend more than that going out for a weekend (and usually don't have anything to show for it). If an extra $200 is threatening to your livelihood, you shouldn't be shopping for new CPUs anyway.

close - Saturday, October 20, 2018

@ekidhardt: "I think far too much emphasis has been placed on 'value'. I simply want the fastest, most powerful CPU that isn't priced absurdly high."

That, my good man, is the very definition of value. It happens automatically when you decide to take the price into consideration. I also don't care about value, I just want a CPU with a good performance-to-price ratio. See what I did there? :)

evernessince - Saturday, October 20, 2018

A little bit extra? It's $200 more than the 8700K; that's not a little.

mapesdhs - Sunday, October 21, 2018

The key point being, for gaming, use the difference to buy a better GPU, whether one gets an 8700K or 2700X (or indeed any one of a plethora of options really, right back to an old 4930K). It's only at 1080p and high refresh rates where strong CPU performance stands out, something DX12 should help more with as time goes by (the obsession with high refresh rates is amusing given NVIDIA's focus shift back to sub-60Hz being touted once more as ok). For gaming at 1440p or higher, one can get a faster system by choosing a cheaper CPU and better GPU.

There are two exceptions: those for whom money is literally no object, and certain production workloads that still favour frequency/IPC and are not yet well optimised for more than 6 cores (Premiere is probably the best example). Someone mentioned pro tasks being irrelevant because ECC is not supported, but many solo pros can't afford Xeon-class hardware (I mean the proper dual-socket setups), even if the initial higher outlay would eventually pay for itself.

What we're going to see with the 9900K for gaming is a small minority of people taking Intel's mantra of "the best" and running with it. Technically, they're correct, but most normal people have budgets and other expenses to consider, including wives/gfs with their own cost tolerance limits. :D

If someone can genuinely afford it, then who cares; in the end it's their money. But as a choice for gaming, it really only makes sense via the same rationale if they've also bought a 2080 Ti to go with it, though even there one could retort that two used 1080 Tis would be cheaper and faster (at least for those titles where SLI is functional).

If anything good has come from this and the RTX launch, it's the move away from the supposed social benefit of having "the best"; the street cred is gone, now it just makes one look like a fool who was easily parted from his money.

Spunjji - Monday, October 22, 2018

Word.

Total Meltdowner - Sunday, October 21, 2018

This comment reads like shilling so hard. So hard. Please try harder to not be so obvious.

Spunjji - Monday, October 22, 2018

I think they placed just the right amount of emphasis on "value". Your post basically explains why it's not relevant for you in terms of you being an Intel fanboy with cash to burn. I'll elaborate.

The MSRP is in the realm of irrational spending for a huge number of people. "Rational" here means "do I get out anything like what I put in", and the answer in all metrics is an obvious no.

Following that, there are a HUGE number of reasons not to pre-order a high-end CPU, especially before proper results are out. Pre-ordering *anything* computer related is a dubious prospect, doubly so when the company selling it paid good money to paint a deceptive picture of their product's performance.

Your assertion that Intel have never launched a bad CPU is false and either ignorance or wilful bias on your part. They have launched a whole bunch of terrible CPUs, from the P3 1.2GHz that never worked, through the P4 Emergency Edition and the early "dual-core" P4 processors, all the way through to this i9 9900K, which is the thirstiest "95W" CPU I've ever seen. Their notebook CPUs are now segregated in such a way that you have to read a review to find out how they will perform, because so much is left on the table in terms of achievable turbo boost limits.

Sorry, I know I replied just to disagree, which may seem argumentative, but you posted a bunch of nonsense and half-truths passed off as common sense and/or logic. It's just bias; none of it does any harm, but you could at least be up-front that you prefer Intel. That in itself (I like Intel and am happy to spend top dollar) is a perfectly legitimate reason for everything you did. Just be open and don't actively mislead people who know less than you do.

chris.london - Friday, October 19, 2018

Hey Ryan. Thanks for the review.

Would it be possible to check power consumption in a test in which the 2700X and 9900K perform similarly (maybe in a game)? POV-Ray seems like a good way to test for maximum power draw, but it makes the 9900K look extremely inefficient (especially compared to the 9600K). It would be lovely to have another reference point.

0ldman79 - Friday, October 19, 2018

I'm legitimately surprised.

The 9900K is starving for bandwidth, or needs more cache or something. I never expected it to *not* win the CPU benchmarks vs the 9700K. I honestly expected the 9700K to be the odd one out, more expensive than the i5 and slower than the 9900K. This isn't the case. Apparently SMT isn't enabling 100% usage of the CPU's resources; it is creating a bottleneck as threads fight over resources. I'd love to see the 9900K run against its brethren with HT disabled.