The Intel 9th Gen Review: Core i9-9900K, Core i7-9700K and Core i5-9600K Tested

by Ian Cutress on October 19, 2018 9:00 AM EST. Posted in: CPUs, Intel, Coffee Lake, 14++, Core 9th Gen, Core-S, i9-9900K, i7-9700K, i5-9600K

Gaming: Ashes Classic (DX12)

Seen as the holy child of DirectX 12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many of the DirectX 12 features as it possibly could. Stardock, the developer behind the Nitrous engine that powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide-open shots and concentrated battles. With DirectX 12 at the helm, the ability to issue more draw calls per second allows the engine to handle substantial unit depth and effects that other RTS titles could only achieve by combining draw calls, which ultimately made some combined unit structures very rigid.
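The draw-call trade-off described above can be illustrated with a deliberately simplified Python model, assuming that a combined draw call carries one shared transform for every mesh in it. The `DrawCall` type and the unit names are hypothetical, and real engines have other options (instancing with per-instance data, for example), so treat this as a sketch of the trade-off rather than a description of how Nitrous or any other engine works.

```python
from dataclasses import dataclass, field

@dataclass
class DrawCall:
    """One submission to the GPU; in this simplified model every mesh
    in the call shares a single transform."""
    transform: tuple[float, float]  # (x, y) offset applied to all meshes
    meshes: list[str] = field(default_factory=list)

def per_unit_calls(units: dict[str, tuple[float, float]]) -> list[DrawCall]:
    """One draw call per unit, so each unit keeps its own transform."""
    return [DrawCall(pos, [name]) for name, pos in units.items()]

def combined_call(units: dict[str, tuple[float, float]],
                  group_pos: tuple[float, float]) -> list[DrawCall]:
    """One draw call for the whole group: fewer calls, but the group
    shares one transform and can only move as a single rigid block."""
    return [DrawCall(group_pos, list(units))]

# Hypothetical squad of three units at different positions
squad = {"tank_a": (0.0, 0.0), "tank_b": (2.5, 1.0), "tank_c": (5.0, -1.0)}
print(len(per_unit_calls(squad)))             # 3
print(len(combined_call(squad, (0.0, 0.0))))  # 1
```

The combined version cuts the call count, which mattered under older APIs with higher per-call overhead, at the cost of the per-unit freedom the paragraph describes.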

Stardock clearly understand the importance of an in-game benchmark, ensuring that such a tool was available and capable from day one; with all the additional DX12 features in use, being able to characterize how they affected the title was especially important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.
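Why a fixed seed matters can be sketched in a few lines of Python: seeding a private random number generator makes every run produce exactly the same sequence, so each CPU under test faces identical work. The `battle_script` function and its spawn events are hypothetical; how the Ashes benchmark seeds its simulation internally is not described here.

```python
import random

def battle_script(seed: int, n_events: int = 5) -> list[tuple[int, float, float]]:
    """Generate a hypothetical list of (unit_count, x, y) spawn events.

    A private Random instance seeded with a fixed value always yields the
    same sequence, so every benchmark run replays the same battle."""
    rng = random.Random(seed)
    return [(rng.randint(10, 200), rng.uniform(0, 1000), rng.uniform(0, 1000))
            for _ in range(n_events)]

run_1 = battle_script(seed=2016)
run_2 = battle_script(seed=2016)
print(run_1 == run_2)  # True: identical workload on every run
```

Using a dedicated `random.Random` instance rather than the module-level functions keeps the sequence isolated from any other code that draws random numbers.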

For our benchmark, we run Ashes Classic, an older version of the game from before the Escalation update. We use it because it is easier to automate, with no splash screen, yet it still has strong visual fidelity to test.

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Ashes: Classic | RTS | Mar 2016 | DX12 | 720p Standard | 1080p Standard | 1440p Standard | 4K Standard |

Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at the settings above, and take the frame-time output for our average and percentile numbers.
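Turning a frame-time log into average and percentile figures can be sketched in Python as follows, assuming frame times in milliseconds. The exact method used for the published numbers is not spelled out here; this sketch takes average FPS as total frames over total time and uses the nearest-rank method for the 95th percentile frame time, one common choice among several. The frame-time log is hypothetical.

```python
import math

def average_fps(frame_times_ms: list[float]) -> float:
    """Frames rendered divided by total elapsed time in seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds

def percentile_fps(frame_times_ms: list[float], pct: float = 95.0) -> float:
    """Convert the pct-th percentile frame time (nearest rank) to FPS.

    95% of frames finish at or under this frame time, so the result is a
    rate the game sustains for all but the slowest 5% of frames."""
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100.0))  # 1-based rank
    return 1000.0 / ordered[rank - 1]

# Hypothetical log: 18 frames at 10 ms and two slow 20 ms frames
frames = [10.0] * 18 + [20.0] * 2
print(round(average_fps(frames), 1))  # 90.9
print(percentile_fps(frames))         # 50.0
```

Averaging per-frame FPS values instead would give 95.0 for the same log, overstating the result because short frames count as heavily as long ones; dividing total frames by total time avoids that bias.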

All of our benchmark results can also be found in our benchmark engine, Bench.

| Ashes Classic | IGP | Low | Medium | High |
|---|---|---|---|---|
| Average FPS | | | | |
| 95th Percentile | | | | |

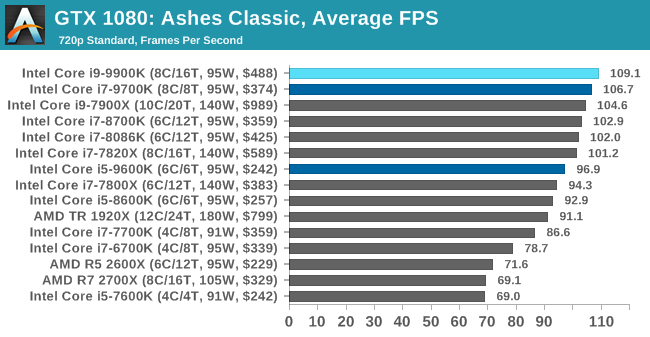

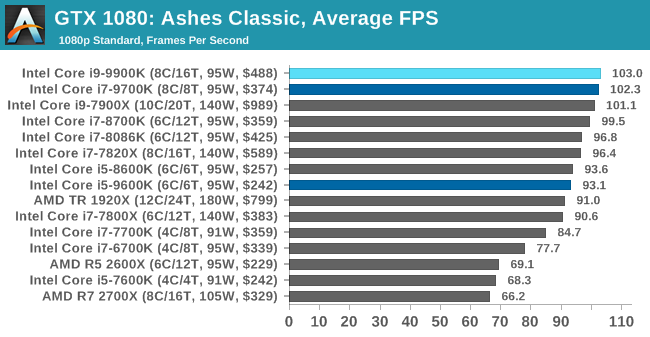

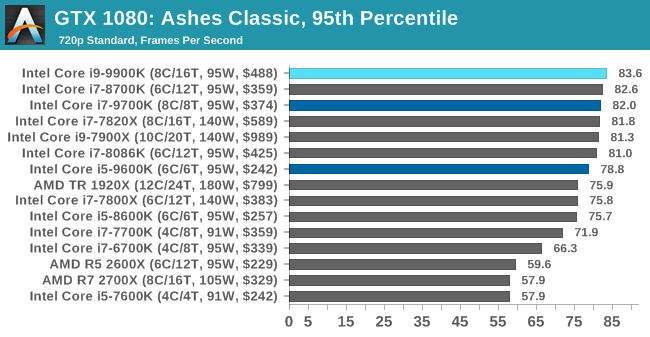

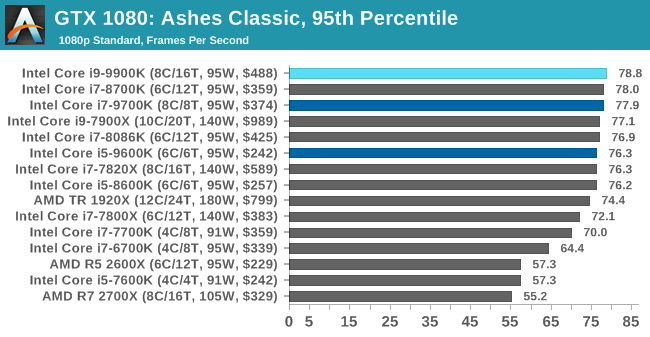

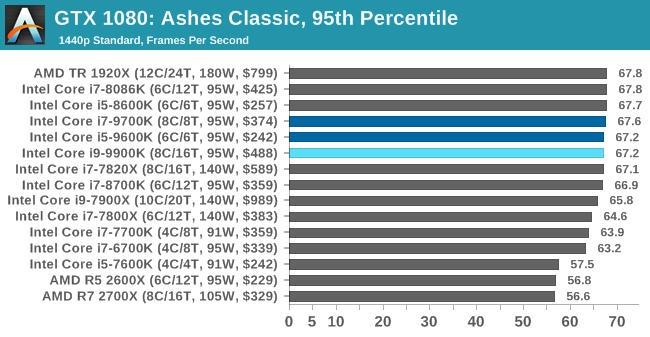

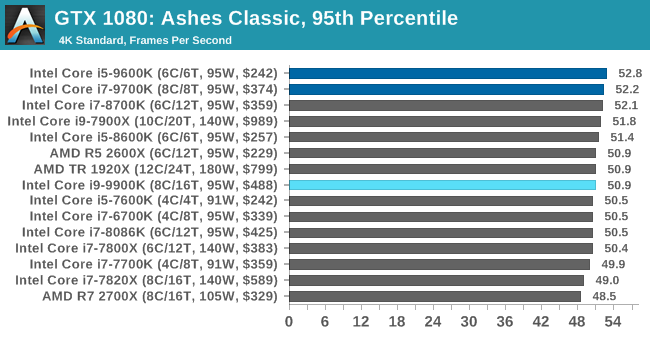

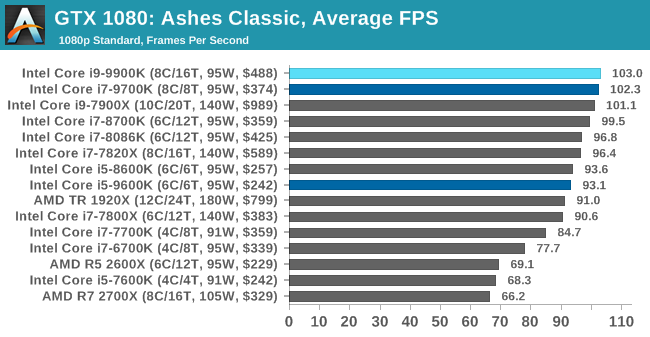

As a game that was designed from the get-go to punish CPUs and showcase the benefits of DirectX 12-style APIs, Ashes is one of our more CPU-sensitive tests. Above 1080p, results start running together due to GPU limits, but at or below that resolution we get some useful separation. Here the 9900K ekes out a small advantage, putting it in the lead with the 9700K right behind it.

Notably, the game doesn’t scale much from 1080p down to 720p, which leads me to suspect that we’re looking at a relatively pure CPU bottleneck, a rarity in modern games. That is both good and bad for Intel’s latest CPU: it’s definitely the fastest thing here, but it doesn’t do much to separate itself from the likes of the 8700K, holding just a 4% advantage at 1080p despite its frequency and core count advantages. So assuming this is not in fact a GPU limit, we may be encroaching on another bottleneck (memory bandwidth?), or the practical frequency gains on the 9900K just aren’t all that large here.

But if nothing else, the 9900K and even the 9700K do make a case for themselves here versus the 9600K. Whether it’s the extra cores or the clockspeeds, the faster processors hold a 10% advantage at 1080p.
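The reasoning in the last two paragraphs rests on two ratios: the percentage lead of one CPU over another, and how much the frame rate moves when the resolution drops. A minimal Python sketch of both follows; the 5% cutoff in `likely_cpu_bound` and any FPS values passed to it are hypothetical, not our methodology or measured results.

```python
def relative_gain(value: float, baseline: float) -> float:
    """Fractional improvement of value over baseline (0.04 means 4%)."""
    return value / baseline - 1.0

def likely_cpu_bound(fps_high_res: float, fps_low_res: float,
                     threshold: float = 0.05) -> bool:
    """Heuristic: dropping resolution cuts GPU work sharply, so if FPS rises
    by less than `threshold`, the GPU was probably not the limit and the CPU
    (or another platform bottleneck, such as memory) likely is.
    The 5% default is an arbitrary, illustrative cutoff."""
    return relative_gain(fps_low_res, fps_high_res) < threshold

# The 1080p -> 720p step removes more than half of the pixels per frame
pixels_1080p = 1920 * 1080
pixels_720p = 1280 * 720
print(pixels_1080p / pixels_720p)  # 2.25
```

With 2.25x fewer pixels to shade at 720p, a GPU-bound game should speed up noticeably; a result like `likely_cpu_bound(100.0, 102.0)` returning `True` is the pattern described above.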

274 Comments


3dGfx - Friday, October 19, 2018

game developers like to build and test on the same machine

mr_tawan - Saturday, October 20, 2018

> game developers like to build and test on the same machine

Oh I thought they use remote debugging.

12345 - Wednesday, March 27, 2019

Only thing I can think of as a gaming use for those would be to pass through a GPU each to several VMs.

close - Saturday, October 20, 2018

@Ryan, "There’s no way around it, in almost every scenario it was either top or within variance of being the best processor in every test (except Ashes at 4K). Intel has built the world’s best gaming processor (again)."

Am I reading the iGPU page wrong? The occasional 100+% handicap does not seem to be "within variance".

daxpax - Saturday, October 20, 2018

If you noticed, the 2700X is faster in half the game benchmarks, but they didn't include it.

nathanddrews - Friday, October 19, 2018

That wasn't a negative critique of the review, just the opposite in fact: from the selection of benchmarks you provided, it is EASY to see that given more GPU power, the new Intel chips will clearly outperform AMD most of the time - generally with average, but specifically minimum frames. From where I'm sitting - 3570K+1080Ti - I think I could save a lot of money by getting a 2600X/2700X OC setup and not miss out on too many fpses.

philehidiot - Friday, October 19, 2018

I think anyone with any sense (and the constraints of a budget / missus) will be stupid to buy this CPU for gaming. The sensible thing to do is to buy the AMD chip that provides 99% of the gaming performance for half the price (even better value when you factor in the mobo) and then to plough that money into a better GPU, more RAM and / or a better SSD. The savings from the CPU alone will allow you to invest a useful amount more into ALL of those areas. There are people who do need a chip like this but they are not gamers. Intel are pushing hard with both the limitations of their tech (see: stupid temperatures) and their marketing BS (see: outright lies) because they know they're currently being held by the short and curlies. My 4 year old i5 may well score within 90% of these gaming benchmarks because the limitation in gaming these days is the GPU. Sorry, Intel, wrong market to aim at.

imaheadcase - Saturday, October 20, 2018

I like how you said limitations in tech and point to temps, like any gamer cares about that. Every game wants raw performance, and the fact remains intel systems are still easier to go about it. The reason is simple, most gamers will upgrade from another intel system and use lots of parts from it that work with current generation stuff.

It's like the whole G-Sync vs non-G-Sync thing. It's a stupid argument; it's not a tax on G-Sync when you are buying the best monitor anyway.

philehidiot - Saturday, October 20, 2018

Those limitations affect overclocking and therefore available performance. Which is hardly different to much cheaper chips. You're right about upgrading though.

emn13 - Saturday, October 20, 2018

The AVX-512 numbers look suspicious. Both common sense and other examples online suggest that AVX-512 should improve performance by much less than a factor of 2. Additionally, AVX-512 causes varying amounts of frequency throttling, so you're not going to get the full factor of 2.

This suggests to me that your baseline is somehow misleading. Are you comparing AVX-512 to ancient SSE? To no vectorization at all? Something's not right there.