The Intel 9th Gen Review: Core i9-9900K, Core i7-9700K and Core i5-9600K Tested

by Ian Cutress on October 19, 2018 9:00 AM EST - Posted in

- CPUs

- Intel

- Coffee Lake

- 14++

- Core 9th Gen

- Core-S

- i9-9900K

- i7-9700K

- i5-9600K

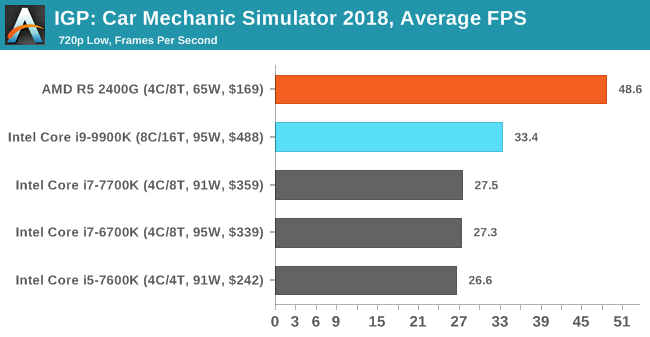

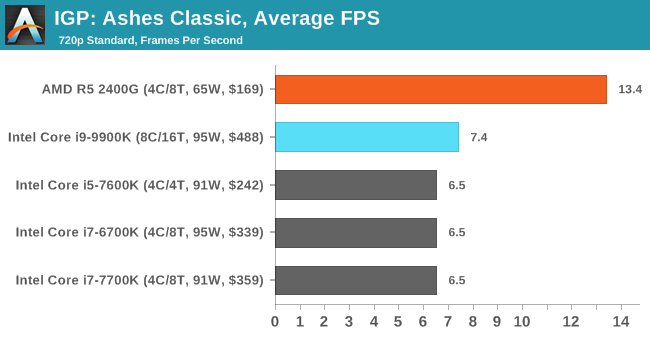

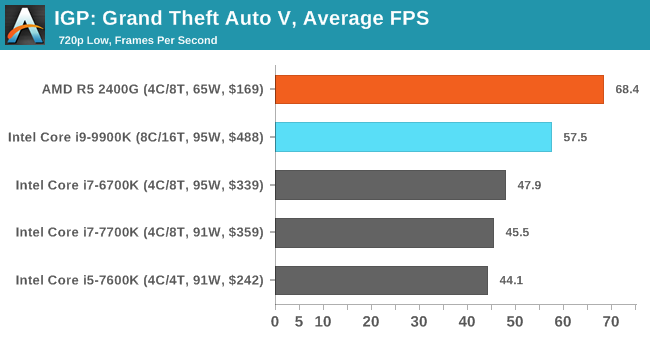

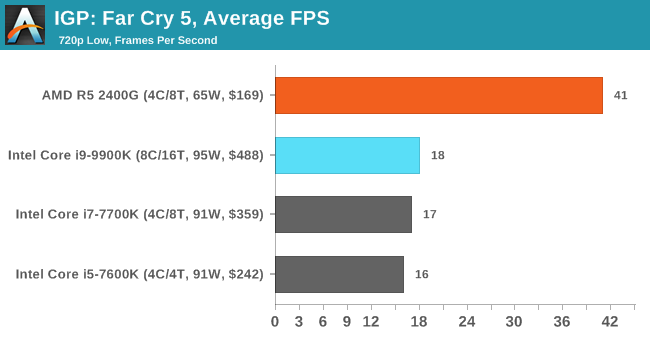

Gaming: Integrated Graphics

Despite being the ultimate joke at any bring-your-own-computer event, gaming on integrated graphics can be just as rewarding as gaming on the latest mega-rig that costs as much as a car. Demand for strong integrated graphics, in its various shapes and sizes, has waxed and waned over the years: Intel relies on its latest ‘Gen’ graphics architecture, while AMD happily puts its Vega architecture into the market to swallow up the low-end graphics card sales. With Intel poised to make a push into graphics in the next few years, it will be interesting to see how the graphics market, and integrated graphics in particular, develops.

For our integrated graphics testing, we take our ‘IGP’ category settings for each game and loop each benchmark for five minutes apiece, collecting as much data as we can from our automated setup.
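As a rough illustration of how a looped run can be reduced to the average and 95th percentile frame rates shown in these graphs, here is a minimal Python sketch. It assumes the capture produces a list of per-frame render times in milliseconds; the helper names and the nearest-rank percentile method are our own assumptions, not a description of the actual benchmark harness.

```python
import math


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples <= it."""
    if not values:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]


def summarize_frametimes(frametimes_ms):
    """Reduce one looped run's per-frame times (ms) to average and 95th percentile FPS."""
    if not frametimes_ms:
        raise ValueError("need at least one frame")
    # Average FPS = frames rendered / total elapsed seconds, not the mean of per-frame FPS.
    avg_fps = 1000.0 * len(frametimes_ms) / sum(frametimes_ms)
    # 95th percentile frame time: at most 5% of frames take longer than this.
    p95_ms = percentile_nearest_rank(frametimes_ms, 95)
    return {"avg_fps": avg_fps, "p95_fps": 1000.0 / p95_ms}


if __name__ == "__main__":
    # Hypothetical capture: 90 frames at 10 ms plus 10 stutters at 40 ms.
    run = [10.0] * 90 + [40.0] * 10
    print(summarize_frametimes(run))
```

For that hypothetical run the sketch reports a 95th percentile of 25 fps against an average of roughly 77 fps, which is why the percentile figure is the better indicator of stutter.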

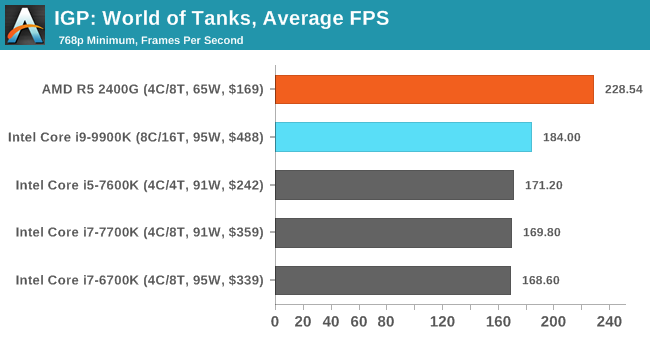

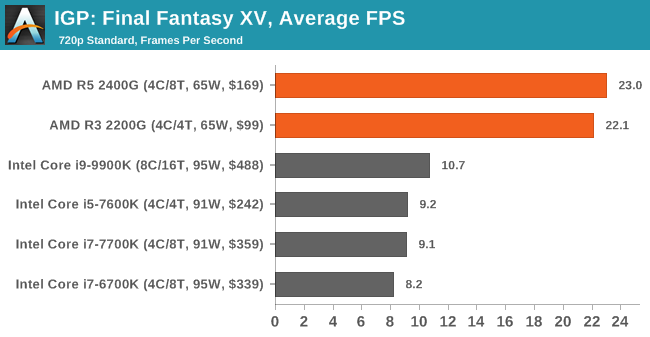

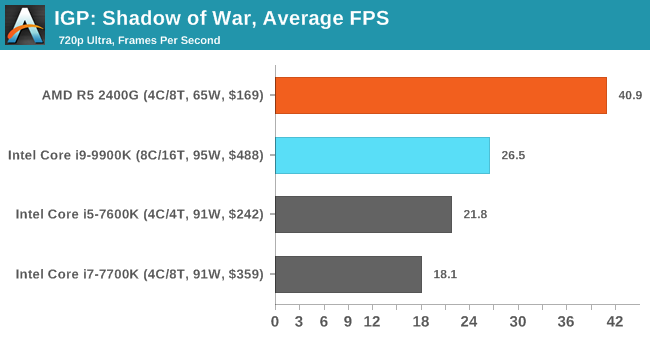

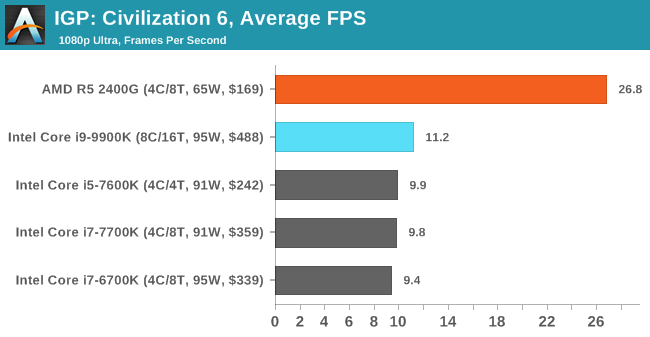

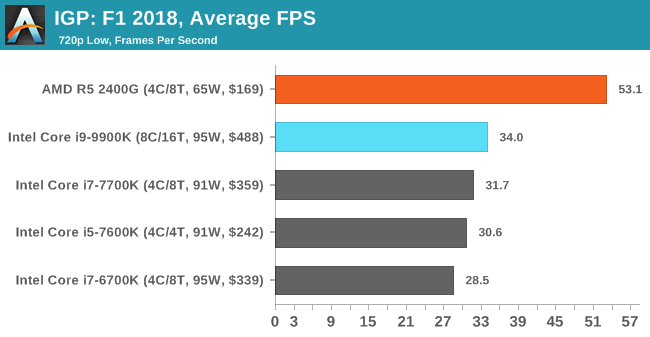

Finally, looking at integrated graphics performance, I don’t believe anyone should be surprised here. Intel has not meaningfully changed its iGPU since Kaby Lake: the microarchitecture is the same, and the peak GPU frequency has risen by all of 50MHz to 1200MHz. As a result, Intel’s iGPU results at the top of the desktop segment have been essentially stagnant for the last couple of years.
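Put in relative terms, and taking the 50MHz figure above at face value (which implies a previous peak of 1150MHz), the clock uplift works out to only about 4%:

$$
\frac{1200\,\text{MHz} - 1150\,\text{MHz}}{1150\,\text{MHz}} = \frac{50}{1150} \approx 4.3\%
$$

With the same microarchitecture, that small clock bump is roughly the ceiling on any clock-driven gain, all else being equal.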

As a result, there isn’t much new to say. Intel’s GT2 iGPU struggles even at 720p in some of these games; it’s not an incapable iGPU, but there’s sometimes a large gulf between it and the minimum GPU performance these games (which are multi-platform console ports) expect. The end result is that if you’re serious about iGPU performance in your desktop CPU, AMD’s APUs deliver much better performance. That said, if you are forced to game on the 9900K’s iGPU, at least eSports staples such as World of Tanks will run quite well.

274 Comments

leexgx - Saturday, October 20, 2018

Can you please stop your website from playing silent audio? It's very annoying, as it stops playback on my other phone (dual-connection headset).

moozooh - Sunday, October 21, 2018

To be fair, the 9900K seems like a suboptimal choice for a gaming rig despite the claims: the extra performance is marginal and comes at a very heavy price. Consider that in all the CPU-bound 95th percentile graphs (which are the only important ones in this context), even in the more CPU-intensive games, the 9700K was within 5% of the 9900K, and sometimes noticeably faster (e.g. Civ6 Low). And its overclocking potential is just *so* much better, all of this at ~3/4 the price and power consumption (and hence with more relaxed cooling requirements and lower noise). I cannot possibly envision a scenario where a rational choice, all this considered, would point to the 9900K for a gaming machine. At most 5% extra performance just isn't worth the downsides.

On a side note, I'd actually like to see how an overclocked 9700K fares against an overclocked 8700K/8086K (delidded for a fair comparison; you seem to have had at least one of those, no?) with regard to frame times and worst-case performance. For my current home PC I chose a delidded 8350K running at 4.9 GHz on 1–2 cores and at 4.7 GHz on 3–4, which I considered the optimal choice for my typical usage. The emphasis there lies on non-RTS games, general/web/office performance, emulation, demoscene and some Avisynth: basically all tasks that heavily favor per-thread performance and don't scale well with HT. In most of the gaming tests the OC 8350K showed frame times roughly on par with the twice-as-expensive 8700K at stock settings, so it made perfect sense as a mid-tier gaming CPU. It appears that the 9700K would be an optimal and safe drop-in replacement for it, as it would double the number of cores while enabling even better per-thread performance without putting too much strain on the cooler. But then again, I'd probably be better off waiting for its Ice Lake counterpart with full (?) hardware Spectre mitigation, which should result in a "free" minor performance bump if nothing else.
That's assuming it will still use the same socket, which you can never tell with Intel...
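To put the value argument above into numbers, here is a minimal Python sketch that simply takes the commenter's own estimates at face value (the 9700K at worst about 5% slower in 95th percentile frame rates, at roughly 3/4 the 9900K's price). These ratios are the comment's approximations, not measured results, and `relative_value` is a hypothetical helper.

```python
def relative_value(perf_ratio, price_ratio):
    """Performance per dollar of chip A relative to chip B.

    perf_ratio:  A's performance as a fraction of B's (0.95 means 5% slower).
    price_ratio: A's price as a fraction of B's (0.75 means three quarters the price).
    A result above 1.0 means A delivers more performance per dollar than B.
    """
    if perf_ratio <= 0 or price_ratio <= 0:
        raise ValueError("ratios must be positive")
    return perf_ratio / price_ratio


if __name__ == "__main__":
    # Commenter's estimates: 9700K at worst ~5% slower, at ~3/4 of the 9900K's price.
    worst_case = relative_value(0.95, 0.75)
    print(f"9700K vs 9900K, performance per dollar: {worst_case:.2f}x")
```

Even in that worst case, the sketch gives the 9700K about 1.27x the performance per dollar, before counting the lower power and cooling costs the comment also mentions.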

R0H1T - Sunday, October 21, 2018

Ryan & Ian, I see that the last few pages include a note saying a Z390 board was used because the Z370 board was over-volting the chip. Yet on the Overclocking page the Z370 is listed with a maximum CPU package power of 168 W? Could you list the default (auto) voltage applied by the ASRock Z370 board and, if appropriate, update the charts on the overclocking page with the Z390 results as well?

Total Meltdowner - Sunday, October 21, 2018

Ryan, you do great work. Please don't let all the haters in the comments who constantly berate you over grammar and typos get you down.

Icehawk - Saturday, October 27, 2018

Ryan, I still haven't been able to find an answer to this: what are your actual HEVC settings? I've got an 8700 at 4.5 GHz with no offset, and when I encode 1080p with "1080p60 HEVC at 3500 kbps variable bit rate, fast setting, main profile" and passthrough audio, I get ~40 fps, not the 175 you achieved. How on earth are you getting over 4x the performance??? The only way I can get remotely close is to use NVENC or Quick Sync, neither of which is acceptable to me.

phinnvr6@gmail.com - Wednesday, October 31, 2018

My question is: why would anyone recommend the 9900K over the 9700K? It's absurdly priced, draws an insane amount of power, and performs roughly identically.

DanNeely - Friday, October 19, 2018

Have any mobo makers published block diagrams for their Z390 boards? I'm wondering whether the 10Gbps USB 3.1 ports use 2 HSIO lanes each, as speculated in the mobo preview article, or whether Intel has 6 lanes that can run at 10Gbps instead of the normal 8 so that they only need one lane each.

repoman27 - Friday, October 19, 2018

They absolutely do not use 2 HSIO lanes. That was a total brain fart in the other article. The datasheet for the other 300-series chipsets is available on ARK, and the HSIO configuration of the Z390 can easily be extrapolated from that.

HSIO lanes are just external connections to differential signaling pairs that are connected internally, via muxes, to either various controllers or a PCIe switch. They're analog interfaces connected to PHYs, and they operate at whatever signaling rate and encoding scheme the selected PHY uses. There is no logic to perform any kind of channel bonding between the PCH and any connected ports or devices.
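As a toy model of the mux arrangement described above, here is a minimal Python sketch in which each HSIO lane can be switched to one of a few PHYs, runs at that PHY's rate, and is never bonded with another lane. The lane layout and the `HsioLane`/`assign_ports` names are hypothetical and not taken from any Intel datasheet; the per-lane rates are the nominal PCIe 3.0, SATA III and USB 3.1 Gen 1/Gen 2 figures.

```python
from dataclasses import dataclass

# Nominal per-lane rates (Gbps) for a few PHYs a lane might be muxed to:
# PCIe 3.0 = 8 GT/s, SATA III = 6 Gbps, USB 3.1 Gen 1 = 5 Gbps, Gen 2 = 10 Gbps.
PHY_RATES_GBPS = {"pcie3": 8.0, "sata3": 6.0, "usb3_gen1": 5.0, "usb3_gen2": 10.0}


@dataclass(eq=False)
class HsioLane:
    index: int
    options: tuple  # PHYs this lane's mux can select (hypothetical, not from a datasheet)
    selected: str = ""

    def select(self, phy):
        if phy not in self.options:
            raise ValueError(f"lane {self.index} cannot be muxed to {phy}")
        self.selected = phy

    @property
    def rate_gbps(self):
        # The lane just runs at whatever rate the selected PHY signals at.
        return PHY_RATES_GBPS[self.selected] if self.selected else 0.0


def assign_ports(lanes, requested_phys):
    """First-fit: give each requested port its own free lane whose mux supports it.

    No channel bonding is modelled, so every port consumes exactly one lane.
    Returns {port_number: lane_index}.
    """
    free = list(lanes)
    assignment = {}
    for port, phy in enumerate(requested_phys):
        lane = next((cand for cand in free if phy in cand.options), None)
        if lane is None:
            raise ValueError(f"no free lane can carry port {port} ({phy})")
        lane.select(phy)
        free.remove(lane)
        assignment[port] = lane.index
    return assignment


if __name__ == "__main__":
    # Hypothetical layout: six lanes that can carry USB 3.1 Gen 2, plus one SATA/PCIe lane.
    lanes = [HsioLane(i, ("usb3_gen2", "pcie3")) for i in range(6)]
    lanes.append(HsioLane(6, ("sata3", "pcie3")))
    print(assign_ports(lanes, ["usb3_gen2"] * 6))
    print([lane.rate_gbps for lane in lanes])
```

In this model, six 10Gbps ports occupy six lanes, one each, and the leftover lane stays free for SATA or PCIe; a 10Gbps port never needs a second lane because the rate comes from the selected PHY, not from combining lanes.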

TEAMSWITCHER - Friday, October 19, 2018

My big question: could there be an 8-core mobile part on the way?

Ryan Smith - Friday, October 19, 2018

We don't have it plotted since we haven't taken enough samples for a good graph, but CFL-R is showing a pretty steep power/frequency curve towards the tail end. That means power consumption drops by a lot just by backing off the frequency a little.

So while it's still more power-hungry than the 6-cores at the same frequencies, it's not out of the realm of possibility, though base clocks (which are TDP-guaranteed) will almost certainly have to drop to compensate.
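A textbook approximation helps illustrate why the tail of that curve is so steep: dynamic (switching) power scales roughly as P ∝ C·V²·f, and near the top of the frequency range the voltage has to climb along with the clock. The minimal Python sketch below assumes voltage scales linearly with frequency and ignores leakage power; both are simplifications, not measured CFL-R data, and `relative_dynamic_power` is a hypothetical helper.

```python
def relative_dynamic_power(freq_scale, volt_scale=None):
    """Dynamic (switching) power relative to a reference point, using P ~ C * V^2 * f.

    freq_scale: new frequency / reference frequency.
    volt_scale: new voltage / reference voltage. If omitted, voltage is assumed to
                scale linearly with frequency (a crude stand-in for the top of a V/f
                curve), which makes power scale with roughly the cube of frequency.
    Capacitance C cancels out of the ratio; static/leakage power is ignored.
    """
    if freq_scale <= 0:
        raise ValueError("freq_scale must be positive")
    if volt_scale is None:
        volt_scale = freq_scale
    return volt_scale ** 2 * freq_scale


if __name__ == "__main__":
    for scale in (1.00, 0.95, 0.90):
        print(f"{scale:.0%} clock -> {relative_dynamic_power(scale):.0%} dynamic power")
```

Under those assumptions, a 10% lower clock needs only about 73% of the dynamic power, whereas lowering the frequency alone at a fixed voltage (`volt_scale=1.0`) would save just 10%.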