The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Power, Temperature, and Noise

With a larger chip, more transistors, and more frames, the questions always turn to efficiency: how the card fares on overall power consumption, on the thermal limits of the default ‘coolers’, and on fan noise under load. Users buying these cards are going to be expected to push some pixels, which will have knock-on effects inside a case. For our testing, we run the cards inside a case to get the best real-world results for these metrics.

Power

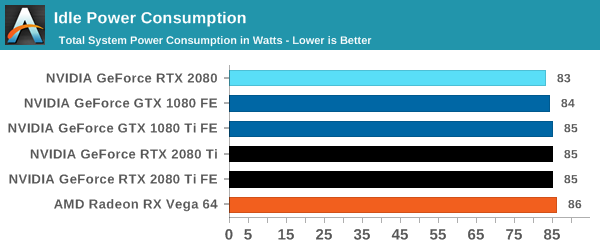

All of our graphics cards pivot around the 83-86W level when idle, though they noticeably fall into groups: the 2080 is below the 1080, the 2080 Ti sits above the 1080 Ti, and the Vega 64 consumes the most.

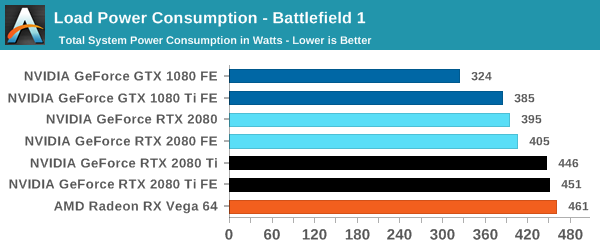

When we crank up a real-world title, all the RTX 20-series cards draw more power. The 2080 consumes 10W more than the previous generation flagship, the 1080 Ti, and the new 2080 Ti flagship draws another 50W of system power beyond that. Still not as much as the Vega 64, however.

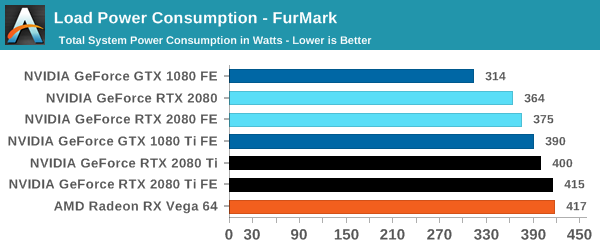

For a synthetic like Furmark, the RTX 2080 consumes less than the GTX 1080 Ti, although the GTX 1080 draws some 50W less still. The margin between the RTX 2080 FE and RTX 2080 Ti FE is some 40W, which is in line with the official TDP difference. At the top end, the RTX 2080 Ti FE and RX Vega 64 consume equal power, but the RTX 2080 Ti FE is pushing through more work.

For power, the overall differences are quite clear: the RTX 2080 Ti is a step up from the RTX 2080, while the RTX 2080 itself is similar to the previous generation 1080/1080 Ti.

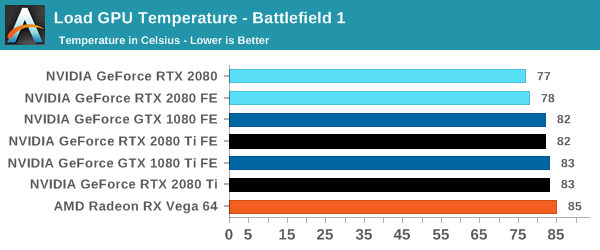

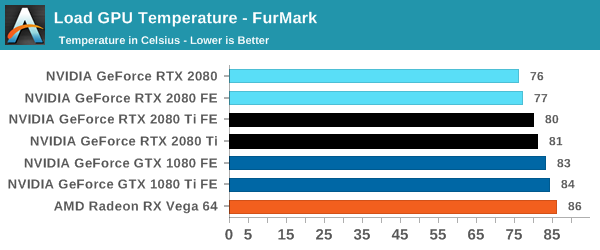

Temperature

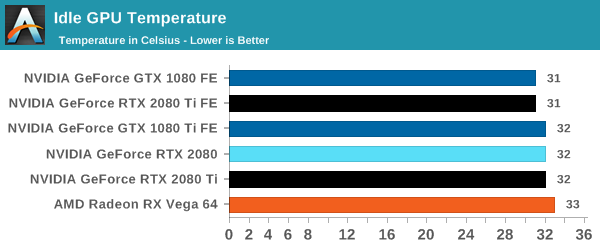

Straight off the bat, moving from the blower cooler to the dual fan coolers, we see that the RTX 2080 holds its temperature a lot better than the previous generation GTX 1080 and GTX 1080 Ti.

In every load scenario, the RTX 2080 is several degrees cooler than both the previous generation and the RTX 2080 Ti. The 2080 Ti fares well in Furmark, coming in at a lower temperature than the 10-series, but trades blows in Battlefield. This is a win for the dual fan cooler, rather than the blower.

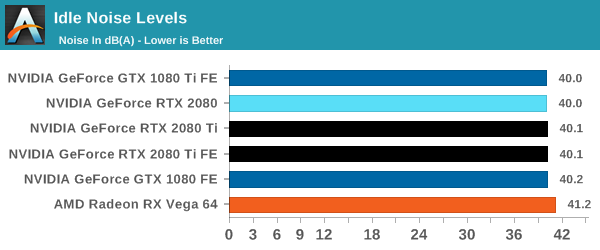

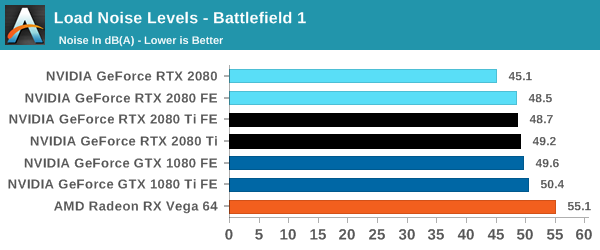

Noise

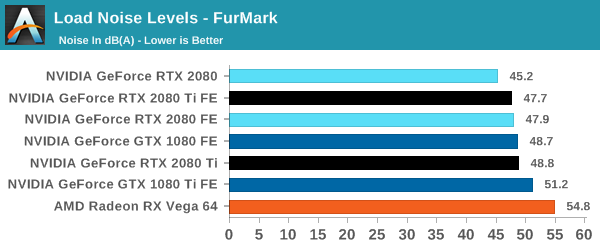

As with temperature, the noise profile of two larger fans rather than a single blower means that the new RTX cards can be quieter than the previous generation: the RTX 2080 wins here, showing that it can be 3-5 dB(A) quieter than the 10-series while performing similarly. The added power needed for the RTX 2080 Ti means that it is still competing against the GTX 1080, but it always beats the GTX 1080 Ti by comparison.
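To put the 3-5 dB(A) figure in perspective, here is a minimal sketch of the standard decibel arithmetic. It is not part of the review's methodology, and the function names are hypothetical, chosen for illustration only. It converts a level difference into the corresponding sound-power and sound-pressure ratios; perceived loudness does not scale linearly with either, so treat the output as a rough guide rather than a statement about how much quieter the cards sound.

```python
def power_ratio(delta_db: float) -> float:
    """Sound-power ratio for a level difference in decibels (10*log10 rule)."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db: float) -> float:
    """Sound-pressure ratio for the same difference (20*log10 rule)."""
    return 10 ** (delta_db / 20)


if __name__ == "__main__":
    # The 3 and 5 dB(A) endpoints come from the text above.
    for delta in (3.0, 5.0):
        print(
            f"{delta:.0f} dB quieter: "
            f"{1 / power_ratio(delta):.2f}x the sound power, "
            f"{1 / pressure_ratio(delta):.2f}x the sound pressure"
        )
```

By this arithmetic, a card that is 3 dB(A) quieter emits roughly half the sound power, and one that is 5 dB(A) quieter emits roughly a third.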

337 Comments

mapesdhs - Thursday, September 27, 2018 - link

It also glosses over the huge pricing differences and the fact that most gamers buy AIB models, not reference cards.

noone2 - Thursday, September 20, 2018 - link

Not sure why people are so negative about these and the prices. Sell your old card and amortize the cost over how long you'll keep the new one. So maybe $400/year (less if you keep it longer).

If you're a serious gamer, are you really not willing to spend a few hundred dollars per year on your hardware? I mean, the performance is there and it's somewhat future proofed (assuming things take off for RT and DLSS.)

A bowling league (they still have those?) probably costs more per year than this card. If you only play Minecraft I guess you don't need it, but if you want the highest setting in the newest games and potentially the new technology, then I think it's worth it.

milkod2001 - Friday, September 21, 2018 - link

Performance is not there. Around 20% actual performance boost is not very convincing especially due much higher price. How can you be positive about it?

Future tech promise doesn't add that much and it is not clear if game developers will bother.

When one spend $1000 of GPU it has to deliver perfect 4k all maxed gaming and NV charges ever more. This is a joke, NV is just testing how much they can squeeze of us until we simply don't buy.

noone2 - Friday, September 21, 2018 - link

The article clearly says that the Ti is 32% better on average.

The idea about future tech is you either do it and early adopters pay for it in hopes it catches on, or you never do it and nothing ever improves. Game developers don't really create technology and then ask hardware producers to support it/figure out how to do it. Dice didn't knock on Nvidia's door and pay them to figure out how to do ray tracing in real time.

My point remains though: If this is a favorite hobby/pass-time, then it's a modest price to pay for what will be hundreds of hours of entertainment and the potential that ray tracing and DLSS and whatever else catches on and you get to experience it sooner rather than later. You're saying this card is too expensive, yet I can find console players who think a $600 video game is too expensive too. Different strokes for different folks. $1100 is not terrible value. You talking hundreds of dollars here, not 10s of thousands of dollars. It's drop in the bucket in the scope of life.

mapesdhs - Thursday, September 27, 2018 - link

So Just Buy It then? Do you work for toms? :D

TheJian - Thursday, September 20, 2018 - link

"Ultimately, gamers can't be blamed for wanting to game with their cards, and on that level they will have to think long and hard about paying extra to buy graphics hardware that is priced extra with features that aren't yet applicable to real-world gaming, and yet only provides performance comparable to previous generation video cards."

So, I guess I can't read charts, because I thought they said 2080ti was massively faster than anything before it. We also KNOW devs will take 40-100% perf improvements seriously (already said such, and NV has 25 games being worked on now coming soon with support for their tech) and will support NV's new tech since they sell massively more cards than AMD.

Even the 2080 vs. 1080 is a great story at 4k as the cards part by quite a margin in most stuff.

IE, battlefield 1, 4k test 2080fe scores 78.9 vs. 56.4 for 1080fe. That's a pretty big win to scoff at calling it comparable is misleading at best correct? Far Cry 5 same story, 57 2080fe, vs. 42 for 1080fe. Again, pretty massive gain for $100. Ashes, 74 to 61fps (2080fe vs. 1080fe). Wolf2 100fps for 2080fe, vs. 60 for 1080fe...LOL. Well, 40% is, uh, "comparable perf"...ROFL. OK, I could go on but whatever dude. Would I buy one if I had a 1080ti, probably not unless I had cash to burn, but for many that usually do buy these things, they just laughed at $100 premiums...ROFL.

Never mind what these cards are doing to the AMD lineup. No reason to lower cards, I'd plop them on top of the old ones too, since they are the only competition. When you're competing with yourself you just do HEDT like stuff, rather than shoving down the old lines. Stack on top for more margin and profits!

$100 for future tech and a modest victory in everything or quite a bit more in some things, seems like a good deal to me for a chip we know is expensive to make (even the small one is Titan size).

Oh, I don't count that fold@home crap, synthetic junk as actual benchmarks because you gain nothing from doing it but a high electric bill (and a hot room). If you can't make money from it, or play it for fun (game), it isn't worth benchmarking something that means nothing. How fast can you spit in the wind 100 times. Umm, who cares. Right. Same story with synthetics.

mapesdhs - Thursday, September 27, 2018 - link

It's future tech that cannot deliver *now*, so what's the point? The performance just isn't there, and it's a pretty poor implementation of what they're boasting about anyway (I thought the demos looked generally awful, though visual realism is less something I care about now anyway, games need to better in other ways). Fact is, the 2080 is quite a bit more expensive than a new 1080 Ti for a card with less RAM and no guarantee these supposed fancy features are going to go anywhere anyway. The 2080 Ti is even worse; it has the speed in some cases, but the price completely spoils the picture, where I am the 2080 Ti is twice the cost of a 1080 Ti, with no VRAM increase either.

NVIDIA spent the last 5 years pushing gamers into high frequency displays, 4K and VR. Now they're trying to do a total about face. It won't work.

lenghui - Thursday, September 20, 2018 - link

Thanks for rushing the review out. BTW, the auto-play video on every AT page has got to stop. You are turning into Tom's Hardware.

milkod2001 - Friday, September 21, 2018 - link

They are both owned by Purch. Marketing company responsible for those annoying auto play videos and the lowest crap possible From the web section. They go with motto: Ad clicks over anything. Don't think it will change anytime soon. Anand sold his soul twice to Apple and also his web to Purch.

mapesdhs - Thursday, September 27, 2018 - link

One can use Ublock Origin to prevent those jw-player vids.