The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST. Posted in GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Final Fantasy XV (DX11)

Upon arriving on PC earlier this year, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruit of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to capture with OCAT. Upon release, the standalone benchmark received criticism for performance issues and general bugginess, as well as confusing graphical presets and performance measurement by 'score'. In its original iteration, the graphical settings could not be adjusted, leaving the user with presets that were tied to resolution and to hidden settings such as GameWorks features.

Since then, Square Enix has patched the benchmark with custom graphics settings and bugfixes so that it profiles in-game performance and graphical options much more accurately, though the 'score' measurement remains. For our testing, we enable or adjust settings to the highest except for NVIDIA-specific features and 'Model LOD', the latter of which is left at standard. Final Fantasy XV also supports HDR, and it will support DLSS at a later date.
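For readers who want to reduce their own frame-time captures to the two figures charted for this benchmark, the following is a minimal sketch of one common approach, not necessarily the exact method used for this review. It assumes the per-frame times have already been extracted from a capture log as a list of milliseconds; the function name `summarize_frame_times` and the nearest-rank percentile rule are illustrative choices rather than anything defined by OCAT or the benchmark.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, percentile_99_fps) for frame times given in milliseconds.

    Hypothetical helper for illustration; the nearest-rank percentile rule
    used here is an assumption, and other tools may interpolate instead.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: number of frames divided by total elapsed time in seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # 99th percentile frame time (nearest rank): the frame time that 99% of
    # frames meet or beat. The rank is ceil(0.99 * n), computed with integers
    # to avoid floating-point rounding.
    ordered = sorted(frame_times_ms)
    rank = (99 * len(ordered) + 99) // 100
    p99_ms = ordered[rank - 1]

    # Express the percentile as a frame rate so it is comparable to the average.
    return average_fps, 1000.0 / p99_ms
```

Expressing the 99th percentile as a frame rate keeps it on the same axis as the average, so a large gap between the two numbers points to inconsistent frame delivery rather than low throughput overall.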

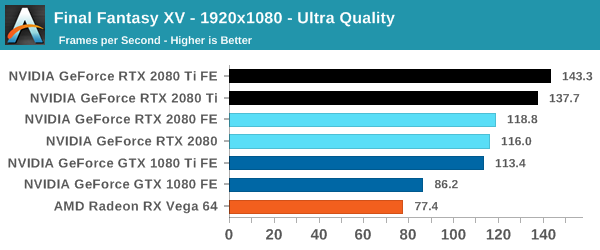

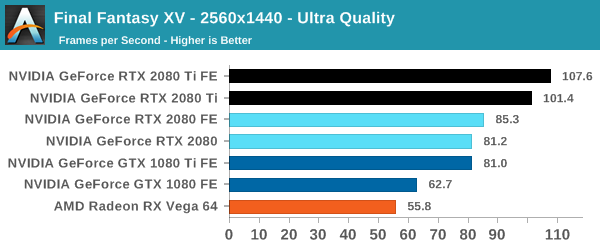

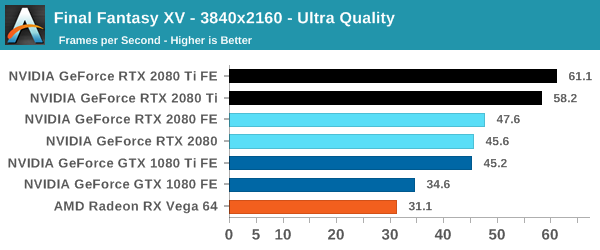

| Final Fantasy XV | 1920x1080 | 2560x1440 | 3840x2160 |

| Average FPS |  |

|

|

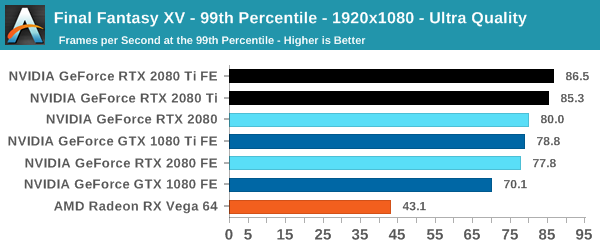

| 99th Percentile |  |

|

|

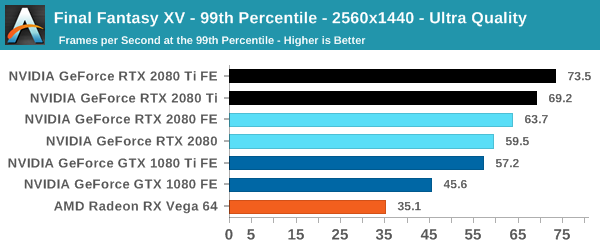

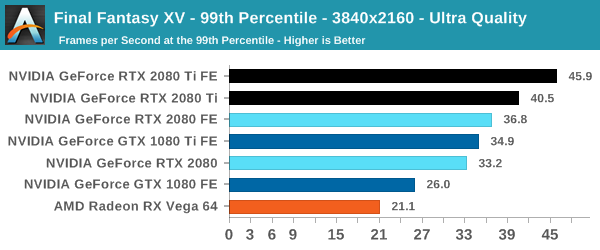

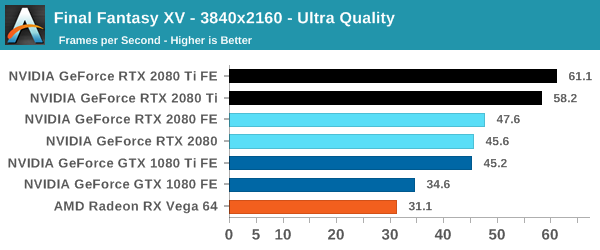

NVIDIA, of course, is working closely with Square Enix, and the game is naturally expected to run well on NVIDIA cards in general, but the 1080 Ti truly lives up to its gaming flagship reputation in matching the RTX 2080. In Final Fantasy XV, the Founders Edition's power and clocks again prove highly useful, letting the 2080 FE pip the 1080 Ti, while the 2080 Ti FE makes it across the psychological 60fps mark at 4K.

337 Comments


AnnoyedGrunt - Friday, September 21, 2018 - link

I think it was actually much less, judging by comments made in one of the reviews I linked. Maybe around $350 or so, which was very expensive at the time. It is true that it was a revolutionary card, but at the same time it was greeted with a lukewarm reception from the gaming community. Much like the 20XX series. I doubt that the 20XX will seem as revolutionary in hindsight as the GeForce256 did, but the initial reception does seem similar between the two. Will be interesting to see what the next year brings to the table.

-AG

eddman - Friday, September 21, 2018 - link

Wow, that's just $525 now. I'm interested in old card prices because some people claim they have always been super expensive. It seems they have a selective memory. I'm yet to find a card more expensive than 2080 Ti from that time period.

I'm not surprised that people still didn't buy many 256 cards. The previous cards were cheaper and performed close enough for the time.

abufrejoval - Thursday, September 20, 2018 - link

I am pretty sure I'll get a 2080ti, simply because nothing else will run INT4 or INT8 based inference with similar performance and ease of availability and tools support. Sure, when you are BAIDU or Facebook, you can buy even faster inference hardware or if you are Google you can build your own. But if you are not, I don't know where you'll get something that comes close.

As far as gaming is concerned, my 1080ti falls short on 4k with ARK, which is noticeable at 43". If the 2080ti can get me through the critical minimum of 30FPS, it will have been worth it.

As far as ray tracing is concerned, I am less concerned about its support in games: Photo realism isn't an absolute necessity for game immersion.

But I'd love to see hybrid render support in software like Blender: The ability to pimp up the quality for video content creation and replace CPU based render farms with something that is visually "awesome enough" points towards the real "game changing" capacity of this generation.

It pushes three distinct envelopes, raster, compute and render: If you only care about one, the value may not be there. In my case, I like the ability to explore all three, while getting an 2080ti for me allows me to push down an 1070 to one of my kids still running an R290X: Christmas for both of us!

mapesdhs - Thursday, September 27, 2018 - link

In the end though that's kinda the point, these are not gaming cards anymore and haven't been for some time. These are side spins from compute, where the real money & growth lie. We don't *need* raytracing for gaming, that glosses over so many other far more relevant issues about what makes for a good game.

Pyrostemplar - Thursday, September 20, 2018 - link

High performance and (more than) matching price. nVidia seemingly put the card classification down one notch (x80 => x70; Ti => x80; Titan => Ti) while keeping the prices and overclocked them from day one so it looks like solid progress if one disregards the price.

I think it will be a short lived (1 year or so) generation. A pricey stop gap with a few useless new features (because when devs catch up and actually deploy DXR enabled games, these cards will have been replaced by something faster).

ballsystemlord - Thursday, September 20, 2018 - link

Spelling/grammar errors (Only 2!):

Wrong word:

"All-in-all, NVIDIA is keeping the Founders Edition premium, now increased to $100 to $200 over the baseline"

Should be:

"All-in-all, NVIDIA is keeping the Founders Edition premium, now increased from $100 to $200 over the baseline"

Missing "s":

"Of course, NVIDIA maintain that the cards will provide expected top-tier"

Should be:

"Of course, NVIDIA maintains that the cards will provide expected top-tier"

Ryan Smith - Thursday, September 20, 2018 - link

Thanks!

ballsystemlord - Thursday, September 20, 2018 - link

Nate! Can you add DP folding @ home benchmark numbers? There were none in the Vega review and only SP in this Nvidia review.

SanX - Thursday, September 20, 2018 - link

Author thinks that all gamers buy only fastest cards? Maybe. But I doubt all of them buy the new generation card every year. In short, where are comparisons to 980/980Ti and even 780/780Ti? Owners of those cards are more interested to upgrade.

milkod2001 - Friday, September 21, 2018 - link

See from top menu on right, there is a bench where you can see results. I presume they will add data to the huge database soon. And yes, people are talking about high end GPUs but most are spending $400 max. for one.