Intel’s High-End Cascade Lake CPUs to Support 3.84 TB of Memory Per Socket

by Anton Shilov on July 10, 2018 10:00 AM EST

While Intel has yet to detail its upcoming Cascade Lake server processors, some of their key characteristics are beginning to emerge. According to a new report from ServeTheHome, some of the new chips will support up to 3.84 TB of memory per socket by combining 512 GB Optane DIMMs with 128 GB DDR4 DIMMs. That is more than double the 1.5 TB of DDR4 supported by contemporary Skylake-based Xeon Platinum M-series CPUs. For a dual-socket system, the total rises to 7.68 TB per node.

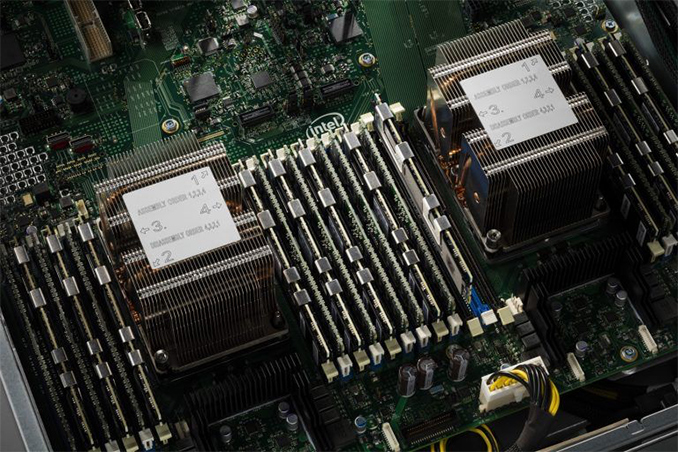

Last year Intel published a picture of a Cascade Lake-based server outfitted with six DDR4 DIMMs and six Optane Persistent Memory DIMMs per socket. Intel’s Optane modules, code-named Apache Pass, offer up to 512 GB each, whereas standard commercial DDR4 LRDIMMs typically top out at 128 GB of usable memory. Installed in a 6 x Optane plus 6 x DDR4 configuration, these modules provide 3072 GB of 3D XPoint memory and 768 GB of DDR4 RAM, for a total of 3.84 TB of memory per socket.
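The arithmetic behind these figures is easy to check. The short Python sketch below is illustrative only: the constant and function names are hypothetical, and dividing by 1024 to get TiB assumes the module sizes are binary gibibytes, a unit question that readers also raise in the comments.

```python
# Back-of-the-envelope check of the per-socket capacity described above.
# Module sizes and DIMM counts come from the article; reading "GB" as binary
# GiB (2^30 bytes) in the last line is an assumption, shown only for comparison.

OPTANE_DIMM_GB = 512   # Optane (Apache Pass) module
DDR4_DIMM_GB = 128     # largest common DDR4 LRDIMM
DIMMS_PER_TYPE = 6     # six Optane + six DDR4 DIMMs per socket
SOCKETS_PER_NODE = 2   # dual-socket system


def socket_capacity_gb(optane_gb=OPTANE_DIMM_GB, ddr4_gb=DDR4_DIMM_GB, n=DIMMS_PER_TYPE):
    """Total memory on one socket, in the same unit as the module sizes."""
    return n * optane_gb + n * ddr4_gb


optane_total = DIMMS_PER_TYPE * OPTANE_DIMM_GB   # 3072
ddr4_total = DIMMS_PER_TYPE * DDR4_DIMM_GB       # 768
per_socket = socket_capacity_gb()                # 3840
per_node = SOCKETS_PER_NODE * per_socket         # 7680

print(f"3D XPoint: {optane_total} GB, DDR4: {ddr4_total} GB")
print(f"Per socket: {per_socket / 1000:.2f} TB")   # decimal prefixes
print(f"Per node:   {per_node / 1000:.2f} TB")
print(f"Per socket if modules are GiB: {per_socket / 1024:.2f} TiB")
```

The last line shows why the same configuration is sometimes quoted as 3.75 TiB: if the modules are sized in GiB, 3840 GiB divided by 1024 is exactly 3.75 TiB.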

For write-endurance reasons, six DDR4 DIMMs plus six Optane DIMMs per socket will likely be a popular configuration for database servers that benefit from high memory capacity.

These figures are confirmed by a document QCT released for its QCT QuantaMesh systems, with the key picture shown below:

The top left shows a single 1U server with five PCIe expansion slots and up to 7.68 TB of memory capacity when fitted with Cascade Lake CPUs. The bottom right is the T42D-2U, which packs four nodes into 2U for a total of roughly 30 TB (4 x 7.68 TB = 30.72 TB) of memory. Given that a single 128 GB DDR4 LRDIMM costs circa $3,500, that Optane pricing is still unknown, and that there are reports Cascade Lake pricing might be adjusted, these systems are likely to cost a pretty penny.
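To put rough numbers on "a pretty penny", the Python sketch below totals capacity and DDR4 cost for these systems. It is a hedged estimate, not a quote: it assumes each node is dual-socket with six 128 GB LRDIMMs per socket (as the 7.68 TB figure implies), uses the approximate $3,500 LRDIMM price, and leaves Optane out entirely because its price is unknown. The constant names are hypothetical.

```python
# Rough totals for the QCT systems described above. The 7.68 TB node figure,
# the four-node 2U chassis and the ~$3,500 LRDIMM price come from the article.
# Assumptions: each node is dual-socket with six 128 GB DDR4 LRDIMMs per socket,
# and Optane is excluded because its price is unknown, so the cost is a
# DRAM-only lower bound, not a system price.

NODE_CAPACITY_GB = 7680     # 2 sockets x (6 x 512 GB + 6 x 128 GB)
NODES_PER_2U = 4            # T42D-2U
DDR4_DIMMS_PER_SOCKET = 6
SOCKETS_PER_NODE = 2
LRDIMM_PRICE_USD = 3500     # approximate price of one 128 GB DDR4 LRDIMM

chassis_capacity_gb = NODE_CAPACITY_GB * NODES_PER_2U
dimms_per_node = DDR4_DIMMS_PER_SOCKET * SOCKETS_PER_NODE
dram_cost_per_node = dimms_per_node * LRDIMM_PRICE_USD
dram_cost_per_2u = dram_cost_per_node * NODES_PER_2U

print(f"2U capacity: {chassis_capacity_gb / 1000:.2f} TB")
print(f"DDR4 LRDIMMs per node: {dimms_per_node}")
print(f"DRAM-only cost per node: ${dram_cost_per_node:,}")
print(f"DRAM-only cost per 2U:   ${dram_cost_per_2u:,}")
```

Under these assumptions the DDR4 alone comes to about $42,000 per node and $168,000 per 2U chassis, before a single Optane DIMM or CPU is counted.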

It is worth noting, given Intel's historical approach to product segmentation, that not all Cascade Lake SKUs are likely to support the maximum 3.84 TB of memory; that capability may be reserved for premium models. Intel could potentially go even further and restrict Optane DIMM support itself to certain SKUs as a premium feature. At the Optane launch, Intel did not confirm whether all Cascade Lake Xeons would support it (the official response was 'we haven't released that information yet').

Related Reading

- Intel Discuss Whiskey Lake-U, Amber Lake-Y, and ‘Cascade Lake-X’

- Intel Delays Mass Production of 10 nm CPUs to 2019

- Building for Apache Pass: Why Some Skylake Servers Already have 8 DIMMs Per Socket

- Intel Launches Optane DIMMs Up To 512GB: Apache Pass Is Here!

Source: ServeTheHome

20 Comments


Mr Perfect - Wednesday, July 11, 2018

Is that even relevant to RAM? I've only seen the distinction applied to storage.

sandytheguy - Thursday, July 12, 2018

RAM has always been measured in GiB/TiB, so whenever someone says GB, it's safe to assume they mean GiB. The opposite holds for network bandwidth. It's those evil optical and magnetic storage companies that decided to confuse everyone.

Joseph Luppens - Thursday, July 12, 2018

https://mintywhite.com/vista/terabytes-tebibytes-h...

SLB - Tuesday, July 17, 2018

Right -- the correct number is 3.75 TB per socket (or better yet, 3.75 TiB). The article should be fixed.

lilmoe - Tuesday, July 10, 2018

I have a bad feeling about Optane. Something tells me that XPoint will be sold as actual DRAM for entry-level VMs on Azure, AWS and other cloud services.

Zan Lynx - Wednesday, July 11, 2018

So what? You do know that anyone could write an AVX job to run on a VM, and if it ran on the same CPU as your VM, it would reduce your memory latency and bandwidth to less than Optane.

Jhlot - Saturday, July 14, 2018

I thought I understood VMs and such. Either I don't, or your comment is acronym barf that makes no sense. Someone please explain "write an AVX job to run on a VM and if run on the same CPU as your VM would reduce your memory latency and bandwidth to less than Optane." It just makes no sense the way it is said. Who says "run on the same CPU as your VM"? And "would reduce your memory latency and bandwidth"? Huh?

PaulStoffregen - Monday, July 30, 2018

AVX is an acronym for Advanced Vector Extensions, which are special instructions that perform vector math. Historically AVX has been aimed at multimedia applications, where algorithms like filters require dozens or hundreds of the same math operations performed on each pixel or audio sample. While the performance is amazing, because a single instruction can operate on 128- to 512-bit vectors of packed data, the functions are fairly simple and only really apply to certain types of applications. It's no replacement for general-purpose computing.

An "AVX job" would presumably be a program someone has written to leverage AVX instructions to speed up a massive computation. While multimedia has typically been the main use for AVX, modern AI frameworks like TensorFlow (a keyword to google...) can leverage AVX to speed up neural network computation. So it's quite possible someone might try to make heavy use of AVX-512 on a server.

I believe an implicit assumption here could be called "process CPU affinity" (another term to give to google...). Another is the pretty obvious practice of VPS providers running more virtual machines on their servers than they have physical (non-hyperthreaded) CPU cores. If these conditions are true, then it would seem likely that your virtual machine would tend to time-slice with the same group of other customers' VPS instances on the same CPU core.

I personally do not have enough experience with AVX to know whether code using mostly AVX instructions would put an undue burden on the L1, L2 and L3 caches and on the memory bus to the actual DRAM. But I have personally written quite a lot of signal processing code on embedded ARM microcontrollers, where sustaining enough read and write performance to the buffers holding the signal data (or vectors in memory) is a huge issue. It's pretty easy to imagine AVX-512 code could theoretically sustain a large number of row reads and writes as it grinds through incredible amounts of vector data. If you're familiar with DDR DRAM timing numbers (usually four numbers advertised on consumer DDR DIMMs, each the number of cycles needed for a certain part of a row access), it's pretty easy to imagine a CPU core running lots of AVX instructions causing a large number of row reads and queuing lots of row write-backs.

AVX has also earned a reputation for causing thermal problems, since a sustained set of AVX instructions (or "AVX job") places far more computational load on these CPUs than ordinary code.

Hopefully this helps explain it, with the caveat that someone who's actually written, debugged and optimized code directly using AVX could probably speak more authoritatively to the memory bandwidth and latency impact on other code.

But I'm going to go with not "acronym barf".

HStewart - Wednesday, July 25, 2018

"I have a bad feeling about Optane. Something tells me that Xpoint will be sold as actual DRAM for entry level VMs on Azure, AWS and other cloud services."

I don't think you need to worry about that. For one thing, I don't believe Optane memory can be used in the same slot as DRAM. It appears that even if it uses the same slots, it requires special programming to access this memory. My feeling is that it will actually be used by VMs, and that the systems using it will have extremely high-performance transfers between normal RAM and Optane memory.

Yes, a system with 3.75 TB of DRAM will perform better than a system with 768 GB of DRAM and the remainder in Optane memory, but think realistically: what kind of system would use 3.75 TB at the same time?

I see it as high performance cache that is slower than all DRAM but faster than SSD.

The following is information on an SDK for programming this kind of memory:

https://www.snia.org/sites/default/orig/SDC2013/pr...

eastcoast_pete - Wednesday, July 11, 2018

Yes, this won't be coming to a gaming rig near you, at least not near me or for me. Optane-type, DIMM-formatted non-volatile memory is a niche product for a niche market, albeit a very lucrative one. The terabyte capacities become interesting if you are, for example, running HANA or similar very large high-availability databases. Customers that can afford the very expensive site licenses for those will also spring for top-end CPUs with all the frills, because it makes business sense. If millions of dollars are at stake, spending $150K or more on 1-2 TB of ECC DRAM plus matching Optane DIMMs is suddenly not that outrageous.