AT 101: Understanding Laptop Displays & How We Test Them

by Brett Howse on July 10, 2018 8:00 AM EST

Everyone might have a different take on what they feel is the single most important factor to consider when purchasing a new laptop, but it would be hard to argue that the display quality shouldn't be near the top. There’s simply no other part of a notebook computer you’re going to use more. The good news is that display quality has improved immensely in recent years, with even some low-cost notebooks offering a good display.

For a while now we've been wanting to do some more fundamentals-driven articles – an introduction to various technologies for people who don't have 21 years of the computing industry crammed into their heads like we do – and given the importance of displays, this is a great place to start. This gives us the opportunity to cover what makes up the display stack in a notebook, how we test it, and what we’re looking for. There’s a tremendous amount of terminology which can be a bit overwhelming as well, and with the advancements in display tech, it’s worth defining the different display types and going over their strengths and weaknesses.

There’s no single best display technology for everyone. The industry has somewhat converged on just a couple of options, but each has its own strengths and weaknesses.

Resolution

Probably the easiest place to start is display resolution. This is simply the number of pixels on the display, measured horizontally, and then vertically, so a laptop with a resolution of 1920x1080 is going to have 1920 horizontal pixels and 1080 vertical. Over time, there’s been a shift away from the lower resolution displays we were used to, and of course the advantage of a higher resolution is that you can end up with a sharper image and crisper text.

Common resolutions on laptops available today are:

- 1366x768 – WXGA

- 1920x1080 – Full HD or FHD

- 2560x1440 – Quad HD or QHD

- 3840x2160 – Ultra HD or UHD, sometimes also called 4K (although originally 4K was 4096x2160)
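
Because the pixel count is simply width multiplied by height, it grows much faster than the individual numbers suggest. The short Python sketch below is only an illustration (the dictionary and function names are our own, not part of any tool we use); it tallies the resolutions listed above and compares each to FHD. UHD works out to exactly four times the 2,073,600 pixels of a 1920x1080 panel.

```python
# Resolutions from the list above, keyed by their common names
RESOLUTIONS = {
    "WXGA": (1366, 768),
    "FHD": (1920, 1080),
    "QHD": (2560, 1440),
    "UHD": (3840, 2160),
}


def pixel_count(width_px: int, height_px: int) -> int:
    """Total number of pixels on a panel of the given resolution."""
    return width_px * height_px


fhd_total = pixel_count(*RESOLUTIONS["FHD"])
for name, (w, h) in RESOLUTIONS.items():
    total = pixel_count(w, h)
    # Express each panel relative to 1920x1080 to show how quickly the count grows
    print(f"{name:<5} {w}x{h}: {total:>9,} pixels ({total / fhd_total:.2f}x FHD)")
```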

Those are the most common, although some devices offer different aspect ratios and unique resolutions as well. If you know the display size and resolution, you can also calculate the pixel density by dividing the diagonal resolution of the display by its diagonal size in inches. Since display size is quoted as a diagonal while resolution is given as width and height, you first have to convert the resolution into a diagonal pixel count using the Pythagorean theorem. The result is the pixel density in pixels per inch (PPI): how many pixels fit into each inch of the display, measured along the diagonal. The higher the PPI, the sharper the display.
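
As a worked example of that calculation, here is a minimal Python sketch. It assumes square pixels (so density along the diagonal equals density along the width), and the panel sizes in the loop are chosen only for illustration. For a 13.3-inch 1920x1080 panel, the diagonal is about 2,203 pixels, which gives roughly 165.6 PPI.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density along the diagonal, in pixels per inch (assumes square pixels)."""
    # Pythagorean theorem: turn the width/height resolution into a diagonal pixel count
    diagonal_px = math.hypot(width_px, height_px)
    # Divide by the advertised diagonal size in inches
    return diagonal_px / diagonal_in


# Example panels, chosen only for illustration
for w, h, d in [(1920, 1080, 13.3), (3200, 1800, 13.3), (3840, 2160, 15.6)]:
    print(f"{w}x{h} @ {d} in: {pixels_per_inch(w, h, d):.1f} PPI")
```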

But as with most things, there’s a cost to moving to a high-resolution display, and it’s not just the actual cost of the panel – although that will almost always be higher as well. Because a denser panel lets less of its backlight through (the more pixels packed into the same area, the more light they block), increasing the pixel density of a display requires ramping up the strength of the backlighting system as well. And while that’s not an issue for desktop monitors, which have a constant power source, for laptops it can have a significant impact on battery life.

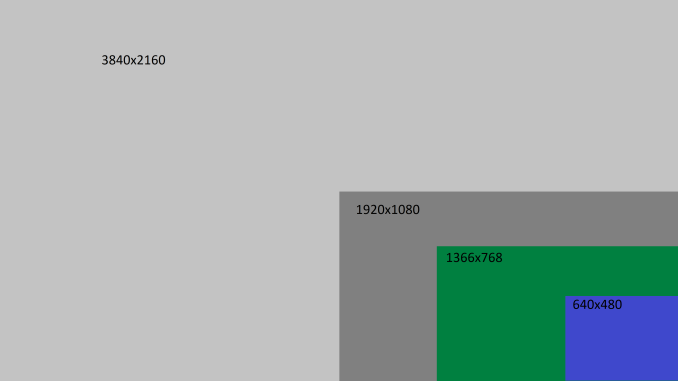

There’s also a cost in terms of software usability. Comparisons of the different resolutions are often illustrated with an image like the one above.

If you ran your display at 100% scaling at each resolution, the image above would give an accurate idea of how much desktop space you’d have available. But if you ran a typical 15-inch laptop with a 3840x2160 resolution at 100% display scaling, everything would be so tiny that it would be difficult to use, so Windows provides display scaling options to compensate.
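
One simplified way to think about scaling is that the usable workspace shrinks by the scale factor in each direction. The sketch below assumes the operating system scales everything uniformly, which real applications do not always honor, and the function name is our own. Under that assumption, a UHD panel at 200% offers the same workspace as an FHD panel at 100%, just drawn with four times as many pixels.

```python
def effective_desktop(width_px: int, height_px: int, scale_percent: int) -> tuple[int, int]:
    """Approximate workspace, in 100%-scaling units, left after uniform OS display scaling."""
    factor = scale_percent / 100
    # Every UI element is drawn `factor` times larger, so fewer of them fit on screen
    return round(width_px / factor), round(height_px / factor)


# A UHD panel at a few scaling levels, chosen only for illustration
for scale in (100, 150, 200, 250):
    w, h = effective_desktop(3840, 2160, scale)
    print(f"3840x2160 at {scale}%: workspace similar to {w}x{h} at 100%")
```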

Years ago, software developers assumed that displays would be at or around 96 DPI (dots per inch, which in this case is equivalent to PPI). As display technology has improved, many laptops now offer much higher pixel densities than that. A 13.3-inch laptop with an FHD resolution is already well over the 96 PPI of a traditional display, at about 165 PPI, so Windows scales images and text up to make them larger. However, older software can sometimes behave unexpectedly when scaling isn’t set to 100%. This issue isn’t as bad as it used to be, since software has been updated to address it and Windows 10 offers better scaling APIs and support, but it is likely never to be completely solved on Windows. For a deeper look into this issue specifically, check out our look at it from a couple of years back.
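
To see how far a panel sits from that 96 DPI assumption, you can divide its pixel density by 96. This is a rough estimate of the scale needed for interface elements to appear the same physical size as on a traditional display; Windows offers a set of preset scaling levels and chooses its recommended default with its own logic, which this sketch does not attempt to reproduce. For the 13.3-inch FHD example it comes out to roughly 173%.

```python
import math

# The density legacy Windows software was designed around, per the paragraph above
BASELINE_DPI = 96


def physical_size_scale(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Scale factor that keeps UI elements the same physical size as on a 96 DPI display."""
    ppi = math.hypot(width_px, height_px) / diagonal_in  # diagonal pixels / diagonal inches
    return ppi / BASELINE_DPI


# Example panels, chosen only for illustration
for w, h, d in [(1920, 1080, 13.3), (3840, 2160, 15.6)]:
    print(f"{w}x{h} @ {d} in: about {physical_size_scale(w, h, d):.0%} to match 96 DPI")
```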

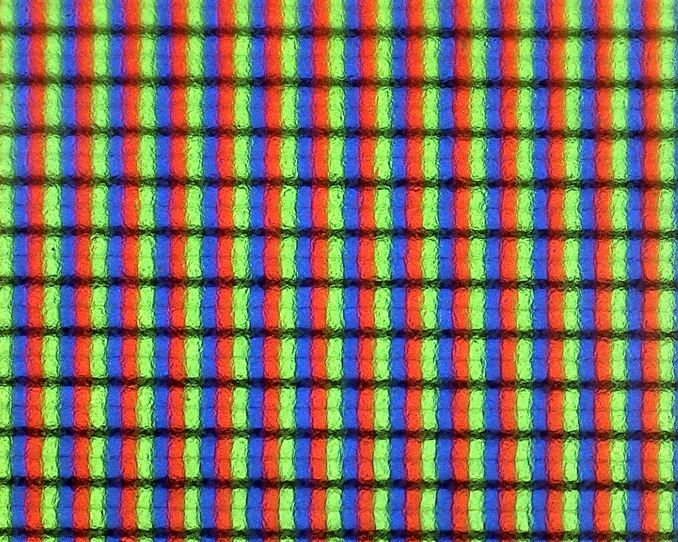

Subpixels on a 1920x1080 13.3-inch display - the haze is the anti-glare coating

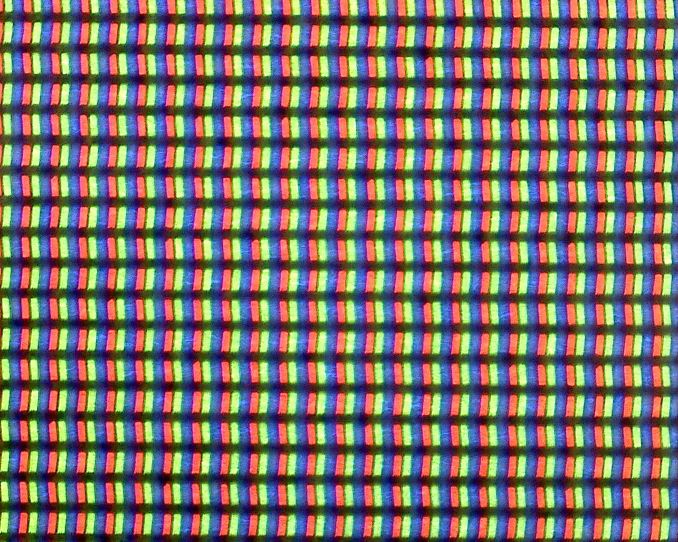

Subpixels on a 3200x1800 13.3-inch display - no haze means it is glossy

Still, even with the drawbacks, the advantages of a higher PPI display are often worth the costs, since the image is just so much sharper. This is certainly a case of diminishing returns, though. A 192 PPI display is going to look much better than a 96 PPI one, but the step from 192 PPI to 384 PPI is less noticeable than that first jump, and it costs more. Smartphones often have much higher pixel densities than laptops, but they are also held much closer to the eyes. Smaller laptops with very high-resolution displays are going to look great, but the extra power draw of a UHD display on a small notebook might not make it the best fit.
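
Viewing distance can be folded into the comparison by counting how many pixels fall within one degree of your field of vision. The sketch below uses assumed distances (about 20 inches for a laptop and 12 inches for a phone) and a hypothetical 440 PPI phone; none of these are measured values. At 20 inches, 96, 192 and 384 PPI work out to roughly 34, 67 and 134 pixels per degree. A commonly cited rule of thumb puts the limit of 20/20 vision at about one arcminute, or roughly 60 pixels per degree, which is consistent with the first doubling being far more visible than the second.

```python
import math


def pixels_per_degree(ppi: float, viewing_distance_in: float) -> float:
    """How many pixels fall within one degree of the viewer's field of vision."""
    # A 1-degree cone centred on the line of sight spans 2 * d * tan(0.5 deg) inches on screen
    inches_per_degree = 2 * viewing_distance_in * math.tan(math.radians(0.5))
    return ppi * inches_per_degree


# Hypothetical devices and viewing distances, chosen only for illustration
examples = [
    ("96 PPI laptop at 20 in", 96, 20),
    ("192 PPI laptop at 20 in", 192, 20),
    ("384 PPI laptop at 20 in", 384, 20),
    ("440 PPI phone at 12 in", 440, 12),
]
for label, ppi, distance in examples:
    print(f"{label}: {pixels_per_degree(ppi, distance):.0f} pixels per degree")
```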

Inspecting the Aspect Ratio

Aspect ratio is simply the number of horizontal pixels relative to the number of vertical pixels, expressed as a ratio. The most common aspect ratio on laptops today is 16:9, so for every nine vertical pixels, there are sixteen horizontal pixels. But that wasn’t always the case.
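
Reducing a resolution to its aspect ratio is just a matter of dividing both numbers by their greatest common divisor. The Python sketch below is illustrative, with example resolutions of our choosing. It also shows two quirks: 16:10 reduces to 8:5, and the common 1366x768 resolution is only approximately 16:9, since it reduces to 683:384 (about 1.779 versus 1.778).

```python
from math import gcd


def aspect_ratio(width_px: int, height_px: int) -> str:
    """Reduce a resolution to its simplest whole-number width:height ratio."""
    divisor = gcd(width_px, height_px)
    return f"{width_px // divisor}:{height_px // divisor}"


# Example resolutions, chosen only for illustration
for w, h in [(1920, 1080), (2560, 1440), (2880, 1800), (2736, 1824), (1366, 768)]:
    print(f"{w}x{h} -> {aspect_ratio(w, h)} ({w / h:.3f})")
```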

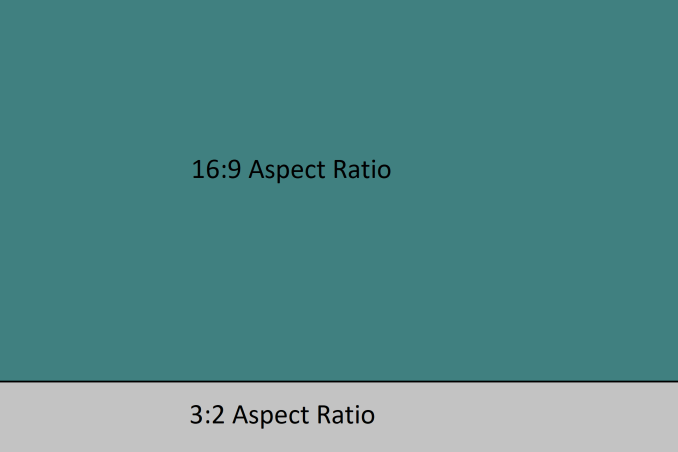

Likely the most common aspect ratio on computers in years past was 4:3, which is much squarer than what we see now. When the world moved to widescreen displays, computers moved to a common aspect ratio of 16:10, but when HD televisions adopted 16:9 as their standard, PC makers followed suit, and 16:9 has won out for the time being.

However, much of the work done on a computer benefits from additional vertical space, so the loss of 16:10 was mourned by many. At this point, pretty much only Apple consistently offers notebooks with this aspect ratio. The advantage of having more vertical room is easy to see with most web content, which scrolls vertically. The move to 16:9 helped when watching HD video content, and somewhat with movies (although those generally use even wider aspect ratios than 16:9), but it hasn’t always been a blessing on the PC.

Luckily, we’ve seen some movement back towards taller displays on notebooks as an option for those who aren’t big fans of 16:9. One of the first was Microsoft with the Surface Pro 3, which offered a 3:2 aspect ratio. On a convertible device like the Surface Pro, the taller aspect ratio also helps when switching from a landscape view to a portrait view, which you’d do more often with a tablet, but even on laptops, the extra height offered by a 3:2 display is very welcome for most productivity tasks. A smattering of laptops have now adopted 3:2, and more are likely on the way.
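
To put numbers on that extra height, the sketch below computes the physical width and height of panels that share the same diagonal but differ in aspect ratio. It assumes the ratio describes the visible area exactly, and the 13.3-inch size is only an example. At that size a 16:9 panel is about 6.52 inches tall, a 16:10 panel about 7.05 inches, and a 3:2 panel about 7.38 inches, roughly 13% taller than 16:9 and with about 8% more screen area, while being slightly narrower.

```python
import math


def panel_dimensions(diagonal_in: float, ratio_w: int, ratio_h: int) -> tuple[float, float]:
    """Width and height in inches of a panel with the given diagonal and aspect ratio."""
    # Scale the ratio's own diagonal (via the Pythagorean theorem) up to the panel's diagonal
    unit = diagonal_in / math.hypot(ratio_w, ratio_h)
    return ratio_w * unit, ratio_h * unit


# Same 13.3-inch diagonal, three aspect ratios
for rw, rh in [(16, 9), (16, 10), (3, 2)]:
    w, h = panel_dimensions(13.3, rw, rh)
    print(f"13.3 in {rw}:{rh}: {w:.2f} x {h:.2f} in, area {w * h:.1f} sq in")
```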

49 Comments

linuxgeex - Thursday, July 12, 2018

"Driving the extra pixels with the GPU and other components is a tiny difference. That's a common misconception you've stumbled upon."

Going from 1920x1080 to 3840x2160 is 4x the rendering cost, minimum (recognise that given more than 2 layers to composite you can easily exceed the CPU's L3 cache size with a 4k display), and that is 4x the amount of time that the CPU and all related subsystems can't drop to C7 sleep.

It's not a tiny difference at all. If it was negligible then why is the OS trying to use PSR (Panel Self Refresh) and FBC (FrameBuffer Compression) to reduce the IO channel and RAM access overheads, while those costs are negligible compared to keeping the CPU and GPU spinning with rasterizing and compositing.

What's keeping your OS and apps compositing constantly? Your browser which now does full-page 60hz updates of every pixel, changed or not, so the OS can't send only the damaged pixels to the display device as in earlier versions. Why? Because modern machines are fast enough and it's a "small difference" but keeps the render pathways hot in the caches so less frames are dropped. Welcome to 2018, when your battery life got slaughtered and people haven't quite clued in yet.

erple2 - Sunday, July 22, 2018

PSR and FBC tasks are tackling the 20% case, though, namely the parts at idle where 80% of the power consumed is just directly from keeping the backlight bright enough that the LCD can be seen. Note also that PSR and FBC doesn't make that much of a difference in battery life overall. I've seen up to about 10% in some cases. And that's consistent with doubling the GPU rendering pipeline efficiency _at idle_ for the entire display pipeline. Doubling the efficiency of 20% of your overall budget decreases power consumption by around 10%.

Note that much of the compositing engine is offloaded (in modern GPUs) from the heavyweight parts of the 3D rendering pipeline, so those costs aren't that high in comparison. It's not like you're keeping all 2048 stream processors (or however many equivalent GPU processors) active 60 times a second. That was the first "revolution" in GPU efficiency gains a while back - you didn't need to keep your entire GPU rendering silicon active all the time if they weren't being used.

linuxgeex - Wednesday, July 11, 2018

"Less expensive displays may even reduce this more to 6-bit with Frame Rate Control (FRC) which uses the dithering of adjacent pixels to simulate the full 8-bit levels."

No. FRC uses Temporal dithering. It shows the pixel brighter or darker across multiple frames which average out to the intended intensity. On displays with poor response times this actually works out quite nicely. On TN displays, you can actually see the patterns flickering when you are close to a large display and cast your gaze around the display. Particularly in your peripheral vision which is more responsive to high-speed motion changes.

VA - You mentioned MVA, which is one type of PVA arrangement. PVA is Patterned Vertical Alignment, where not all of the VA pixels/subpixels are aligned in the same plane. Almost all VA displays are PVA. PVA allows to directly trade display brightness for wider viewing angles, and to choose in which direction those tradeoffs will be made. For example a PVA television will trade off mostly in the horizontal direction because that allows people to sit in various places around the room and still see the display well. They don't need to increase the vertical viewing angle so that the roof has a good view of the tv. ;-) But for a laptop just the opposite is true. You want to still see the display well when you stop slouching or stand up, but you don't really care if the people to your sides can see your display well. In fact, people purchase privacy guard overlays that reduce the side viewing angles intentionally.

Brett Howse - Wednesday, July 11, 2018

Excellent info thanks!

linuxgeex - Thursday, July 12, 2018

The author was obviously in a hurry, saw the word "dithering", and jumped to the conclusion that it was spatial error distribution dithering as is commonly used in static images to create an appearance of a larger palette. ie GIFs, printers. But for video there's a 3rd dimension to perform dithering in which doesn't trade off resolution or cause edge flickering artefacts, so of course they're going to use FRC (Frame Rate Control which is basically a form of PWM) instead of spatial dithering.

linuxgeex - Thursday, July 12, 2018

Oh Brett, lol that's you. ;-)

UtilityMax - Friday, July 13, 2018

WTF, you guys still test laptop displays at the time when more than half of personal computing has already moved onto mobile devices, like phones or tablets, which you no longer review? Mmmokay.

linuxgeex - Friday, July 13, 2018

Actually they have reviewed new phones within the last 30 days... Mmmokay.

Zan Lynx - Saturday, July 14, 2018

A tablet is just a gimped laptop without a keyboard.

madskills42001 - Tuesday, July 17, 2018

Given that contrast is the most important factor in subjective image quality tests, why is more discussion not given to it in this article?