AT 101: Understanding Laptop Displays & How We Test Them

by Brett Howse on July 10, 2018 8:00 AM EST

Everyone might have a different take on what they feel is the single most important factor to consider when purchasing a new laptop, but it would be hard to argue that the display quality shouldn't be near the top. There’s simply no other part of a notebook computer you’re going to use more. The good news is that display quality has improved immensely in recent years, with even some low-cost notebooks offering a good display.

For a while now we've been wanting to do some more fundamentals-driven articles – an introduction to various technologies for people who don't have 21 years of the computing industry crammed into their heads like we do – and given the importance of displays, this is a great place to start. This gives us the opportunity to cover what makes up the display stack in a notebook, how we test it, and what we’re looking for. There’s a tremendous amount of terminology which can be a bit overwhelming as well, and with the advancements in display tech, it’s worth defining the different display types and going over their strengths and weaknesses.

There’s no single best display technology for everyone. The industry has largely converged on a couple of different options, and each has its own strengths and weaknesses.

Resolution

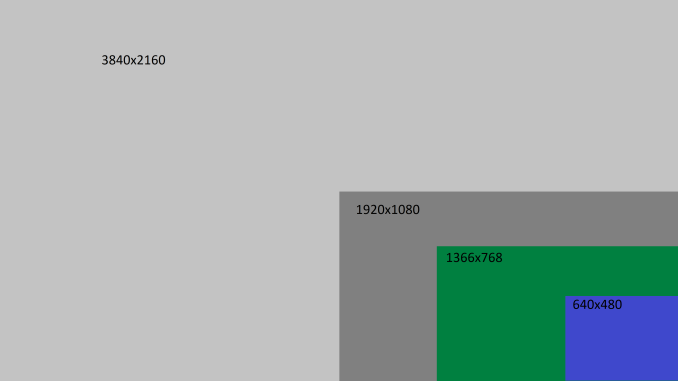

Probably the easiest place to start is display resolution. This is simply the number of pixels on the display, measured horizontally, and then vertically, so a laptop with a resolution of 1920x1080 is going to have 1920 horizontal pixels and 1080 vertical. Over time, there’s been a shift away from the lower resolution displays we were used to, and of course the advantage of a higher resolution is that you can end up with a sharper image and crisper text.

Common resolutions on laptops available today are:

- 1366x768 – WXGA

- 1920x1080 – Full HD or FHD

- 2560x1440 – Quad HD or QHD

- 3840x2160 – Ultra HD or UHD, sometimes also called 4K (although originally 4K was 4096x2160)

Those are the most common, although some devices offer different aspect ratios and unique resolutions as well. If you know the display size and resolution, you can also calculate the pixel density by dividing the diagonal resolution of a display by the diagonal size of the display in inches. Since only the display size is commonly measured by the diagonal, you’d have to convert the resolution from width and height using the Pythagorean theorem. The resulting value will be the display density in pixels per inch (PPI), or how many pixels per inch of display measured on the diagonal. The higher the PPI, the sharper the display.
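As a rough illustration of the calculation described above, here is a minimal Python sketch. The function name and the example panel sizes are assumptions chosen for illustration, not part of any testing tool.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Return pixel density (PPI) measured along the panel diagonal."""
    # Pythagorean theorem: diagonal length of the panel in pixels.
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in


# Illustrative panels: (width in pixels, height in pixels, diagonal in inches).
panels = {
    "13.3-inch FHD": (1920, 1080, 13.3),
    "13.3-inch 3200x1800": (3200, 1800, 13.3),
    "15.6-inch UHD": (3840, 2160, 15.6),
}

for name, (width, height, diagonal) in panels.items():
    print(f"{name}: {pixels_per_inch(width, height, diagonal):.1f} PPI")
```

The calculation assumes square pixels, which holds for essentially all modern laptop panels.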

But as with most things, there’s a cost to moving to a high-resolution display, and it’s not just the price of the panel, although that will almost always be higher as well. Denser panels block more of their backlight, so increasing the pixel density of a display requires ramping up the strength of the backlighting system to reach the same brightness. And while that’s not an issue for desktop monitors, which run from a constant power source, for laptops it can have a significant impact on battery life.

There’s also a cost in terms of software usability. Often, you’ll see an image such as this when comparing the different resolutions available.

If you were using your display at 100% scaling for each resolution, the image above would give you an accurate idea of how much desktop space you’d have available. But if you ran a typical 15-inch laptop with a 3840x2160 resolution at 100% display scaling, everything would be so tiny that it would be difficult to use, so Windows provides display scaling options to compensate.

Years ago, software developers assumed that displays would be at or around 96 DPI (dots per inch, which in this case is equivalent to PPI). As display technology has improved, we are now seeing many laptops offering much higher pixel densities than that. A 13.3-inch laptop with a FHD resolution is already well over the 96 PPI of a traditional display, offering roughly 166 PPI, so Windows will scale images and text up to make them larger. However, older software can sometimes behave unexpectedly when scaling isn’t at 100%. This issue isn’t as bad as it used to be, with software being updated to address it and Windows 10 offering better scaling APIs and support, but it is likely never to be completely solved on Windows. For a deeper look into this issue specifically, check out our look at this from a couple of years back.
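To make the scaling arithmetic concrete, here is a small Python sketch. It only shows the ratio against the 96 DPI baseline and the desktop space left at a given scale factor. It does not reproduce how Windows actually chooses its recommended scaling, and the function names are assumptions for illustration.

```python
BASELINE_DPI = 96  # density that older Windows software assumed


def scale_ratio(ppi):
    """How many times denser a panel is than the 96 DPI baseline."""
    return ppi / BASELINE_DPI


def effective_workspace(width_px, height_px, scale_percent):
    """Desktop space, in 100%-scaling-equivalent pixels, at a given scale factor."""
    factor = scale_percent / 100
    return round(width_px / factor), round(height_px / factor)


# A 13.3-inch FHD panel (about 165.6 PPI) is roughly 1.7x the baseline density.
print(f"{scale_ratio(165.6):.2f}x baseline")

# A UHD panel at 200% scaling offers the same workspace as FHD at 100%.
print(effective_workspace(3840, 2160, 200))
print(effective_workspace(1920, 1080, 150))
```

In other words, a higher scale factor trades desktop space for sharpness: the UHD panel at 200% shows the same amount of content as an FHD panel at 100%, but draws each element with four times as many pixels.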

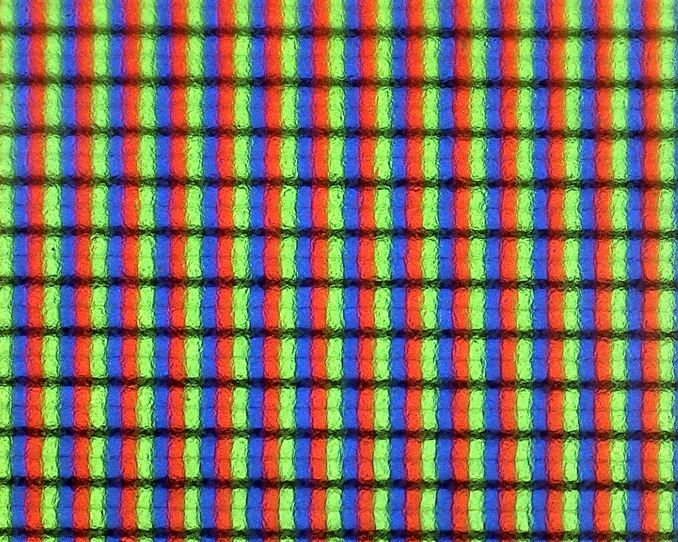

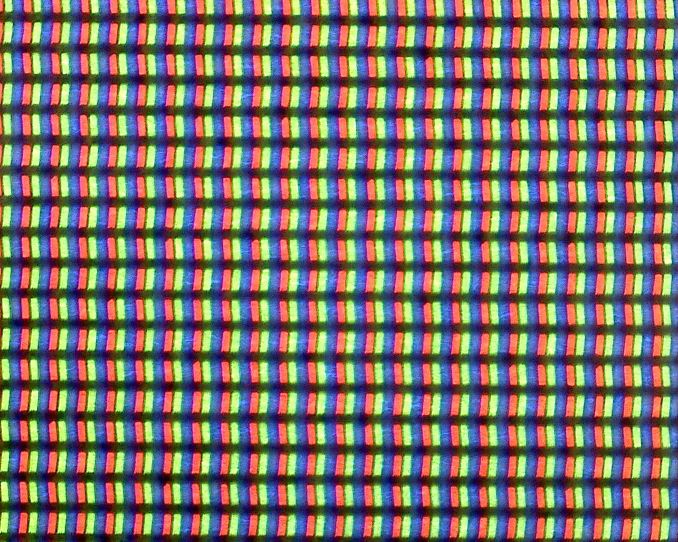

Subpixels on a 1920x1080 13.3-inch display - the haze is the anti-glare coating

Subpixels on a 3200x1800 13.3-inch display - no haze means it is glossy

Still, even with the drawbacks, the advantages of a higher PPI display are often worth the costs, since the image sharpness is just so much better. This is certainly a case of diminishing returns though. Although a 192 PPI display is going to look much better than a 96 PPI one, the jump from 192 PPI to 384 PPI is going to be far less noticeable than that first jump, and it comes at a higher cost. Smartphones often have much higher density displays than laptops, but a phone is usually held much closer to the eyes than a laptop would be. Smaller laptops with very high-resolution displays are going to look great, but the extra power usage of a UHD display on a small notebook might not make it the best fit.
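One way to see why viewing distance matters is to convert PPI into pixels per degree of the viewer's field of view. The sketch below is illustrative only: the viewing distances are assumptions rather than measured values, and the function name is hypothetical.

```python
import math


def pixels_per_degree(ppi, viewing_distance_in):
    """Pixels covered by one degree of visual angle at a given distance."""
    # Width, in inches, spanned by one degree at this viewing distance.
    inches_per_degree = 2 * viewing_distance_in * math.tan(math.radians(0.5))
    return ppi * inches_per_degree


# Assumed distances: about 20 inches for a laptop, about 12 inches for a phone.
for label, ppi, distance in [
    ("96 PPI laptop", 96, 20),
    ("192 PPI laptop", 192, 20),
    ("384 PPI laptop", 384, 20),
    ("400 PPI phone", 400, 12),
]:
    print(f"{label} at {distance} in: {pixels_per_degree(ppi, distance):.0f} px/degree")
```

Because the result scales with distance, a phone needs a higher PPI than a laptop to appear equally sharp when it is held closer to the eyes.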

Inspecting the Aspect Ratio

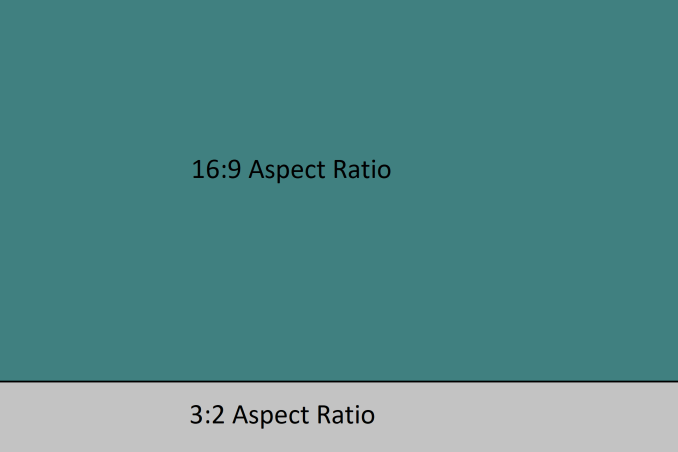

Aspect ratio is simply the width of the display relative to its height, expressed as a ratio. The most common aspect ratio on laptops today is 16:9, so for every nine vertical pixels, there are sixteen horizontal pixels. But that wasn’t always the case.
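A resolution can be reduced to its aspect ratio by dividing both dimensions by their greatest common divisor. The following Python sketch shows the idea. The function name is an assumption, and the 1920x1200 and 2160x1440 entries are example 16:10 and 3:2 resolutions added for comparison.

```python
from math import gcd


def aspect_ratio(width_px, height_px):
    """Reduce a resolution to its simplest width:height ratio."""
    divisor = gcd(width_px, height_px)
    return width_px // divisor, height_px // divisor


for width, height in [(1920, 1080), (3840, 2160), (1920, 1200), (2160, 1440), (1366, 768)]:
    ratio_w, ratio_h = aspect_ratio(width, height)
    print(f"{width}x{height} -> {ratio_w}:{ratio_h} ({width / height:.3f})")
```

Two details stand out when running this. A 16:10 panel reduces to 8:5, since 16:10 is a naming convention rather than the simplest form. And 1366x768 reduces to 683:384, which is only approximately 16:9.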

Likely the most common aspect ratio on all computers in years past was 4:3, which is much closer to square than what we see now. When the world moved to widescreen displays, computers moved to a common aspect ratio of 16:10, but when HD televisions adopted 16:9 as their standard, there was a trend for PC makers to adopt that as well, and it has won out for the time being.

However, much of the work done on a computer benefits from additional vertical space, so the loss of 16:10 was mourned by many. At this point, pretty much only Apple consistently offers notebooks with this aspect ratio. You can see the advantage of having more vertical room when you look at most web content, which scrolls vertically. The move to 16:9 helped when watching HD video content, and somewhat with movies, although movies generally use even wider aspect ratios than 16:9, but it hasn’t always been a blessing on the PC.

Luckily, we’ve seen some movement back towards taller displays on notebooks as an option for those that aren’t big fans of 16:9. One of the first was Microsoft with the Surface Pro 3, which offered a 3:2 aspect ratio. On a convertible device like the Surface Pro, the taller aspect ratio also helps when switching from a landscape view to a portrait view, which you’d do more often with a tablet, but even on laptops, the extra height offered by a 3:2 display is very welcome for most productivity tasks. There’s a smattering of laptops out there now that have adopted 3:2, and likely more on the way.
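To put a number on the extra height, the physical width and height of a panel can be worked out from its diagonal and aspect ratio. This Python sketch compares three aspect ratios at the same diagonal. The 13.3-inch diagonal is just an example value, and the function name is an assumption.

```python
import math


def panel_size_in(diagonal_in, ratio_w, ratio_h):
    """Return (width, height) in inches for a given diagonal and aspect ratio."""
    # Length of one ratio unit, from the Pythagorean theorem.
    unit = diagonal_in / math.hypot(ratio_w, ratio_h)
    return ratio_w * unit, ratio_h * unit


diagonal = 13.3  # example diagonal in inches
for ratio_w, ratio_h in [(16, 9), (16, 10), (3, 2)]:
    width, height = panel_size_in(diagonal, ratio_w, ratio_h)
    print(f"{ratio_w}:{ratio_h} -> {width:.2f} in wide, {height:.2f} in tall")
```

At the same diagonal, the 3:2 panel is the tallest and the narrowest of the three, which is the trade-off described above.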

49 Comments


ikjadoon - Tuesday, July 10, 2018

Excellent overview, Brett. I will be linking this many weeks onward. I’m curious how you were able to measure the SB2’s display power usage—that sounds incredibly handy as panel efficiency seems to be the name of the game here. Is this through software or hardware, like clamping or voltage measurements?

I had high hopes for IGZO penetrating and overtaking a-Si, but it seems like it’s the forgotten middle child sans one or two poster models like the Razer Blade.

Seeing LTPS proliferate, though, is welcome: Lenovo’s using it on their X1 Yoga HDR display and Huawei’s MateBook has won a lot of hearts (and eyes).

MajGenRelativity - Tuesday, July 10, 2018

I enjoyed this article very much. I didn't know VA was a different technology, and assumed it was some subtype of IPS, so I'm glad that was cleared up. I look forward to in-depth articles about other components!

Brett Howse - Tuesday, July 10, 2018

Thanks!

Ehart - Tuesday, July 10, 2018

Really nice article, but you're falling into some common confusion on HDR10. HDR10 is really only defined as a 'media profile', and for a display it means that it accepts at least 10 bits to support that profile. For PC displays, they often can accept a 12 bit signal. (I'm using one right now.)

DanNeely - Tuesday, July 10, 2018

Is "3k" eg 3200x1800 going out of favor on 13" laptops? I'd be rather disappointed if it is. At 280 DPI it's equivalent to 4k on a 15.6" panel, and on anything that doens't have broken DPI scaling is high enough resolution that you can pick whatever scaling factor you want and have sharp can't come close to seeing the pixels anymore. The higher, going higher eg 4k and 330DPI doesn't really get anything except higher power consumption and lower battery life IMO.

Brett Howse - Tuesday, July 10, 2018

Seems to be less options for 3200x1800 these days.

CaedenV - Tuesday, July 10, 2018

"so the loss of 16:10 was mourned by many." Yeah... I miss my 1200p 16:10 display. It wasnt the best quality... but man was it useful!

keg504 - Tuesday, July 10, 2018

If nit is not an SI unit, why not use lux, which is, and is the same quantity (from my understanding)?

Death666Angel - Tuesday, July 10, 2018

It isn't, though. The SI unit for nits would be candela/square_meter [cd/m²]. Lux = Lumen/square_meter [lm/m²] has an additional light source component and a distant component in it, because it is used to measure the light that hits a certain point, not the source itself. Most non-US based tech reviewers I frequent use cd/m².

Amoro - Tuesday, July 10, 2018

What about adaptive refresh rate technologies?