The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more pertinent benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio, due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

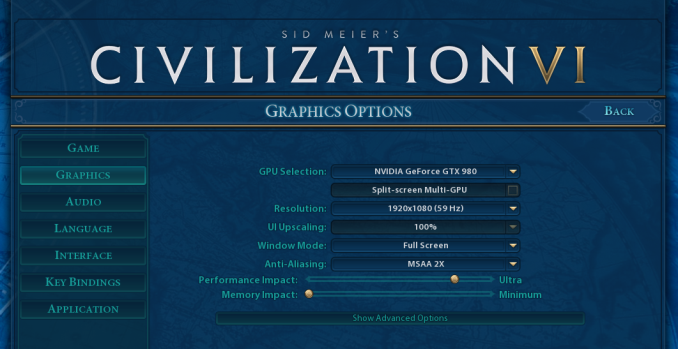

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark at position 4 for performance (ultra) and 0 on memory, with MSAA set to 2x.

For reviews where we include 8K and 16K benchmarks (Civ6 allows us to benchmark extreme resolutions on any monitor) on our GTX 1080, we run the 8K tests with settings similar to the 4K tests, but the 16K tests are set to the lowest option for Performance.
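As a rough illustration of how the rendering load grows across these four resolutions, the sketch below computes pixels per frame relative to 1080p. Only 1920x1080 is stated in the text; the 4K, 8K and 16K dimensions are assumed to be the common 16:9 values, and the names `RESOLUTIONS`, `PERFORMANCE_SLIDER`, `pixel_count` and `relative_load` are hypothetical. The 8K slider value is also an inference from "similar to the 4K tests", not a confirmed setting.

```python
# Resolution names mapped to (width, height). Only 1920x1080 is given in the
# text; the 4K, 8K and 16K dimensions are ASSUMED 16:9 values.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
    "16K": (15360, 8640),
}

# Performance Impact slider position per test, as described in the text:
# position 4 (ultra) at 1080p and 4K, the lowest option (0) at 16K.
# The 8K value is an ASSUMPTION based on "similar to the 4K tests".
PERFORMANCE_SLIDER = {"1080p": 4, "4K": 4, "8K": 4, "16K": 0}


def pixel_count(name):
    """Return the number of pixels rendered per frame at a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def relative_load(name, baseline="1080p"):
    """Return the pixel count of `name` as a multiple of the baseline."""
    return pixel_count(name) / pixel_count(baseline)


if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_count(name):,} pixels, "
              f"{relative_load(name):.0f}x 1080p, "
              f"performance slider {PERFORMANCE_SLIDER[name]}")
```

Under these assumed dimensions each step up quadruples the pixel count, which would be consistent with dropping the Performance slider to its lowest position for the 16K runs.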

All of our benchmark results can also be found in our benchmark engine, Bench.

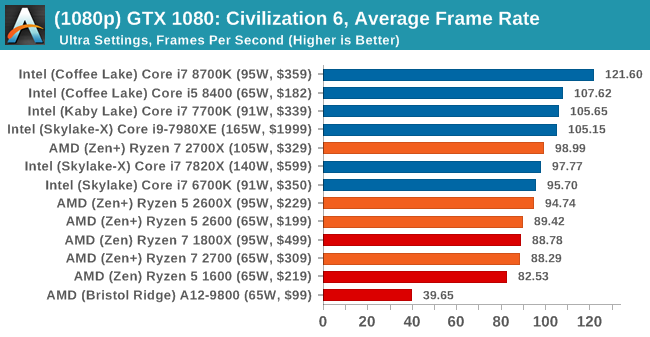

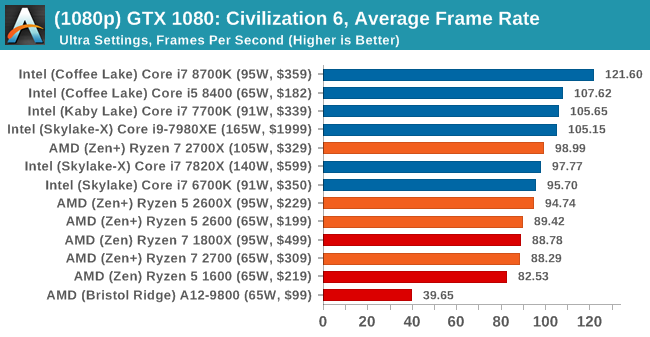

MSI GTX 1080 Gaming 8G Performance (1080p, 4K, 8K, 16K)

545 Comments


bsp2020 - Thursday, April 19, 2018 - link

Was AMD's recently announced Spectre mitigation used in the testing? I'm sorry if it was mentioned in the article. Too long and still in the process of reading. I'm a big fan of AMD but want to make sure the comparison is apples to apples. BTW, does anyone have link to performance impact analysis of AMD's Spectre mitigation?

fallaha56 - Thursday, April 19, 2018 - link

Yep, X470 is microcode patched. This article as it stands is Intel Fanboi stuff

fallaha56 - Thursday, April 19, 2018 - link

As in the Toms article

SaturnusDK - Thursday, April 19, 2018 - link

Maybe he didn't notice that the tests are at stock speeds?

DCide - Friday, April 20, 2018 - link

I can't find any other site using a BIOS as recent as the 0508 version you used (on the ASUS Crosshair VII Hero). Most sites are using older versions. These days, BIOS updates surrounding processor launches make significant performance differences. We've seen this with every Intel and AMD CPU launch since the original Ryzen.

Shaheen Misra - Sunday, April 22, 2018 - link

Hi , im looking to gain some insight into your testing methods. Could you please explain why you test at such high graphics settings? Im sure you have previously stated the reasons but i am not familiar with them. My understanding has always been that this creates a graphics bottleneck?

Targon - Monday, April 23, 2018 - link

When you consider that people want to see benchmark results how THEY would play the games or do work, it makes sense to focus on that sort of thing. Who plays at a 720p resolution? Yes, it may show CPU performance, or eliminate the GPU being the limiting factor, but if you have a Geforce 1080 GTX, 1080p, 1440, and then 4k performance is what people will actually game at.

The ability to actually run video cards at or near their ability is also important, which can be a platform issue. If you see every CPU showing the same numbers with the same video card, then yea, it makes sense to go for the lower settings/resolutions, but since there ARE differences between the processors, running these tests the way they are makes more sense from a "these are similar to what people will see in the real world" perspective.

FlashYoshi - Thursday, April 19, 2018 - link

Intel CPUs were tested with Meltdown/Spectre patches, that's probably the discrepancy you're seeing.

MuhOo - Thursday, April 19, 2018 - link

Computerbase and pcgameshardware also used the patched... every other site has completely different results from anandtech

sor - Thursday, April 19, 2018 - link

Fwiw I took five minutes to see what you guys are talking about. To me it looks like Toms is screwed up. If you look at the time graphs it looks to me like it’s the purple line on top most of the time, but the summaries have that CPU in 3rd or 4th place. E.G. https://img.purch.com/r/711x457/aHR0cDovL21lZGlhLm...

At any rate things are generally damn close, and they largely aren’t even benchmarking the same games, so I don’t understand why a few people are complaining.