The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Translating to IPC: All This for 3%?

Contrary to popular belief, increasing IPC is difficult. Attempting to ensure that each execution port is fed every cycle requires wide decoders, large out-of-order queues, fast caches, and the right execution port configuration. It might sound easy to pile it all on, but both physics and economics get in the way: the chip still has to be thermally efficient, and it has to make money for the company. Every generational design update goes for what is called the ‘low-hanging fruit’: the identified changes that give the most gain for the smallest effort. Reducing cache latency is not always the easiest task, and to non-semiconductor engineers (myself included), it sounds like a lot of work for a small gain.

For our IPC testing, we use the following rules. Each CPU is allocated four cores, without extra threading, and power modes are disabled such that the cores run at a specific frequency only. The DRAM is set to what the processor supports, so in the case of the new CPUs, that is DDR4-2933, and for the previous generation, DDR4-2666. I have recently seen threads that dispute whether this is fair: this is an IPC test, not an instruction efficiency test. The official DRAM support is part of the hardware specifications, just as much as the size of the caches or the number of execution ports. Running the two CPUs at the same DRAM frequency gives an unfair advantage to one of them: one CPU ends up with its memory either overclocked or underclocked relative to its specification, which deviates from the intended design.

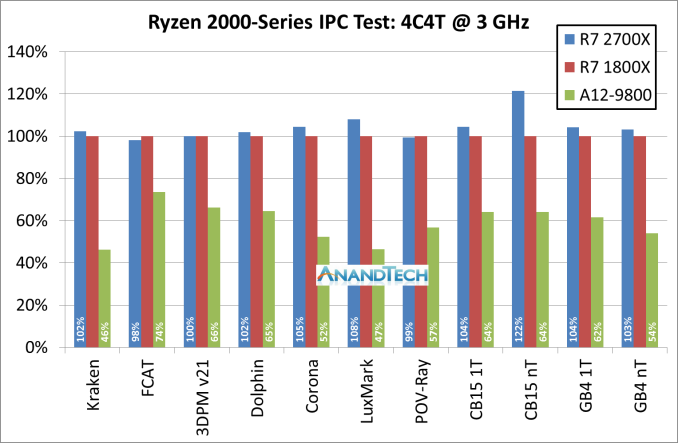

So in our test, we take the new Ryzen 7 2700X, the first generation Ryzen 7 1800X, and the pre-Zen Bristol Ridge based A12-9800, which runs on the AM4 platform and uses DDR4. We set each processor at four cores, no multi-threading, and 3.0 GHz, then ran through some of our tests.

For this graph we have set the first generation Ryzen 7 1800X as our 100% marker, with the blue columns as the Ryzen 7 2700X. The problem with trying to identify a 3% IPC increase is that 3% could easily fall within the noise of a benchmark run: if the cache is not fully warmed before the run, the result can differ. As shown above, a good number of tests fall in that +/- 2% range.
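To make the noise problem concrete, here is a minimal sketch of one way to check whether a measured gain stands out from run-to-run variation. It is not the procedure used for the graph above: the function names, the five-run samples, and the "gain must exceed twice the relative spread" rule of thumb are all assumptions for illustration, and the scores are made up.

```python
from statistics import mean, stdev

def relative_noise(runs):
    """Run-to-run spread as a fraction of the mean (coefficient of variation)."""
    return stdev(runs) / mean(runs)

def gain_exceeds_noise(baseline_runs, new_runs, k=2.0):
    """Compare the mean gain against k times the larger relative spread.

    This is a rough rule of thumb (an assumption), not a formal statistical test.
    """
    gain = mean(new_runs) / mean(baseline_runs) - 1.0
    noise = max(relative_noise(baseline_runs), relative_noise(new_runs))
    return gain, noise, abs(gain) > k * noise

# Hypothetical scores for illustration only, not measured data
baseline = [100.0, 101.5, 98.5, 100.5, 99.5]
new = [103.0, 104.5, 101.5, 103.5, 102.5]

gain, noise, significant = gain_exceeds_noise(baseline, new)
print(f"gain={gain:+.1%} noise={noise:.1%} exceeds_noise={significant}")
```

With a spread of around 1% per run, a 3% gain only just clears the threshold, which is why single-run comparisons at this scale are hard to trust.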

However, for compute-heavy tasks, there are 3-4% benefits: Corona, LuxMark, Cinebench and Geekbench are the ones here. We haven’t included the Geekbench sub-test results in the graph above, but most of those fall into the 2-5% category for gains.

If we take out the Cinebench R15 nT result and the Geekbench memory tests, the average of all of the tests comes out to a +3.1% gain for the new Ryzen 7 2700X. That sounds bang on the money for what AMD stated it would do.
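The normalization and averaging described above can be sketched as follows. This is an illustration under stated assumptions: the test names and scores are hypothetical, higher scores are assumed to be better, and the average is assumed to be a plain arithmetic mean (the text does not say which kind of average was used).

```python
from statistics import mean

def normalize(scores, baseline):
    """Express each score as a percentage of the baseline CPU's score."""
    return {test: 100.0 * scores[test] / baseline[test] for test in scores}

def average_gain(normalized, exclude=()):
    """Arithmetic mean of the percentage gains over baseline, skipping excluded tests."""
    kept = [pct - 100.0 for test, pct in normalized.items() if test not in exclude]
    return mean(kept)

# Hypothetical scores for illustration only, not the review's data
baseline_scores = {"test_a": 200.0, "test_b": 50.0, "test_c": 1000.0, "outlier_nt": 400.0}
new_scores = {"test_a": 206.0, "test_b": 51.0, "test_c": 1040.0, "outlier_nt": 488.0}

normalized = normalize(new_scores, baseline_scores)
print(f"all tests:        {average_gain(normalized):+.2f}%")
print(f"outlier excluded: {average_gain(normalized, exclude={'outlier_nt'}):+.2f}%")
```

A single 20%+ result pulls the mean well away from the other tests, which is the reason for reporting the average with that result set aside.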

Cycling back to that Cinebench R15 nT result, which showed a 22% gain: we also ran some other IPC testing at 3.0 GHz but with 8C/16T (which we couldn’t compare to Bristol Ridge), and a few other tests also showed 20%+ gains. This is probably a sign that AMD might have also adjusted how it manages its simultaneous multi-threading. This requires further testing.

AMD’s Overall 10% Increase

With some of the benefits of the 12LP manufacturing process, a few editors internally have questioned exactly why AMD hasn’t redesigned certain elements of the microarchitecture to take advantage. Ultimately it would appear that the ‘free’ frequency boost is worth just putting the same design in – as mentioned previously, the 12LP design is based on 14LPP with performance bump improvements. In the past it might not have been mentioned as a separate product line. So pushing through the same design is an easy win, allowing the teams to focus on the next major core redesign.

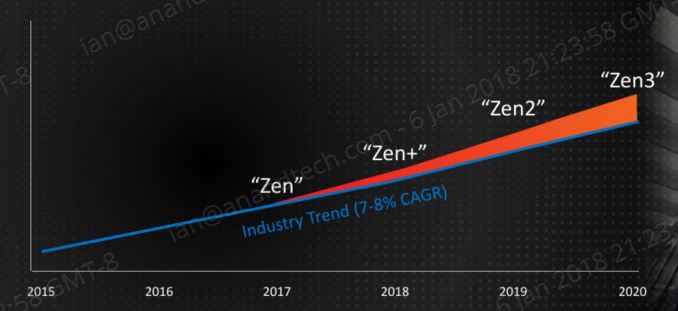

That all being said, AMD has already stated its intentions for the Zen+ core design. Rolling back to CES at the beginning of the year, AMD stated that it wanted Zen+ and future products to go above and beyond the ‘industry standard’ of a 7-8% performance gain each year.

Clearly 3% IPC is not enough, so AMD is combining that gain with the +250 MHz increase, which is about another 6% in peak frequency, and with better turbo performance from Precision Boost 2 / XFR 2. Together this comes to about 10%, on paper at least. Benchmarks to follow.
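As a quick check on the paper math, here is a small sketch of how the two gains compound. It assumes performance scales as IPC multiplied by frequency, which is an idealization: real workloads rarely scale perfectly with clock speed, and the turbo behavior of Precision Boost 2 / XFR 2 is not modeled. The function name is hypothetical.

```python
def combined_gain(ipc_gain, freq_gain):
    """Compound two fractional gains, assuming performance = IPC x frequency."""
    return (1.0 + ipc_gain) * (1.0 + freq_gain) - 1.0

# Figures from the text: about 3% IPC and about 6% peak frequency
total = combined_gain(0.03, 0.06)
print(f"combined gain: {total:.1%}")
```

Under that assumption the two figures multiply out to a little over 9%, so the remaining distance to 10% would have to come from the improved turbo behavior.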

545 Comments

MDD1963 - Friday, April 20, 2018 - link

The Gskill 32 GB kit (2 x 16 GB/3200 MHz) I bought 13 months ago for $205 is now $400-ish...

andychow - Friday, April 20, 2018 - link

Ridiculous comment. 7 years ago I bought 4x8 GB of RAM for $110. That same kit, from the same company, seven years later, now sells for $300. 4x16GB kits are around $800. Memory prices aren't at all the way they've always been. There is clear collusion going on. Micron and SK Hynix have both seen their stock price increase 400% in the last two years. 400%!!!!!

The price of RAM just keeps increasing and increasing, and the 3 manufacturers are in no hurry to increase supply. They are even responsible for the lack of GPUs, because they are the bottleneck.

spdragoo - Friday, April 20, 2018 - link

You mean a price history like this?

https://camelcamelcamel.com/Corsair-Vengeance-4x8G...

Or perhaps, as mentioned here (https://www.techpowerup.com/forums/threads/what-ha...), how the previous-generation RAM tends to go up in price once the manufacturers switch to the next-gen?

Since I KNOW you're not going to claim that you bought DDR4 RAM 7 YEARS AGO (when it barely came out 4 years ago)...

Alexvrb - Friday, April 20, 2018 - link

I love how you ignored everyone that already smushed your talking points to focus on a post which was likely just poorly worded.

RAM prices have traditionally gone DOWN over time for the same capacity, as density improves. But recently the limited supply has completely blown up the normal price-per-capacity-over-time curve. Profit margins are massive. Saying this is "the same as always" is beyond comprehension. If it wasn't for your reply I would have sworn you were simply trolling.

Anyway this is what a lack of genuine competition looks like. NAND market isn't nearly as bad but there's supply problems there too.

vext - Friday, April 20, 2018 - link

True. When prices double with no explanation, there must be collusion.

The same thing has happened with videocards. I have great doubts about bitcoin mining as a driver for those price increases. If mining was so profitable, you would think there would be a mad scramble to design cards specifically for mining. Instead the load falls on the DIY consumer.

Something very odd is happening.

Alexvrb - Friday, April 20, 2018 - link

They DO design things specifically for mining. It's called an ASIC miner. Unfortunately for us, some currencies are ASIC-resistant, and in some cases they can potentially change the algorithm, which makes such (expensive!) development challenging.

Samus - Friday, April 20, 2018 - link

Yep. I went with 16GB in 2013-2014 just because I was like meh what difference does $50-$60 make when building a $1000+ PC. These days I do a double take when choosing between 8GB and 16GB for PC's I build. Even hardcore gaming PC's don't *NEED* more than 8GB, so it's worth saving $100+

Memory prices have nearly doubled in the last 5 years. Sure there is cheap ram, there always has been. But a kit of quality Gskill costs twice as much as a comparable kit of quality Gskill cost in 2012.

FireSnake - Thursday, April 19, 2018 - link

Awesome, as always. Happy reading! :)

Chris113q - Thursday, April 19, 2018 - link

Your gaming benchmarks results are garbage and every other reviewer got different results than you did. I hope no one takes this review seriously as the data is simply incorrect and misleading.

Ian Cutress - Thursday, April 19, 2018 - link

Always glad to see you offer links to show the differences.

We ran our tests on a fresh version of RS3 + April Security Updates + Meltdown/Spectre patches using our standard testing implementation.