The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Improvements to the Cache Hierarchy

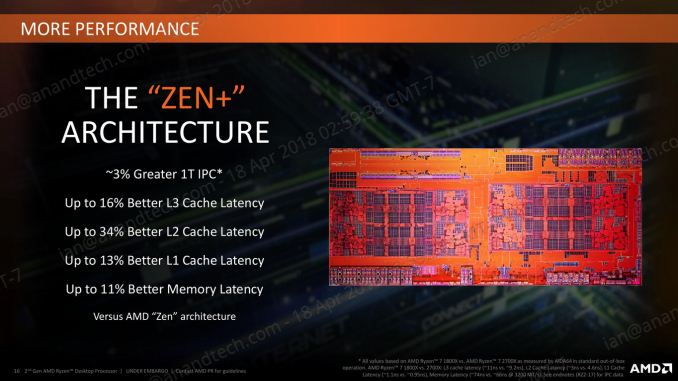

The biggest under-the-hood change for the Ryzen 2000-series processors is in cache latency. AMD claims that it was able to knock one cycle off the L1 and L2 caches and several cycles off the L3, and to improve DRAM performance. Because pure core IPC is intimately intertwined with the caches (the size, the latency, the bandwidth), these new numbers lead AMD to claim that the new processors offer a +3% IPC gain over the previous generation.

The numbers AMD gives are:

- 13% Better L1 Latency (1.10ns vs 0.95ns)

- 34% Better L2 Latency (4.6ns vs 3.0ns)

- 16% Better L3 Latency (11.0ns vs 9.2ns)

- 11% Better Memory Latency (74ns vs 66ns at DDR4-3200)

- Increased DRAM Frequency Support (DDR4-2666 vs DDR4-2933)
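As a quick sanity check on the list above, the quoted percentages can be compared against the nanosecond figures. This is a minimal sketch that assumes each percentage is the latency reduction relative to the older (first) figure; the slide deck's actual method is not stated, and the names used here are illustrative only.

```python
# AMD's quoted latencies in nanoseconds: (previous generation, Ryzen 2000-series).
# Assumption: the first figure in each pair is the older part.
quoted_ns = {
    "L1": (1.10, 0.95),
    "L2": (4.6, 3.0),
    "L3": (11.0, 9.2),
    "DRAM": (74.0, 66.0),
}


def reduction_percent(old_ns, new_ns):
    """Percentage drop in latency relative to the older figure."""
    return (old_ns - new_ns) / old_ns * 100.0


reductions = {
    level: reduction_percent(old, new) for level, (old, new) in quoted_ns.items()
}

for level, pct in reductions.items():
    print(f"{level}: {pct:.1f}% lower latency")
```

Under that assumption the results land within a point of the quoted 13%, 34%, 16% and 11%, although the rounding does not look fully consistent from line to line.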

It is interesting that in the official slide deck AMD quotes latency measured as time, although in private conversations in our briefing it was discussed in terms of clock cycles. Ultimately latency measured as time can take advantage of other internal enhancements; however a pure engineer prefers to discuss clock cycles.

Naturally we went ahead to test the two aspects of this equation: are the cache metrics actually lower, and do we get an IPC uplift?

Cache Me Ousside, How Bow Dah?

For our testing, we use a memory latency checker over the stride range of the cache hierarchy of a single core. For this test we used the following:

- Ryzen 7 2700X (Zen+)

- Ryzen 5 2400G (Zen APU)

- Ryzen 7 1800X (Zen)

- Intel Core i7-8700K (Coffee Lake)

- Intel Core i7-7700K (Kaby Lake)
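The article does not say how its latency checker works internally. A common technique for this kind of test is pointer chasing: walk a randomly ordered cycle of dependent loads through a buffer of a given size, so each access must wait for the previous one. The sketch below only illustrates that structure. The function names are hypothetical, and because the Python interpreter's overhead dwarfs real cache latencies, the timings it prints are not comparable to the graphs here. A real tool would be written in C or assembly.

```python
import random
import time


def build_chain(n_slots, seed=0):
    """Return a permutation that forms one cycle through all slots (Sattolo's algorithm)."""
    rng = random.Random(seed)
    chain = list(range(n_slots))
    for i in range(n_slots - 1, 0, -1):
        j = rng.randrange(i)  # j < i, which guarantees a single cycle
        chain[i], chain[j] = chain[j], chain[i]
    return chain


def chase(chain, steps):
    """Follow the chain for `steps` dependent lookups; return (final index, ns per step)."""
    idx = 0
    start = time.perf_counter_ns()
    for _ in range(steps):
        idx = chain[idx]  # each lookup depends on the result of the previous one
    elapsed = time.perf_counter_ns() - start
    return idx, elapsed / steps


# Sweep a few buffer sizes, as a latency checker would sweep across the cache hierarchy.
for n_slots in (1 << 10, 1 << 14, 1 << 16):
    _, ns_per_step = chase(build_chain(n_slots), steps=n_slots)
    print(f"{n_slots} slots: {ns_per_step:.1f} ns per step (interpreter overhead included)")
```

The single random cycle matters because it forces every slot to be visited once per lap in an order that simple hardware prefetchers are unlikely to predict.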

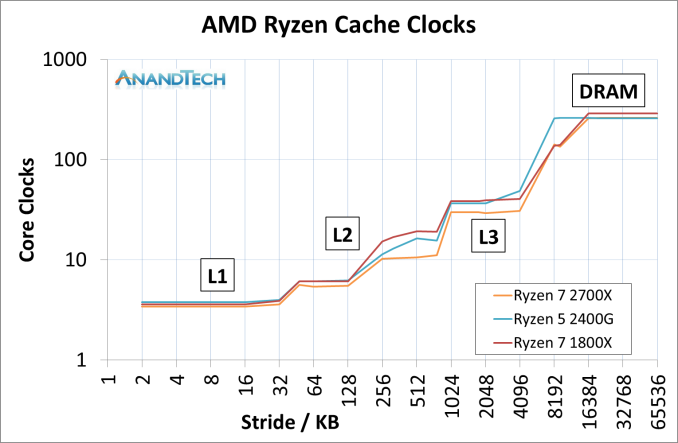

The most obvious comparison is between the AMD processors. Here we have the Ryzen 7 1800X from the initial launch, the Ryzen 5 2400G APU that pairs Zen cores with Vega graphics, and the new Ryzen 7 2700X processor.

This graph is logarithmic in both axes.

This graph shows that in every phase of the cache design, the newest Ryzen 7 2700X requires fewer core clocks. The biggest difference is on the L2 cache latency, but L3 has a sizeable gain as well. The reason that the L2 gain is so large, especially between the 1800X and 2700X, is an interesting story.

When AMD first launched the Ryzen 7 1800X, the L2 latency was tested and listed at 17 clocks. This was a little high: it turns out that the engineers had initially intended the L2 latency to be 12 clocks, but ran out of time to tune the firmware and layout before sending the design off to be manufactured, leaving 17 cycles as the best compromise that the design could achieve without causing issues. With Threadripper and the Ryzen APUs, AMD tweaked the design enough to hit an L2 latency of 12 cycles, which was not specifically promoted at the time despite the benefits it provides. Now with the Ryzen 2000-series, AMD has reduced it further to 11 cycles. We were told that this was due to both the new manufacturing process and additional tweaks made to ensure signal coherency. In our testing, we actually saw an average L2 latency of 10.4 cycles, down from 16.9 cycles on the Ryzen 7 1800X.

The L3 difference is a little unexpected: AMD stated a 16% better latency: 11.0 ns to 9.2 ns. We saw a change from 10.7 ns to 8.1 ns, which was a drop from 39 cycles to 30 cycles.
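To relate the two units, latency in cycles is latency in nanoseconds multiplied by the core clock in GHz. The sketch below uses only the measured L3 figures quoted above to back out the clock each pair implies and to compare the relative drops. The function name is illustrative, and because the cycle counts are rounded, the implied clocks are approximate.

```python
def implied_clock_ghz(cycles, latency_ns):
    """Clock frequency (GHz) at which `cycles` core clocks take `latency_ns` nanoseconds."""
    return cycles / latency_ns


def drop_percent(old, new):
    """Percentage reduction relative to the older value."""
    return (old - new) / old * 100.0


# Measured L3 figures from the text: (cycles, nanoseconds)
l3_1800x = (39, 10.7)
l3_2700x = (30, 8.1)

clock_1800x = implied_clock_ghz(*l3_1800x)
clock_2700x = implied_clock_ghz(*l3_2700x)

ns_drop = drop_percent(l3_1800x[1], l3_2700x[1])
cycle_drop = drop_percent(l3_1800x[0], l3_2700x[0])

print(f"Implied clocks: {clock_1800x:.2f} GHz (1800X), {clock_2700x:.2f} GHz (2700X)")
print(f"L3 latency drop: {ns_drop:.1f}% in time, {cycle_drop:.1f}% in cycles")
```

On these figures the measured drop is roughly 24% in time and 23% in cycles, both larger than the 16% AMD quoted.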

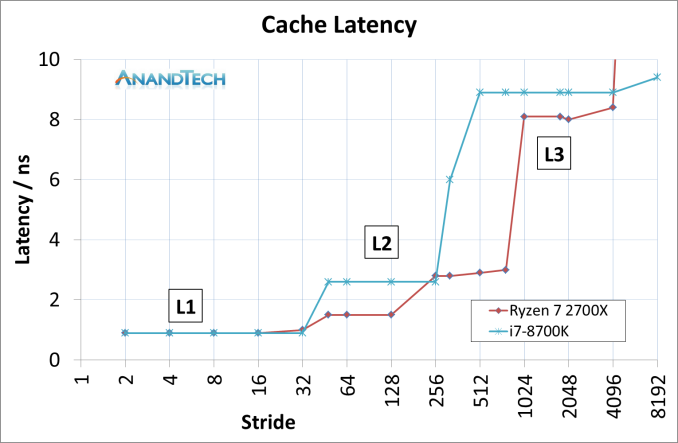

Of course, we could not go without comparing AMD to Intel. This is where it got very interesting. Now the cache configurations between the Ryzen 7 2700X and Core i7-8700K are different:

| CPU Cache uArch Comparison | AMD Zen (Ryzen 1000) / Zen+ (Ryzen 2000) | Intel Kaby Lake (Core 7000) / Coffee Lake (Core 8000) |
|---|---|---|
| L1-I Size | 64 KB/core | 32 KB/core |
| L1-I Assoc | 4-way | 8-way |
| L1-D Size | 32 KB/core | 32 KB/core |
| L1-D Assoc | 8-way | 8-way |
| L2 Size | 512 KB/core | 256 KB/core |
| L2 Assoc | 8-way | 4-way |
| L3 Size | 8 MB/CCX (2 MB/core) | 2 MB/core |
| L3 Assoc | 16-way | 16-way |
| L3 Type | Victim | Write-back |

AMD has a larger L2 cache, however the AMD L3 cache is a non-inclusive victim cache, which means it cannot be pre-fetched into unlike the Intel L3 cache.
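One way to read the size and associativity rows together is to work out how many sets each cache has, since sets = size / (ways × line size). The sketch below assumes 64-byte cache lines, which the article does not state, and treats each cache as one monolithic array, ignoring any slicing or banking. The dictionary names are illustrative.

```python
LINE_BYTES = 64  # assumption: 64-byte cache lines; the article does not give the line size
KB = 1024
MB = 1024 * KB


def num_sets(size_bytes, ways, line_bytes=LINE_BYTES):
    """Number of sets in a set-associative cache: size / (ways * line size)."""
    return size_bytes // (ways * line_bytes)


# (size in bytes, associativity), taken from the comparison table
amd = {
    "L1-I": (64 * KB, 4),
    "L1-D": (32 * KB, 8),
    "L2": (512 * KB, 8),
    "L3 (8 MB/CCX)": (8 * MB, 16),
}
intel = {
    "L1-I": (32 * KB, 8),
    "L1-D": (32 * KB, 8),
    "L2": (256 * KB, 4),
    "L3 (2 MB/core)": (2 * MB, 16),
}

amd_sets = {name: num_sets(size, ways) for name, (size, ways) in amd.items()}
intel_sets = {name: num_sets(size, ways) for name, (size, ways) in intel.items()}

for vendor, sets in (("AMD", amd_sets), ("Intel", intel_sets)):
    for name, count in sets.items():
        print(f"{vendor} {name}: {count} sets")
```

Under these assumptions both L2 caches have the same number of sets. AMD's L2 is twice the size because it has twice the ways.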

This was an unexpected result, but we can see clearly that AMD has a latency timing advantage across the L2 and L3 caches. There is a sizable difference in DRAM latency; however, the metrics that matter most for core performance are in the lower caches.

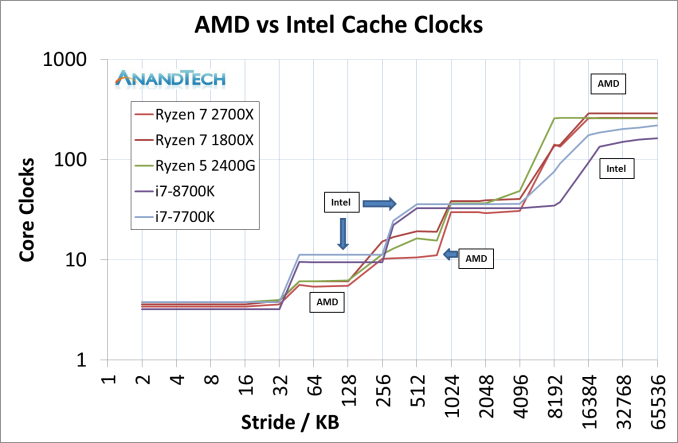

We can expand this out to include the three AMD chips, as well as Intel’s Coffee Lake and Kaby Lake cores.

This is a graph using cycles rather than timing latency: Intel has a small L1 advantage, however the larger L2 caches in AMD’s Zen designs mean that Intel has to hit the higher latency L3 earlier. Intel makes quick work of DRAM cycle latency however.
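The point about Intel reaching L3 earlier can be illustrated with a simple capacity lookup. This is a simplified sketch using the L1-D and L2 sizes from the comparison table. It assumes a working set is served by the first level large enough to hold it, and ignores associativity conflicts, instruction footprint, and replacement effects. The names are illustrative.

```python
KB = 1024

# Per-core data cache capacities from the comparison table, smallest first
amd_zen = [("L1-D", 32 * KB), ("L2", 512 * KB)]
intel_core = [("L1-D", 32 * KB), ("L2", 256 * KB)]


def serving_level(working_set_bytes, levels):
    """Return the first cache level whose capacity covers the working set."""
    for name, capacity in levels:
        if working_set_bytes <= capacity:
            return name
    return "L3 or DRAM"


for size_kb in (16, 128, 384, 1024):
    amd_level = serving_level(size_kb * KB, amd_zen)
    intel_level = serving_level(size_kb * KB, intel_core)
    print(f"{size_kb:>5} KB working set -> AMD: {amd_level}, Intel: {intel_level}")
```

For a working set between 256 KB and 512 KB, this model keeps the AMD core in L2 while the Intel core has already spilled to L3. That is the region where the curves in the graph diverge.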

545 Comments

MDD1963 - Friday, April 20, 2018 - link

The Gskill 32 GB kit (2 x 16 GB/3200 MHz) I bought 13 months ago for $205 is now $400-ish...

andychow - Friday, April 20, 2018 - link

Ridiculous comment. 7 years ago I bought 4x8 GB of RAM for $110. That same kit, from the same company, seven years later, now sells for $300. 4x16GB kits are around $800. Memory prices aren't at all the way they've always been. There is clear collusion going on. Micron and SK Hynix have both seen their stock price increase 400% in the last two years. 400%!!!!!

The price of RAM just keeps increasing and increasing, and the 3 manufacturers are in no hurry to increase supply. They are even responsible for the lack of GPUs, because they are the bottleneck.

spdragoo - Friday, April 20, 2018 - link

You mean a price history like this? https://camelcamelcamel.com/Corsair-Vengeance-4x8G...

Or perhaps, as mentioned here (https://www.techpowerup.com/forums/threads/what-ha...), how the previous-generation RAM tends to go up in price once the manufacturers switch to the next-gen?

Since I KNOW you're not going to claim that you bought DDR4 RAM 7 YEARS AGO (when it barely came out 4 years ago)...

Alexvrb - Friday, April 20, 2018 - link

I love how you ignored everyone that already smushed your talking points to focus on a post which was likely just poorly worded.

RAM prices have traditionally gone DOWN over time for the same capacity, as density improves. But recently the limited supply has completely blown up the normal price-per-capacity-over-time curve. Profit margins are massive. Saying this is "the same as always" is beyond comprehension. If it wasn't for your reply I would have sworn you were simply trolling.

Anyway this is what a lack of genuine competition looks like. NAND market isn't nearly as bad but there's supply problems there too.

vext - Friday, April 20, 2018 - link

True. When prices double with no explanation, there must be collusion.

The same thing has happened with videocards. I have great doubts about bitcoin mining as a driver for those price increases. If mining was so profitable, you would think there would be a mad scramble to design cards specifically for mining. Instead the load falls on the DIY consumer.

Something very odd is happening.

Alexvrb - Friday, April 20, 2018 - link

They DO design things specifically for mining. It's called an ASIC miner. Unfortunately for us, some currencies are ASIC-resistant, and in some cases they can potentially change the algorithm, which makes such (expensive!) development challenging.

Samus - Friday, April 20, 2018 - link

Yep. I went with 16GB in 2013-2014 just because I was like meh what difference does $50-$60 make when building a $1000+ PC. These days I do a double take when choosing between 8GB and 16GB for PC's I build. Even hardcore gaming PC's don't *NEED* more than 8GB, so it's worth saving $100+

Memory prices have nearly doubled in the last 5 years. Sure there is cheap ram, there always has been. But a kit of quality Gskill costs twice as much as a comparable kit of quality Gskill cost in 2012.

FireSnake - Thursday, April 19, 2018 - link

Awesome, as always. Happy reading! :)

Chris113q - Thursday, April 19, 2018 - link

Your gaming benchmarks results are garbage and every other reviewer got different results than you did. I hope no one takes this review seriously as the data is simply incorrect and misleading.

Ian Cutress - Thursday, April 19, 2018 - link

Always glad to see you offer links to show the differences.

We ran our tests on a fresh version of RS3 + April Security Updates + Meltdown/Spectre patches using our standard testing implementation.