The Intel Optane SSD 800p (58GB & 118GB) Review: Almost The Right Size

by Billy Tallis on March 8, 2018 5:15 PM EST

Sequential Read Performance

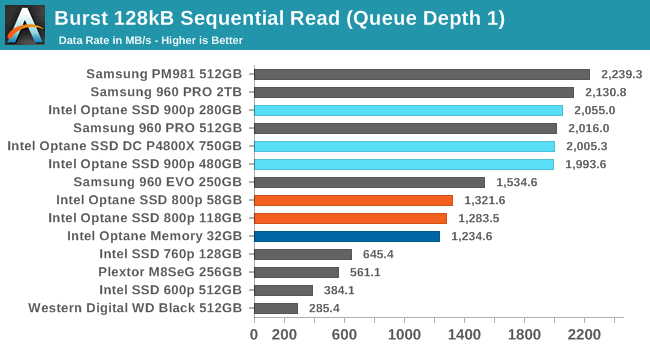

Our first test of sequential read performance uses short bursts of 128MB, issued as 128kB operations with no queuing. The test averages performance across eight bursts for a total of 1GB of data transferred from a drive containing 16GB of data. Between each burst the drive is given enough idle time to keep the overall duty cycle at 20%.
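The arithmetic behind this burst test can be sketched as below. This is only an illustration, not the actual test harness: the function name is hypothetical, binary units (1MB = 1024kB) are assumed, and the 20% duty cycle is interpreted as active time divided by total time.

```python
def burst_test_plan(burst_mb=128, op_kb=128, bursts=8, duty_cycle=0.20):
    """Derive per-burst operation count, total data, and idle-to-active ratio."""
    # Number of 128kB operations needed to move one 128MB burst (binary units assumed).
    ops_per_burst = (burst_mb * 1024) // op_kb
    # Total data moved across all bursts, in MB.
    total_mb = burst_mb * bursts
    # duty = active / (active + idle)  =>  idle / active = (1 - duty) / duty
    idle_per_active = (1 - duty_cycle) / duty_cycle
    return ops_per_burst, total_mb, idle_per_active


ops, total_mb, idle_ratio = burst_test_plan()
print(f"{ops} operations per burst, {total_mb} MB in total, "
      f"idle time is {idle_ratio:.1f}x the active time")
```

Under these assumptions each burst is 1024 operations, the eight bursts add up to the stated 1GB, and the drive idles for roughly four times as long as each burst takes.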

The QD1 burst sequential read performance of the Intel Optane SSD 800p drives is close to their rated maximum throughput, but they are far behind the 900p and the high-end Samsung drives that actually need more than two PCIe lanes.

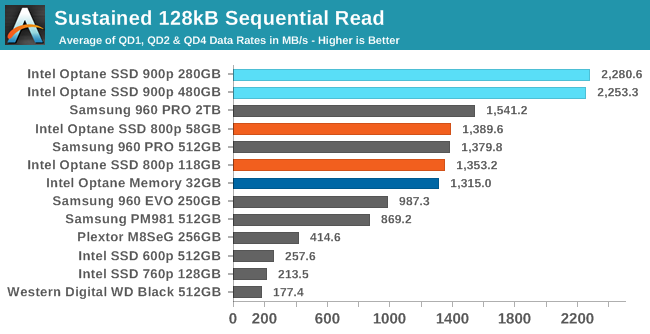

Our test of sustained sequential reads uses queue depths from 1 to 32, with the performance and power scores computed as the average of QD1, QD2 and QD4. Each queue depth is tested for up to one minute or 32GB transferred, from a drive containing 64GB of data.
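As a rough sketch of how such a score could be derived, the snippet below averages per-queue-depth results over QD1, QD2 and QD4 only. The function name and the throughput figures are hypothetical and chosen purely for illustration; they are not measurements from this review.

```python
from statistics import mean


def sustained_score(results_by_qd, scored_qds=(1, 2, 4)):
    """Average the results at the scored queue depths, ignoring the rest."""
    return mean(results_by_qd[qd] for qd in scored_qds)


# Hypothetical throughput in MB/s per queue depth (illustrative values only).
example = {1: 1000.0, 2: 1200.0, 4: 1250.0, 8: 1250.0, 16: 1250.0, 32: 1250.0}
score = sustained_score(example)
print(f"score: {score:.1f} MB/s")
```

Restricting the average to low queue depths weights the score toward the range the scoring rule above singles out, while the higher queue depths still appear in the scaling charts.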

On the longer sequential read test, the Samsung NVMe SSDs fall down to the level of the Optane SSD 800p, because the flash-based SSDs are slowed down by some of the data fragmentation left over from the random write test. The Optane SSDs performed those writes as in-place modifications and thus didn't incur any fragmentation. This leaves the Samsung 960 PRO 2TB barely faster than the 800p, while the 900p runs away with its lead.

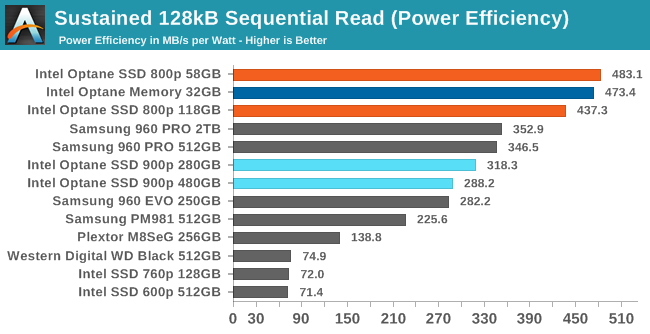

The Optane SSD 800p has the clear lead in power efficiency, as its second-tier performance comes with far lower power consumption than the top-performing 900p.

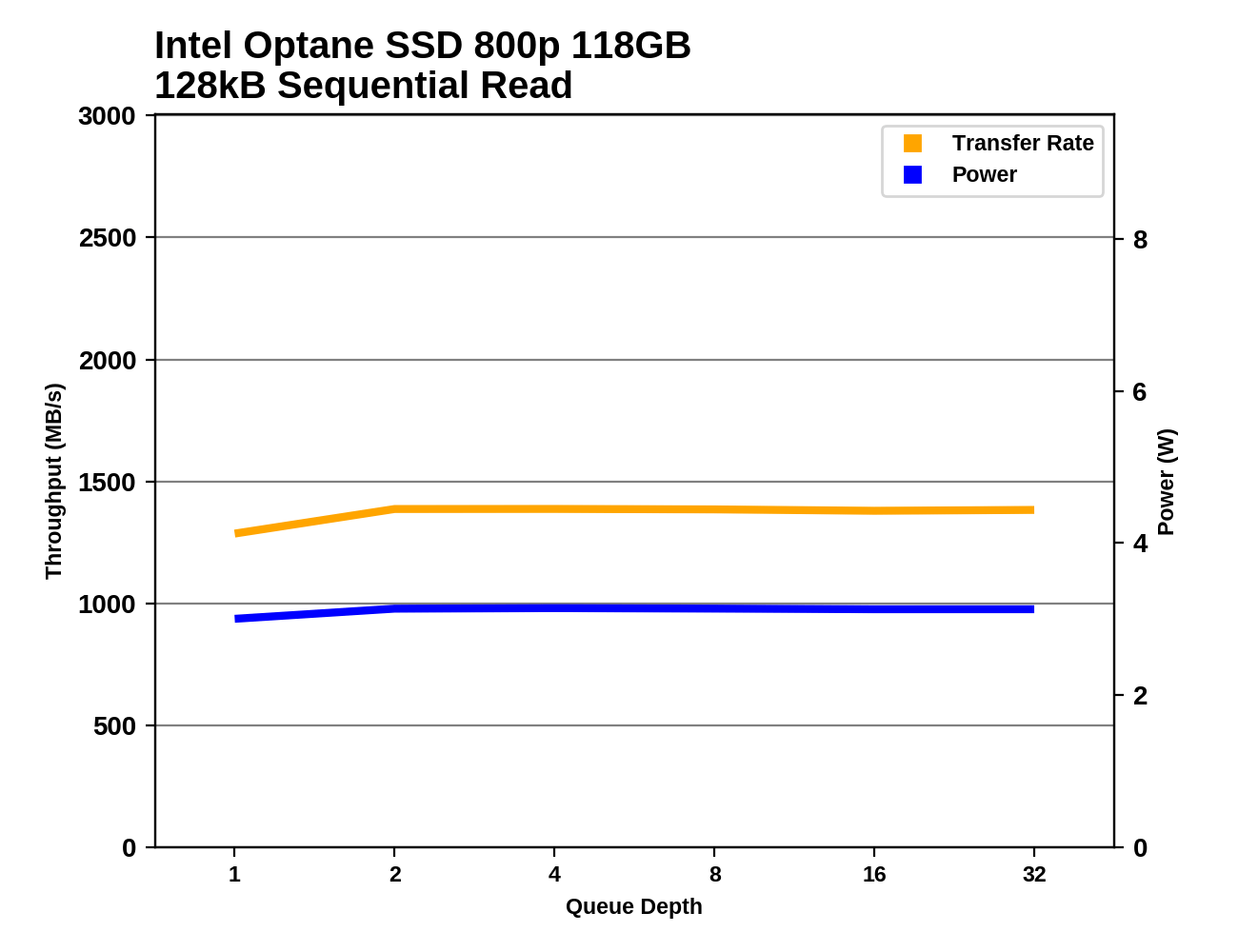

There are no big surprises with the queue depth scaling; the 800p's sequential reads are slightly faster at QD2 than QD1, but there's no further improvement beyond that. The 800p is easily staying within its 3.75 W rated maximum power draw.

Sequential Write Performance

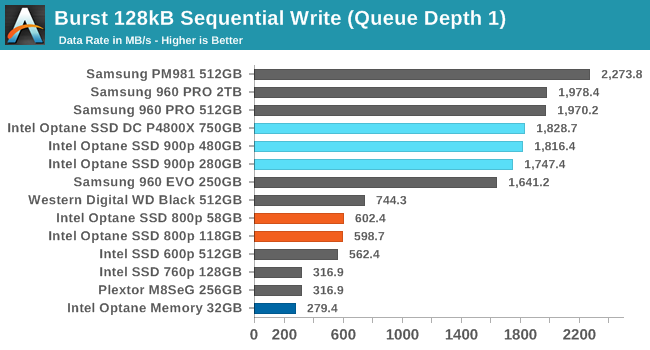

Our test of sequential write burst performance is structured identically to the sequential read burst performance test save for the direction of the data transfer. Each burst writes 128MB as 128kB operations issued at QD1, for a total of 1GB of data written to a drive containing 16GB of data.

The burst sequential write speed of the Intel Optane SSD 800p is no better than the low-end flash-based NVMe SSDs. Without any write caching mechanism in the controller, the fundamental nature of 3D XPoint write speeds shows through. The 900p overcomes this by using a 7-channel controller, but that design doesn't fit within the M.2 form factor.

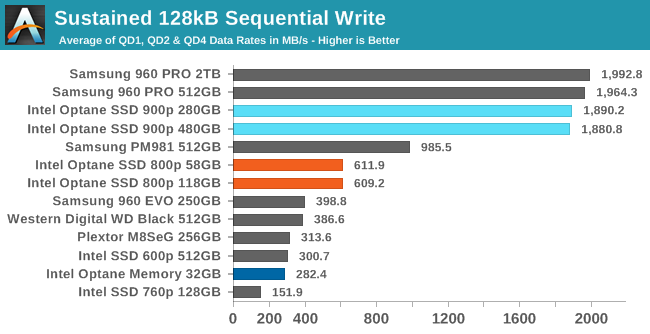

Our test of sustained sequential writes is structured identically to our sustained sequential read test, save for the direction of the data transfers. Queue depths range from 1 to 32 and each queue depth is tested for up to one minute or 32GB, followed by up to one minute of idle time for the drive to cool off and perform garbage collection. The test is confined to a 64GB span of the drive.
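The "one minute or 32GB" limit means fast drives stop on the data cap and slow drives stop on the time cap. A small sketch of that stopping rule follows; the function names are hypothetical and 1GB is assumed to be 1024MB.

```python
def phase_duration_s(throughput_mb_s, time_limit_s=60.0, data_limit_gb=32):
    """Seconds one queue-depth phase runs before hitting either limit."""
    seconds_to_data_limit = data_limit_gb * 1024 / throughput_mb_s
    return min(time_limit_s, seconds_to_data_limit)


def crossover_mb_s(time_limit_s=60.0, data_limit_gb=32):
    """Throughput at which the time cap and the data cap are hit together."""
    return data_limit_gb * 1024 / time_limit_s


print(f"crossover: {crossover_mb_s():.0f} MB/s")
print(f"phase at 300 MB/s:  {phase_duration_s(300):.1f} s")
print(f"phase at 2048 MB/s: {phase_duration_s(2048):.1f} s")
```

Under these assumptions, a drive sustaining less than about 546 MB/s runs each phase for the full minute, while a faster drive finishes its 32GB early.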

The Optane SSD 800p looks better on the sustained sequential write test, as all the TLC-based SSDs run out of SLC cache and slow down dramatically, while the Optane SSDs keep delivering the exact same performance.

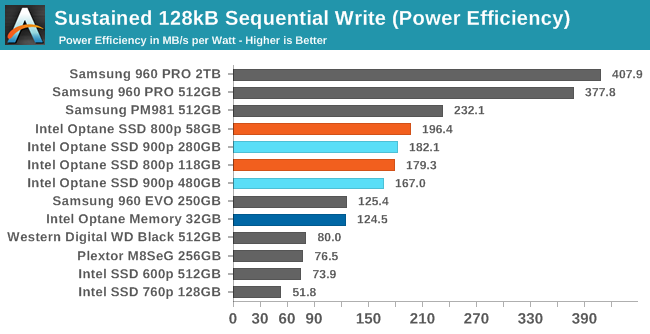

Despite their very different sequential write throughput, the Optane SSD 900p and 800p end up with very similar power efficiency on this test. The Samsung NVMe drives are even more efficient, but only the premium MLC-based 960 PRO has a large lead.

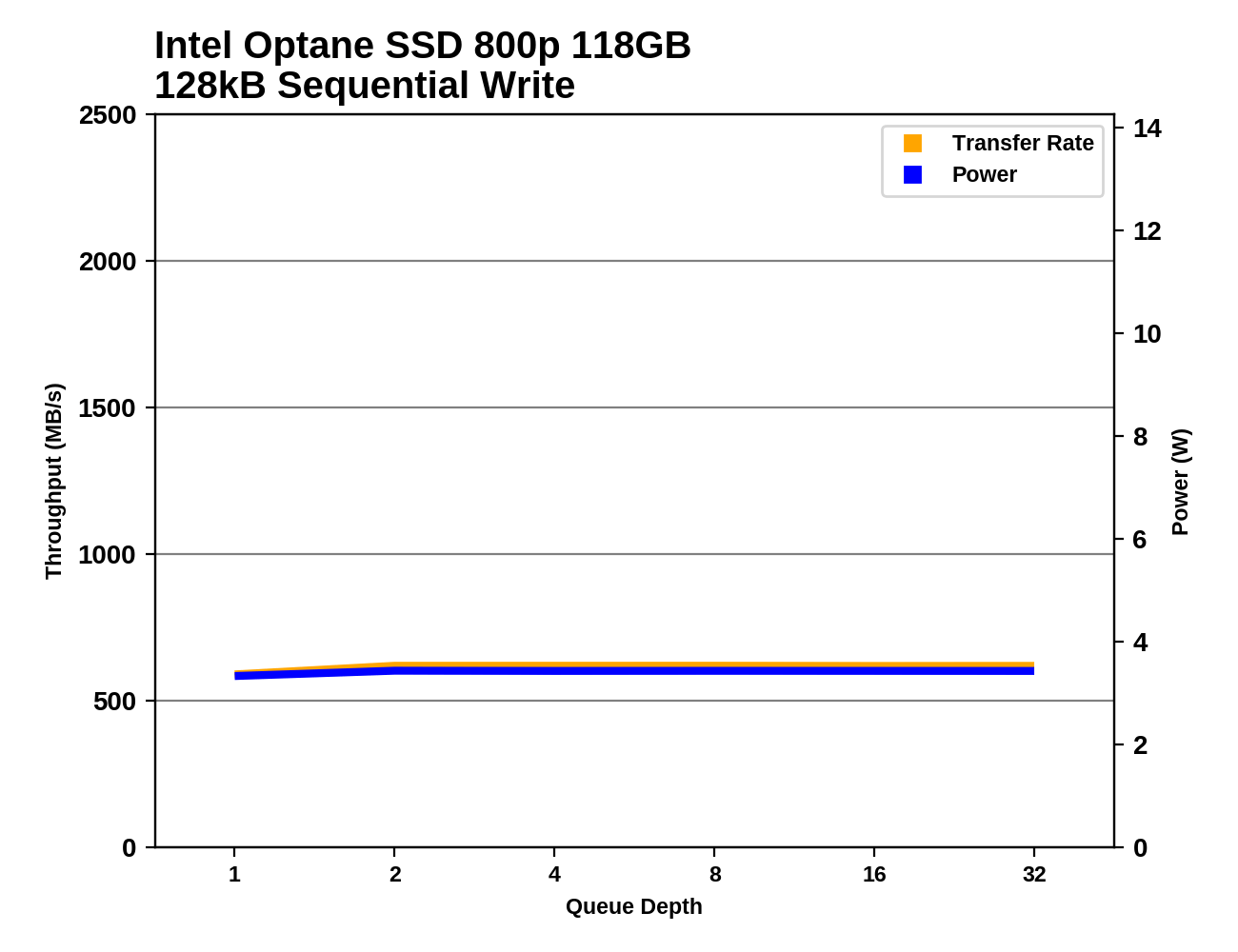

Almost all of the drives show no performance scaling with increasing queue depth, as large-block sequential writes can keep all the memory channels busy with only a little bit of buffering. The 900p needs at least two 128kB writes in flight to reach full throughput.

116 Comments

Reflex - Saturday, March 10, 2018 - link

I also think people forget how crappy & expensive gen1 and 2 SSD's were.

Drazick - Friday, March 9, 2018 - link

We really need those in U2 / SATA Express form. Desktop users shouldn't use M2 with all its thermal limitations.

jabber - Friday, March 9, 2018 - link

Whichever connector you use or whatever the thermals, once you go above 600MBps the real world performance difference is very hard to tell in most cases. We just need SATA4 and we can dump all these U2/SATA Express sockets. M.2 for compactness and SATA4 for everything else non Enterprise. Done.

Reflex - Friday, March 9, 2018 - link

U2 essentially is next gen SATA. There is no SATA4 on the way. SATA is at this point an 18 year old specification ripe for retirement. There is also nothing wrong with M.2 even in desktops. Heat spreaders aren't a big deal in that scenario. All that's inside a SATA drive is the same board you'd see in M.2 form factor more or less.

leexgx - Saturday, March 10, 2018 - link

Apart from that, you're limited to 0-2 slots per board (most come with 6 SATA ports).

I agree that a newer SATA that supports NVMe over it would be nice, but U2 would be nice too if anyone would adopt it, make the ports standard and make U2 SSDs.

jabber - Friday, March 9, 2018 - link

I am amazed that no one has decided to just do the logical thing and slap a 64GB Flash cache in a 4TB+ HDD and be done with it. One unit and done.

iter - Friday, March 9, 2018 - link

They have, seagate has a hybrid drive, not all that great really.

The reason is that caching algorithms suck. They are usually FIFO - first in first out, and don't take into account actual usage patterns. Meaning you get good performance only if your work is confined to a data set that doesn't exceed the cache. If you exceed it, it starts bringing in garbage, wearing down the flash over nothing. Go watch a movie, that you are only gonna watch once - it will cache that, because you accessed it. And now you have gigabytes of pointless writes to the cache, displacing data that actually made sense to be cached.

Which is why I personally prefer to have separate drives rather than cache. Because I know what can benefit from flash and what makes no sense there. Automatic tiering is pathetic, even in crazy expensive enterprise software.
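The cache pollution described in this comment can be illustrated with a toy simulation. This is a sketch under stated assumptions, not how any real hybrid drive's firmware works: the `simulate` function, the block names and the `admit_after` threshold (cache a block only once it has been read that many times) are all hypothetical.

```python
from collections import Counter, OrderedDict


def simulate(accesses, capacity, admit_after=1):
    """Return (hits, cache_writes) for a FIFO cache with a read-count admission gate."""
    cache = OrderedDict()  # insertion order doubles as FIFO eviction order
    reads = Counter()      # how many times each block has been read so far
    hits = 0
    writes = 0
    for block in accesses:
        reads[block] += 1
        if block in cache:
            hits += 1      # FIFO: a hit does not refresh the block's position
            continue
        if reads[block] >= admit_after:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the oldest inserted block
            cache[block] = True
            writes += 1    # every admission costs one write to the flash cache
    return hits, writes


hot = ["os", "browser", "office", "game"]          # blocks that are reread often
movie_a = [f"movie-a-{i}" for i in range(100)]     # streamed once, never reread
movie_b = [f"movie-b-{i}" for i in range(100)]
trace = hot * 3 + movie_a + hot * 3 + movie_b + hot * 3

fifo_hits, fifo_writes = simulate(trace, capacity=4)
gated_hits, gated_writes = simulate(trace, capacity=4, admit_after=2)
print(f"plain FIFO:     {fifo_hits} hits, {fifo_writes} cache writes")
print(f"admit on read 2: {gated_hits} hits, {gated_writes} cache writes")
```

In this toy trace the plain FIFO cache writes every streamed block to flash and has to re-learn the hot set after each movie, while the gated variant never admits the one-time reads at all. Real workloads are messier, so the numbers only show the direction of the effect.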

jabber - Friday, March 9, 2018 - link

Yeah I was using SSHD drives when they first came out but 8GB of flash doesn't really cut it. I'm sure after all this time 64GB costs the same as 8GB did back then (plus it would be space enough for several apps and data sets to be retained) and the algorithms will have improved. If Intel thinks caches for HDDs have legs then why not just combine them in one simple package?

wumpus - Friday, March 9, 2018 - link

Presumably, there's no market. People who buy spinning rust are either buying capacity (for media, and using SSD for the rest) or cheaping out and not buying SSDs.

What surprises me is that drives still include 64MB of DRAM, you would think that companies who bothered to make these drives would have switched to TLC (and pseudo-SLC) for their buffer/caches (writing on power off must be a pain). Good luck finding someone who would pay for the difference.

Intel managed to shove this tech into the chipsets (presumably a software driver that looked for the hardware flag, similar to RAID) in 2011-2012, but apparently dropped that soon afterward. Too bad, reserving 64GB of flash to cache a hard drive (no idea if you could do this with a RAID array) sounds like something that is still useful (not that you need the performance, just that the flash is so cheap). Just make sure the cache is set to "write through" [if this kills performance it shouldn't be on rust] to avoid doubling your chances of drive loss. Apparently the support costs weren't worth the bother.

leexgx - Saturday, March 10, 2018 - link

8GB should be plenty for an SSHD, and their current generation has cache eviction protection (which I think is 3rd gen). So say an LBA block is read 10 times: it will assume that is something you open often, or that it's a system file or a startup item, so 2-3GB of data will not get removed easily (Windows, Office, browsers and other startup items will always be in the NAND cache). The rest of the caching is dynamic: if a block has had more than 2-4 reads, it caches it to the NAND.

In the current generation SSHDs by Seagate (don't know how others do it) the cache is split into 3 sections, so the read cache has easy, a bit harder and very hard to evict tiers. With the first gen SSHDs from Seagate, just defragmenting the drive would end up evicting your normally used stuff, as any 2 reads would be cached right away. That does not happen any more.

If you expect it to make your games load faster you need to look elsewhere, as they are meant to boost commonly used applications, the OS and startup programs while still having the space for storage.

That said, I really dislike HDDs as boot drives. If a 250GB SSD did not cost £55, I'd put them in for free.