Western Digital Stuns Storage Industry with MAMR Breakthrough for Next-Gen HDDs

by Ganesh T S on October 12, 2017 8:00 AM EST

Yesterday, Western Digital announced a breakthrough in microwave-assisted magnetic recording (MAMR) that completely took the storage industry by surprise. The takeaway was that Western Digital would be using MAMR instead of HAMR for driving up hard drive capacities over the next decade. Before going into the specifics, it is beneficial to have some background on the motivation behind MAMR.

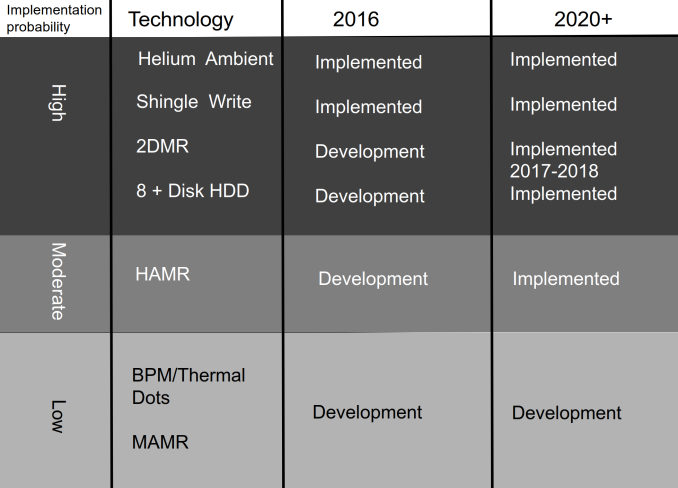

Hard drives may be on the way out for client computing systems, but they will continue to be the storage media of choice for datacenters. The Storage Networking Industry Association has the best resources for identifying trends in the hard drive industry. As recently as last year, heat-assisted magnetic recording (HAMR) was expected to be the technology update responsible for increasing hard drive capacities.

Slide Courtesy: Dr. Ed Grochowski's SNIA 2016 Storage Developer Conference Presentation

'The Magnetic Hard Disk Drive: Today’s Technical Status and Its Future' (Video, PDF)

Mechanical Hard Drives are Here to Stay

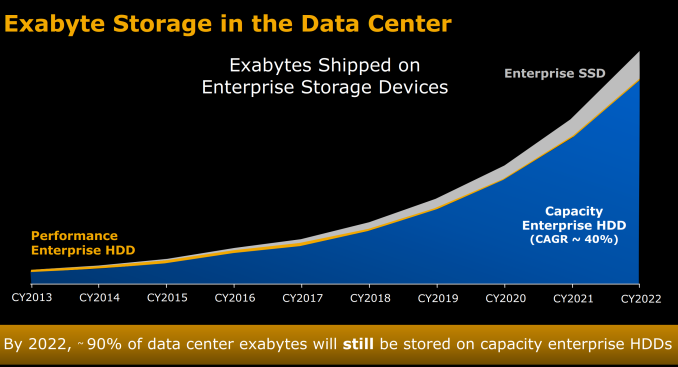

One of the common misconceptions amongst readers focused on consumer technology is that flash / SSDs are rendering HDDs obsolete. While SSDs have definitely displaced HDDs in the client computing ecosystem, bulk storage is a different story. Bulk storage in the data center, as well as the consumer market, will continue to rely on mechanical hard drives for the foreseeable future.

The main reason lies in the 'Cost per GB' metric.

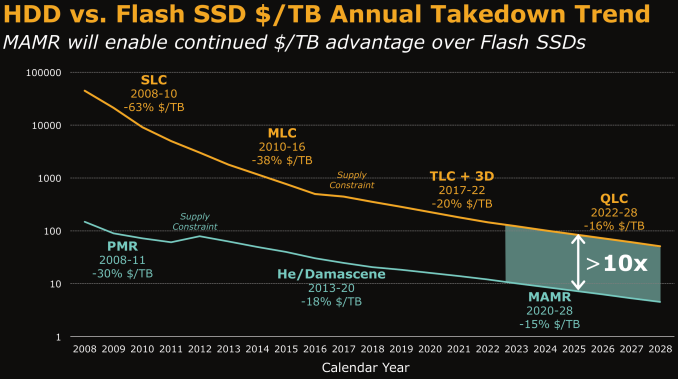

Home consumers are currently looking at drives to hold 10 TB+ of data, while datacenters are looking to optimize their 'Total Cost of Ownership' (TCO) by cramming as many petabytes as possible into a single rack. This is particularly prevalent for cold storage and archival purposes, but can also extend to content delivery networks. Western Digital had a couple of slides in their launch presentation yesterday that point towards hard drives continuing to enjoy this advantage, thanks to MAMR being cost-effective.

Despite new HDD technology, advancements in solid state memory technology are running at a faster pace. As a result, SSD technology and NAND flash have ensured that performance enterprise HDDs will make up only a very minor part of the total storage capacity each year in the enterprise segment.

The projections presented by any vendor's internal research team always need to be taken with a grain of salt, but given that SanDisk is now a part of Western Digital, the above market share numbers for different storage types seem entirely plausible.

In the next section, we take a look at advancements in hard drive technology over the last couple of decades. This will provide further technical context to the MAMR announcement from Western Digital.

127 Comments

cekim - Thursday, October 12, 2017 - link

The bigger concern is throughput - if it takes the bulk of the MTBF of a drive to write then read it we are gonna have a bad time... quick math - maybe I goofed, but given 250MB/s and TB = 1024^4 that's 167,772s or 2796m or 46 hours to read the entire drive. Fun time waiting 2 days for a raid re-build...

imaheadcase - Thursday, October 12, 2017 - link

If you are using this for home use, you should not be using RAID anyways, since you will have an SSD in the computer. And if it's a server, bandwidth is not a concern since it's on the LAN. And backing up to the cloud is what 99% of people do in that situation.

RAID is dead for the most part.

qap - Thursday, October 12, 2017 - link

It's dangerous not only for RAID, but also for that "cloud" you speak of and the underlying object storage. A typical object storage has 3 replicas. With 250MBps peak write/read speed you are not looking at two days to replicate all files. In reality it's more like two weeks to one month, because you are handling a lot of small files and transferring over LAN, and in that case both read and write suffer. Over the course of several weeks the probability of 3 random drives failing is too high.

We were considering 60TB SATA SSDs for our object storage, but it simply doesn't add up even in case of SSD-class read/write.

Especially if there is only a single supplier of such drives, the chance of synchronized failure of multiple drives is too high (we had one such scare).

LurkingSince97 - Friday, October 20, 2017 - link

That is not how it works. If you have 3 replicas, and one drive dies, then all of that drive's data has two other replicas.

Those two other replicas are _NOT_ just on two other drives. A large clustered file system will have the data split into blocks, and blocks randomly assigned to other drives. So if you have 300 drives in a cluster, a replica factor of 3, and one drive dies, then that drive's data has two copies, evenly spread out over the other 299 drives. If those are spread out across 30 nodes (each with 10 drives) with 10gbit network, then we have aggregate ~8000 MB/sec copying capacity, or close to a half TB per minute. That is a little over an hour to get the replication factor back to 3, assuming no transfers are local, and all goes over the network.

And that is a small cluster. A real one would have closer to 100 nodes and 1000 drives, and higher aggregate network throughput, with more intelligent block distribution. The real world result is that on today's drives it can take less than 5 minutes to re-replicate a failed drive. Even with 40TB drives, sub 30 minute times would be achievable.
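The back-of-the-envelope figures in this thread can be checked with a few lines of Python. This is only a rough sketch under stated assumptions: neither commenter names a drive capacity, so 40 TB is assumed here because it reproduces the 167,772 s figure quoted earlier, and the ~8000 MB/sec aggregate rate is taken from the comment above as given rather than derived. The function names are made up for illustration.

```python
# Sanity check of the thread's estimates. Values marked "assumed" are not
# stated by the commenters and are chosen only for illustration.

def full_read_seconds(capacity_tib: float, rate_mib_s: float) -> float:
    """Time to stream one whole drive sequentially, using binary units (TB = 1024^4)."""
    return capacity_tib * 1024**2 / rate_mib_s  # TiB -> MiB, then divide by MiB/s


def rereplication_seconds(capacity_tb: float, aggregate_mb_s: float) -> float:
    """Time to re-copy a failed drive's data when the whole cluster shares the work."""
    return capacity_tb * 1_000_000 / aggregate_mb_s  # TB -> MB (decimal units)


DRIVE_TB = 40  # assumed capacity, not stated in either comment

single = full_read_seconds(DRIVE_TB, 250)        # 250 MB/s, from the first comment
cluster = rereplication_seconds(DRIVE_TB, 8000)  # ~8000 MB/s aggregate, from the comment above

print(f"single drive read: {single:,.0f} s = {single / 3600:.1f} h")
print(f"cluster re-replication: {cluster:,.0f} s = {cluster / 60:.0f} min")
```

Under these assumptions a single drive takes roughly 46.6 hours to read end to end, while the 30-node cluster needs roughly 83 minutes to restore the third replica. The gap comes entirely from spreading the copy across many drives and network links instead of funnelling it through one drive.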

bcronce - Thursday, October 12, 2017 - link

RAID isn't dead. The same people who used it in the past are still using it. It was never popular outside of enterprise/enthusiast use. I need a place to store all of my 4K videos.

wumpus - Thursday, October 12, 2017 - link

[non-0] RAID almost never made sense for home use (although there was a brief point before SSDs where it was cool to partition two drives so /home could be mirrored and /everything_else could be striped).

Backblaze uses some pretty serious RAID, and I'd expect that all serious datacenters use similar tricks. Redundancy is the heart of storage reliability (SSDs and more old fashioned drives have all sorts of redundancy built in) and there is always the benefit of having somebody swap out the actual hardware (which will always be easier with RAID).

RAID isn't going anywhere for the big boys, but unless you have a data hoarding hobby (and tons of terabytes to go with it), you probably don't want RAID at home. If you do, then you will probably only need to RAID your backups (RAID on your primary only helps for high availability).

alpha754293 - Thursday, October 12, 2017 - link

I can see people using RAID at home thinking that it will give them the misguided latency advantage (when they think about "speed").

(i.e. higher MB/s != lower latency, which is what gamers probably actually want when they put two SSDs on RAID0)

surt - Sunday, October 15, 2017 - link

Not sure what game you are playing, but at least 90% of the tier 1 games out there care mostly about throughput not latency when it comes to hard drive speed. Hard drive latency in general is too great for any reasonable game design to assume anything other than a streaming architecture.

Ahnilated - Thursday, October 12, 2017 - link

Sorry but if you back up to the cloud you are a fool. All your data is freely accessible to anyone from the script kiddies on up. Much less transferring it over the web is a huge risk.

Notmyusualid - Thursday, October 12, 2017 - link

@ Ahnilated

I never liked the term 'script kiddies'.

What is the alternative? Waste your time / bust your ass writing your own exploit(s) - when so many cool exploits already exist?

Some of us who dabble with said scripts, have significant other networking / Linux knowledge, so it doesn't fit to denigrate us, just because we can't be arsed to write new exploits ourselves.

We've better things to be doing with our time...

I bet you don't make your own clothes, even though you possibly can.