Analyzing Falkor’s Microarchitecture: A Deep Dive into Qualcomm’s Centriq 2400 for Windows Server and Linux

by Ian Cutress on August 20, 2017 11:00 AM EST- Posted in

- CPUs

- Qualcomm

- Enterprise

- SoCs

- Enterprise CPUs

- ARMv8

- Centriq

- Centriq 2400

Enterprise Features: Security

With security being a strong focal point in data center tasks, all of the major players that want to provide processors for cloud deployments have been getting their hands dirty and talking security. The ability to provide security keys for hypervisors, VMs, and everything else that can be sandboxed from other users is paramount. To this extent, the Centriq 2400 is supporting two levels of security: EL3 and EL2. This means TrustZone at a system level (EL3) as well as a hypervisor level (EL2), although Qualcomm has not gone into detail if this extends through to having some VMs secure and others not within the same hypervisor environment. Where some of Qualcomm’s competitors are using ARM’s TrustZone implementation – which is an ARM Cortex-A5 for the management – Qualcomm has stated that their solution is not ARM based but a custom design that is TrustZone compliant. We confirmed that this wasn’t another re-use of an ARM architecture license.

Also for security, Qualcomm has added instructions geared towards cryptography acceleration, supporting AES, SHA1, and SHA2-256.

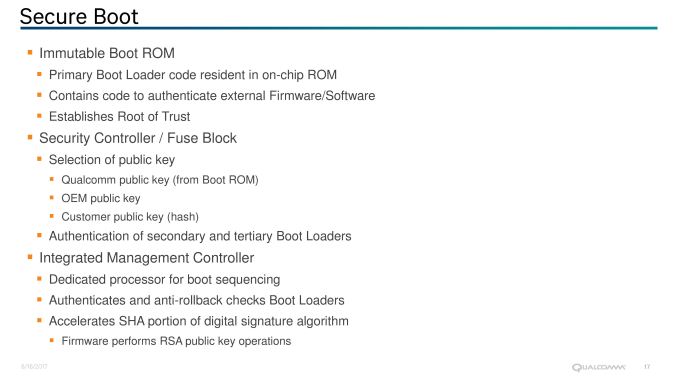

Enterprise Features: Secure Boot

Implementing a Root of Trust has also being making the rounds in recent years. With nefarious code potentially rewriting firmware, or zero-day flaws in technology being exploited by friend and foe, being able to verify the underlying system is as intended and only as intended becomes paramount. Qualcomm’s Centriq 2400 will use Secure Boot functionality.

This is accomplished by providing an Immutable Boot ROM via an integrated management controller, with burned in code and cryptographic keys to authenticate firmware and software before any other firmware is loaded. Qualcomm states that this guarantees knowledge of ownership at the base level, as it allows customers to store (at purchase) public keys from Qualcomm, the OEM or the customer to authenticate secondary and tertiary bootloaders with an anti-rollback check. The management controller also supports accelerated cryptography on SHA for digital signatures and RSA public key operations.

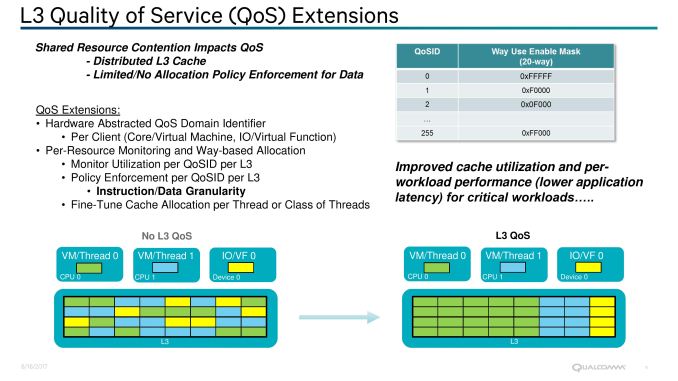

Enterprise Features: QoS

Also on the cards is L3 quality of service. In shared resource environments, mission critical applications can be disturbed by ‘noisy neighbors’. With multiple virtual machines vying for the same resources on a single machine, issues such as shared cache contention have flared up in recent quarters for data center use. If one VM is relying on consistent performance from memory accesses from cache but another program is thrashing it and causing inconsistent performance, the user experience can noticeably be disturbed.

There are multiple ways to tackle this, such as increasing the amount of private cache per core/VM, or by providing L3 cache Quality of Service (QoS) features. Intel has done both in recent years, such as increasing the amount of L2 private cache on the Skylake-SP Xeons from 256KB to 1MB, as well as offering L3 QoS since Broadwell-EP. AMD uses 512KB of L2 private cache, and also has QoS in play. Qualcomm isn’t disclosing the amount of L2 or L3 cache in today’s announcement, but were happy to discuss their QoS strategy.

Qualcomm has stated (despite some odd diagrams perhaps suggesting otherwise) that the L3 cache in the Centriq family is a distributed cache, which likely means that each core (or Duplex, more on that later) has a certain amount of associated L3 cache and L3 cache tags with it. By using a hardware abstracted QoS identification method per client, the SoC can monitor resources and enforce L3 QoS policies per domain ID and per L3 segment, down to the instruction and data level granularity. This is done using Way-based allocation, and policies can be adjusted or fine-tuned on the fly per thread or class of threads. Qualcomm’s implementation can support up to 256 defined environments, one of which can be designated or the SoC IO.

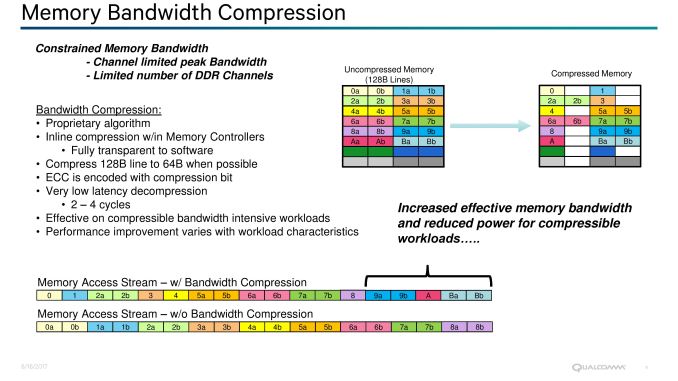

Enterprise Features: Memory Bandwidth Compression

One of Qualcomm’s angles in the data center space is going to be that many data center workloads are memory bandwidth constrained. ‘Feeding the Beast’ is the limit for the markets they want to enter, so by enabling transparent memory compression out to DRAM, Qualcomm is attempting to address the issue. This feature will be transparent to any software, with the effect seen mostly in compressible data streams and memory streaming benchmarks.

By using a proprietary algorithm, Qualcomm’s inline compression will attempt to reduce a 128-byte cache line to 64-bytes with ECC as it moves into main memory. When recalling the data back into the core or for committing to storage, decompression adds an additional 2-4 cycles (1-2% on 250-cycle latency) but aims to bring more data in per request than uncompressed data. There could be a slight added benefit of lower power consumption as well, as less data is transferred. We’ve seen these techniques in the GPU space for a number of years.

From the software perspective, the effect will vary considerably from test to test depending on the workload. The Centiq 2400 series comes with six DDR4 memory channels, supporting two DIMMs per channel and up to DDR4-2667, so there’s going to be a lot of bandwidth to begin with – but sometimes that just isn’t enough.

41 Comments

View All Comments

DanNeely - Sunday, August 20, 2017 - link

Mobile CSS needs fixed. Bulleted lists need to wrap, instead of overflowing into horizontal scroll as they currently do on Android.twotwotwo - Sunday, August 20, 2017 - link

The things I most wonder about the chip itself are, how much worse will single-thread speed be, and how much better will throughput/$ be?For serial speed, there's sort of a sliding scale of "good enough"; you can certainly find uses for chips that are slower than Intel's large cores, but as single-thread perf gets worse and worse, more types of app become tricky to run on it because of latency. So you want latency stats for typical enterprisey Java or C# (or, heck, Go) Web app or widely used databases or infrastructure tools. That's also a test of how well compilers and runtimes are tuned for ARMV8, the chip, and live with may cores, but since early customers will have to deal with the ecosystem that exists today, that's reasonable.

For cost-effective throughput I guess we need to have an idea of at least price and power consumption, and parallel benchmarks that will hit bottlenecks a single-threaded one might not, like memory bandwidth. And the toughest comparison is probably against Intel's parts in the same segment, Xeon D and their server Atom chips. Something that makes it harder to win big on throughput/$ is that the CPU's cost and power consumption are only a piece of the total: DRAM, storage, network, and so on account for a lot of it. Also, the big cloud customers Qualcomm wants to win probably aren't paying the same premiums as you and I are to Intel.

Then, aside from questions about the chip itself, there are questions about the ecosystem and customers. There are the questions above of how well toolchains and software are tuned. Maybe the biggest question is whether some big customer will make the leap and do some deployments on lots of slower cores. It might be a strategic long-term bet for some big cloud company that wants more competition in the server chip space, but I bet they have to be willing to lose real money on the effort for a generation or two first.

name99 - Monday, August 21, 2017 - link

Intel sells 28 core CPUs that run at 2.1 GHz (and turbo up to 3.8GHz but see below).Hell, they sell 16 core systems that run at 2.0 GHz and only turbo up to 2.8 GHz.

Remember QC is not TRYING to sell these to amateurs, or even as office servers. They are targeted at data warehouse tasks where the job they're doing will be pretty well defined, and it's expected for the most part that ALL the cores will be running (ie when the work load lightens, you shut down entire dies and then racks, you don't futz around with just shutting down single cores).

For environments like that, turbo'ing is of much less value. QC doesn't have to (and isn't) targeting the entire space of HPC+server+data warehouse, just the part that's a good match to what they're offering.

Threska - Tuesday, August 22, 2017 - link

Well there is the small developer virtualization market like Ansible.prisonerX - Monday, August 21, 2017 - link

A preoccupation with single thread performance is the domain of video game playing teenagers and not terribly important, neither is the "latency" you refer to. This sort of high-core, efficient processor is going to be used where throughput and price/power/performance ratio (ie, all three, not just any one of those), are the key metric.Latency is mostly irrelevant since processing will be stream oriented and bandwidth limited rather than hamstrung by latency (thus features such as memory bandwidth compression). Gimmicks like "turbo" (which should be called by its proper name: "throttling") and favoring single thread performance are counterproductive in this mode. Being able to deploy many CPUs in dense compute nodes is what is required and memory, storage and networking are minor parts here.

I don't know why you think compilers or runtimes is a concern, 99.9% of code is common across archs, so if you've supported a lot of x86 cores your code is going to function well for a lot of ARM cores with a small amount of arch specific configuration. The compilers themselves, namely GCC and LLVM are mature as is their support for ARM.

Finally the new ARM CPUs don't have to beat Intel, just stay roughly competitive, because the one thing the tech industry hates more than a monopoly is a monopoly that has abused its monopoly powers, and Intel is it. Industry is itching for an alternative, and near enough is good enough.

deltaFx2 - Thursday, August 24, 2017 - link

"Industry is itching for an alternative": While this is true, is the industry truly interested in an alternative ISA, or alternative supplier? Because there is one now in the x86 space, and is very competitive, and in some metrics better than Intel. Also, your argument about single threaded performance being irrelevant in servers is false. A famous example of this is a paper in ISCA by google folks arguing in favor of high IPC machines (among other things). They also note that memory bandwidth is not as critical as latency. Now this is specific to google, but in plenty of other cases too, unless you have a very lopsided configuration, bandwidth doesn't get anywhere near saturation. There are also plenty of server users who provide extra cooling capacity to run at higher than base frequencies because it's cheaper than scaling out to more nodes. Obviously, your workloads should scale with freq."Finally the new ARM CPUs don't have to beat Intel," -> change intel to AMD. AMD is hugely motivated to compete on price and has the performance to match intel in many workloads. And AMD's killer app is the 1P system, exactly where Qualcomm intends to go. You also have to add the cost of porting from x86->ARM (recompile, validation, etc). Time is money and employees need to be paid. So the question is, why ARM? More threads/socket? Nope. More memory/socket? Nope. More perf/thread? Probably not based on the architecture described but we'll see. More connectivity then? Nope. Lower absolute power? Maybe. Lower cost? I suspect AMD's MCM design is great for yields. And there's the porting cost if you're not already on ARM.

There's a lot more work to be done and money to be spent before ARM becomes competitive in the mainstream server space. QC has the deep pockets to stick it out, but I am not sure about cavium.

Gc - Sunday, August 20, 2017 - link

Confusing terminology: prefetch vs. fetchPrefetch heuristics predict *future* memory addresses based on past memory access patterns, such as sequential or striding patterns, and try to prefetch the relevant cache lines *before* a miss occurs, attempting to avoid the cache miss or at least reduce the delay. A memory fetch to satisfy a cache miss is not a prefetch.

Slide: "Hardware Prefetch on L1 miss."

Text: "An L1 miss will initiate a hardware prefetch."

The initial fetch is after the miss, so the initial fetch is not a 'prefetch'. I assume this means that it is not only fetching the missed cache line but also triggering the prefetchers to fetch additional cache lines.

Slide: "Hardware data prefetch engine Prefetches for L1, L2, and L3 caches"

Text: "If a miss occurs on the L1-data cache, hardware data prefetchers are used to probe the L2 and L3 caches."

The slide is saying that data is prefetched at all three levels of cache. I'm not sure what the text is saying. Probing refers to querying the caches around the fabric to see which if any holds the requested cache line. This is part of any fetch, not specific to prefetching. Maybe the text is trying to say that the prefetchers not only remember past addresses and predict future addresses, but also remember which cache held past addresses and predicts which cache holds fetched and prefetched addresses?

YoloPascual - Monday, August 21, 2017 - link

Inb4 fanless data centers near the equator.KongClaude - Tuesday, August 22, 2017 - link

'however Samsung does not have much experience with large silicon dies'I don't remember the actual die size for the DEC Alpha's that Samsung fabbed back in the day, the Alpha was a fairly large CPU even by todays standard. Would they have let go of that knowledge or is Alpha being relegated to low-volume/not much experience?

psychobriggsy - Tuesday, August 22, 2017 - link

We should also consider that GlobalFoundries licensed Samsung's 14nm after digging their own 14nm hole and failing to get out of it, and right now AMD are making 486mm^2 Vega dies on that process. The process doesn't have a massive maximum reticle size however, IIRC it's around 700mm^2, whereas TSMC can do just over 800mm^2 on their 16nm.