Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

Grand Theft Auto

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and pushes even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

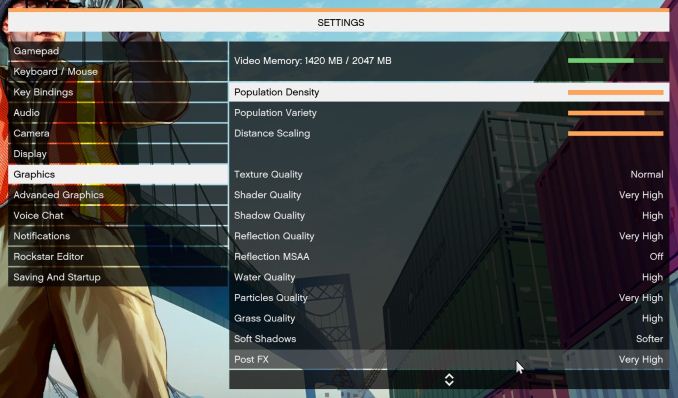

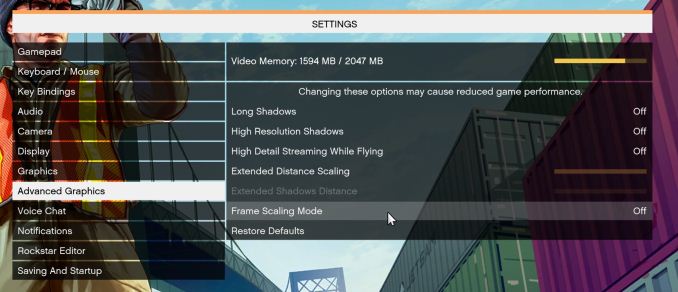

There are no presets for the graphics options on GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, while others such as texture/shadow/shader/water quality range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

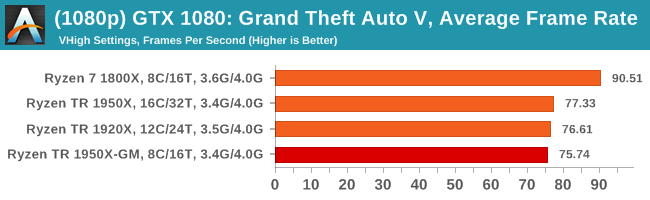

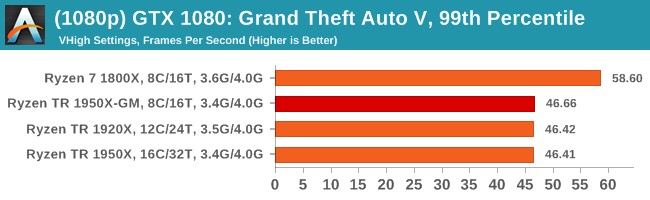

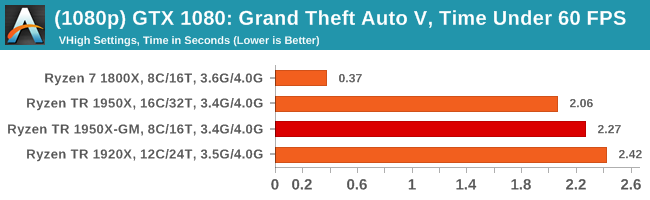

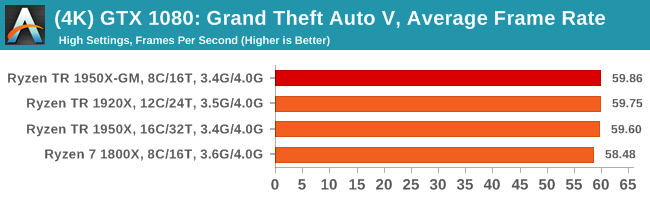

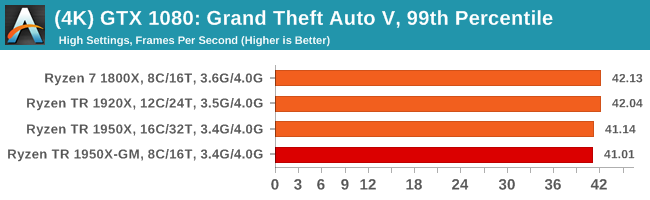

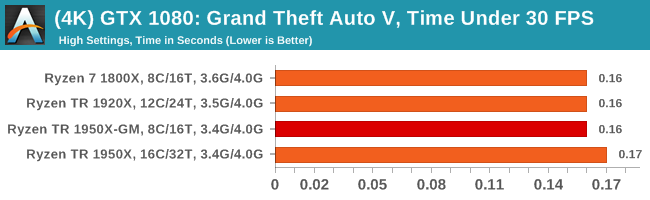

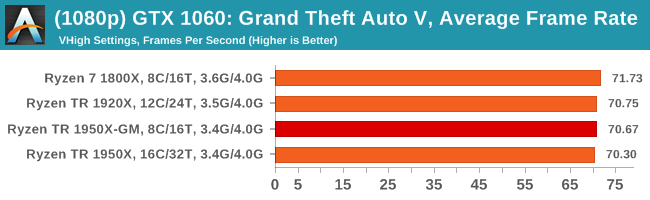

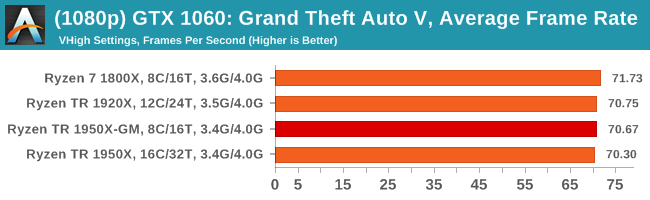

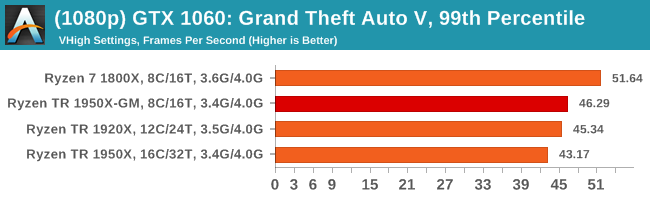

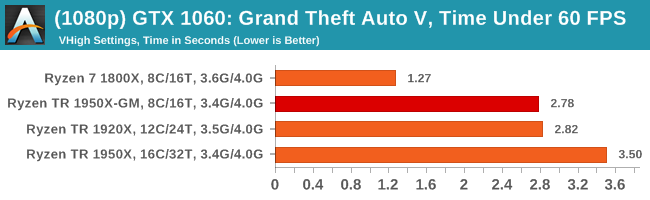

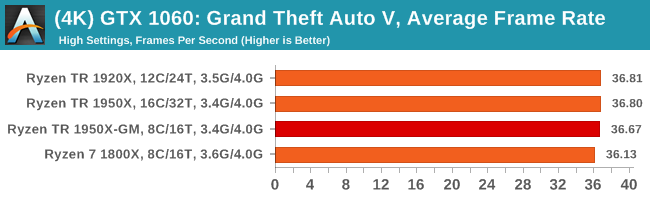

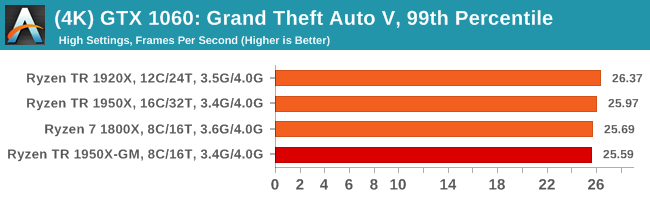

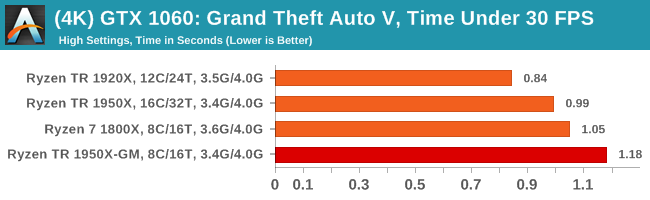

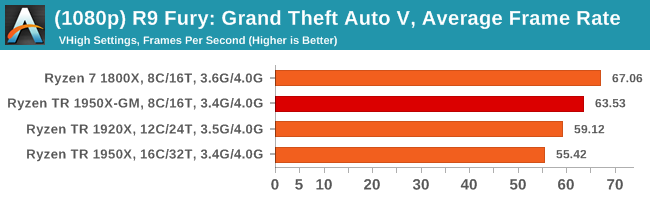

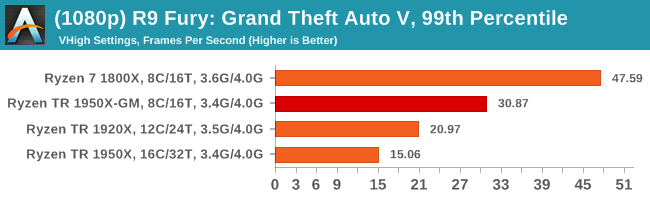

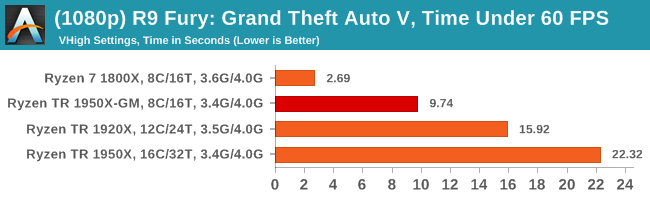

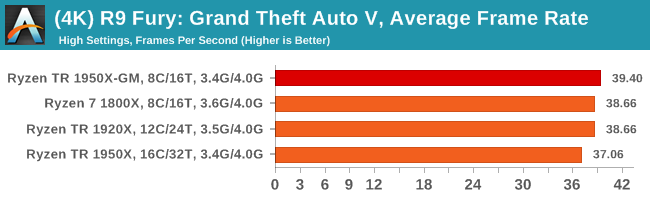

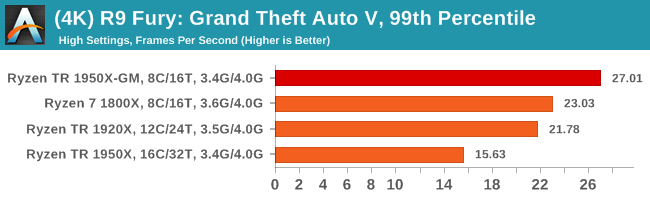

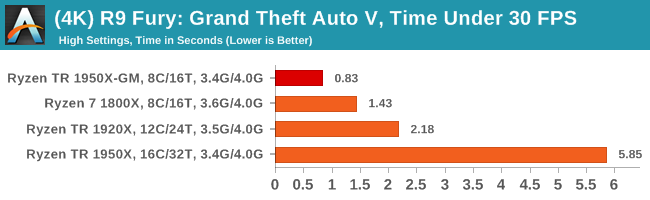

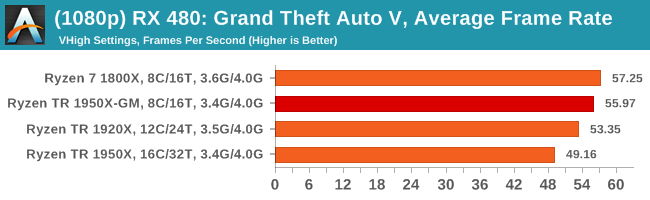

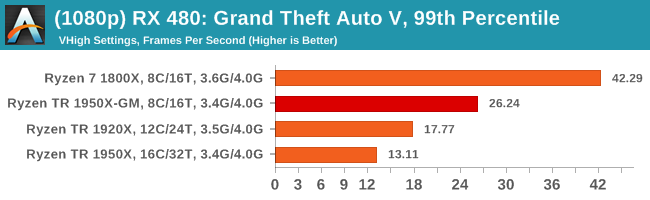

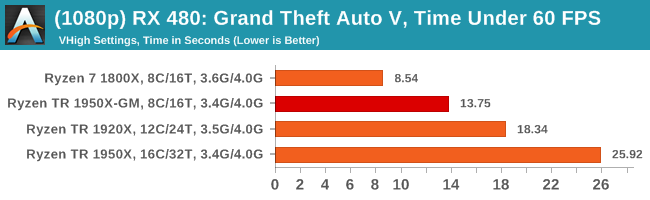

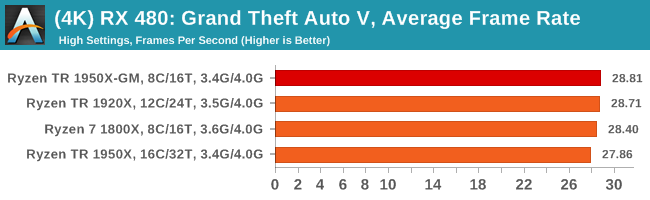

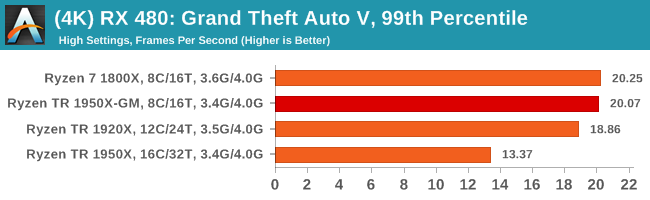

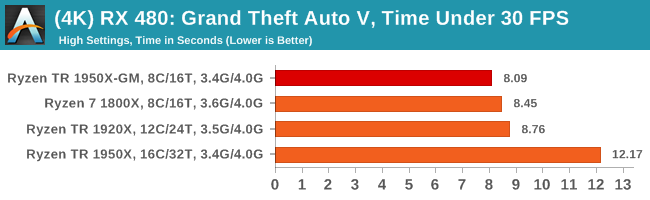

To that end, we run the benchmark at 1920x1080 using an average of Very High on the settings, and also at 4K using High on most of them. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our time under analysis.
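As a rough illustration of how metrics like these can be derived from the frame time data, here is a minimal Python sketch. It is not our actual tooling: the function names, the nearest-rank percentile method, the 60 FPS threshold, and the reading of "time under" as the share of run time spent in frames slower than that threshold are all assumptions made for the example.

```python
from statistics import mean


def summarize_frame_times(frame_times_ms, threshold_fps=60.0):
    """Summarize one run from per-frame render times in milliseconds.

    Hypothetical helper: names and the exact metric definitions are
    assumptions for illustration, not the article's real pipeline.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by elapsed seconds.
    avg_fps = 1000.0 * len(frame_times_ms) / total_ms

    # 99th percentile frame time (nearest-rank method), expressed as FPS.
    # 99% of frames rendered at least this quickly.
    ordered = sorted(frame_times_ms)
    rank = -(-99 * len(ordered) // 100)  # integer ceil(0.99 * n)
    p99_fps = 1000.0 / ordered[rank - 1]

    # Assumed "time under" definition: fraction of the run spent in
    # frames that took longer than the threshold frame time.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under = slow_ms / total_ms

    return {"avg_fps": avg_fps, "p99_fps": p99_fps, "time_under": time_under}


def average_runs(runs, threshold_fps=60.0):
    """Average each metric across several runs (four in our testing)."""
    summaries = [summarize_frame_times(r, threshold_fps) for r in runs]
    return {key: mean(s[key] for s in summaries) for key in summaries[0]}
```

Passing four lists of frame times to `average_runs` gives one set of numbers per CPU and GPU combination. Averaging per-run summaries, rather than pooling every frame into one list, keeps a single unusually long run from dominating the result.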

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6G Performance

1080p

4K

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

104 Comments

Gigaplex - Thursday, August 17, 2017 - link

How is Windows supposed to know when a specific app will run better with SMT enabled/disabled, NUMA, or even settings like SLI/Crossfire and PCIe lane distribution between peripheral cards? If your answer is app profiles based on benchmark testing, there's no way Microsoft will do that for all the near infinite configurations of hardware against all the Windows software out there. They've cut back on their own testing and fired most of their testing team. It's mostly customer beta testing instead.

peevee - Friday, August 18, 2017 - link

Windows does not know whether it is a critical gaming thread or not. Setting thread affinity is not a rocket science - unless you are some Java "programmer".

Spoelie - Friday, August 18, 2017 - link

And anyone not writing directly in assembly should be shot on sight, right?

peevee - Friday, August 18, 2017 - link

You don't need to write in assembly to set thread affinities.

Glock24 - Thursday, August 17, 2017 - link

Seems like the 1800X is a better all around CPU. If you really need and can use more than 8C/16T then get TR.

For mixed workloads of gaming and productivity the 1800X or any of the smaller siblings is a better choice.

msroadkill612 - Friday, August 18, 2017 - link

The decision watershed is pcie3 lanes imo. Otherwise, the ryzen is a mighty advance in the mainstream sweet spot over ~6 months ago.

OTH, I see lane hungry nvme ports as a boon to expanding a pcs abilities later. The premium for an 8 core TR & mobo over ryzen seems cost justifiable expandability.

Luckz - Friday, August 18, 2017 - link

It seems that the 1800X has the NVIDIA spend less time doing weird stuff.

franzeal - Thursday, August 17, 2017 - link

If you're going to reference Intel in your benchmark summaries (Rocket League is one place I noticed it), either include them or don't forget to edit them out of your copy/paste job.

Luckz - Friday, August 18, 2017 - link

WCCFTech-Level writing, eh.

franzeal - Thursday, August 17, 2017 - link

Again, as with the original article, the description for the Dolphin render benchmarks is incorrectly stating that the results are shown in minutes.