The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Creator Mode and Game Mode

*This page was updated on 8/17. A subsequent article with new information has been posted.

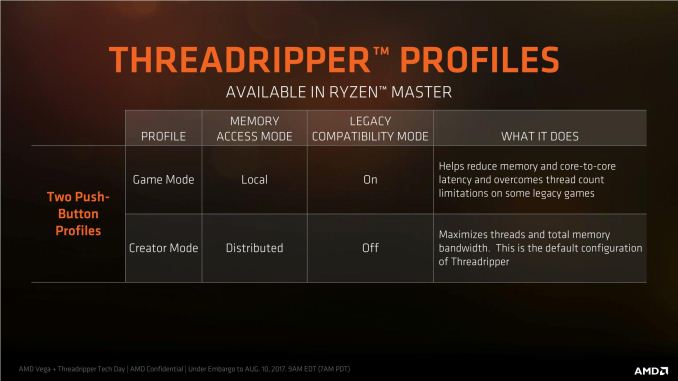

Due to the difference in memory latency between the two pairs of memory channels, AMD is implementing a ‘mode’ strategy for users to select depending on their workflow. The two modes are called Creator Mode (default), and Game Mode, and control two switches in order to adjust the performance of the system.

The two switches are:

- Legacy Compatibility Mode, on or off (off by default)

- Memory Mode: UMA vs NUMA (UMA by default)

The first switch disables the cores in one of the silicon dies, but retains access to the DRAM channels and PCIe lanes. When the Legacy Compatibility Mode (LCM) switch is off, each core can handle two threads and the 16-core chip has a total of 32 threads. When enabled, the system cuts half the cores, leaving 8 cores and 16 threads. This switch is primarily for compatibility purposes, as certain games (like DiRT) cannot work with more than 20 threads in a system. By reducing the total number of threads, these programs will be able to run. Turning the cores in one die off also alleviates some potential pressure in the core microarchitecture for cross communication.

The second switch, Memory Mode, puts the system into a uniform memory access (UMA) or a non-uniform memory access (NUMA) mode. Under the default setting, UMA, the memory and CPU cores are seen as one massive block to the system, with maximum bandwidth and an average latency between the two. This makes it simple for code to understand, although the actual latency for a single memory access will be a good 20% faster or slower than the average, depending on which memory bank it is coming from.

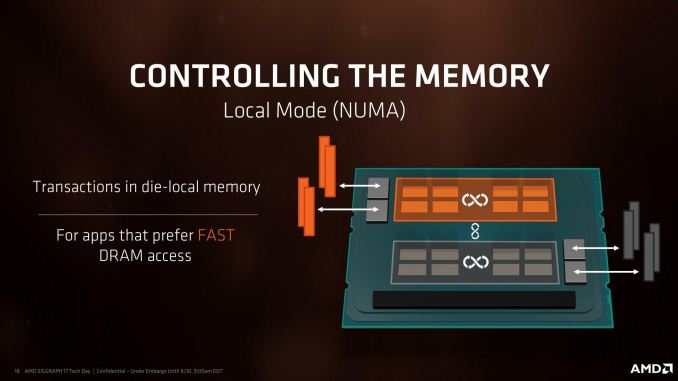

NUMA still gives the system the full memory, but splits the memory and cores into two NUMA banks depending on which pair of memory channels is nearest the core that needs the memory. The system will keep the data for a core as near to it as possible, giving the lowest latency. For a single core, that means it will fill up the memory nearest to it first at half the total bandwidth but a low latency, then the other half of the memory at the same half bandwidth at a higher latency. This mode is designed for latency sensitive workloads that rely on the lower latency removing a bottleneck in the workflow. For some code this matters, as well as some games – low latency can affect averages or 99th percentiles for game benchmarks.

The confusing thing about this switch is that AMD is calling it ‘Memory Access Mode’ in their documents, and labeling the two options as Local and Distributed. This is easier to understand than the Legacy Compatibility Mode switch, in that the Local setting focuses on the latency local to the core (NUMA), and the Distributed setting focuses on the bandwidth to the core (UMA), with Distributed being default.

- When Memory Access Mode is Local, NUMA is enabled (Latency)

- When Memory Access Mode is Distributed, UMA is enabled (Bandwidth, default)

So with that in mind, there are four ways to arrange these two switches. AMD has given two of these configurations specific names to help users depending on how they use their system: Creator Mode is designed to give as many threads as possible and as much memory bandwidth as possible. Game Mode is designed to optimize for latency and compatibility, to drive game frame rates.

AMD Threadripper Options (middle two columns: Words That Make Sense; last two columns: Marketing Spiel)

| Ryzen Master Profile | Two Dies or One Die | Memory Mode | Legacy Compatibility Mode | Memory Access Mode |
|---|---|---|---|---|
| Creator Mode | Two | UMA | Off | Distributed |
| - | Two | NUMA | Off | Local |
| - | One | UMA | On | Distributed |
| Game Mode | One | NUMA | On | Local |
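As a way of keeping the four combinations straight, the two switches can be modelled as a small data structure. This is only an illustrative sketch: the `Config` class and its field names are hypothetical and are not part of Ryzen Master or any AMD tool. The core and thread counts assume the 16-core 1950X with two threads per core, as described above.

```python
from dataclasses import dataclass

TOTAL_CORES = 16       # Threadripper 1950X (assumption for this sketch)
THREADS_PER_CORE = 2   # two threads per active core

@dataclass(frozen=True)
class Config:
    """Hypothetical model of the two switches; not an AMD API."""
    legacy_compat: bool   # Legacy Compatibility Mode: True = one die's cores disabled
    memory_access: str    # "Distributed" (UMA) or "Local" (NUMA)

    @property
    def active_cores(self) -> int:
        # Legacy Compatibility Mode cuts half the cores.
        return TOTAL_CORES // 2 if self.legacy_compat else TOTAL_CORES

    @property
    def threads(self) -> int:
        return self.active_cores * THREADS_PER_CORE

    @property
    def memory_mode(self) -> str:
        return "NUMA" if self.memory_access == "Local" else "UMA"

    @property
    def profile(self) -> str:
        # Only two of the four combinations have a named profile.
        if not self.legacy_compat and self.memory_access == "Distributed":
            return "Creator Mode"
        if self.legacy_compat and self.memory_access == "Local":
            return "Game Mode"
        return "-"

if __name__ == "__main__":
    for lcm in (False, True):
        for access in ("Distributed", "Local"):
            c = Config(lcm, access)
            print(c.profile, c.active_cores, c.threads, c.memory_mode)
```

Printing all four combinations reproduces the rows of the table, with the two unnamed configurations shown as `-`.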

There are two ways to select these modes, through the BIOS or through Ryzen Master, although this too is a confusing part of the situation.

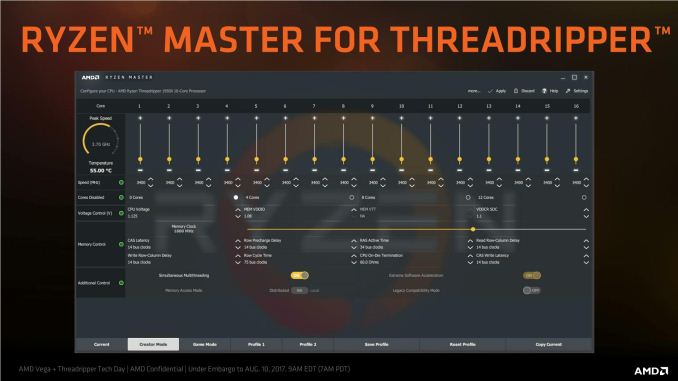

The way I would normally adjust these settings is through the BIOS; however, the BIOS settings do not explicitly state ‘Creator Mode’ and ‘Game Mode’. They should give immediate access to the Memory Mode, where ASUS has used the Memory Access naming of Local and Distributed, not NUMA and UMA. For the Legacy Compatibility Mode, users will have to dive several screens down into the Zen options and manually switch off eight of the cores, if the setting ends up being visible to the user at all. This makes Ryzen Master the easiest way to implement Game Mode.

While we were testing Threadripper, AMD updated Ryzen Master several times to account for the latest updates, so chances are that by the time you are reading this, things might have changed again. But the crux is that Creator Mode and Game Mode are not separate settings here either. Instead, AMD is labelling these as ‘profiles’. Users can select the Creator Mode profile or the Game Mode profile, and within those profiles, the two switches mentioned above (labelled as Legacy Compatibility Mode and Memory Access Mode) will be switched as required.

Cache Performance

As an academic exercise, Creator Mode and Game Mode make sense depending on the workflow. If you don’t need the threads and want the latency bump, Game Mode is for you. The perhaps odd thing about this is that Threadripper is aimed at highly threaded workloads more than gaming, and so losing half the threads in Game Mode might actually be a detriment to a workstation implementation. That being said, users can leave all the cores enabled and still change the memory access mode on its own, although AMD is really focusing more on the Creator and Game modes specifically.

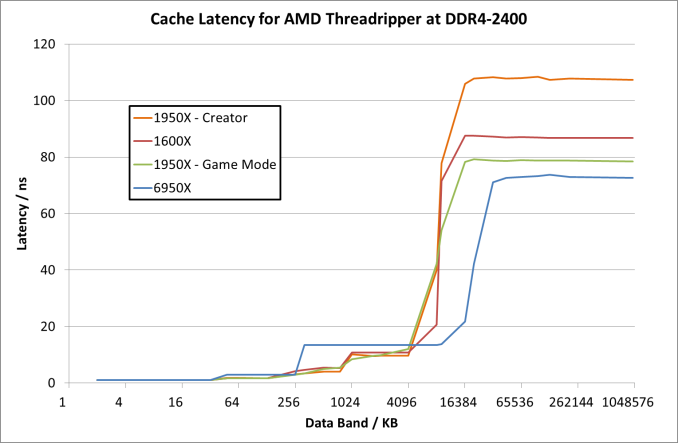

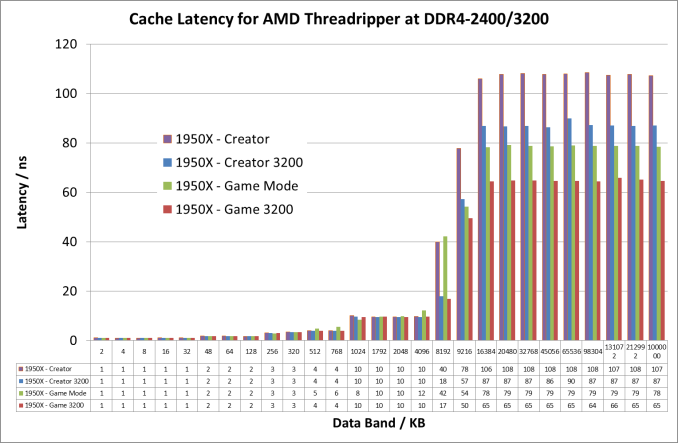

For this review, we tested both Creator (default) and Game modes on the 16-core Threadripper 1950X. As an academic exercise we looked into memory latency in both modes, as well as at higher DRAM frequencies. These latency numbers take the results for the core selected (we chose core 2 in each case) and then stride through to hit L1, L2, L3 and main memory. For UMA systems like in Creator Mode, main memory will be an average between the near and far memory results. We’ve also added in here a Ryzen 5 1600X as an example of a single Zeppelin die, and a 6950X Broadwell for comparison. All CPUs were run at DDR4-2400, which is the maximum supported at two DIMMs per channel.
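For readers unfamiliar with this style of test, the general idea can be sketched as a pointer chase: every load depends on the previous one, so the time per step approximates access latency for a given working-set size. The code below is not the tool used for this review; the function names are hypothetical, and because it runs in the Python interpreter the absolute numbers are dominated by interpreter overhead. Only the growth in time per step as the working set spills out of each cache level is meaningful, and a real measurement would use a compiled language pinned to one core.

```python
import random
import time
from array import array

def build_chain(n, seed=0):
    """Return a random permutation that forms one single cycle (Sattolo's algorithm)."""
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j < i, which guarantees a single n-element cycle
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return array("q", nxt)    # 8 bytes per element

def chase(chain, steps):
    """Follow the chain for `steps` dependent loads and return nanoseconds per step."""
    idx = 0
    start = time.perf_counter_ns()
    for _ in range(steps):
        idx = chain[idx]      # the next index depends on the previous load
    elapsed = time.perf_counter_ns() - start
    return elapsed / steps

if __name__ == "__main__":
    # Working sets from 16 KB to 64 MB, doubling each time.
    size_bytes = 16 * 1024
    while size_bytes <= 64 * 1024 * 1024:
        chain = build_chain(size_bytes // 8)
        print(f"{size_bytes // 1024:>8} KB: {chase(chain, 1_000_000):.1f} ns/step")
        size_bytes *= 2
```

The random single-cycle ordering is used so that hardware prefetchers cannot predict the next address, and so that every element in the working set is touched before any is revisited.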

For the 1950X in the two modes, the results are essentially equal until we hit 8MB, which is the L3 cache limit per CCX. After this, the core bounces out to main memory, where Game Mode sits around 79ns while Creator Mode is at 108ns. By comparison the Ryzen 5 1600X seems to have a lower latency at 8MB (20ns vs 41ns), and then sits between the Creator and Game modes at 87ns. It would appear that the bigger downside of Creator Mode is that main memory accesses are much slower than on normal Ryzen or in Game Mode.

If we crank up the DRAM frequency to DDR4-3200 for the Threadripper 1950X, the numbers change a fair bit:

Up until the 8MB boundary where L3 hits main memory, everything is pretty much equal. At 8MB however, the latency at DDR4-2400 is 41ns compared to 18ns at DDR4-3200. Then out into full main memory sees a pattern: Creator mode at DDR4-3200 is close to Game Mode at DDR4-2400 (87ns vs 79ns), but taking Game mode to DDR4-3200 drops the latency down to 65ns.

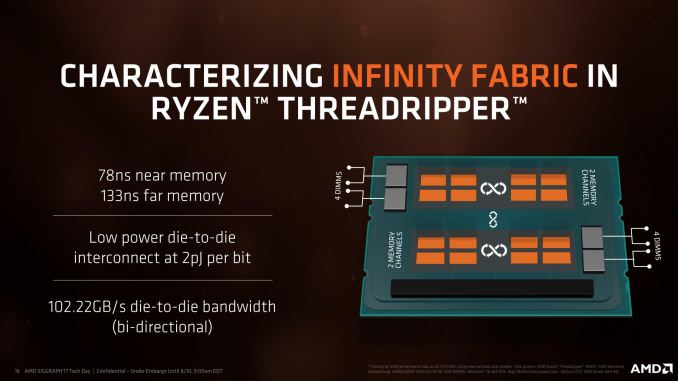

Another element we tested while in Game Mode was the latency for near memory and far memory as seen from a single core. Remember this slide from AMD’s deck?

In our testing, we achieved the following:

- At DDR4-2400, 79ns near memory and 136ns far memory (108ns average)

- At DDR4-3200, 65ns near memory and 108ns far memory (87ns average)

Those average numbers are what we get for Creator mode by default, indicating that the UMA mode in Creator mode will just use memory at random between the two.
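The arithmetic behind that observation is simple enough to check directly. The short sketch below uses only the latency figures quoted above; the `uma_estimate` helper is a hypothetical name, and the 50/50 split between near and far memory is an assumption that the measured Creator Mode numbers appear to support.

```python
# Measured latencies from this page, in nanoseconds.
measured_ns = {
    "DDR4-2400": {"near": 79, "far": 136, "creator": 108},
    "DDR4-3200": {"near": 65, "far": 108, "creator": 87},
}

def uma_estimate(near, far):
    """Expected UMA latency if accesses split evenly between near and far memory."""
    return (near + far) / 2

if __name__ == "__main__":
    for speed, m in measured_ns.items():
        est = uma_estimate(m["near"], m["far"])
        # Distance of near/far from the average, as a percentage of the average.
        spread_pct = (m["far"] - m["near"]) / 2 / est * 100
        print(f"{speed}: estimate {est} ns, measured {m['creator']} ns, "
              f"near/far within about {spread_pct:.0f}% of the average")
```

In both cases the simple average lands within half a nanosecond of the measured Creator Mode result, and the near and far figures sit roughly a quarter above or below it, in line with the "good 20%" estimate given earlier.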

347 Comments


NikosD - Sunday, August 13, 2017 - link

Well, reading the whole review today - 13/08/2017 - I can see that the reviewer did something more evil than not using DDR4-3200 to give us performance numbers.

He used DDR4-2400, as he clearly states in the configuration table, filling up the performance tables BUT in the power consumption page he added DDR4-3200 results (!) just to inform us that DDR4-3200 consumes 13W more, without providing any performance numbers for that memory speed (!!)

The only thing left for the reviewer is to tell us in which department of Intel works exactly, because in the first pages he wanted to test TR against a 2P Intel system as Skylake-X has only 10C/20T but Intel didn't allow him.

Ask for your Intel department to permit it next time.

Zingam - Sunday, August 13, 2017 - link

Yeah! You make a great point! Too much emphasis on gaming all the time! These processors aren't GPUs after all! Most people who buy PCs don't play games at all. Even I as a game developer would like to see more real world tests, especially compilation and data-crunching tests that are typical for game content creation and development workloads. Even I as a game developer spend 99% of my time in front of the computer not playing any games.

pm9819 - Friday, August 18, 2017 - link

So Intel made AMD release the underpowered overheating Bulldozer cpu's? Did Intel also make them sell there US and EU based fabs so they'll be wholly dependant on the Chinese to make their chips? Did Intel also make them buy a equally struggling graphics card company? Truth is AMD lost all the mind and market share they had because of bad corporate decision and uncompetitive cpu designs post Thunderbird. It's no one's fault but there own that it took seven years to produce a competitive replacement. Was Intel suppose to wait till they caught up? And Intel was a monopoly long before AMD started producing competitive cpu's.

You can keep blaming Intel for AMD's screw ups but those of us with common sense and the ability to read know the fault lays with AMD's management.

ddriver - Thursday, August 10, 2017 - link

You are not sampled because of your divine objectivity Ian, you are sampled because you review for a site that is still somewhat popular for its former glory. You can deny it all you want, and understandable, as it is part of your job, but AT is heavily biased towards the rich american boys - intel, apple, nvidia... You are definitely subtle enough for the dumdums, but for better or worse, we are not all dumdums yet.

But hey, it is not all that bad, after all, nowadays there are scores of websites running reviews, so people have a base for comparison, and extrapolate objective results for themselves.

ddriver - Thursday, August 10, 2017 - link

And some bits of constructive criticism - it would be nicer if those reviews featured more workloads people actually use in practice. Too much synthetics, too much short running tests, too much tests with software that is like "wtf is it and who in the world is using it".

For example rendering - very few people in the industry actually use corona or blender, blender is used for modelling and texturing a lot, but not really for rendering. Neither is luxmark. Neither is povray, neither is CB.

Most people who render stuff nowadays use 3d max and vray, so testing this will actually be indicative of actual, practical perforamnce to more people than all those other tests combined.

Also, people want render times, not scores. That's very poor indication of actual performance that you will get, because many of those tests are short, so the CPU doesn't operate in the same mode it will operate if it sweats under continuous work.

Another rendering test that would benefit prosumers is after effects. A lot of people use after effects, all the time.

You also don't have a DAW test, something like cubase or studio one.

A lot of the target market for HEDT is also interested in multiphysics, for example ansys or comsol.

The compilation test you run, as already mentioned several times by different people, is not the most adequate either.

Basically, this review has very low informational value for people who are actually likely to purchase TR.

mapesdhs - Thursday, August 10, 2017 - link

AE would definitely be a good test for TR, it's something that can hammer an entire system, unlike games which only stress certain elements. I've seen AE renders grab 40GB RAM in seconds. A guy at Sony told me some of their renders can gobble 500GB of data just for a single frame, imposing astonishing I/O demands on their SAN and render nodes. Someone at a London movie company told me they use a 10GB/sec SAN to handle this sort of thing, and the issues surrounding memory access vs. cache vs. cores are very important, eg. their render management sw can disable cores where some types of render benefit from a larger slice of mem bw per core.

There are all sorts of tasks which impose heavy I/O loads while also needing varying degrees of main CPU power. Some gobble enormous amounts of RAM, like ANSYS, though I don't know if that's still used.

I'd be interested to know how threaded Sparks in Flame/Smoke behave with TR, though I guess that won't happen unless Autodesk/HP sort out the platform support.

Ian.

Zingam - Sunday, August 13, 2017 - link

Good points!

Notmyusualid - Sunday, August 13, 2017 - link

...only he WAS sampled. Read the review.

bongey - Thursday, August 10, 2017 - link

You don't have to be paid by Intel, but this is just a bad review.

Gothmoth - Thursday, August 10, 2017 - link

where is smoke there is fire.

there are clear indications that anandtech is a bit biased.