AMD's Future in Servers: New 7000-Series CPUs Launched and EPYC Analysis

by Ian Cutress on June 20, 2017 4:00 PM EST

Power

As with the Ryzen parts, EPYC will support 0.25x multipliers, giving P-state jumps of 25 MHz. With sufficient cooling, workloads will be able to move between the base frequency and the maximum boost frequency in these steps. AMD states that the smaller steps allow smoother transitions than locking PLLs to move straight up and down, which makes performance more predictable. This links into AMD’s new strategy of performance determinism vs power determinism.
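To make the granularity concrete, here is a minimal sketch that enumerates the frequency points reachable in 25 MHz steps. It assumes a 100 MHz reference clock (so a 0.25x multiplier step equals 25 MHz) and uses the EPYC 7601's 2.2 GHz base and 3.2 GHz boost as example inputs; the function name and parameters are ours for illustration, not an AMD interface.

```python
def pstate_frequencies(base_mhz, boost_mhz, ref_clock_mhz=100.0, multiplier_step=0.25):
    """List the frequency points between base and boost, inclusive.

    Assumption: frequency = multiplier * ref_clock_mhz, with the multiplier
    adjustable in increments of `multiplier_step`.
    """
    step_mhz = ref_clock_mhz * multiplier_step  # 0.25 * 100 MHz = 25 MHz
    steps = int(round((boost_mhz - base_mhz) / step_mhz))
    return [base_mhz + i * step_mhz for i in range(steps + 1)]


# Example inputs: 2.2 GHz base, 3.2 GHz boost (EPYC 7601)
freqs = pstate_frequencies(2200, 3200)
print(f"{len(freqs)} points from {freqs[0]:.0f} to {freqs[-1]:.0f} MHz")
```

Under these assumptions there are 41 selectable points across the 1 GHz window, compared with 11 if the multiplier only moved in whole steps of 100 MHz.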

Each of the EPYC CPUs includes two new modes, one based on power and one based on performance. When a system is configured at boot time to a specific maximum power, performance may vary based on the environment, but the power is ultimately capped at the high end. In the performance mode, the frequency is guaranteed, but not the power. This lets AMD customers either plan in advance without worrying about how different processors vary in voltage/frequency/leakage, or obtain deterministic performance in all environments. The mode is set at the system level at boot time, so all VMs/containers on a system will be affected by it.

This extends into selectable power limits. For EPYC, AMD is offering the ability to run processors at a lower or higher TDP than out of the box. Most users are likely familiar with Intel’s cTDP Up and cTDP Down modes on the mobile processors, and AMD’s feature is somewhat similar. As a result, the TDP limits given at the start of this piece can go down by 15W to 25W or up by 20W, depending on the part:

| EPYC TDP Modes | | |
|---|---|---|
| Low TDP | Regular TDP | High TDP |
| 155W | 180W | 200W |
| 140W | 155W | 175W |
| 105W | 120W | - |

The sole 120W processor at this point is the 8-core EPYC 7251, which is geared towards memory-limited workloads that are licensed per core, hence why it does not get a higher power band to work towards.
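The headroom in each direction can be read straight off the table. The sketch below simply encodes the three rows and computes the deltas; the dictionary layout and function name are our own illustration and do not correspond to any AMD configuration interface.

```python
# Regular TDP (W) -> (low TDP, high TDP) in watts, transcribed from the table.
# None marks the missing high-TDP option for the 120W part.
TDP_MODES = {
    180: (155, 200),
    155: (140, 175),
    120: (105, None),
}


def tdp_headroom(regular_w):
    """Return how far (in watts) a part can be configured down and up."""
    low_w, high_w = TDP_MODES[regular_w]
    down = regular_w - low_w
    up = None if high_w is None else high_w - regular_w
    return down, up


for regular_w in TDP_MODES:
    down, up = tdp_headroom(regular_w)
    up_text = "n/a" if up is None else f"+{up}W"
    print(f"{regular_w}W part: -{down}W / {up_text}")
```

Going by the table, the 180W parts can drop 25W while the 155W and 120W parts drop 15W, and the upward step is 20W wherever a high-TDP mode exists.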

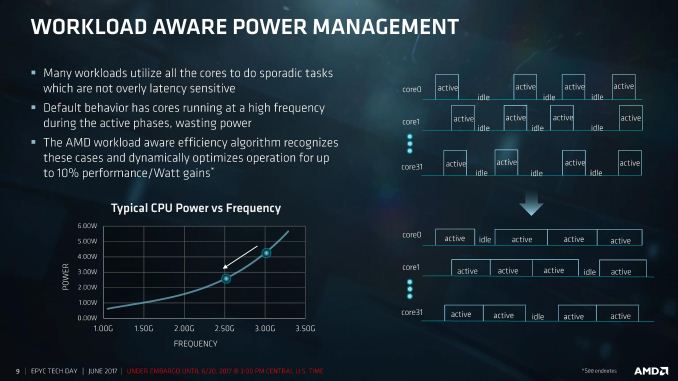

Workload-Aware Power Management

One of AMD’s points about the sort of workloads that might be run on EPYC is that sporadic tasks are sometimes hard to judge, or are not latency sensitive. In an environment that is not latency sensitive, the CPU could conserve power by spreading the workload across more cores at a lower frequency. We’ve seen this sort of policy before on Intel’s Skylake and newer processors, which go as far as duty cycling at the efficiency point to conserve power, and in the mobile space. AMD is bringing this to the EPYC line as well.

Rather than staying at a high frequency and continually powering up and down, the cores run at a reduced frequency so that they are active for longer, trading latency for power efficiency. AMD is claiming up to a 10% perf-per-Watt improvement with this feature.

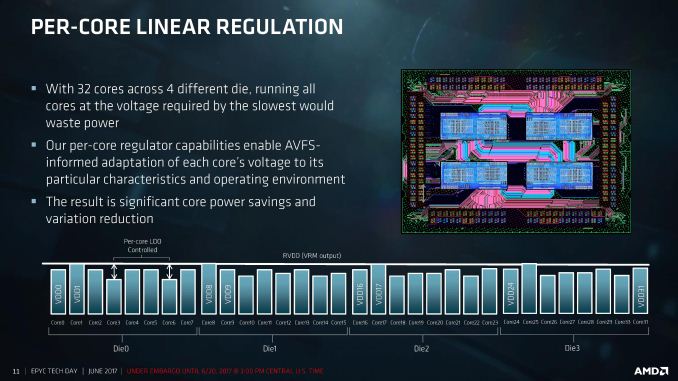
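The latency-for-efficiency trade can be illustrated with a toy model. The sketch below assumes the textbook approximation that dynamic power scales with V² × f plus a constant static term; the capacitance constant, static power, voltages and frequencies are all made-up illustrative values, not AMD figures, and the model ignores whatever the core draws while idle after finishing early.

```python
def energy_for_task(cycles, freq_hz, volts, c_eff=1e-9, static_watts=0.5):
    """Return (joules, seconds) to retire `cycles` at one voltage/frequency point.

    Toy model: dynamic power = c_eff * V^2 * f, plus a constant static term.
    c_eff and static_watts are hypothetical constants chosen for illustration.
    """
    dynamic_watts = c_eff * volts ** 2 * freq_hz
    seconds = cycles / freq_hz
    return (dynamic_watts + static_watts) * seconds, seconds


CYCLES = 3.2e9  # hypothetical burst of work

# Hypothetical operating points: high frequency needs a higher voltage.
fast_j, fast_s = energy_for_task(CYCLES, freq_hz=3.2e9, volts=1.20)
slow_j, slow_s = energy_for_task(CYCLES, freq_hz=2.2e9, volts=0.90)

print(f"fast: {fast_s:.2f} s, {fast_j:.2f} J")
print(f"slow: {slow_s:.2f} s, {slow_j:.2f} J")
```

With these invented numbers the slower operating point takes longer to finish but uses less energy for the same work, which is the shape of the trade AMD describes. The size of the gain in real silicon depends on the actual voltage/frequency curve and leakage, which this sketch does not attempt to model.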

Frequency and voltage can be adjusted for each core independently, helping drive this feature. The silicon implements per-core linear regulators that work with the onboard sensor control to adjust the AVFS (adaptive voltage and frequency scaling) for the workload and the environment. We are told that this helps reduce core-to-core and chip-to-chip variability, with regulation supported to 2mV accuracy. We’ve seen some of this in Carrizo and Bristol Ridge already, although we are told that per-core LDO regulation was always intended for EPYC.

This can happen not only on the cores, but also on the Infinity Fabric links between the CPU dies or between the sockets. By modulating the link width and analyzing traffic patterns, AMD claims another 8% perf-per-Watt for socket-to-socket communications.

Performance-Per-Watt Claims

For the EPYC system, AMD is claiming power efficiency results in terms of SPEC, compiled with GCC 6.2:

| AMD Claims 2P EPYC 7601 vs 2P E5-2699A V4 | SPECint | SPECfp |
|---|---|---|
| Performance | 1.47x | 1.75x |
| Average Power | 0.96x | 0.99x |
| Total System Level Energy | 0.88x | 0.78x |
| Overall Perf/Watt | 1.54x | 1.76x |

Comparing a 2P high-end EPYC 7601 server against Intel’s current best 2P E5-2699A v4 arrangement, AMD is claiming 1.54x the perf/watt for integer performance and 1.76x the perf/watt for floating point performance, delivering more performance at a lower average power for an overall efficiency gain. Again, we cannot confirm these numbers, so we look forward to testing.
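As a quick sanity check on how the table's rows relate, perf/watt should be roughly relative performance divided by relative average power. The sketch below recomputes it from AMD's rounded figures; the variable and function names are ours, and small differences from the claimed values are expected because the inputs are already rounded to two decimal places.

```python
# AMD's claimed ratios (EPYC 7601 relative to E5-2699A v4), from the table.
CLAIMS = {
    "SPECint": {"performance": 1.47, "average_power": 0.96, "claimed_perf_per_watt": 1.54},
    "SPECfp": {"performance": 1.75, "average_power": 0.99, "claimed_perf_per_watt": 1.76},
}


def perf_per_watt(performance, average_power):
    """Relative perf/watt = relative performance / relative average power."""
    return performance / average_power


for suite, row in CLAIMS.items():
    derived = perf_per_watt(row["performance"], row["average_power"])
    print(f"{suite}: derived {derived:.2f}x vs claimed {row['claimed_perf_per_watt']:.2f}x")
```

Both derived values land within about 0.01 of the claimed figures, so the perf/watt row is consistent with the performance and average power rows to within rounding.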

131 Comments


msroadkill612 - Wednesday, June 21, 2017 - link

Sounds a powerful feature for vid editors etc.

"Hot-swap NVMe/SAS3/SATA3 drive bays and M.2 slots"

Breit - Wednesday, June 21, 2017 - link

Really?: http://images.anandtech.com/galleries/5699/epyc_te...

"All 2P E5 scores were derived from the following ICC compiler-based test results per spec.org, multiplied by 0.575 to convert them from the ICC compiler to GCC -O2 v6.1..."?!?

Not cool.

TC2 - Wednesday, June 21, 2017 - link

i smell a tragedy for amd with those "multiplications" :)

HollyDOL - Wednesday, June 21, 2017 - link

Reminds me of the so-called "Resultin's constant" joke...

[Value you get] [any operator] [Resultin's constant] = [Value you wanted]

TC2 - Wednesday, June 21, 2017 - link

precisely!

try another behavioural pattern: great claims, feeble results :)

to compare E5-2698 v4 20 cores with EPYC 7551 32 cores - 32/20 = 1.6, the extrapolation is claimed to be +44% or 1.44.

therefore 1.6/1.44=1.1(1).

now we can conclude that for a 32 core xeon (it's easy for intel) the result will be +11% for intel, and no more advantage for amd at all!!!

such mathematical practices are ridiculous!

FreckledTrout - Wednesday, June 21, 2017 - link

Anyone buying these is going to want to see some large independent reviews / studies. Some of the larger companies looking at these say like Amazon's data center may get a few and do a study. I think that type of info is what everyone should wait on. These numbers look like they came from the marketing side of the house.

Intel999 - Wednesday, June 21, 2017 - link

"Anyone buying these is going to want to see some large independent reviews / studies. Some of the larger companies looking at these say like Amazon's data center may get a few and do a study."

Those companies that shared the stage with AMD for this presentation were included in the 5,000 Epyc chips that were given to OEMs/ODMs to test and validate over the last six months.

All the big boys know what EPYC is capable of and it seems that most are quite impressed.

Zizy - Wednesday, June 21, 2017 - link

Yeah well the story is that they obtained GCC 6.1 scores (by benching the CPU) for the top CPU and found out those are ~40% lower than the official ones. Some of that is ICC cheating on tests, some of that is optimization level.

So, they reduced all official scores by the same ~40% for this comparison (with the top part being actually benched and achieved that result as on slides).

I can't fault them for using the middle ground GCC for those benchmarks, and the normalization step is reasonable enough as well. The only real issue here is use of -O2 instead of more optimized code. Sure, Ryzen/Epyc is new and GCC likely cannot optimize as well for it (which is probably the reason they used default O2), but this is not a valid excuse, should have used O3 at least if not advanced flags.

petteyg359 - Thursday, June 22, 2017 - link

Don't be a ricer. -O3 is often else than -O2 and EVERYBODY with a clue recommend against using it anywhere, ever.

Zan Lynx - Monday, June 26, 2017 - link

Erm. I use GCC with -O3 in a library I build at my job. With profile feedback it is easily 20% faster than -O2. So I don't know where these "clue" people are. I've only been coding for 20 years or so. I may not have a clue yet.