Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Intel Expanding the Chipset: 10 Gigabit Ethernet and QuickAssist Technology

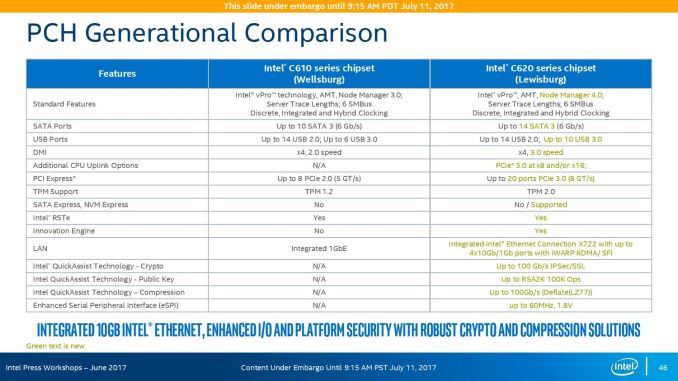

Intel’s refresh strategy on the chipset side has an ultra-long cadence. In recent memory, Intel’s platform launches have been designed to support two generations of processors, and in that time there is typically no chipset update, leaving the functionality of the platform controller hub semi-static for around three years. By comparison, on the consumer side new chipsets launch with every new CPU generation, with bigger jumps coming every couple of years. With today's launch, Intel is pushing the enterprise chipset in a new direction.

Previously, the point of the chipset was to provide some basic IO support in the form of SATA/SAS ports, some USB ports, and a few PCIe lanes for simple controllers like USB 3.0, Gigabit Ethernet, or perhaps an x4 PCIe slot for a non-accelerator type card. The new chipsets, part of the C620 family codenamed Lewisburg, are designed to assist with networking and cryptography, and to act more like a PCIe switch with support for up to 20 PCIe 3.0 lanes.

The headline features that matter most are the upgrade of the DMI connection to the chipset from DMI 2.0 to DMI 3.0, matching the consumer platforms; the 20 PCIe 3.0 lanes from the chipset; and the new feature listed under CPU Uplink.
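As a rough illustration of what the DMI upgrade means, the sketch below estimates one-direction link bandwidth from transfer rate, lane count, and line encoding. The link parameters are not stated in the text above and are brought in as assumptions: DMI 2.0 is taken as four lanes at 5 GT/s with 8b/10b encoding, and DMI 3.0 as four lanes at 8 GT/s with 128b/130b encoding, in line with PCIe 2.0 and 3.0. The function name is hypothetical, and packet-level protocol overhead is ignored.

```python
# Back-of-envelope link bandwidth, one direction, line encoding only.
# Assumption: DMI 2.0 / 3.0 behave like x4 PCIe 2.0 / 3.0 links.

def link_gbytes_per_s(gt_per_s, lanes, payload_bits, total_bits):
    """Usable GB/s for a serial link: rate * lanes * encoding efficiency / 8."""
    return gt_per_s * lanes * payload_bits / total_bits / 8

dmi2 = link_gbytes_per_s(5.0, 4, 8, 10)            # DMI 2.0: 8b/10b encoding
dmi3 = link_gbytes_per_s(8.0, 4, 128, 130)         # DMI 3.0: 128b/130b encoding
pch_lanes = link_gbytes_per_s(8.0, 20, 128, 130)   # 20 PCIe 3.0 lanes behind the PCH

# If every chipset lane were busy at once, how oversubscribed would DMI 3.0 be?
oversubscription = pch_lanes / dmi3

print(f"DMI 2.0: {dmi2:.2f} GB/s")
print(f"DMI 3.0: {dmi3:.2f} GB/s")
print(f"20 PCIe 3.0 lanes: {pch_lanes:.2f} GB/s")
print(f"Oversubscription over DMI 3.0: {oversubscription:.1f}x")
```

Under these assumptions the DMI link roughly doubles, from about 2 GB/s to just under 4 GB/s, while the 20 chipset lanes could in aggregate ask for about five times what DMI 3.0 can carry. That gap is one way to see why devices with heavy traffic are better served by a separate path to the CPU.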

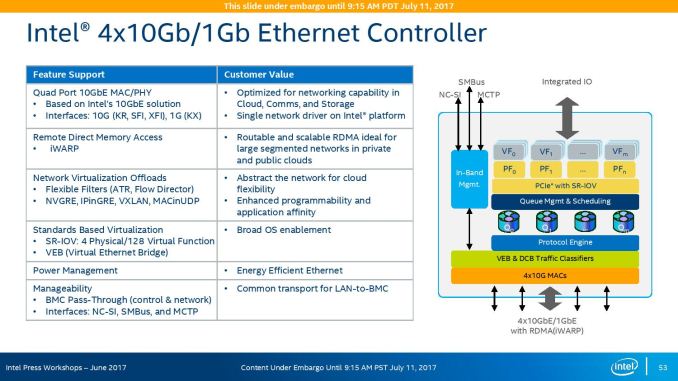

For the new generation of Lewisburg chipsets, if an OEM requires that a platform has access to a cryptography engine or 10 Gigabit Ethernet, then they can attach 8 or 16 lanes from the processor into the chipset via this CPU Uplink port. Depending on which model of chipset is being used, this can provide up to four 10 GbE ports with iWARP RDMA, or up to 100 Gb/s of IPSec/SSL throughput through QuickAssist.
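To put the 8-lane and 16-lane uplink options in perspective, here is a minimal sketch comparing their raw capacity against the headline demands. It assumes PCIe 3.0 signalling (8 GT/s per lane, 128b/130b encoding), ignores packet overhead, and reads the QuickAssist figure as 100 gigabits per second; that unit, the names, and the workload labels are assumptions made for illustration.

```python
# Rough capacity check for the CPU Uplink, one direction, in Gb/s.
# Assumptions: PCIe 3.0 lanes, line encoding overhead only.

PCIE3_GT_PER_S = 8.0
ENCODING_EFFICIENCY = 128 / 130

def uplink_gbits_per_s(lanes):
    """Approximate usable Gb/s for a PCIe 3.0 link of the given width."""
    return PCIE3_GT_PER_S * lanes * ENCODING_EFFICIENCY

# Hypothetical demand figures taken from the headline numbers, in Gb/s.
demands = {
    "4 x 10 GbE": 4 * 10.0,
    "QuickAssist IPSec/SSL": 100.0,
}

def fits(lanes, demand_gbits):
    """True if the uplink width can carry the demand at line rate."""
    return uplink_gbits_per_s(lanes) >= demand_gbits

for lanes in (8, 16):
    capacity = uplink_gbits_per_s(lanes)
    for name, demand in demands.items():
        verdict = "fits" if fits(lanes, demand) else "exceeds capacity"
        print(f"x{lanes} ({capacity:.0f} Gb/s) vs {name} ({demand:.0f} Gb/s): {verdict}")
```

On these numbers an x8 uplink (about 63 Gb/s) covers four 10 GbE ports at line rate but not the full QuickAssist figure, which would need the x16 option (about 126 Gb/s). Real throughput will be lower once protocol overhead is included.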

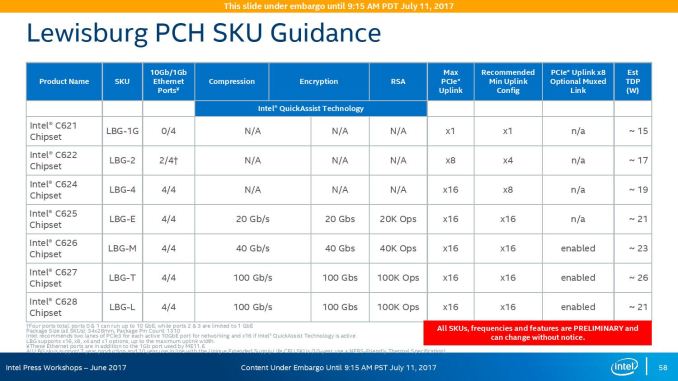

Intel will offer seven different versions of the chipset, varying in 10G and QAT support, but also varying in TDP:

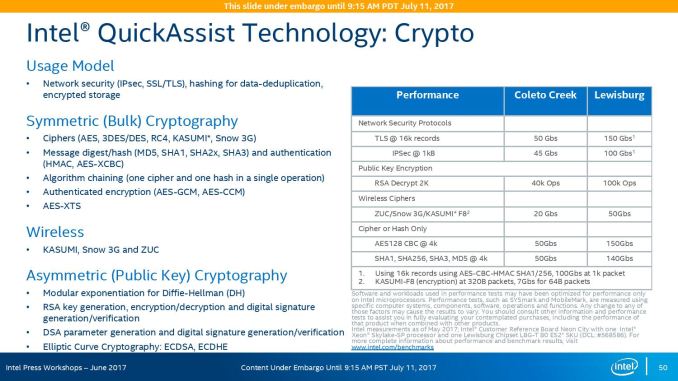

On the cryptography side, Intel has previously sold add-in PCIe cards for QuickAssist, but is now moving it onto the platform directly. With QuickAssist in the chipset, it can be paired with the Ethernet traffic and do its work in situ. Specifically, Intel points to bulk cryptography (150 Gb/s AES256/SHA256), public key encryption (100k ops per second of RSA2048), and compression (100+ Gb/s deflate).

For the 10 GbE, Intel has designed this to be paired with the X722 PHY. It supports network virtualization, traffic shaping, and Intel’s Data Plane Development Kit for advanced packet forwarding.

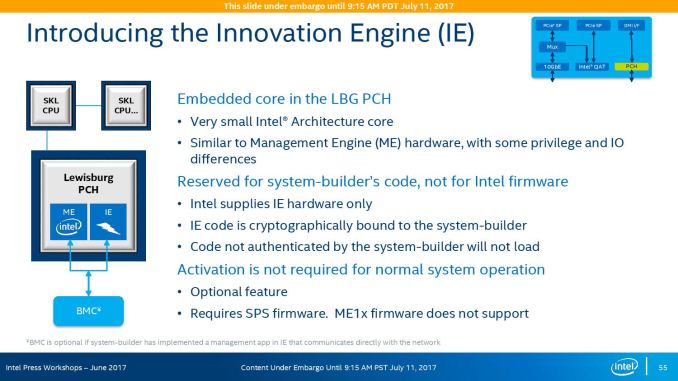

The chipset will also include a new feature called Intel’s Innovation Engine: a small embedded core in the PCH that mirrors Intel’s Management Engine but is designed for system builders and integrators. This allows specialist firmware to manage some of the capabilities of the system on top of Intel’s ME. The engine is essentially an Intel Quark x86 core with 1.4MB SRAM.

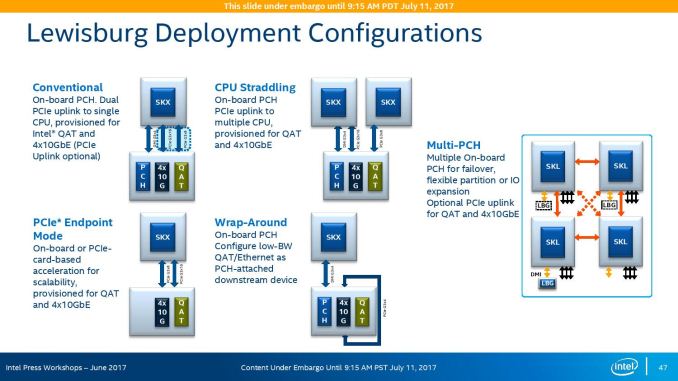

The chipsets are also designed to be supported between different CPUs within the same multi-processor system, or for a system to support multiple chipsets at once as needed.

219 Comments

StargateSg7 - Sunday, August 6, 2017

Maybe I'm spoiled, but to me a BIG database is something I usually deal with on a daily basis, such as 500,000 large and small video files ranging from two megabytes to over a PETABYTE (1000 Terabytes) per file running on a Windows and Linux network.

What sort of read and write speeds do we get between disk, main memory and CPU and when doing special FX LIVE on such files which can be 960 x 540 pixel youtube-style videos up to full blown 120 fps 8192 x 4320 pixel RAW 64 bits per pixel colour RGBA files used for editing and video post-production.

AND I need for the smaller files, total I/O-transaction rates at around OVER 500,000 STREAMS of 1-to-1000 64 kilobyte unique packets read and written PER SECOND. Basically 500,000 different users simultaneously need up to one thousand 64 kilobyte packets per second EACH sent to and read from their devices.

Obviously Disk speed and network comm speed is an issue here, but on a low-level hardware basis, how much can these new Intel and AMD chips handle INTERNALLY on such massive data requirements?

I need EXABYTE-level storage management on a chip! Can EITHER Xeon or EPyC do this well? Which One is the winner? ... Based upon this report it seems multiple 4-way EPyC processors on waterblocked blades could be racked on a 100 gigabit (or faster) fibre backbone to do 500,000 simultaneous users at a level MUCH CHEAPER than me having to goto IBM or HP for a 30+ million dollar HPC solution!

PixyMisa - Tuesday, July 11, 2017

It seems like a well-balanced article to me. Sure the DB performance issue is a corner case, but from a technical point of view it's worth knowing.

I'd love to see a test on a larger database (tens of GB) though.

philehidiot - Wednesday, July 12, 2017

It seems to me that some people should set up their own server review websites in order that they might find the unbiased balance that they so crave. They might also find a time dilation device that will allow them to perform the multitude of different workload tests they so desire. I believe this article stated quite clearly the time constraints and the limitations imposed by such constraints. This means that the benchmarks were scheduled down to the minute to get as many in as possible and therefore performing different tests based on the results of the previous benchmarks would have put the entire review dataset in jeopardy.

It might be nice to consider just how much data has been acquired here, how it might have been done and the degree of interpretation. It might also be worth considering, if you can do a better job, setting up shop on your own and competing as obviously the standard would be so much higher.

Sigh.

JohanAnandtech - Thursday, July 13, 2017

Thank you for being reasonable. :-) Many of the benchmarks (Tinymembench, Stream, SPEC, etc.) can be repeated, so people can actually check that we are unbiased.

Shankar1962 - Monday, July 17, 2017

Don't go by the labs idiot

Understand what real world workloads are.....understand what owning an entire rack means ......you started foul language so you deserve the same respect from me......

roybotnik - Wednesday, July 12, 2017

EPYC looks extremely good here aside from the database benchmark, which isn't a useful benchmark anyways. Need to see the DB performance with 100GB+ of memory in use.

CarlosYus - Friday, July 14, 2017

A detailed and unbiased article. I'm waiting for more tests as testing time passes.

3.2 GHz is a moderate Turbo for AMD EPYC, I think AMD could push it further with a higher thermal envelope i/o 14 nm process improvement in the coming months.

mdw9604 - Tuesday, July 11, 2017

Nice, comprehensive article. Glad to see AMD is competitive once again in the server CPU space.

nathanddrews - Tuesday, July 11, 2017

"Competitive" seems like an understatement, but yes, AMD is certainly bringing it!

ddriver - Tuesday, July 11, 2017

Yeah, offering pretty much double the value is so barely competitive LOL.