The Intel Optane Memory (SSD) Preview: 32GB of Kaby Lake Caching

by Billy Tallis on April 24, 2017 12:00 PM EST - Posted in: SSDs, Storage, Intel, PCIe SSD, SSD Caching, M.2, NVMe, 3D XPoint, Optane, Optane Memory

Mixed Read/Write Performance

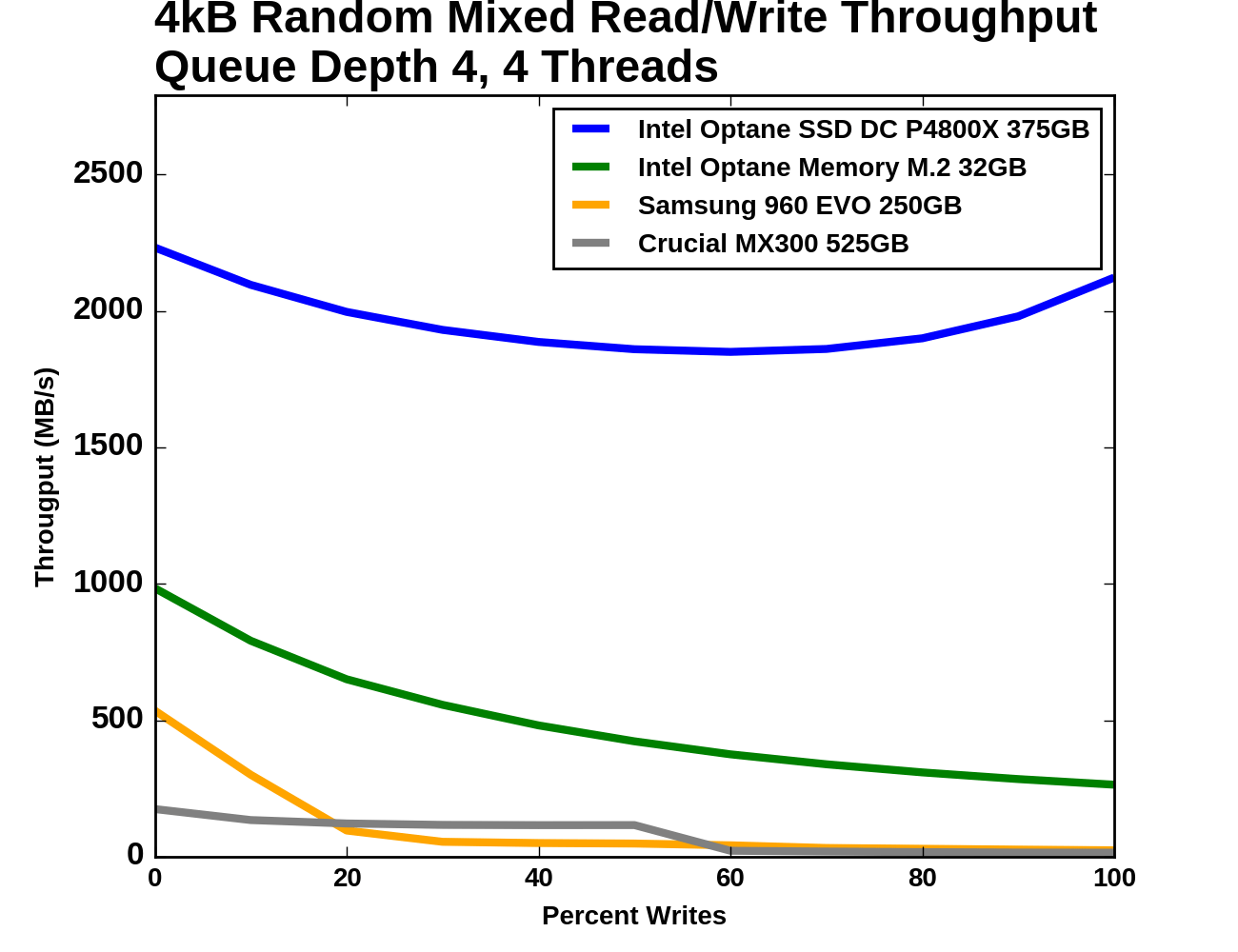

Workloads consisting of a mix of reads and writes can be particularly challenging for flash-based SSDs. When a write operation interrupts a string of reads, it will block access to at least one flash chip for a period of time that is substantially longer than a read operation takes. This hurts the latency of any read operations that were waiting on that chip, and with enough write operations, throughput can be severely impacted. If the write command triggers an erase operation on one or more flash chips, the traffic jam is many times worse.

The occasional read interrupting a string of write commands doesn't necessarily cause much of a backlog, because writes are usually buffered by the controller anyway. But depending on how much unwritten data the controller is willing to buffer and for how long, a burst of reads could force the drive to begin flushing outstanding writes before they've all been coalesced into optimally sized writes.

This mixed workload test is an extension of what Intel describes in their specifications for the Optane SSD DC P4800X. A total queue depth of 16 is achieved using four worker threads, each performing a mix of random reads and random writes. Instead of just testing a 70% read mixture, the full range from pure reads to pure writes is tested at 10% increments. These tests were conducted on the Optane Memory as a standalone SSD, not in any caching configuration. Client and consumer workloads do consist of a mix of reads and writes, but never at queue depths this high; this test is included primarily for comparison between the two Optane devices.
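The test matrix described above can be enumerated in a few lines. This is only a sketch of the parameters the text gives (queue depth 16, four workers, 10% steps). The even split of queue depth across workers and the names `MixPhase` and `build_phases` are assumptions made for illustration, and nothing here reflects the actual benchmarking tool or block size used for the review.

```python
from dataclasses import dataclass

TOTAL_QUEUE_DEPTH = 16  # from the article
WORKER_THREADS = 4      # from the article


@dataclass(frozen=True)
class MixPhase:
    read_pct: int          # share of I/Os that are random reads
    write_pct: int         # share of I/Os that are random writes
    workers: int
    depth_per_worker: int  # assumption: total depth is split evenly


def build_phases(step: int = 10) -> list[MixPhase]:
    """Enumerate phases from pure reads (100%) down to pure writes (0% reads)."""
    depth_per_worker = TOTAL_QUEUE_DEPTH // WORKER_THREADS
    return [
        MixPhase(read_pct, 100 - read_pct, WORKER_THREADS, depth_per_worker)
        for read_pct in range(100, -1, -step)
    ]


if __name__ == "__main__":
    for phase in build_phases():
        print(f"{phase.read_pct:3d}% reads / {phase.write_pct:3d}% writes, "
              f"{phase.workers} workers x QD{phase.depth_per_worker}")
```

The 70% read phase that Intel's specification uses is just one of the eleven phases this produces.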

[Chart: mixed read/write throughput across the read/write mix; vertical axis selectable as IOPS or MB/s]

At the beginning of the test where the workload is purely random reads, the four drives almost form a geometric progression: the Optane Memory is a little under half as fast as the P4800X and a little under twice as fast as the Samsung 960 EVO, and the MX300 is about a third as fast as the 960 EVO. As the proportion of writes increases, the flash SSDs lose throughput quickly. The Optane Memory declines across the entire test but gradually, ending up at a random write speed around one fourth of its random read speed. The P4800X has enough random write throughput to rebound during the final phases of the test, ending up with a random write throughput almost as high as the random read throughput.

[Chart: mixed read/write latency across the read/write mix; selectable as Mean, Median, 99th Percentile or 99.999th Percentile]

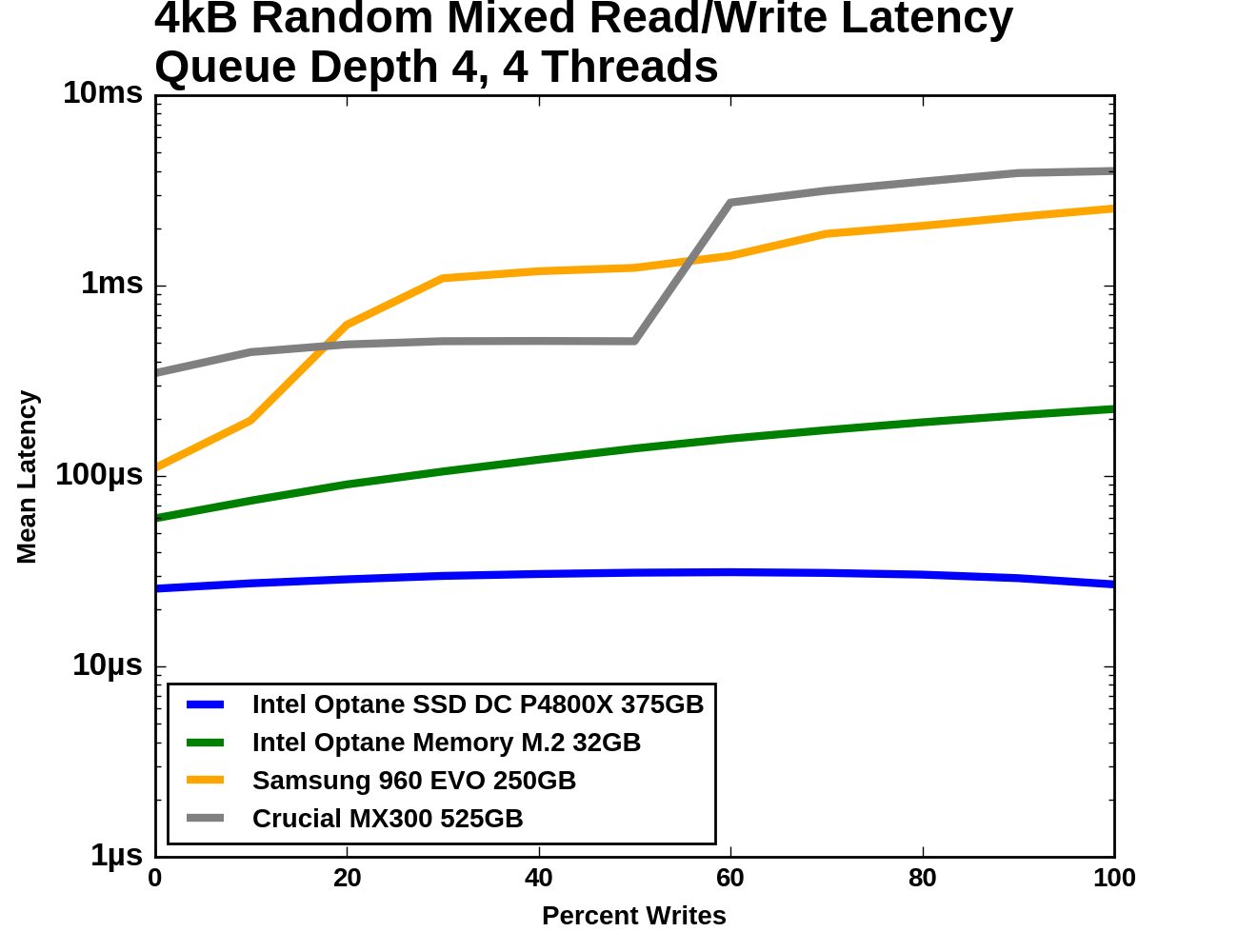

The flash SSDs actually manage to deliver better median latency than the Optane Memory through a portion of the test, after they've shed most of their throughput. For the 99th and 99.999th percentile latencies, the flash SSDs perform much worse once writes are added to the mix, ending up almost 100 times slower than the Optane Memory.
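To see how a drive can post a good median while its tail latencies are far worse, it helps to compute the four statistics from a single sample set. The sketch below uses a nearest-rank percentile and synthetic latencies that are invented purely for illustration; they are not measurements from any drive in this review.

```python
import math
import statistics


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    # round() guards against floating-point noise pushing ceil() up by one rank
    rank = max(1, math.ceil(round(pct * len(ordered) / 100, 6)))
    return ordered[rank - 1]


def summarize(samples):
    """Return the four latency statistics used in the charts."""
    return {
        "mean": statistics.fmean(samples),
        "median": percentile(samples, 50),
        "p99": percentile(samples, 99),
        "p99.999": percentile(samples, 99.999),
    }


# Synthetic latencies in microseconds (made up, not measured):
# most reads are fast, some wait behind a write, a few wait behind an erase.
SAMPLES = [20] * 98_000 + [500] * 1_990 + [5_000] * 10

if __name__ == "__main__":
    for name, value in summarize(SAMPLES).items():
        print(f"{name:>8}: {value:.2f} us")
```

In this made-up distribution the median is untouched by the stalled reads, while the 99th and 99.999th percentiles are set entirely by them.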

Idle Power Consumption

There are two main ways that an NVMe SSD can save power when idle. The first is through suspending the PCIe link through the Active State Power Management (ASPM) mechanism, analogous to the SATA Link Power Management mechanism. Both define two power saving modes: an intermediate power saving mode with strict wake-up latency requirements (e.g. 10µs for SATA "Partial" state) and a deeper state with looser wake-up requirements (e.g. 10ms for SATA "Slumber" state). SATA Link Power Management is supported by almost all SSDs and host systems, though it is commonly off by default for desktops. PCIe ASPM support, on the other hand, is a minefield, and it is common to encounter devices that do not implement it or implement it incorrectly, especially among desktops. Forcing PCIe ASPM on for a system that defaults to disabling it may lead to the system locking up.
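On Linux, the kernel's current ASPM policy can be inspected without changing it, which is the safe way to find out what a system defaults to. The sketch below assumes a Linux host that exposes `/sys/module/pcie_aspm/parameters/policy`, where the active policy is shown in square brackets. The function names are illustrative, and the code only reads the setting.

```python
from pathlib import Path

# Assumption: Linux host with the pcie_aspm module parameters exposed in sysfs.
ASPM_POLICY_PATH = Path("/sys/module/pcie_aspm/parameters/policy")


def parse_aspm_policy(text):
    """Return (active_policy, all_policies) from a string like
    '[default] performance powersave powersupersave'."""
    active = None
    policies = []
    for token in text.split():
        if token.startswith("[") and token.endswith("]"):
            token = token[1:-1]
            active = token  # the bracketed entry is the one in effect
        policies.append(token)
    return active, policies


def read_aspm_policy(path=ASPM_POLICY_PATH):
    """Read the current policy, or return None if the file is unavailable."""
    try:
        return parse_aspm_policy(path.read_text())
    except OSError:
        return None


if __name__ == "__main__":
    print(read_aspm_policy())
```

Reading the policy is harmless. Writing a more aggressive policy is the step that carries the lock-up risk described above.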

The NVMe standard also defines a drive power management mechanism that is separate from PCIe link power management. The SSD can define up to 32 different power states and inform the host of the time taken to enter and exit these states. Some of these power states can be operational states where the drive continues to perform I/O with a restricted power budget, while others are non-operational idle states. The host system can either directly set these power states, or it can declare rules for which power states the drive may autonomously transition to after being idle for different lengths of time. NVMe power management including Autonomous Power State Transition (APST) fortunately does not depend on motherboard support the way PCIe ASPM does, so it should eventually reach the same widespread availability that SATA Link Power Management enjoys.
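The selection logic behind autonomous transitions can be sketched as a small model: given the power states a drive advertises, pick the lowest-power non-operational state whose entry plus exit latency fits a host-defined budget. The `PowerState` fields, the `pick_idle_state` helper and the example values are all hypothetical; this is not the policy of any particular driver, and the numbers do not come from any datasheet.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PowerState:
    index: int
    max_power_mw: int
    operational: bool      # True if the drive can still perform I/O in this state
    entry_latency_us: int
    exit_latency_us: int


def pick_idle_state(states, max_latency_us) -> Optional[PowerState]:
    """Lowest-power non-operational state whose entry+exit latency fits the budget."""
    candidates = [
        s for s in states
        if not s.operational
        and s.entry_latency_us + s.exit_latency_us <= max_latency_us
    ]
    return min(candidates, key=lambda s: s.max_power_mw, default=None)


# Hypothetical drive; values invented for illustration only.
EXAMPLE_STATES = [
    PowerState(0, 6000, True, 0, 0),
    PowerState(1, 3000, True, 0, 0),
    PowerState(2, 40, False, 200, 1_000),
    PowerState(3, 5, False, 2_000, 20_000),
]

if __name__ == "__main__":
    for budget in (100, 5_000, 100_000):
        chosen = pick_idle_state(EXAMPLE_STATES, budget)
        print(budget, "->", chosen.index if chosen else None)
```

A drive that advertises only operational states gives this function nothing to choose from, so it returns `None` regardless of the latency budget.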

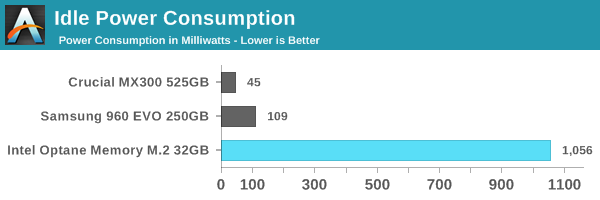

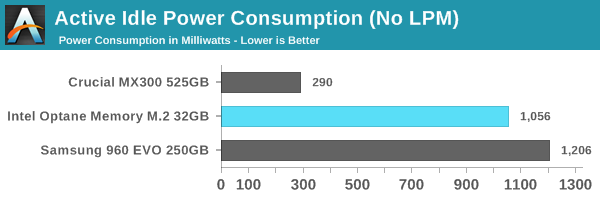

We report two idle power values for each drive: an active idle measurement taken with none of the above power management states engaged, and an idle power measurement with either SATA LPM Slumber state or the lowest-power NVMe non-operational power state, if supported. These tests were conducted on the Optane Memory as a standalone SSD, not in any caching configuration.

With no support for NVMe idle power states, the Optane Memory draws the rated 1W at idle while the SATA and flash-based NVMe drives drop to low power states with a tenth of the power draw or less. Even without using low power states, the Crucial MX300 uses a fraction of the power, and the Samsung 960 EVO uses only 150mW more to keep twice as many PCIe lanes connected.
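As a rough sense of scale, idle power converts to energy by multiplying by time. The sketch below uses the 1W rated idle figure from the text and takes "a tenth of the power draw" as an upper bound of 0.1W for the other drives. The 24-hour idle period is an arbitrary assumption chosen for illustration.

```python
OPTANE_IDLE_W = 1.0      # rated idle power quoted in the article
LOW_POWER_IDLE_W = 0.1   # "a tenth ... or less", treated here as an upper bound


def idle_energy_wh(idle_power_w, hours):
    """Energy in watt-hours drawn by a drive idling at constant power."""
    return idle_power_w * hours


if __name__ == "__main__":
    hours = 24  # assumption: a full day spent idle
    print(f"1 W idle:   {idle_energy_wh(OPTANE_IDLE_W, hours):.1f} Wh")
    print(f"0.1 W idle: {idle_energy_wh(LOW_POWER_IDLE_W, hours):.1f} Wh")
```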

The Optane Memory is a tough sell for anyone concerned with power consumption. In a typical desktop it won't be enough to worry about, but Intel definitely needs to add proper power management to the next iteration of this product.

110 Comments


name99 - Tuesday, April 25, 2017 - link

Why are you so sure you understand the technology? Intel has told us nothing about how it works.

What we have are

- a bunch of promises from Intel that are DRAMATICALLY not met

- an exceptionally lousy (expensive, low capacity) product being sold.

You can interpret these in many ways, but the interpretation that "Intel over promised and dramatically underdelivered" is certainly every bit as legit as the interpretation "just wait, the next version (which ships when?) will be super-awesome".

If Optane is capable TODAY of density comparable to NAND, then why ship such a lousy capacity? And if it's not capable, then what makes you so sure that it can reach NAND density? Getting 3D-NAND to work was not a cheap exercise. Does Intel have the stomach (and the project management skills) to last till that point, especially given that the PoS that they're shipping today ain't gonna generate enough of a revenue stream to pay for the electric bill of the Optane team while they take however long they need to get out the next generation?

emn13 - Tuesday, April 25, 2017 - link

Intel hasn't confirmed what it is, but AFAICT all the signs point to xpoint being phase-change ram, or at least very similar to it. Which still leaves a lot of wiggle room, of course.

ddriver - Tuesday, April 25, 2017 - link

IIRC they have explicitly denied xpoint being PCM. But then again, who would ever trust a corporate entity, and why?

Cellar - Tuesday, April 25, 2017 - link

Implying Intel would use only the revenue of Optane to fund their next generation of Optane. You forget how much profit they make milking out their Processors? *Insert Woody Harrelson wiping tears away with money gif*

name99 - Tuesday, April 25, 2017 - link

Be careful. What he's criticizing is the HYPE (i.e. Intel's business plan for this technology) rather than the technology itself, and in that respect he is basically correct. It's hard to see what more Intel could have done to give this technology a bad name.

- We start with the ridiculous expectations that were made for it. Most importantly the impression given that the RAM-REPLACEMENT version (which is what actually changes things, not a faster SSD) was just around the corner.

- Then we get this attempt to sell to the consumer market a product that makes ZERO sense for consumers along any dimension. The product may have a place in enterprise (where there's often value in exceptionally fast, albeit expensive, particular types of storage), but for consumers there's nothing of value here. Seriously, ignore the numbers, think EXPERIENCE. In what way is the Optane+hard drive experience better than the larger SSD+hard drive or even large SSD and no hard drive experience at the same price points? What, in the CONSUMER experience, takes advantage of the particular strengths of Optane?

- Then we get this idiotic power management nonsense, which reduces the value even further for a certain (now larger than desktop) segment of mobile computing

- And the enforced tying of the whole thing to particular Intel chipsets just shrinks the potential market even further. For example --- you know who's always investigating potential storage solutions and how they could be faster? Apple. It is conceivable (obviously in the absence of data none of us knows, and Intel won't provide the data) that a fusion drive consisting of, say, 4GB of Optane fused to an iPhone or iPad's 64 or 128 or 256GB could have advantages in terms of either performance or power. (I'm thinking particularly for power in terms of allowing small writes to coalesce in the Optane.)

But Intel seems utterly uninterested in investigating any sort of market outside the tiny tiny market it has defined.

Maybe Optane has the POTENTIAL to be great tech in three years. (Who knows since, as I said, right now what it ACTUALLY is is a secret, along with all its real full spectrum of characteristics).

But as a product launch, this is a disaster. Worse than all those previous Intel disasters whose names you've forgotten like ViiV or Intel Play or the Intel Personal Audio Player 3000 or the Intel Dot.Station.

Reflex - Tuesday, April 25, 2017 - link

Meanwhile in the server space we are pretty happy with what we've seen so far. I get that it's not the holy grail you expected, but honestly I didn't read Intel's early info as an expectation that gen1 would be all things to all people and revolutionize the industry. What I saw, and what was delivered, was a path forward past the world of NAND and many of its limitations, with the potential to do more down the road.

Today, in low volume and limited form factors it likely will sell all that Intel can produce. My guess is that it will continue to move into the broader space as it improves incrementally generation over generation, like most new memory products have done. Honestly the greatest accomplishment here is Intel and Micron finally introducing a new memory type, at production quantity, with a reasonable cost for its initial markets. We've spent years hearing about phase-change, racetrack, memristor, MRAM and on and on, and nobody has managed to introduce anything at volume since NAND. This is a major milestone, and hopefully it touches off a race between Optane and other technologies that have been in the permanent 3-5 year bucket for a decade plus.

ddriver - Tuesday, April 25, 2017 - link

Yeah, I bet you are offering hypetane boards by the dozens LOL. But shouldn't it be more like "in the _servers that don't serve anyone_ space" since in order to take advantage of them low queue depth transfers and latencies, such a "server" would have to serve what, like a client or two?

I don't claim to be a "server specialist" like you apparently do, but I'd say if a server doesn't have a good saturation, then either your business sucks and you don't have any clients or you have more servers than you need and should cut back until you get a good saturation.

To what kind of servers is it that beneficial to shave off a few microseconds of data access? And again, only in low queue depth loads? I'd understand if hypetane stayed equally responsive regardless of the load, but as the load increases we see it dwindling down to the performance of available nand SSDs. Which means you won't be saving on say query time when the system is actually busy, and when the system is not it will be snappy enough as it is, without magical hypetane storage. After all, servers serve networks, and even local networks are slow enough to completely mask out them "tremendous" SSD latencies. And if we are talking an "internet" server, then the network latency is much, much worse than that.

You also evidently don't understand how the industry works. It is never about "the best thing that can be done", it is always about "the most profitable thing that can be done". As I've repeated many times, even NAND flash can be made tremendously faster, in terms of both latency and bandwidth, it is perfectly possible today and it has been technologically possible for years. Much like it has been possible to make cars that go 200 MPH, yet we only see a tiny fraction of the cars that are actually capable of reaching that speed. There has been a small but steady market for mram, but that's a niche product, it will never be mainstream because of technological limitations. It is pretty much the same thing with hypetane, regardless of how much intel are trying to shove it to consumers in useless product forms, it only makes sense in an extremely narrow niche. And it doesn't owe its performance to its "new memory type" but to its improved controller, and even then, its performance doesn't come anywhere close to what good old SLC is capable of technologically as a storage medium, which one should not confuse with a complete product stack.

The x25-e was launched almost 10 years ago. And its controller was very much "with the times" which is the reason the drive does a rather meager 250/170 mb/s. Yet even back then its latency was around 80 microseconds, with its "latest and greatest" hypetane struggling to beat that by a single order of magnitude 10 years later. Yet technologically the SLC PE cycle can go as low as 200 nanoseconds, which is 50 times better than hypetane and 400 times better than what the last pure SLC SSD controller was capable of.

No wonder the industry abandoned SLC - it was and still is too good not only for consumers but also for the enterprise. Which begs the question, with the SLC trump card being available for over a decade why would intel and micron waste money on researching a new media. And whether they really did that, or simply took good old SLC, smeared a bunch of lies, hype and cheap PR on it to step forward and say "here, we did something new".

I mean come on, when was the last time intel made something new? Oh that's right, back when they made netburst, and it ended up a huge flop. And then, where did the rescue come from? Something radically new? Nope, they got back to the same old tried and true, and improved instead of trying to innovate. Which is also what this current situation looks like.

I can honestly think of no better reason to be so secretive about the "amazing new xpoint", unless it actually is neither amazing, nor new, nor xpoint. I mean if it is a "tech secret" I don't see how they shouldn't be able to protect their IP via patents, I mean if it really is something new, it is not like they are short on the money it will take to patent it. So there is no good reason to keep it such a secret other than the intent to cultivate mystery over something that is not mysterious at all.

eddman - Tuesday, April 25, 2017 - link

This is what happens when people let their personal feelings get in the way.

"Even if they cure cancer, they still suck and I hate them"

ddriver - Tuesday, April 25, 2017 - link

Except it doesn't cure cancer. And I'd say it is always better to prevent cancer than to have the destructive treatment leave you a diminished being.

eddman - Tuesday, April 25, 2017 - link

Just admit you have a personal hatred towards MS, intel and nvidia, no matter what they do, and be done with it. It's beyond obvious.