The Intel Optane SSD DC P4800X (375GB) Review: Testing 3D XPoint Performance

by Billy Tallis on April 20, 2017 12:00 PM EST

Random Read

Random read speed is the most difficult performance metric for flash-based SSDs to improve on. There is very limited opportunity for a drive to do useful prefetching or caching, and parallelism from multiple dies and channels can only help at higher queue depths. The NVMe protocol reduces overhead slightly, but even a high-end enterprise PCIe SSD can struggle to offer random read throughput that would saturate a SATA link.

Real-world random reads are often blocking operations for an application, such as when traversing the filesystem to look up which logical blocks store the contents of a file. Opening even a non-fragmented file can require the OS to perform a chain of several random reads, and since each is dependent on the result of the last, they cannot be queued.

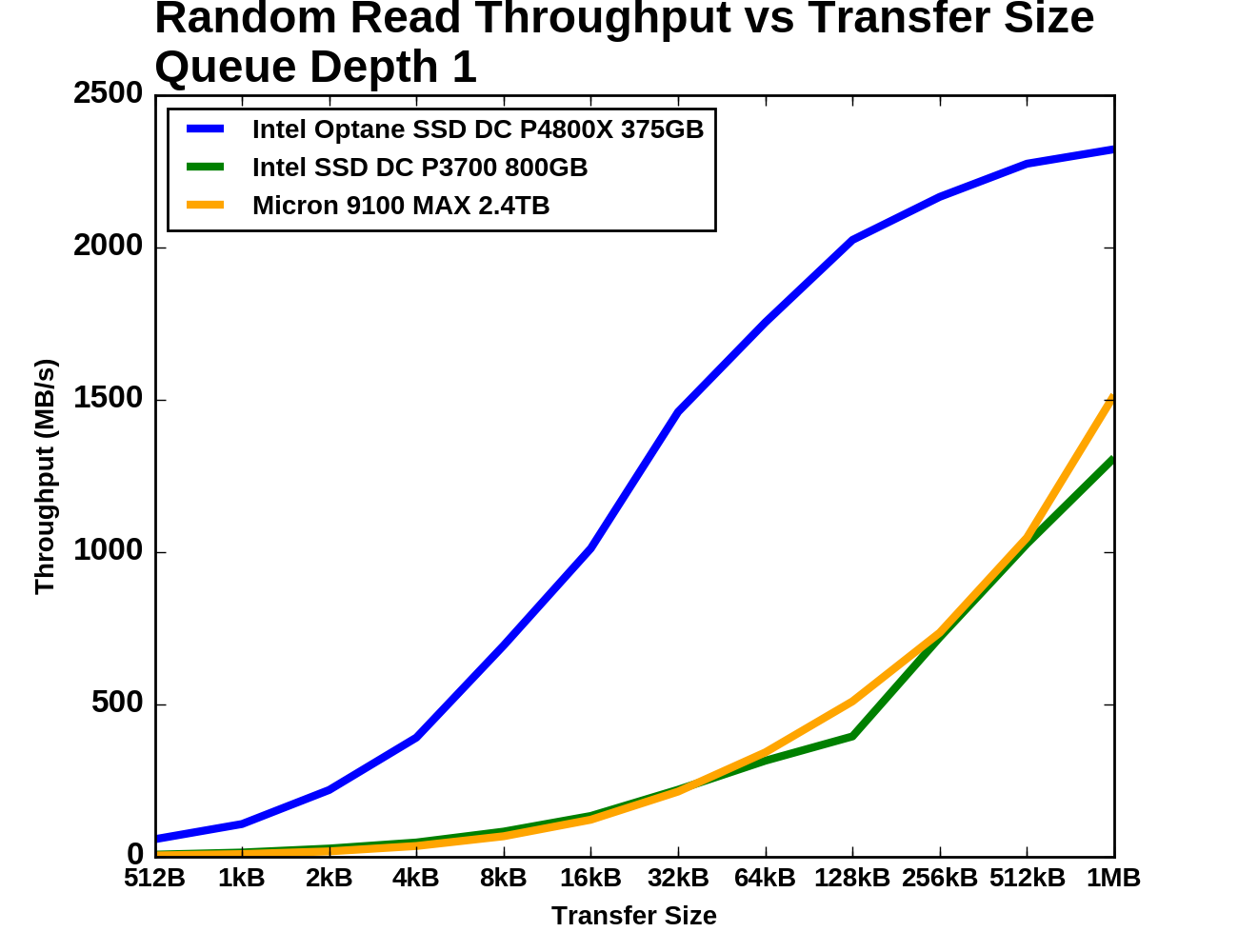

Our first test of random read performance looks at the dependence on transfer size. Most SSDs focus on 4kB random access as that is the most common page size for virtual memory systems and it is a common filesystem block size. Maximizing 4kB performance has gotten more difficult as NAND flash has moved to page sizes that are larger than 4kB, and some SSD vendors have started including 8kB random access specifications. It is worth noting that 3D XPoint memory, from a fundamental standpoint, does not impose any inherent block size restrictions on the Optane SSD, but for compatibility purposes the P4800X by default exposes a 512B sector size.

Queue Depth 1

For our test, each transfer size was tested for four minutes and the statistics exclude the first minute. The drives were preconditioned to steady state by filling them with 4kB random writes twice over.

The Optane SSD starts off with about eight times the throughput of the other drives for small random reads. As the transfer sizes grow past 16kB the Optane SSD's performance starts to level off and the flash SSDs start to catch up, with the Micron 9100 overtaking the Intel P3700. At 1MB transfer size the Optane SSD is providing only about 50% higher throughput than the Micron 9100.

Queue Depth >1

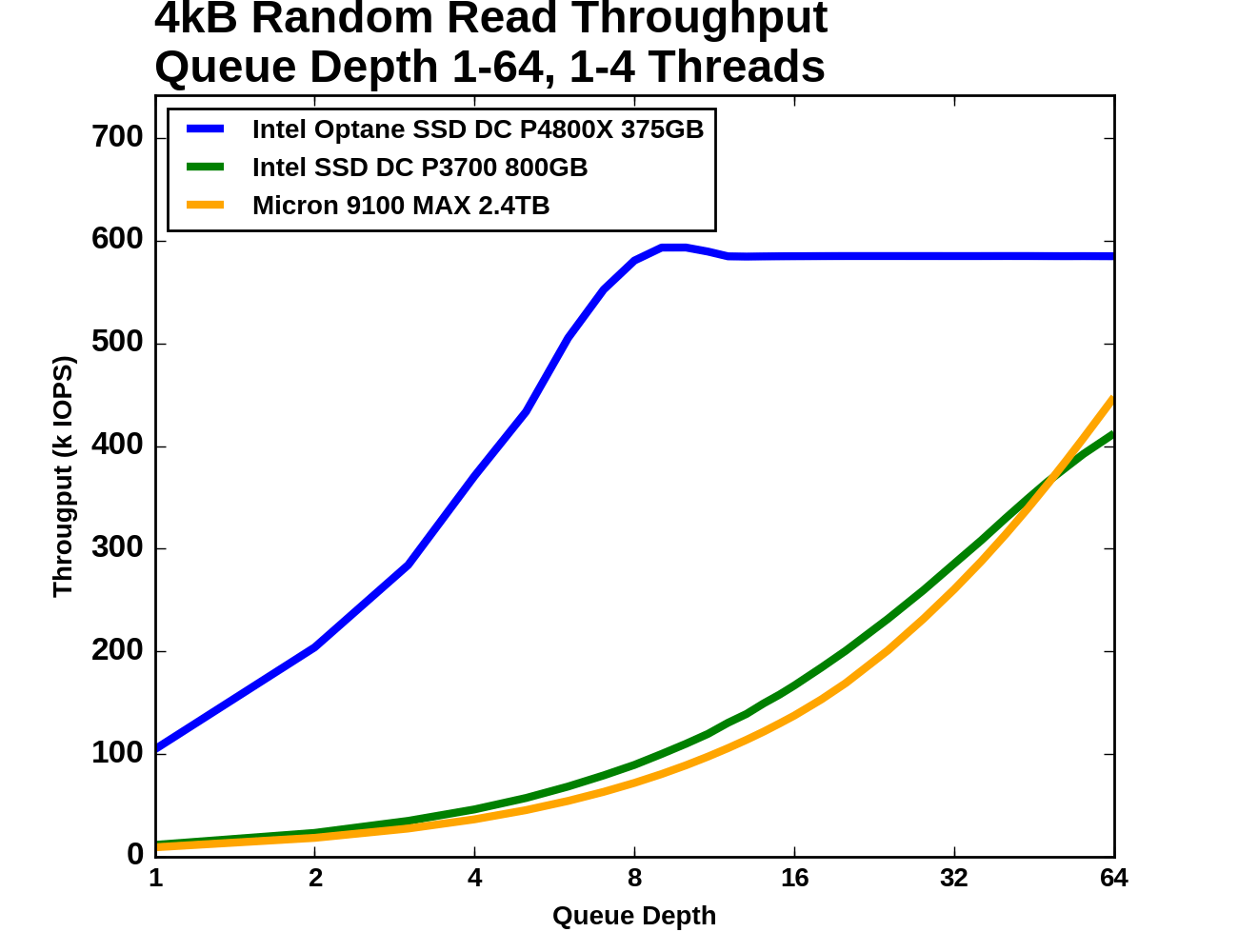

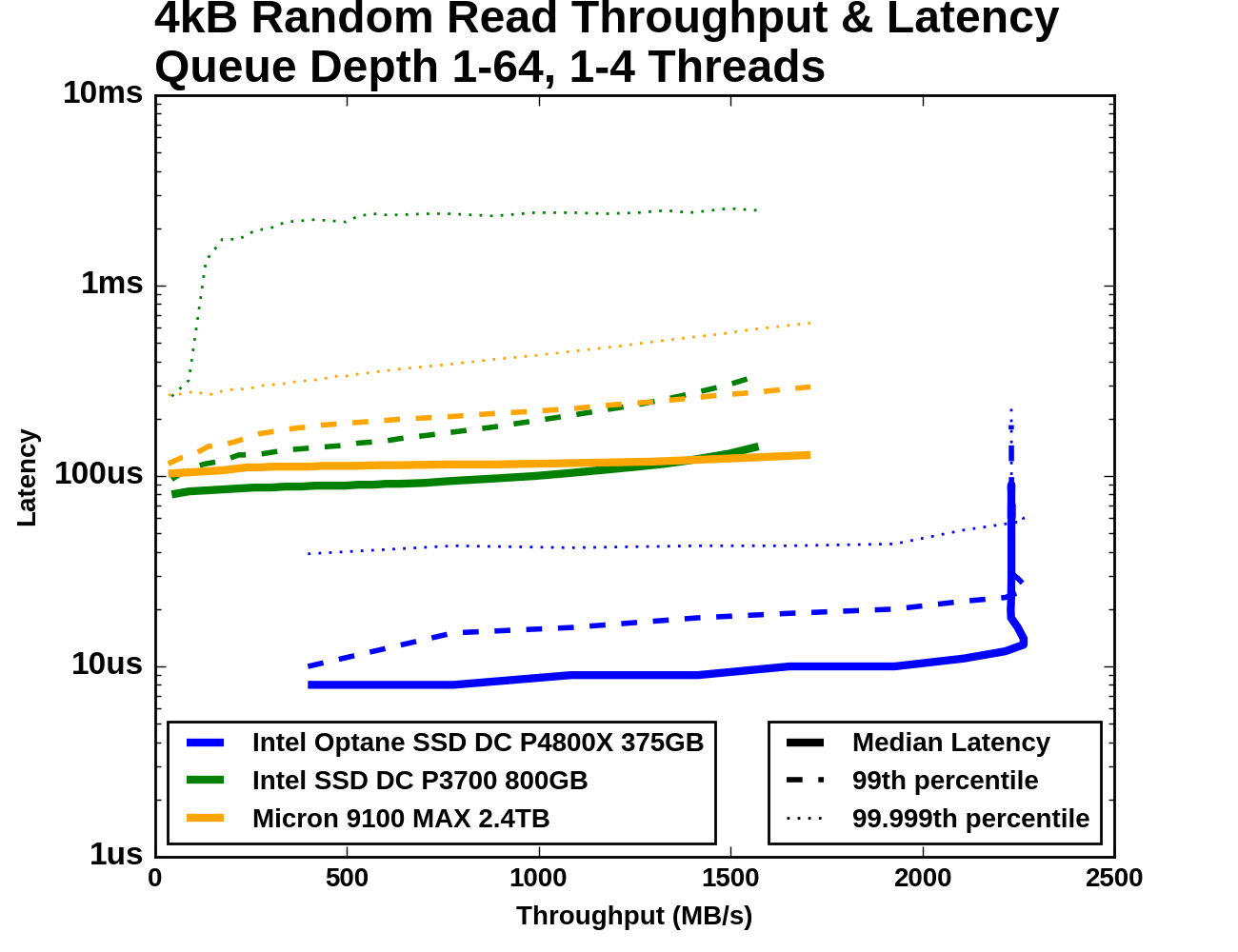

Next, we consider 4kB random read performance at queue depths greater than one. A single-threaded process is not capable of saturating the Optane SSD DC P4800X with random reads, so this test is conducted with up to four threads. The queue depths of each thread are adjusted so that the queue depth seen by the SSD varies from 1 to 64, with every single queue depth from 1 through 16, then 18, 20, and multiples of four up to 64 (so 24, 28, 32... to 64). The timing is the same as for the other tests: four minutes for each tested queue depth, with the first minute excluded from the statistics.
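The queue depth schedule described above can be written out explicitly. The following Python sketch generates the list of total queue depths. How the total is divided among the threads is not stated in the text, so `split_across_threads` is a hypothetical even split shown only for illustration.

```python
def queue_depth_schedule(max_qd=64):
    """Total queue depths: 1..16, then 18 and 20, then multiples of four up to max_qd."""
    depths = list(range(1, 17))                # every depth from 1 through 16
    depths += [18, 20]
    depths += list(range(24, max_qd + 1, 4))   # 24, 28, 32, ..., 64
    return depths


def split_across_threads(total_qd, max_threads=4):
    """Hypothetical split (an assumption): spread total_qd evenly over up to max_threads threads."""
    threads = min(max_threads, total_qd)
    base, extra = divmod(total_qd, threads)
    # The first `extra` threads carry one additional outstanding request.
    return [base + 1 if i < extra else base for i in range(threads)]


schedule = queue_depth_schedule()
for qd in schedule:
    print(qd, split_across_threads(qd))
```

Under these assumptions the schedule has 29 entries, so at four minutes per queue depth a full sweep takes 116 minutes per drive.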

Looking just at the range of throughputs and latencies achieved, it is clear that the Optane SSD DC P4800X is in a different league entirely from the flash SSDs. The Optane SSD saturates part way through the test with a throughput 30% higher than what the Micron 9100 can deliver even at QD64, and at the same time its 99.999th percentile latency is half of the Micron 9100's median latency.

Between the two flash SSDs, the Intel P3700 has better performance on average through most of the test, but its maximum achieved throughput is slightly lower than the Micron 9100's peak and the 9100 offers lower latency at the high end. The Micron 9100 also has much better 99.999th percentile latency across almost the entire range of queue depths.

In absolute terms, the Optane SSD's performance is uncontested. Even though the Optane SSD's random read throughput is saturating at QD8, by QD6 it's outperforming what either flash SSD can deliver at any reasonable queue depth. Beyond QD8 the Optane SSD does not deliver even incremental improvement in throughput and increasing queue depth just adds latency. This test stops at QD64, which isn't enough to saturate either flash SSD. The Micron 9100 MAX is rated for a maximum of 750k random read IOPS, but clearly the Optane SSD delivers far better performance at the kinds of queue depths that are reasonably attainable.
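Throughput for a fixed transfer size can be quoted either in IOPS or in MB/s, and converting between the two is simple arithmetic. A minimal sketch, assuming that 4kB means 4096 bytes and that 1 MB/s means 10^6 bytes per second (neither convention is stated in the text):

```python
def iops_to_mb_per_s(iops, block_bytes=4096):
    """Convert IOPS at a fixed transfer size to MB/s (decimal megabytes, an assumption)."""
    return iops * block_bytes / 1e6


def mb_per_s_to_iops(mb_per_s, block_bytes=4096):
    """Inverse conversion, using the same unit assumptions."""
    return mb_per_s * 1e6 / block_bytes


# The Micron 9100 MAX rating of 750k random read IOPS, expressed as bandwidth.
rated_mb_per_s = iops_to_mb_per_s(750_000)
print(rated_mb_per_s)
```

With these unit assumptions, the 750k IOPS rating corresponds to 3072 MB/s of 4kB random reads.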

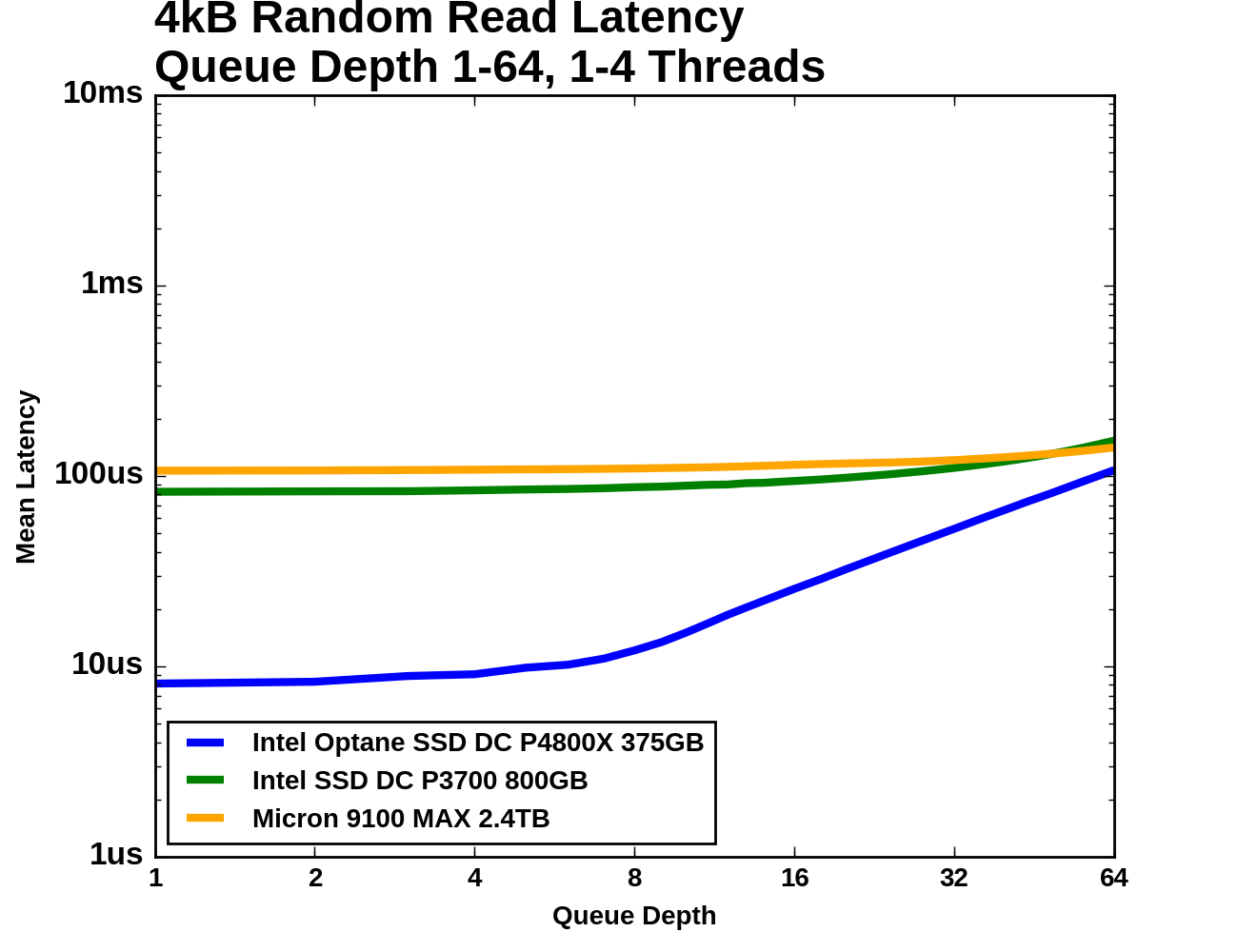

All three SSDs show median latency growing slowly across a wide range of queue depths. At QD1 the 99th percentile curves are very close to the median latency curves, but at high queue depths the 99th percentile latency is around twice the median. For the Optane SSD and the Micron 9100 MAX, the 99.999th percentile latency is higher by another factor of two or so, but the Intel P3700 cannot deliver such tight regulation and its worst-case latencies are well over a millisecond.
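To make the four latency statistics concrete, the sketch below computes them from a list of per-I/O completion times. It uses the nearest-rank percentile definition, which is an assumption; the text does not say how the percentiles were calculated. The sample data is synthetic and is not taken from any of the drives tested.

```python
import math
import statistics
from fractions import Fraction


def nearest_rank_percentile(ordered, pct):
    """Nearest-rank percentile of an ascending list.

    pct is passed as a string (e.g. "99.999") so that it converts to an exact
    fraction and the rank calculation is free of floating-point rounding.
    """
    rank = math.ceil(Fraction(pct) * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_summary(samples):
    """Mean, median, 99th and 99.999th percentile of a list of latencies."""
    ordered = sorted(samples)
    return {
        "mean": statistics.fmean(ordered),
        "median": statistics.median(ordered),
        "p99": nearest_rank_percentile(ordered, "99"),
        "p99.999": nearest_rank_percentile(ordered, "99.999"),
    }


# Synthetic data (not measurements): 100,000 completions in microseconds,
# of which 50 are slow outliers.
samples_us = [10.0] * 99_950 + [500.0] * 50
summary = latency_summary(samples_us)
print(summary)
```

In this synthetic example the median and 99th percentile are both 10 µs while the 99.999th percentile is 500 µs, which is why the tail statistic can separate drives that look similar on median latency. Note that with fewer than 100,000 samples the nearest-rank 99.999th percentile is simply the maximum.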

Random Write

Flash memory write operations are far slower than read operations. This is not always reflected in the performance specifications of SSDs because writes can be deferred and combined, allowing the SSD to signal completion before the data has actually moved from the drive's cache to the flash memory. The 3D XPoint memory used by the Optane SSD DC P4800X does have slower writes than reads, and it has been pointed out that Intel did not specify read latency when Optane was initially announced, but our results show that the disparity is not as large as it is for flash. With inherently fast writes and no page size and erase block limitations, the Optane SSD should be far less reliant on write combining and large spare areas to offer high throughput random writes. The drive's translation layer is probably far simpler than what flash SSDs require, potentially giving a latency advantage.

Queue Depth 1

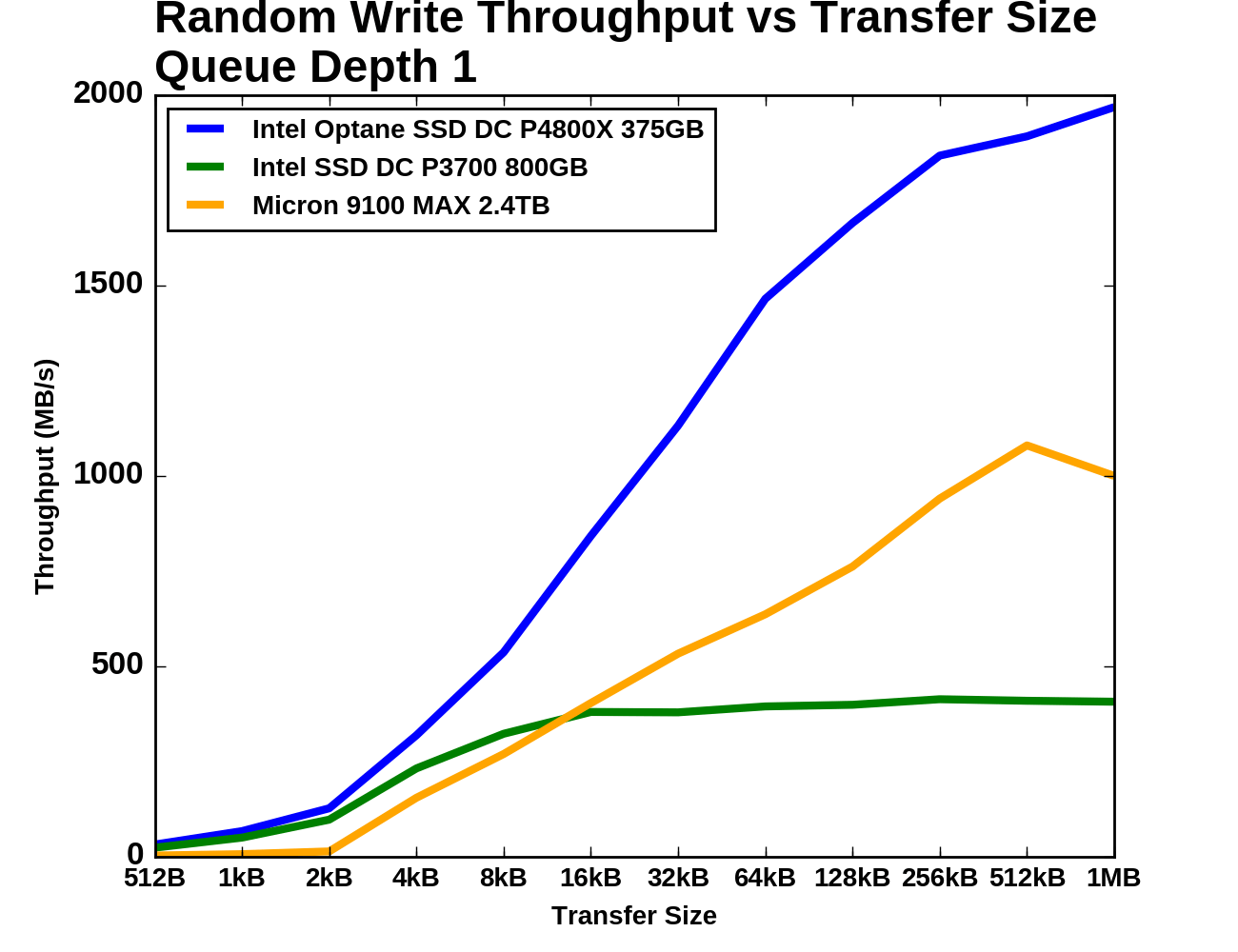

As with random reads, we first examine QD1 random write performance of different transfer sizes. 4kB is usually the most important size, but some applications will make smaller writes when the drive has a 512B sector size. Larger transfer sizes make the workload somewhat less random, reducing the amount of bookkeeping the SSD controller needs to do and generally allowing for increased performance.

The Micron 9100 really doesn't like random writes smaller than 4kB, but both Intel drives handle it relatively well. The Optane SSD DC P4800X has only a 30% higher throughput result than the P3700 for transfer sizes of 4kB and smaller. The Intel P3700 (owing mainly to its relatively low capacity) doesn't benefit very much as transfer sizes grow beyond 4kB, as it saturates soon after. The Optane SSD maintains a clear lead for transfers of 8kB and larger, averaging about twice the throughput of the Micron 9100 as both show diminishing returns from increased transfer sizes.

Queue Depth >1

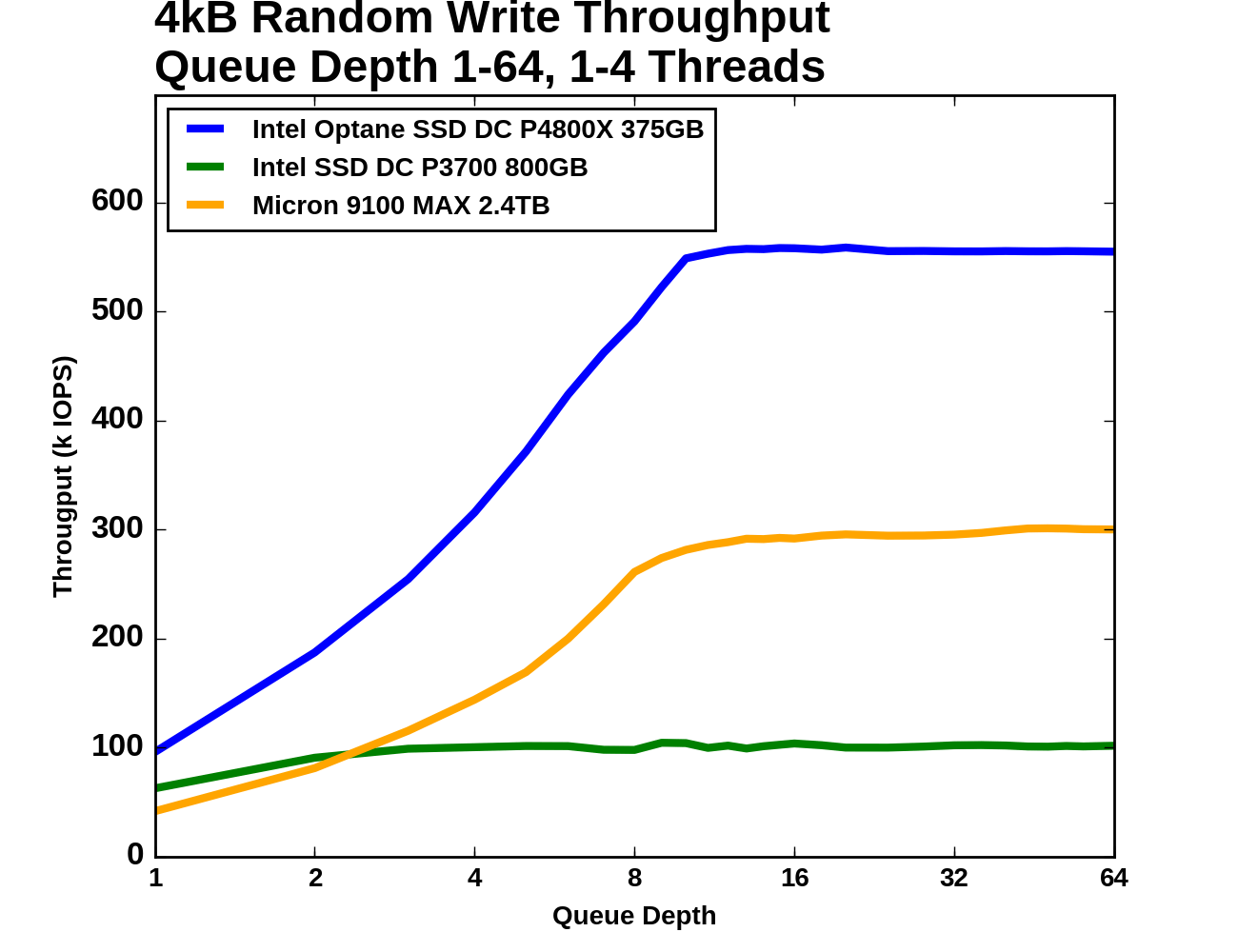

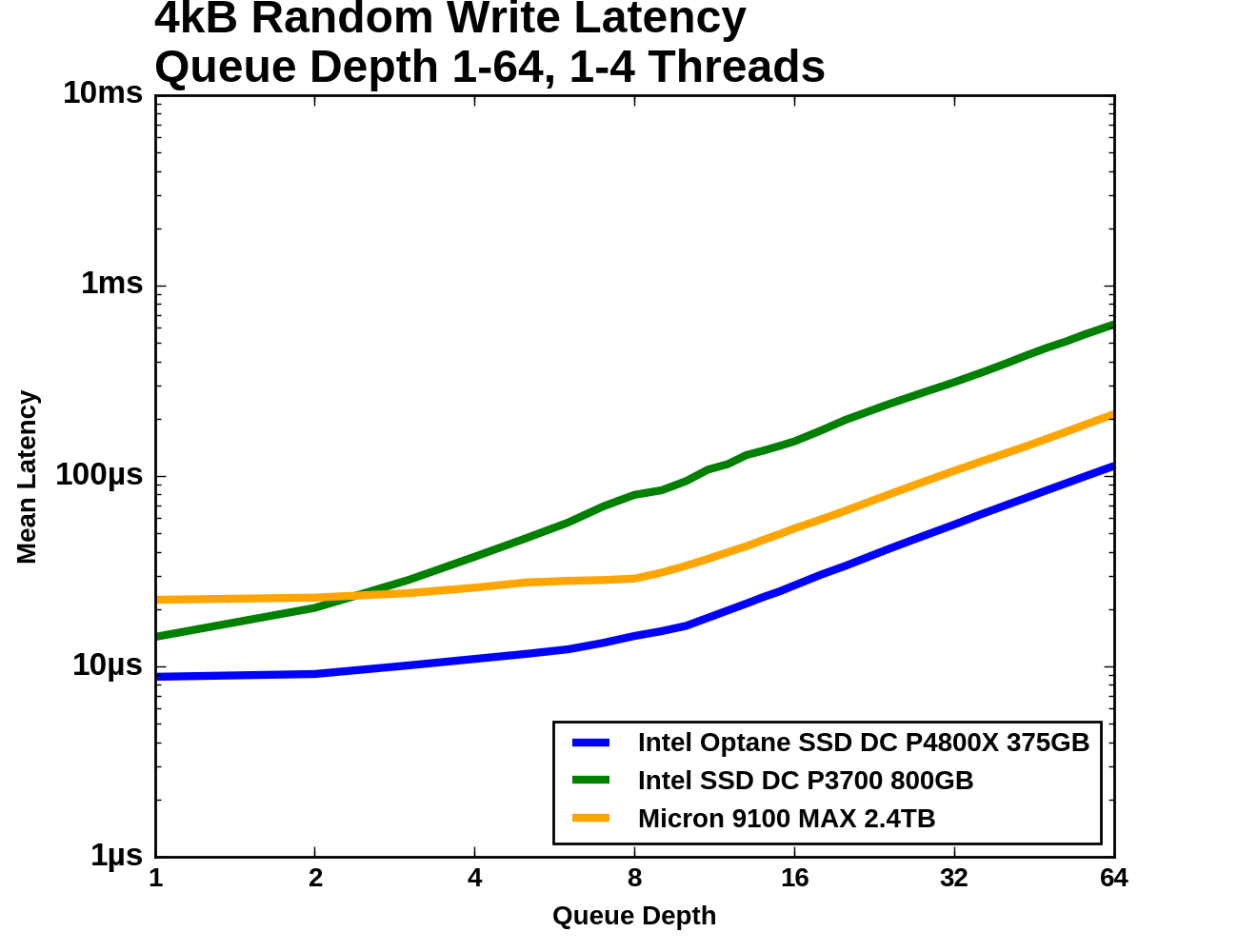

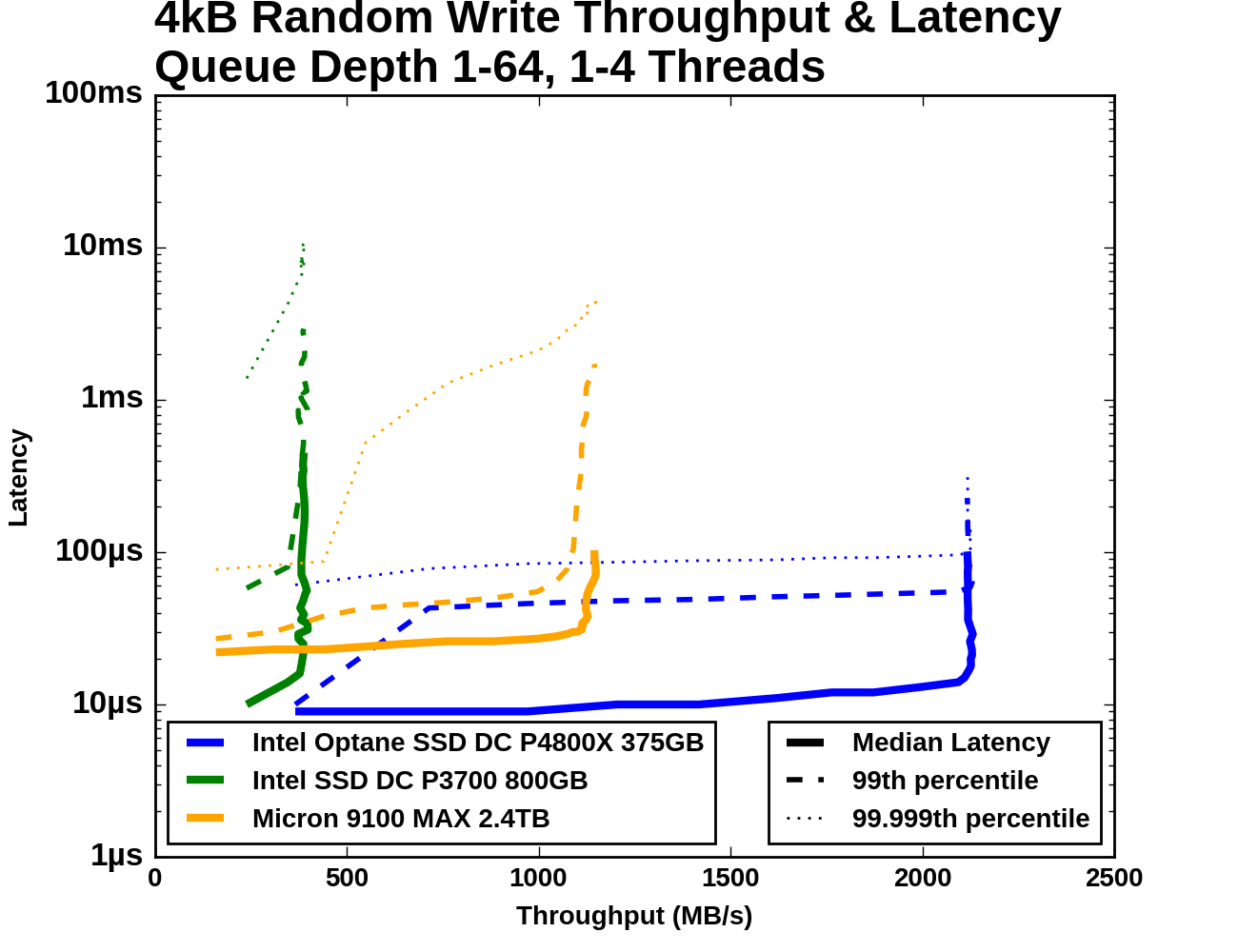

The test of 4kB random write throughput at different queue depths is structured identically to its counterpart random read test above. Queue depths from 1 to 64 are tested, with up to four threads used to generate this workload. Each tested queue depth is run for four minutes and the first minute is ignored when computing the statistics.

The QD1 starting points for all three drives are somewhat close together, with the fastest drive (the Optane SSD, of course) only offering about twice the random write throughput of the Micron 9100, with less than half the average latency. From there, the gaps widen quickly. The Intel P3700 reaches its maximum throughput very quickly and then the latency just piles up. The Micron 9100 keeps its median and 99th percentile latency reasonably well controlled until reaching its maximum throughput, which is half of what the Optane SSD can deliver.

While QD64 wasn't enough to completely saturate the flash SSDs with random reads, here with random writes, QD8 is enough for any of the drives, and the P3700 is done around QD2. The Micron 9100 starts out as the slowest of the three but soon overtakes the Intel P3700.

When examining the latency statistics, we should keep in mind that all three drives reached their full throughput by QD8. At queue depths higher than that, latency increases with no improvement to throughput. A well-tuned server will generally not be operating the drives in that regime, so the right half of these graphs can be mostly ignored.
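The relationship described above follows from Little's Law: at steady state, the number of outstanding requests equals throughput multiplied by mean latency. Once throughput stops growing, every additional queued request translates directly into added latency. A minimal sketch, using a hypothetical saturated throughput of 500,000 IOPS rather than a value measured in this review:

```python
def mean_latency_us(queue_depth, iops):
    """Little's Law rearranged: mean latency = outstanding requests / throughput."""
    return queue_depth * 1e6 / iops


SATURATED_IOPS = 500_000  # hypothetical figure for illustration, not a measurement

# Throughput is held constant, so latency scales linearly with queue depth.
latency_by_qd = {qd: mean_latency_us(qd, SATURATED_IOPS) for qd in (8, 16, 32, 64)}
for qd, latency in latency_by_qd.items():
    print(f"QD{qd}: {latency:.0f} us mean latency")
```

With throughput fixed, going from QD8 to QD64 multiplies mean latency by eight (16 µs to 128 µs in this hypothetical case) without completing any more I/Os per second.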

Median latency for these drives is quite flat until they reach saturation. 99th percentile latency for the flash SSDs shoots up when they're operated at unnecessarily high queue depths. The 99.999th percentile latency of the Intel P3700 is never less than 1ms and actually exceeds 10ms at the end of the test. The Micron 9100's 99.999th percentile latency is fairly close to that of the Optane SSD until the 9100 hits QD4, where it spikes and surpasses 1ms shortly before the drive reaches full throughput. Meanwhile, the Optane SSD's 99.999th percentile latency only climbs up to a third of a millisecond even at QD64.

117 Comments

melgross - Tuesday, April 25, 2017 - link

You're making the mistake those who know nothing make, which is surprising for you. This is a first generation product. It will get much faster, and much cheaper as time goes on. NAND will stagnate. You also have to remember that Intel never made the claim that this was as fast as RAM, or that it would be. The closest they came was to say that this would be in between NAND and RAM in speed. And yes, for some uses, it might be able to replace RAM. But that could be several generations down the road, in possibly 5 years, or so.

tuxRoller - Sunday, April 23, 2017 - link

I'm not sure i understand you.

You talk about "pages", but, i hope, the reviewer was only using dio, so there would be no page cache.

It's very unclear where you are getting this "~100x" number. Nvme connected dram has a plurality of hits around 4-6 us (depending on software) but it also has a distributed latency curve. However, i don't know what the latency is at the 99.999th percentile. The point is that even with dram's sub-100ns latency, it's still not staying terribly close to the theoretical min latency of the bus.

Btw, it's not just the controller. A very large amount of latency comes from the block layer itself (amongst other things).

Santoval - Tuesday, June 6, 2017 - link

It is quite possible that Intel artificially weakened P4800X's performance and durability in order to avoid internal competition with their SSD division (they already did the same with Atoms). If your new technology is *too* good it might make your other more mainstream technology look bad in comparison and you could see a big drop in sales. Or it might have a "deflationary" effect, where their customers might delay buying in hope of lower prices later. This way they can also have a more clear storage hierarchy, business segment wise, where their mainstream products are good, and their niche ones are better but not too good.

I am not suggesting that it could ever compete with DRAM, just that the potential of 3D XPoint technology might actually be closer to what they mentioned a year ago than the first products they shipped.

albert89 - Friday, April 21, 2017 - link

Intel won't be reducing the price of the optane but rather will be giving the average consumer a watered down version which will be charged at a premium but perform only slightly better than the top SSD. The conclusion? Another overpriced ripoff from Intel.

TheinsanegamerN - Thursday, April 20, 2017 - link

the fastest SSD on the consumer market is the 960 pro, which can hit 3.2GB/s read under certain circumstances.

This is the equivalent of single channel DDR 400 from 2001, and DDR had far lower latencies to boot.

We are a long, long way from replacing RAM with storage.

ddriver - Friday, April 21, 2017 - link

What makes the most impression is it took a completely different review format to make this product look good. No doubt strictly following intel's own review guidelines. And of course, not a shred of real world application. Enter hypetane - the paper dragon.

ddriver - Friday, April 21, 2017 - link

Also, bandwidth is only one side of the coin. Xpoint is 30-100+ times more latent than dram, meaning the CPU will have to wait 30-100+ times longer before it has data to compute, and dram is already too slow in this aspect, so you really don't want to go any slower.

I see a niche for hypetane - ram-less systems, sporting very slow CPUs. Only a slow CPU will not be wasted on having to wait on working memory. Server CPUs don't really need to crunch that much data either, if any, which is paradoxical, seeing how intel will only enable avx512 on xeons, so it appears that the "amazingly fast" and overpriced hypetane is at home only in simple low end servers, possibly paired with them many core atom chips. Even overpriced, it will be kind of a decent deal, as it offers about 3 times the capacity per dollar as dram, paired with wimpy atoms it could make for a decent simple, low cost, frequent access server.

frenchy_2001 - Friday, April 21, 2017 - link

You are missing the usefulness of it entirely.

Yes, it is a niche product.

And I even agree, intel is hyping it and offering it for consumer with minimal benefit (beside intel's bottom line).

But it realistically slots between NAND and DRAM.

This review shows that it has lower latency than NAND and it has higher density than DRAM.

This is the play.

You say it cannot replace DRAM and for most usage (by far) you are right. However, for a small niche that works with very big data sets (like for finance or exploration), having more memory, although slower, will still be much faster than memory + swap (to a slower NAND storage).

Let me repeat, this is a niche product, but it has its uses.

Intel marketing is hyping it and trying to use it where its tradeoffs (particularly price) make little sense, but the technology itself is good (if limited).

wumpus - Sunday, April 23, 2017 - link

Don't be so sure that latency is keeping it from being used as [secondary] main memory. A 4GB machine can actually function (more or less) for office duty and some iffy gaming capability. I'd strongly suspect that a 4-8GB stack of HBM (preferably the low-cost 512 bit systems, as the CPU really only wants 512bit chunks of memory at a time) with the rest backed by 3dxpoint would still be effective at this high latency. Any improvement is likely to remove latency as something that would stop it (and current software can use the current stack [with PCIe connection] to work 3dxpoint as "swappable ram").

The endurance may well keep this from happening (it is on par with SLC).

The other catch is that this is a pretty steep change along the entire memory system. Expect Intel to have huge internal fights as to what the memory map should look like, where the HBM goes (does Intel pay to manufacture an expensive CPU module or foist it on down the line), do you even use HBM (if Ravenridge does, I'd expect that Intel would have to if they tried to use xpoint as main memory)? The big question is what would be the "cache line" of the DRAM memory: the current stack only works with 4k, the CPU "wants" 512 bits, HBM is closer to 4k. 4k looks like a no-brainer, but you still have to put a funky L5/buffer that deals with the huge cache line or waste a ton of [top level, not sure if L3 or L4] cache by giving it 4k cache lines.

melgross - Tuesday, April 25, 2017 - link

What is it with you and RAM? This isn't a RAM replacement for most any use. Intel hasn't said that it is. Why are you insisting on comparing it to RAM?