The Intel Optane SSD DC P4800X (375GB) Review: Testing 3D XPoint Performance

by Billy Tallis on April 20, 2017 12:00 PM EST

Checking Intel's Numbers

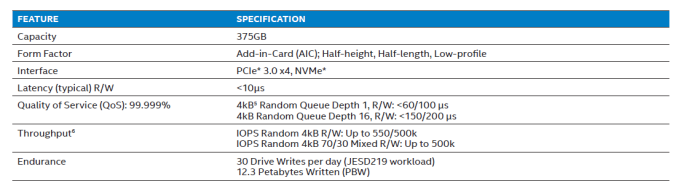

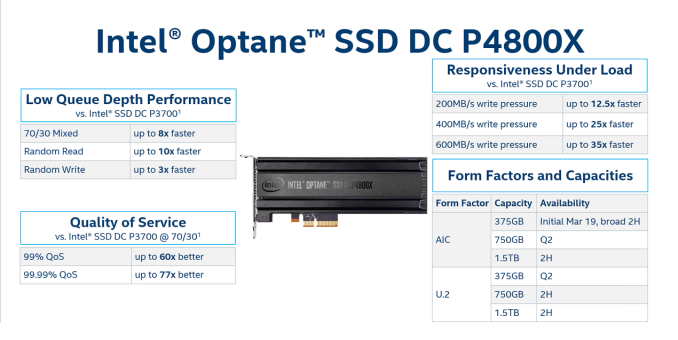

The product brief for the Optane SSD DC P4800X provides a limited set of performance specifications and omits sequential throughput entirely. Latency and throughput targets are provided for 4kB random reads, 4kB random writes, and a 70/30 mix of reads and writes.

This section shows how the Optane SSD measures up to Intel's advertised specifications and how the flash SSDs fare on the same tests. The rest of this review provides deeper analysis of how these drives perform across a range of queue depths, transfer sizes, and read/write mixes.

4kB Random Read at a Queue Depth of 1 (QD1)

| Drive | Throughput (MB/s) | Throughput (IOPS) | Mean Latency (µs) | Median Latency (µs) | 99th % Latency (µs) | 99.999th % Latency (µs) |
|---|---|---|---|---|---|---|
| Intel Optane SSD DC P4800X 375GB | 413.0 | 108.3k | 8.9 | 9 | 10 | 37 |
| Intel SSD DC P3700 800GB | 48.7 | 12.8k | 77.9 | 76 | 96 | 2768 |
| Micron 9100 MAX 2.4TB | 35.3 | 9.2k | 107.7 | 104 | 117 | 306 |

Intel's queue depth 1 specifications are expressed in terms of latency, since a separate throughput specification at QD1 would be redundant. Intel specifies a "typical" latency of less than 10µs, and most QD1 random reads on the Optane SSD take 8 or 9µs; even the 99th percentile latency is only 10µs.

The 99.999th percentile target is less than 60µs, which the Optane SSD beats by a wide margin. Overall, the Optane SSD passes with ease. The flash SSDs are 8-12x slower on average, and the 99.999th percentile latency of the Intel P3700 is far worse, at around 75x slower.
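The reason a QD1 throughput figure adds little beyond the latency figure can be sketched with Little's law: with a fixed number of requests in flight, throughput is roughly the queue depth divided by the mean latency. The snippet below is a rough illustration using the mean latencies from the QD1 random read table; the function name `iops_from_latency` is hypothetical, and the estimate ignores any time the host spends between completing one request and issuing the next, so it should land slightly above the measured IOPS.

```python
def iops_from_latency(mean_latency_us: float, queue_depth: int = 1) -> float:
    """Estimate IOPS via Little's law: outstanding requests / mean latency."""
    return queue_depth / (mean_latency_us * 1e-6)


# Mean QD1 random read latencies in microseconds, copied from the table above.
qd1_read_latency_us = {
    "Intel Optane SSD DC P4800X 375GB": 8.9,
    "Intel SSD DC P3700 800GB": 77.9,
    "Micron 9100 MAX 2.4TB": 107.7,
}

for drive, latency_us in qd1_read_latency_us.items():
    estimate = iops_from_latency(latency_us)
    print(f"{drive}: ~{estimate / 1000:.1f}k IOPS estimated from mean latency")
```

The same relation applies at higher queue depths by passing `queue_depth=16`, although the gap between the estimate and the measured throughput may grow as host overhead becomes a larger share of each request.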

4kB Random Read at a Queue Depth of 16 (QD16)

| Drive | Throughput (MB/s) | Throughput (IOPS) | Mean Latency (µs) | Median Latency (µs) | 99th % Latency (µs) | 99.999th % Latency (µs) |
|---|---|---|---|---|---|---|
| Intel Optane SSD DC P4800X 375GB | 2231.0 | 584.8k | 25.5 | 25 | 41 | 81 |
| Intel SSD DC P3700 800GB | 637.9 | 167.2k | 93.9 | 91 | 163 | 2320 |
| Micron 9100 MAX 2.4TB | 517.5 | 135.7k | 116.2 | 114 | 205 | 1560 |

The Optane SSD's QD16 random read throughput is 584.8k IOPS, which is about 6% above the official specification of 550k IOPS. The 99.999th percentile latency is 81µs, well under the target of less than 150µs. The flash SSDs are 3-5x slower on most metrics, but 20-30x slower at the 99.999th percentile for latency.

4kB Random Write at a Queue Depth of 1 (QD1)

| Drive | Throughput (MB/s) | Throughput (IOPS) | Mean Latency (µs) | Median Latency (µs) | 99th % Latency (µs) | 99.999th % Latency (µs) |
|---|---|---|---|---|---|---|
| Intel Optane SSD DC P4800X 375GB | 360.6 | 94.5k | 8.9 | 9 | 10 | 64 |
| Intel SSD DC P3700 800GB | 350.6 | 91.9k | 9.2 | 9 | 18 | 81 |
| Micron 9100 MAX 2.4TB | 160.9 | 42.2k | 22.2 | 22 | 24 | 76 |

Intel's QD1 random write specifications call for a latency of 10µs, with the 99.999th percentile target relaxed from the 60µs used for reads to 100µs. In our results, the QD1 random write throughput (360.6 MB/s) of the Optane SSD is a bit lower than the QD1 random read throughput (413.0 MB/s), but the latency is roughly the same (8.9µs mean, 10µs at the 99th percentile).

However, it is worth noting that the Optane SSD only manages a passing score when the application uses asynchronous I/O APIs. Using simple synchronous write() system calls pushes the average latency up to 11-12µs.

Thanks to their capacitor-backed DRAM caches, the flash SSDs also handle QD1 random writes very well. The Intel P3700 manages to keep latency mostly below 10µs, and all three drives have 99.999th percentile latency below Intel's 100µs standard for the Optane SSD.
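To make the synchronous case concrete, the following is a minimal sketch of timing blocking 4kB writes one at a time, which is what a QD1 test built on plain write()-style system calls amounts to. It is not the benchmark used for this review: the function name `measure_sync_write_latency`, the scratch file, and the sample counts are all assumptions for illustration, and because the file is opened without O_DIRECT the writes land in the page cache, so the numbers mostly reflect system call overhead rather than device latency.

```python
import os
import random
import statistics
import tempfile
import time


def measure_sync_write_latency(path, num_writes=1000, block_size=4096, span_blocks=256):
    """Issue blocking pwrite() calls one at a time (QD1) and time each one in microseconds."""
    buf = os.urandom(block_size)
    fd = os.open(path, os.O_WRONLY | os.O_CREAT, 0o600)
    latencies_us = []
    try:
        for _ in range(num_writes):
            # Pick a random block-aligned offset within the test span.
            offset = random.randrange(span_blocks) * block_size
            start = time.perf_counter_ns()
            os.pwrite(fd, buf, offset)  # blocks until the kernel has accepted the write
            latencies_us.append((time.perf_counter_ns() - start) / 1000)
    finally:
        os.close(fd)
    latencies_us.sort()
    p99_index = min(len(latencies_us) - 1, int(0.99 * len(latencies_us)))
    return {
        "mean": statistics.mean(latencies_us),
        "median": statistics.median(latencies_us),
        "p99": latencies_us[p99_index],
    }


if __name__ == "__main__":
    # Hypothetical scratch file in a temporary directory, removed on exit.
    with tempfile.TemporaryDirectory() as tmp:
        stats = measure_sync_write_latency(os.path.join(tmp, "scratch.bin"))
        print({name: round(value, 1) for name, value in stats.items()})
```

An asynchronous test would instead keep requests in flight through an interface such as Linux AIO or io_uring, which lets the application overlap submission work with the device's service time.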

4kB Random Write at a Queue Depth of 16 (QD16)

| Drive | Throughput (MB/s) | Throughput (IOPS) | Mean Latency (µs) | Median Latency (µs) | 99th % Latency (µs) | 99.999th % Latency (µs) |
|---|---|---|---|---|---|---|
| Intel Optane SSD DC P4800X 375GB | 2122.5 | 556.4k | 27.0 | 23 | 65 | 147 |
| Intel SSD DC P3700 800GB | 446.3 | 117.0k | 134.8 | 43 | 1336 | 9536 |
| Micron 9100 MAX 2.4TB | 1144.4 | 300.0k | 51.6 | 34 | 620 | 3504 |

The Optane SSD DC P4800X is specified for 500k random write IOPS using four threads to provide a total queue depth of 16. In our tests, the Optane SSD scored 556.4k IOPS, exceeding the specification by more than 11%. This equates to a random write throughput of more than 2GB/s.

The flash SSDs depend more heavily on the parallelism that comes with higher capacities, and as a result can be slow at smaller capacities. Hence in this case the 2.4TB Micron 9100 fares much better than the 800GB Intel P3700. The Micron 9100 hits its own specification right on the nose with 300k IOPS, and the Intel P3700 comfortably exceeds its own 90k IOPS specification while remaining the slowest of the three by far. The Optane SSD stays well below its 200µs limit for 99.999th percentile latency with a score of 147µs, while the flash SSDs have outliers of several milliseconds. Even at the 99th percentile the flash SSDs are 10-20x slower than Optane.

4kB Random Mixed 70/30 Read/Write at a Queue Depth of 16 (QD16)

| Drive | Throughput (MB/s) | Throughput (IOPS) | Mean Latency (µs) | Median Latency (µs) | 99th % Latency (µs) | 99.999th % Latency (µs) |
|---|---|---|---|---|---|---|
| Intel Optane SSD DC P4800X 375GB | 1929.7 | 505.9k | 29.7 | 28 | 65 | 107 |
| Intel SSD DC P3700 800GB | 519.9 | 136.3k | 115.5 | 79 | 1672 | 5536 |
| Micron 9100 MAX 2.4TB | 518.0 | 135.8k | 116.0 | 105 | 1112 | 3152 |

On a 70/30 read/write mix, the Optane SSD DC P4800X scores 505.9k IOPS, which beats the specification of 500k IOPS by about 1%. Both of the flash SSDs deliver roughly the same throughput, a little over a quarter of the speed of the Optane SSD. Intel doesn't provide a latency specification for this workload, but the measurements unsurprisingly fall in between the random read and random write results. While low-end consumer SSDs sometimes perform dramatically worse on mixed workloads than on pure read or write workloads, none of these datacenter drives have that problem.
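The spec comparisons in this section can be collected into one small check. The sketch below copies Intel's targets and our Optane measurements from the text and tables above; the `Check` tuple and `margin_percent` helper are hypothetical names, and treating Intel's "typical" latency as comparable to our measured mean is an assumption made only for this illustration.

```python
from typing import NamedTuple


class Check(NamedTuple):
    test: str
    metric: str
    spec: float
    measured: float
    higher_is_better: bool


# Targets and Optane results as quoted in this section (IOPS in thousands, latency in µs).
CHECKS = [
    Check("QD1 random read", "typical latency", 10, 8.9, False),
    Check("QD1 random read", "99.999th latency", 60, 37, False),
    Check("QD16 random read", "IOPS (k)", 550, 584.8, True),
    Check("QD16 random read", "99.999th latency", 150, 81, False),
    Check("QD1 random write", "typical latency", 10, 8.9, False),
    Check("QD1 random write", "99.999th latency", 100, 64, False),
    Check("QD16 random write", "IOPS (k)", 500, 556.4, True),
    Check("QD16 random write", "99.999th latency", 200, 147, False),
    Check("QD16 70/30 mixed", "IOPS (k)", 500, 505.9, True),
]


def margin_percent(check: Check) -> float:
    """Percent by which the measurement beats the spec (zero or negative means a miss)."""
    if check.higher_is_better:
        return (check.measured - check.spec) / check.spec * 100
    return (check.spec - check.measured) / check.spec * 100


for check in CHECKS:
    margin = margin_percent(check)
    verdict = "pass" if margin > 0 else "fail"
    print(f"{check.test:18} {check.metric:17} {verdict} ({margin:+.1f}%)")
```

Expressing every margin as a percentage of the spec puts throughput and latency targets on the same scale, which makes it easy to see that the mixed workload clears its target by the narrowest margin.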

117 Comments


Ninhalem - Thursday, April 20, 2017 - link

At last, this is the start of transitioning from hard drive/memory to just memory.

ATC9001 - Thursday, April 20, 2017 - link

This is still significantly slower than RAM....maybe for some typical consumer workloads it can take over as an all in one storage solution, but for servers and power users, we'll still need RAM as we know it today...and the fastest "RAM" if you will is on die L1 cache...which has physical limits to it's speed and size based on speed of light!

I can see SSD's going away depending on manufacturing costs but so many computers are shipping with spinning disks still I'd say it's well over a decade before we see SSD's become the replacement for all spinning disk consumer products.

Intel is pricing this right between SSD's and RAM which makes sense, I just hope this will help the industry start to drive down prices of SSD's!

DanNeely - Thursday, April 20, 2017 - link

Estimates from about 2 years back had the cost/GB price of SSDs undercutting that of HDDs in the early 2020's. AFAIK those were business as usual projections, but I wouldn't be surprised to see it happen a bit sooner as HDD makers pull the plug on R&D for the generation that would otherwise be overtaken due to sales projections falling below the minimums needed to justify the cost of bringing it to market with its useful lifespan cut significantly short.

Guspaz - Saturday, April 22, 2017 - link

Hard drive storage cost has not changed significantly in at least half a decade, while ssd prices have continued to fall (albeit at a much slower rate than in the past). This bodes well for the crossover.

Santoval - Tuesday, June 6, 2017 - link

Actually it has, unless you regard HDDs with double density at the same price every 2 - 2.5 years as not an actual falling cost. $ per GB is what matters, and that is falling steadily, for both HDDs and SSDs (although the latter have lately spiked in price due to flash shortage).

bcronce - Thursday, April 20, 2017 - link

The latency specs include PCIe and controller overhead. Get rid of those by dropping this memory in a DIMM slot and it'll be much faster. Still not as fast as current memory, but it's going to be getting close. Normal system memory is in the range of 0.5us. 60us is getting very close.

tuxRoller - Friday, April 21, 2017 - link

They also include context switching, isr (pretty board specific), and block layer abstraction overheads.

ddriver - Friday, April 21, 2017 - link

PCIE latency is below 1 us. I don't see how subtracting less than 1 from 60 gets you anywhere near 0.5.

All in all, if you want the best value for your money and the best performance, that money is better spent on 128 gigs of ecc memory.

Sure, xpoint is non volatile, but so what? It is not like servers run on the grid and reboot every time the power flickers LOL. Servers have at the very least several minutes of backup power before they shut down, which is more than enough to flush memory.

Despite intel's BS PR claims, this thing is tremendously slower than RAM, meaning that if you use it for working memory, it will massacre your performance. Also, working memory is much more write intensive, so you are looking at your money investment crapping out potentially in a matter of months. Whereas RAM will be much, much faster and work for years.

4 fast NVME SSDs will give you like 12 GB\s bandwidth, meaning that in the case of an imminent shutdown, you can flush and restore the entire content of those 128 gigs of ram in like 10 seconds or less. Totally acceptable trade-back for tremendously better performance and endurance.

There is only one single, very narrow niche where this purchase could make sense. Database usage, for databases with frequent low queue access. This is an extremely rare and atypical application scenario, probably less than 1/1000 in server use. Which is why this review doesn't feature any actual real life workloads, because it is impossible to make this product look good in anything other than synthetic benches. Especially if used as working memory rather than storage.

IntelUser2000 - Friday, April 21, 2017 - link

ddriver: Do you work for the memory industry? Or hold a stock in them? You have a personal gripe about the company that goes beyond logic.

PCI Express latency is far higher than 1us. There are unavoidable costs of implementing a controller on the interface and there's also software related latency.

ddriver - Friday, April 21, 2017 - link

I have a personal gripe with lying. Which is what intel has been doing every since it announced hypetane. If you find having a problem with lying a problem with logic, I'd say logic ain't your strong point.

Lying is also what you do. PCIE latency is around 0.5 us. We are talking PHY here. Controller and software overhead affect equally every communication protocol.

Xpoint will see only minuscule latency improvements from moving to dram slots. Even if PCIE has about 10 times the latency of dram, we are still talking ns, while xpoint is far slower in the realm of us. And it ain't no dram either, so the actual latency improvement will be nowhere nearly the approx 450 us.

It *could* however see significant bandwidth improvements, as the dram interface is much wider, however that will require significantly increased level of parallelism and a controller that can handle it, and clearly, the current one cannot even saturate a pcie x4 link. More bandwidth could help mitigate the high latency by masking it through buffering, but it will still come nowhere near to replacing dram without a tremendous performance hit.