Investigating Cavium's ThunderX: The First ARM Server SoC With Ambition

by Johan De Gelas on June 15, 2016 8:00 AM EST

Posted in: SoCs, IT Computing, Enterprise, Enterprise CPUs, Microserver, Cavium

The ThunderX SoCs

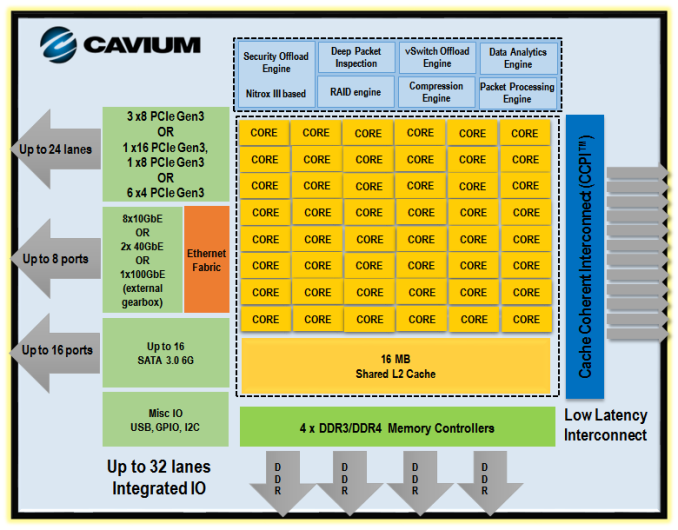

Below you can see all the building blocks that Cavium has used to build the ThunderX.

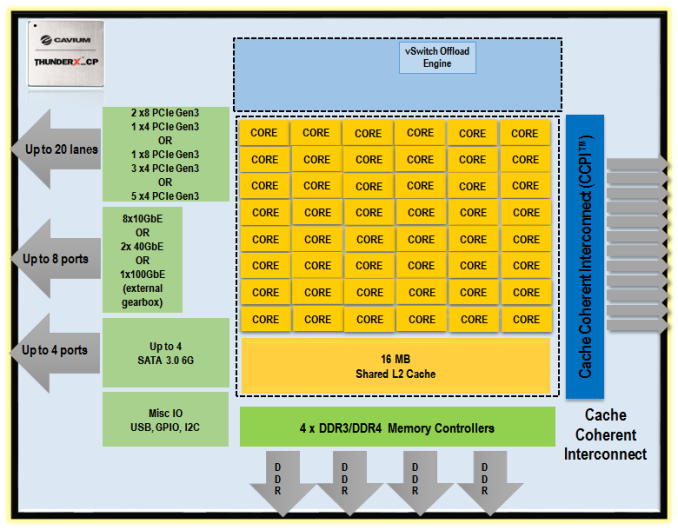

Depending on the target market, some of these building blocks are removed to reduce power consumption or to increase the clockspeed. The "Cloud Compute" version (ThunderX_CP) that we're reviewing today has only one accelerator (vSwitch offload) and 4 SATA ports (out of 16), and no Ethernet fabric.

But even the compute version still offers 8 integrated 10 Gbit Ethernet interfaces, which is something you simply don't see in the "affordable" server world. For comparison, the Xeon D has two 10 Gbit interfaces.

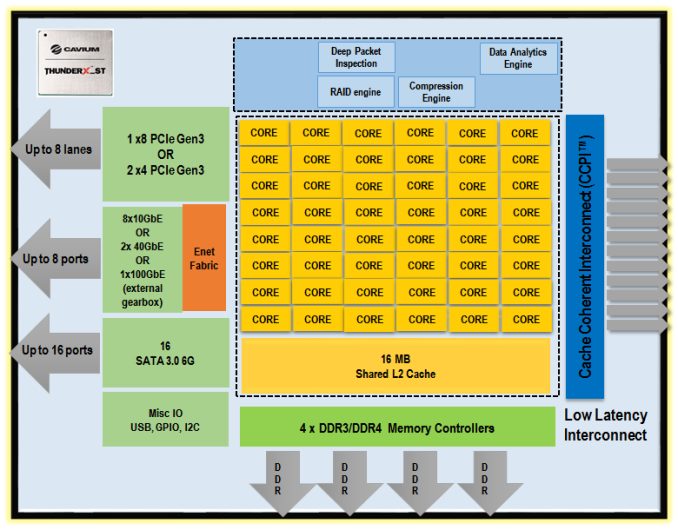

The storage version (_ST) of the same chip has more co-processors, more SATA ports (16) and an integrated Ethernet fabric. But the ThunderX_ST cuts back on the number of PCIe lanes and might not reach the same clockspeeds.

There is also a secure compute version with IPsec/SSL accelerators (_SC) and a network/telco version (_NT). In total there are 4 variants of the same SoC. But in this article, we focus on the version we were able to test: the CP or Cloud Compute.

82 Comments


vivs26 - Wednesday, June 15, 2016 - link

Not necessarily - (read Amdahl's law of diminishing returns). The performance actually depends on the workload. Having a million cores guarantees nothing in terms of performance unless the workload is parallelizable, which in the real world is not as much as we think it could be. I'm curious to see how a Xeon merged with Altera programmable fabric performs compared to ARM on a server.

maxxbot - Wednesday, June 22, 2016 - link

Technically true but every generation that millstone gets a little smaller, the die area and power needed to translate x86 into uops isn't huge and reduces every generation.

jardows2 - Wednesday, June 15, 2016 - link

Interesting. Faster in a few workloads where heavy use of multi-thread is important, but significantly slower in more single thread workloads. For server use, you don't always want parallelized tasks. The results are pretty much across the board for all the processors tested: If the ThunderX was slower, it was slower than all the Intel chips. If it were faster, it was faster than all but the highest end Intel Chips. With the price only being slightly lower than the cheapest Intel chip being sold, I don't think this is going to be a Xeon competitor at all, but will take a few niche applications where it can do better. With no significant energy savings, we should be looking forward to the ThunderX2 to see if it will bring this into a better alternative.

ddriver - Wednesday, June 15, 2016 - link

There is hardly a server workload where you don't get better throughput by throwing more cores and servers at it. Servers are NOT about parallelized task, but about concurrent tasks. That's why while desktops are still stuck at 8 cores, server chips come with 20 and more... Server workloads are usually very simple, it is just that there is a lot of them. They are so simple and take so little time it literally makes no sense parallelizing them.

jardows2 - Wednesday, June 15, 2016 - link

In the scenario you described, the single-thread performance takes on even more importance, thus highlighting the advantage the Xeons currently have in most server configurations.

niva - Wednesday, June 15, 2016 - link

Not if the Xeon doesn't have enough cores to actually process 40+ singlethreaded tasks concurrently.

hechacker1 - Wednesday, June 15, 2016 - link

But kernels and VMWare know how to schedule multiple threads on 1 core if it's not being fully utilized. Single threaded IPC can make up for not having as many cores. See the iPhone SoCs for another example.

ddriver - Wednesday, June 15, 2016 - link

Not if you have thousands of concurrent workloads and only like 8 cores. As fast as each core might be, the overhead from workload context switching will eat it up.

willis936 - Thursday, June 16, 2016 - link

Yeah if each task is not significantly longer than a context switch. Context switches are very fast, especially with processors with many sets of SMT registers per core.

ddriver - Thursday, June 16, 2016 - link

If what you suggest is correct, then Intel would not be investing chip TDP in more cores but higher clocks and better single threaded performance. Clearly this is not the case, as they are pushing 20 cores at the fairly modest 2.4 GHz.
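
For readers following the Amdahl's law argument at the top of this thread, here is a minimal sketch of the ideal speedup formula, S = 1 / ((1 - p) + p / n), where p is the parallelizable fraction of the work and n is the core count. The function name, the parallel fractions, and the core counts below are hypothetical values chosen for illustration; they are not measurements from the review, and the formula ignores real-world effects such as context-switch overhead and memory contention.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup under Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical parallel fractions and core counts, for illustration only.
    for p in (0.5, 0.9, 0.99):
        for n in (8, 20, 40):
            print(f"p={p:.2f} cores={n:2d} speedup={amdahl_speedup(p, n):.2f}x")
```

Note that this models a single task split across cores. The thread's counterpoint is about many independent concurrent requests, where aggregate throughput can keep scaling with core count even though no individual request gets faster.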