The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Compute

Shifting gears, let’s take a look at compute performance on Pascal.

Overall, we’re not expecting a significant difference in compute performance compared to Maxwell 2 for standard compute benchmarks. The fundamental architecture hasn’t changed – the CUDA cores, register files, and caches still behave as before – so there’s little reason for per-core, per-clock compute performance to shift. GP104 for all intents and purposes should perform like a higher clocked and slightly wider Maxwell 2, similar to what we’ve seen in most games.

However, in the long run there is potential for Pascal to show some improvements. The architecture’s improved scheduling features are geared in part towards HPC users, and instruction level preemption means that compute kernels can now be a lot more aggressive on consumer systems, since they can be paused so easily. That said, to really leverage any of these improvements, applications utilizing GPU compute need to have work that benefits from better scheduling and need to be written with Pascal in mind, and for consumer workloads the latter is likely a long way off.

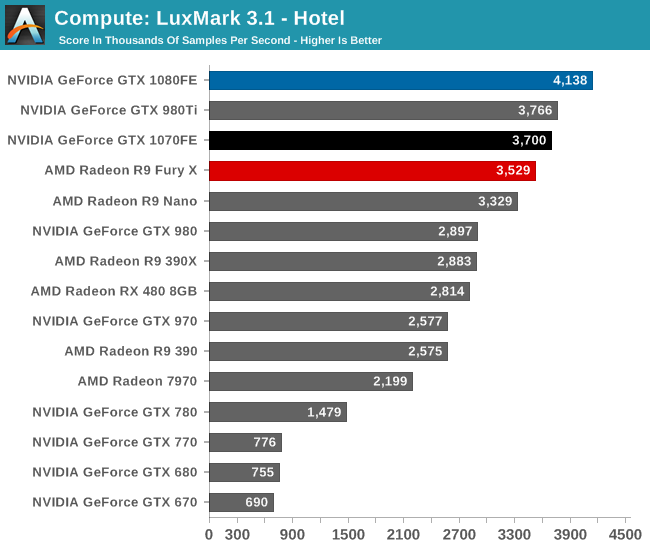

Starting us off for our look at compute is LuxMark 3.1, the latest version of the official benchmark of LuxRender. LuxRender’s GPU-accelerated rendering mode is an OpenCL based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

As with games, when it comes to LuxMark, the GTX 1080 is uncontested; this is the first high performance FinFET GPU in action. That said, I’m surprised by how closely some of these results cluster. Though GTX 1080 is not a full generational replacement for GTX 980 Ti, normally it outperforms the Big Maxwell card by more than this. Instead we’re looking at a lead of just 10%, notably less than a simple extrapolation of CUDA core counts and frequencies would tell us to expect (GTX 1080 has almost 50% more FLOPs).

That said, GTX 1070 still places very close to GTX 980 Ti – albeit below it – so what we’re seeing isn’t just Pascal being a laggard, especially since, as a consequence, GTX 1080 only beats GTX 1070 by 12%. This may be a case of early drivers, particularly as OpenCL has not been an NVIDIA priority for the last couple of years. Alternatively, as strange as it may be, I’m not ready to rule out LuxMark being CPU limited. It’s something that we’ll have to keep an eye on.
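To put the FLOPs comparison in concrete terms, here is a rough sketch of that extrapolation in Python. It assumes the usual peak-throughput formula of 2 FLOPs (one fused multiply-add) per CUDA core per clock. The core counts and boost clocks are assumptions taken from NVIDIA's public spec sheets rather than from our measurements, and the names `SPECS`, `peak_tflops`, and `advantage_pct` are purely illustrative.

```python
# Theoretical FP32 throughput, assuming 2 FLOPs (one FMA) per CUDA core per clock.
# ASSUMPTION: core counts and rated boost clocks (MHz) come from public spec
# sheets; real cards often boost higher or lower than their rated clock.
SPECS = {
    "GTX 1080": (2560, 1733),
    "GTX 1070": (1920, 1683),
    "GTX 980 Ti": (2816, 1075),
}


def peak_tflops(cores, boost_mhz):
    """Peak single precision throughput in TFLOPs."""
    return 2 * cores * boost_mhz * 1e6 / 1e12


def advantage_pct(card, baseline):
    """Theoretical FLOPs advantage of `card` over `baseline`, in percent."""
    ratio = peak_tflops(*SPECS[card]) / peak_tflops(*SPECS[baseline])
    return (ratio - 1) * 100


if __name__ == "__main__":
    for name, spec in SPECS.items():
        print(f"{name}: {peak_tflops(*spec):.2f} TFLOPs")
    print(f"1080 vs 980 Ti: +{advantage_pct('GTX 1080', 'GTX 980 Ti'):.1f}%")
    print(f"1080 vs 1070:   +{advantage_pct('GTX 1080', 'GTX 1070'):.1f}%")
```

Under these assumptions GTX 1080 comes out roughly 47% ahead of GTX 980 Ti and roughly 37% ahead of GTX 1070 on paper, against measured LuxMark leads of 10% and 12% respectively.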

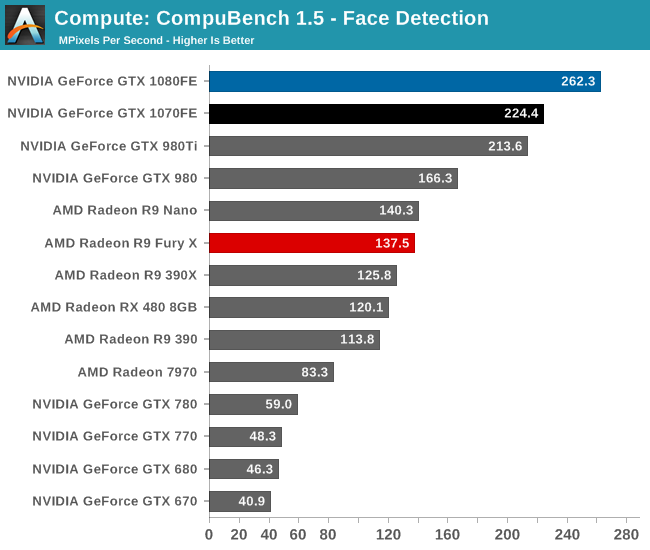

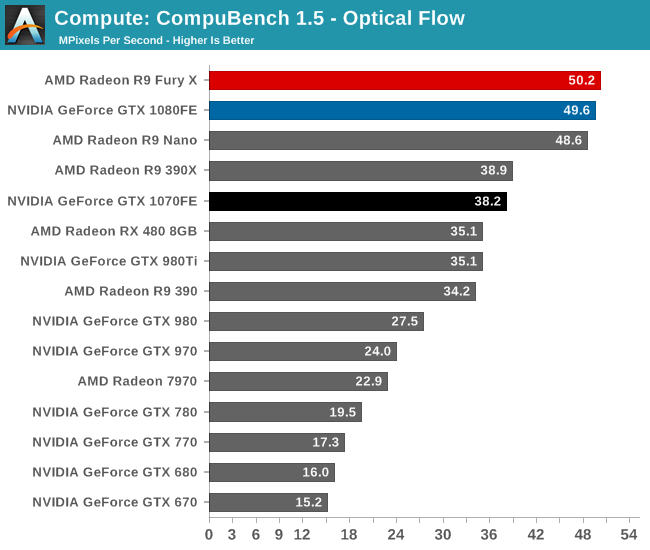

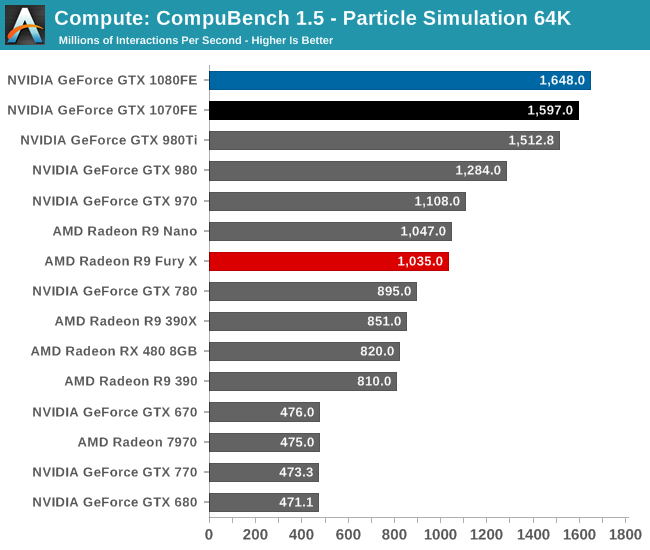

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Depending on which sub-test we’re looking at, CompuBench is all over the place. In Face Detection the GTX 1080 takes a commanding lead, with GTX 1070 easily slotting into second place. On the other hand we have Optical Flow, which NVIDIA cards have traditionally struggled with, where even GTX 1080 can’t unseat Radeon Fury X. Finally in the middle we have the 64K Particle Simulation, which has GTX 1080 in the lead again, but not unlike LuxMark, it also has some interesting clustering going on.

Ultimately each test stresses our GPU collection in different ways, which as we can see greatly influences how the results pan out. Face Detection has always played well to NVIDIA’s strengths, and on a generational basis we get solid scaling from Maxwell 2 to Pascal. Even Optical Flow, which seems to favor raw FLOPs more than anything else, still shows very good gains with Pascal.

Particle Simulation is the outlier in this regard; Pascal’s generational gains are not insignificant, but they’re less than what we’d expect. Furthermore GTX 1080 and GTX 1070 are very closely clustered together despite their much larger difference in FLOPs. This may mean we’re looking at a CPU or driver bottleneck, or possibly some sort of internal path bottleneck. GTX 1080 has more FLOPs and a similar advantage in memory bandwidth, but once you get on chip things get much closer. If nothing else this goes to show that compute benchmarks are much more architecture sensitive than games, which is why we can’t make very broad generalizations for all compute workloads.
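For reference, the memory bandwidth side of that comparison can be sketched the same way. This is a back-of-the-envelope calculation, not a measurement: it assumes the published memory configurations (10Gbps GDDR5X on GTX 1080, 8Gbps GDDR5 on GTX 1070, both on a 256-bit bus), and `bandwidth_gbs` is a hypothetical helper name.

```python
def bandwidth_gbs(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/s: per-pin data rate times bus width, in bytes."""
    return data_rate_gbps * bus_width_bits / 8


# ASSUMPTION: memory configurations taken from public spec sheets.
gtx1080 = bandwidth_gbs(10, 256)  # GDDR5X
gtx1070 = bandwidth_gbs(8, 256)   # GDDR5

if __name__ == "__main__":
    print(f"GTX 1080: {gtx1080:.0f} GB/s")
    print(f"GTX 1070: {gtx1070:.0f} GB/s")
    print(f"Advantage: +{(gtx1080 / gtx1070 - 1) * 100:.0f}%")
```

With those inputs the two cards land at 320GB/s and 256GB/s, a 25% paper advantage for GTX 1080, which is far larger than the gap the Particle Simulation results show.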

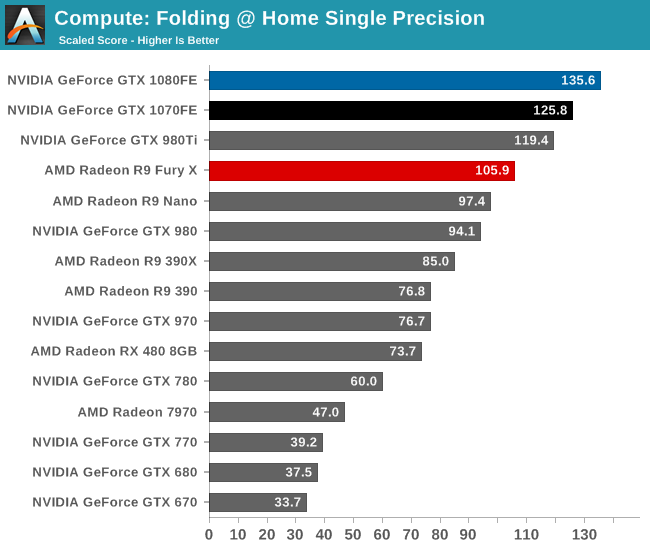

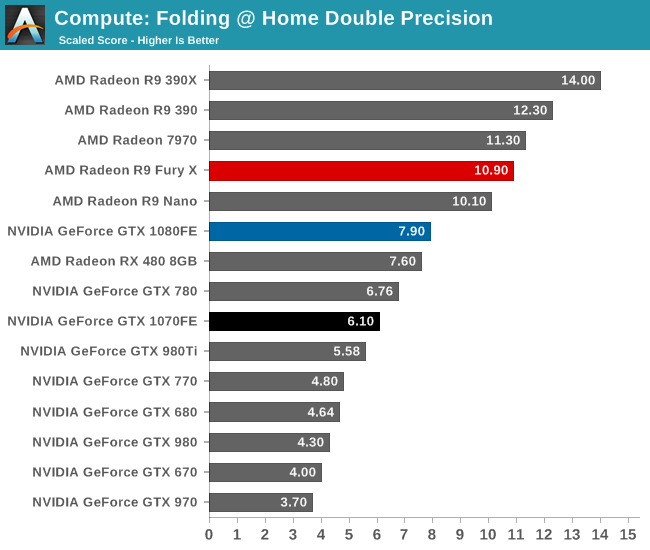

Moving on, our third compute benchmark is the next generation release of FAHBench, the official Folding@Home benchmark. Folding@Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 21.

In single precision performance, to the surprise of no one the GTX 1080 is solidly in the lead, followed up by the GTX 1070. On a generational basis performance gains are decent, but at 44% for GTX 1080 they aren’t quite as great as we’ve seen from the card elsewhere. Meanwhile the two Pascal cards are again closer than we’d expect, with GTX 1080 leading by only 10%.

As for double precision performance, we can see that even with the higher overall compute throughput of GP104, it still can’t make up for the fact that FP64 performance on the GPU is capped at 1/32 of the FP32 rate by virtue of having so few FP64 CUDA cores, which puts even NVIDIA’s latest and greatest at a disadvantage here. But if nothing else, generational scaling versus Maxwell 2 looks very good, with performance gains closely tracking the theoretical increase in FLOPs.
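As a rough illustration of what that 1/32 cap means in absolute terms, the sketch below derives a theoretical FP64 figure from the FP32 peak. The 1/32 ratio is the one quoted above; the GTX 1080 core count and boost clock are assumptions from the public spec sheet, and `peak_fp64_gflops` is an illustrative name rather than anything from NVIDIA's tooling.

```python
FP64_RATIO = 1 / 32  # FP64 rate relative to FP32, as quoted in the text


def peak_fp64_gflops(cores, boost_mhz, ratio=FP64_RATIO):
    """Theoretical double precision throughput in GFLOPs."""
    # FP32 peak assumes 2 FLOPs (one FMA) per CUDA core per clock.
    fp32_gflops = 2 * cores * boost_mhz / 1000
    return fp32_gflops * ratio


if __name__ == "__main__":
    # ASSUMPTION: 2560 CUDA cores at a 1733MHz rated boost clock (GTX 1080).
    print(f"GTX 1080 FP64: {peak_fp64_gflops(2560, 1733):.0f} GFLOPs")
```

That works out to something on the order of 277 GFLOPs of double precision throughput against nearly 8.9 TFLOPs of single precision, which is why FP64 results on consumer cards sit so far below their FP32 numbers.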

200 Comments


patrickjp93 - Wednesday, July 20, 2016 - link

That doesn't actually support your point...

Scali - Wednesday, July 20, 2016 - link

Did I read a different article?

Because the article that I read said that the 'holes' would be pretty similar on Maxwell v2 and Pascal, given that they have very similar architectures. However, Pascal is more efficient at filling the holes with its dynamic repartitioning.

mr.techguru - Wednesday, July 20, 2016 - link

Just Ordered the MSI GeForce GTX 1070 Gaming X , way better than 1060 / 480. NVidia Nail it :)

tipoo - Wednesday, July 20, 2016 - link

" NVIDIA tells us that it can be done in under 100us (0.1ms), or about 170,000 clock cycles."

Is my understanding right that Polaris, and I think even earlier with late GCN parts, could seamlessly interleave per-clock? So 170,000 times faster than Pascal in clock cycles (less in total time, but still above 100,000 times faster)?

Scali - Wednesday, July 20, 2016 - link

That seems highly unlikely. Switching to another task is going to take some time, because you also need to switch all the registers, buffers, caches need to be re-filled etc.

The only way to avoid most of that is to duplicate the whole register file, like HyperThreading does. That's doable on an x86 CPU, but a GPU has way more registers.

Besides, as we can see, nVidia's approach is fast enough in practice. Why throw tons of silicon on making context switching faster than it needs to be? You want to avoid context switches as much as possible anyway.

Sadly AMD doesn't seem to go into any detail, but I'm pretty sure it's going to be in the same ballpark.

My guess is that what AMD calls an 'ACE' is actually very similar to the SMs and their command queues on the Pascal side.

Ryan Smith - Wednesday, July 20, 2016 - link

Task switching is separate from interleaving. Interleaving takes place on all GPUs as a basic form of latency hiding (GPUs are very high latency).

The big difference is that interleaving uses different threads from the same task; task switching by its very nature loads up another task entirely.

Scali - Thursday, July 21, 2016 - link

After re-reading AMD's asynchronous shader PDF, it seems that AMD also speaks of 'interleaving' when they switch a graphics CU to a compute task after the graphics task has completed. So 'interleaving' at task level, rather than at instruction level.

Which would be pretty much the same as NVidia's Dynamic Load Balancing in Pascal.

eddman - Thursday, July 21, 2016 - link

The more I read about async computing in Polaris and Pascal, the more I realize that the implementations are not much different.

As Ryan pointed out, it seems that the reason that Polaris, and GCN as a whole, benefit more from async is the architecture of the GPU itself, being wider and having more ALUs.

Nonetheless, I'm sure we're still going to see comments like "Polaris does async in hardware. Pascal is hopeless with its software async hack".

Matt Doyle - Wednesday, July 20, 2016 - link

Typo in the lead sentence of HPC vs. Consumer: Divergence paragraph: "Pascal in an architecture that..."

"is" instead of "in"

Matt Doyle - Wednesday, July 20, 2016 - link

Feeding Pascal page, "GDDR5X uses a 16n prefetch, which is twice the size of GDDR5’s 8n prefect."

Prefect = prefetch