The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Hitman

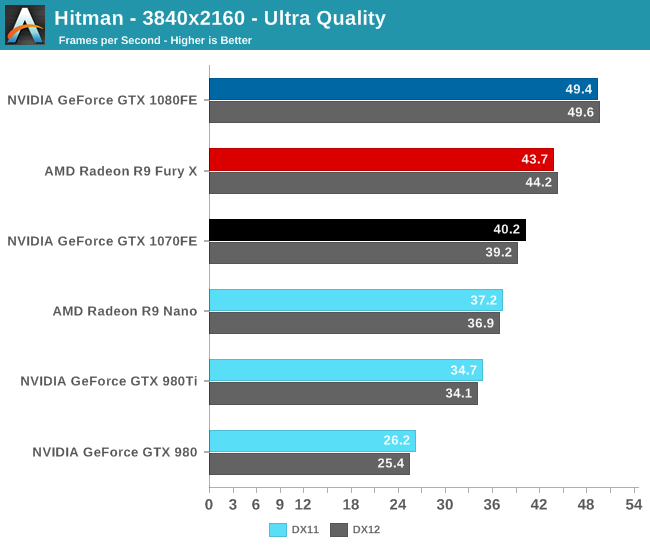

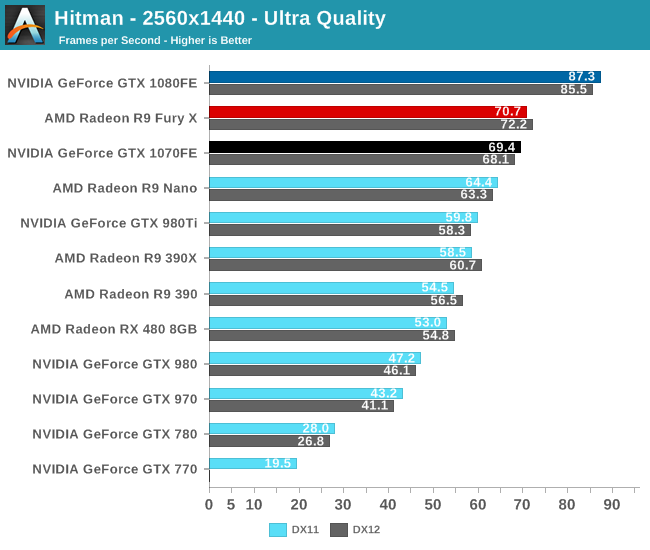

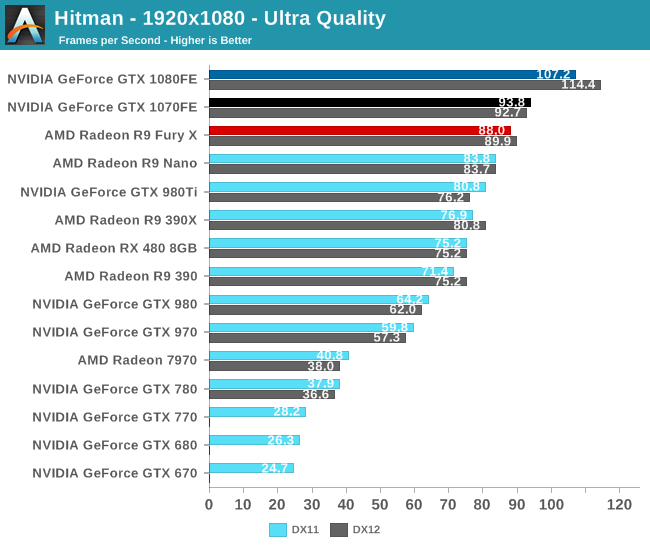

The final game in our 2016 benchmark suite is the 2016 edition of Hitman, the latest title in the stealth-action franchise. The game offers two rendering paths, DirectX 11 and DirectX 12, with DirectX 12 support having been added after the fact. As with past Hitman games, the latest proves to have a good mix of scenery and high model counts to stress modern video cards.

Because Hitman supports both DX11 and DX12, for the moment we’ve gone ahead and benchmarked it with both. In practice the performance impact of DX12 is very mixed; NVIDIA cards prior to Pascal lose performance and Pascal cards can either gain or lose performance. AMD cards on the other hand tend to gain performance. The image quality is the same with both renderers, so it’s simply a matter of picking the render path that produces the best performance for a given card.

In any case, the GTX 1080 continues to top the charts here. 60fps still isn’t attainable at 4K, but it can deliver a reasonably playable 49fps. Alternatively, at 1440p it does better than 85fps. Meanwhile the GTX 1070 isn’t a great option at 4K, but at 1440p it can easily stay north of 60fps, delivering 69.4fps.

Thanks in part to the DX12 code path, this is another game where the GTX 1070 performs as expected versus GTX 1080, but still can’t hold on to second place. Rather the Radeon Fury X takes second place at all but 1080p.

Looking at our generational comparisons one last time, this final game has the Pascal cards performing better than expected. At 1440p and above, the GTX 1080 hits 86% better performance than the GTX 980 under DirectX 11, and the GTX 1070 bests the GTX 970 by an average of 63% in the same circumstances. As best as I can tell, there is just something about the Pascal cards that is slightly more in tune with this game than the Maxwell 2 cards were, leading to the performance we’re seeing here. Otherwise the gap between the GTX 1080 and GTX 1070 is pretty typical at about 25% at the higher resolutions.
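To make the percentages above easier to reason about, here is a minimal sketch of the arithmetic behind them. The helper names `percent_faster` and `percent_slower` are illustrative and not part of any benchmark tooling, and the 85 fps input is only the lower bound quoted earlier ("better than 85fps"), not a measured value.

```python
def percent_faster(new_fps: float, old_fps: float) -> float:
    """Return how much faster new_fps is than old_fps, as a percentage."""
    if old_fps <= 0:
        raise ValueError("old_fps must be positive")
    return (new_fps / old_fps - 1.0) * 100.0


def percent_slower(faster_by_percent: float) -> float:
    """Convert 'A is X% faster than B' into 'B is Y% slower than A'."""
    ratio = 1.0 + faster_by_percent / 100.0
    return (1.0 - 1.0 / ratio) * 100.0


# 1440p figures quoted in the text: GTX 1070 at 69.4 fps, GTX 1080 at
# "better than 85 fps", so 85.0 is a lower bound (an assumption, not a result).
gap_1440p = percent_faster(85.0, 69.4)
print(f"GTX 1080 over GTX 1070 at 1440p: at least {gap_1440p:.1f}%")

# The 86% generational gain, seen from the GTX 980's side.
print(f"GTX 980 trails GTX 1080 by {percent_slower(86.0):.1f}%")
```

Note that the two directions are not symmetric: a card that is 86% faster leaves the older card roughly 46% behind, not 86% behind.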

Finally, in our last time checking in on the GTX 680, the GTX 1080 offers a commanding performance improvement. GTX 1080 is 4.1x faster than GTX 680 under DirectX 11, reinforcing just how much progress NVIDIA has made in 4 years and a single full manufacturing node upgrade.
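As a rough way to put the 4.1x figure in perspective, the sketch below converts a total speedup over a span of years into the equivalent compound yearly gain. The function name `annualized_gain` is illustrative, and the calculation assumes smooth compounding, which real product cycles do not follow.

```python
def annualized_gain(total_speedup: float, years: float) -> float:
    """Compound per-year speedup factor implied by a total speedup over `years`."""
    if total_speedup <= 0 or years <= 0:
        raise ValueError("total_speedup and years must be positive")
    return total_speedup ** (1.0 / years)


# 4.1x over 4 years, as quoted for GTX 1080 versus GTX 680 under DirectX 11.
rate = annualized_gain(4.1, 4)
print(f"Equivalent compound gain: {(rate - 1.0) * 100.0:.0f}% per year")
```

Under that assumption the 4.1x jump works out to a little over 40% per year.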

200 Comments


TestKing123 - Wednesday, July 20, 2016 - link

Then you're woefully behind the times since other sites can do this better. If you're not able to re-run a benchmark for a game with a pretty significant patch like Tomb Raider, or a high profile game like Doom with a significant performance patch like Vulkan that's been out for over a week, then your workflow is flawed and this site won't stand a chance against the other crop. I'm pretty sure you're seeing this already if you have any sort of metrics tracking in place.

TheinsanegamerN - Wednesday, July 20, 2016 - link

So question, if you started this article on May 14th, was there no time in the over 2 months to add one game to that benchmark list?

nathanddrews - Wednesday, July 20, 2016 - link

Seems like an official addendum is necessary at some point. Doom on Vulkan is amazing. Dota 2 on Vulkan is great, too (and would be useful in reviews of low end to mainstream GPUs especially). Talos... not so much.

Eden-K121D - Thursday, July 21, 2016 - link

Talos Principle was a proof of concept

ajlueke - Friday, July 22, 2016 - link

http://www.pcgamer.com/doom-benchmarks-return-vulk...

Addendum complete.

mczak - Wednesday, July 20, 2016 - link

The table with the native FP throughput rates isn't correct on page 5. Either it's in terms of flops, then gp104 fp16 would be 1:64. Or it's in terms of hw instruction throughput - then gp100 would be 1:1. (Interestingly, the sandra numbers for half-float are indeed 1:128 - suggesting it didn't make any use of fp16 packing at all.)

Ryan Smith - Wednesday, July 20, 2016 - link

Ahh, right you are. I was going for the FLOPs rate, but wrote down the wrong value. Thanks!

As for the Sandra numbers, they're not super precise. But it's an obvious indication of what's going on under the hood. When the same CUDA 7.5 code path gives you wildly different results on Pascal, then you know something has changed...
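The FLOPs-versus-instruction distinction raised in this exchange comes down to one multiplication, sketched below. The function name and the `values_per_instr` parameter are illustrative. The 1:128 instruction rate for GP104 is inferred from the comment above rather than taken from the page 5 table, and the sketch assumes a packed FP16 instruction operates on two values at once.

```python
from fractions import Fraction


def fp16_flops_ratio(instr_ratio: Fraction, values_per_instr: int = 2) -> Fraction:
    """FP16:FP32 FLOPs ratio, given the FP16:FP32 instruction-rate ratio
    and how many FP16 values each instruction processes."""
    if values_per_instr < 1:
        raise ValueError("values_per_instr must be at least 1")
    return instr_ratio * values_per_instr


# GP104: packed FP16 instructions issue at 1:128 of the FP32 rate (inferred).
gp104_packed = fp16_flops_ratio(Fraction(1, 128))
# GP100: packed FP16 instructions issue at 1:1 of the FP32 rate.
gp100_packed = fp16_flops_ratio(Fraction(1, 1))
# Without packing, each instruction handles a single value.
gp104_unpacked = fp16_flops_ratio(Fraction(1, 128), values_per_instr=1)

print(f"GP104 packed:   {gp104_packed}")
print(f"GP100 packed:   {gp100_packed}")
print(f"GP104 unpacked: {gp104_unpacked}")
```

The unpacked case matches the 1:128 Sandra result mentioned above, which is consistent with the suggestion that the benchmark did not use FP16 packing.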

BurntMyBacon - Thursday, July 21, 2016 - link

Did nVidia somehow limit the ability to promote FP16 operations to FP32? If not, I don't see the point in creating such a slow performing FP16 mode in the first place. Why waste die space when an intelligent designer can just promote the commands to get normal speeds out of the chip anyways? Sure you miss out on speed doubling through packing, but that is still much better than the 1/128 (1/64) rate you get using the provided FP16 mode.

Scali - Thursday, July 21, 2016 - link

I think they can just do that in the shader compiler. Any FP16 operation gets replaced by an FP32 one.

Only reading from buffers and writing to buffers with FP16 content should remain FP16. Then again, if their driver is smart enough, it can even promote all buffers to FP32 as well (as long as the GPU is the only one accessing the data, the actual representation doesn't matter. Only when the CPU also accesses the data, does it actually need to be FP16).
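One reason promoting FP16 data to FP32 is plausible at all is that every FP16 value has an exact FP32 representation. The sketch below checks this exhaustively on the CPU using Python's `struct` module. It says nothing about what NVIDIA's compiler or driver actually does, and the helper names are illustrative.

```python
import math
import struct


def half_bits_to_float(bits: int) -> float:
    """Decode a 16-bit pattern as an IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<H", bits))[0]


def survives_fp32(value: float) -> bool:
    """True if value is unchanged after being stored as a 32-bit float."""
    return struct.unpack("<f", struct.pack("<f", value))[0] == value


def count_lossy_promotions() -> int:
    """Count FP16 bit patterns whose value changes when stored as FP32."""
    lossy = 0
    for bits in range(0x10000):
        value = half_bits_to_float(bits)
        if math.isnan(value):
            continue  # NaN never compares equal to itself, so skip it
        if not survives_fp32(value):
            lossy += 1
    return lossy


print(f"FP16 values altered by promotion to FP32: {count_lossy_promotions()}")
```

The check only covers storing values, not arithmetic: results computed in FP32 and then written back to an FP16 buffer still have to be rounded to FP16 on the way out.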

owan - Wednesday, July 20, 2016 - link

Only 2 months late and published the day after a different major GPU release. What happened to this place?