Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

DirectX 12 Multi-GPU Performance

Shifting gears, let’s take a look at multi-GPU performance on the latest Ashes beta. The focus of our previous article, Ashes’ support for DX12 explicit multi-GPU makes it the first game to support pairing up RTG and NVIDIA GPUs in an AFR setup. Like traditional same-vendor AFR configurations, Ashes’ AFR setup works best when both GPUs are similar in performance, so although this technology does allow for some unusual cross-vendor comparisons, it does not (yet) benefit from pairing up GPUs that differ widely in performance, such as a last-generation video card with a current-generation video card. Nonetheless, running a Radeon and a GeForce card together is an interesting sight, if only for the sheer audacity of it.
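To see why AFR favors evenly matched cards, consider an idealized model. This is our own simplification and not a description of how Ashes or DX12 schedules work internally: frames alternate strictly between the two GPUs and are presented in order, so the pair can deliver at most twice the rate of the slower card. The function name and the sample frame rates below are hypothetical.

```python
def afr_ideal_fps(gpu_a_fps: float, gpu_b_fps: float) -> float:
    """Upper bound on AFR throughput under strict frame alternation.

    Assumption: each GPU renders every other frame and frames are shown
    in order, so the slower GPU paces the pair. Synchronization and
    transfer overhead are ignored.
    """
    return 2 * min(gpu_a_fps, gpu_b_fps)


# Hypothetical single-card frame rates, for illustration only.
print(afr_ideal_fps(50, 50))  # matched pair: 100
print(afr_ideal_fps(50, 25))  # mismatched pair: 50
```

Under this model the mismatched pair tops out at 50fps, which is no faster than the quicker card running alone.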

Meanwhile, the significant performance optimizations between the last beta build and this latest build have had an equally significant knock-on effect on multi-GPU performance compared to the last time we looked at the game.

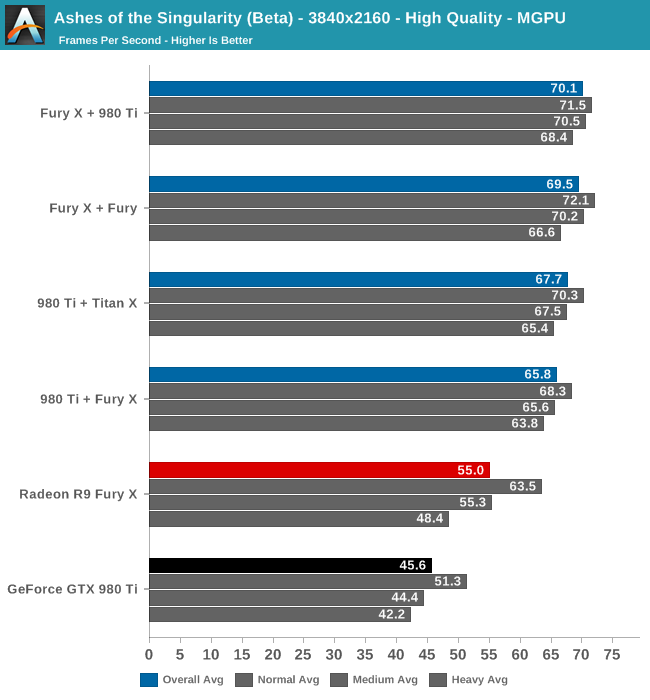

Even at 4K a pair of GPUs ends up being almost too much at Ashes’ High quality setting. All four multi-GPU configurations are over 60fps, with the fastest Fury X + 980 Ti configuration nudging past 70fps. Meanwhile the lead over our two fastest single-GPU configurations is not especially great, particularly compared to the Fury X, with the Fury X + 980 Ti configuration only coming in 15fps (27%) faster than a single GPU. The all-NVIDIA comparison does fare better in this regard, but only because of GTX 980 Ti’s lower initial performance.
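As a quick sanity check on those figures, the absolute and relative gains together imply the underlying frame rates. The sketch below works only from the rounded numbers quoted in the text (15fps and 27%), so the results are approximate; the helper name is ours.

```python
def baseline_from_gain(delta_fps: float, gain: float) -> float:
    """Recover the baseline frame rate from an absolute and a relative gain."""
    return delta_fps / gain


# Rounded figures from the text: +15fps is a 27% improvement.
single_gpu = baseline_from_gain(15.0, 0.27)
dual_gpu = single_gpu + 15.0
print(f"single: {single_gpu:.1f} fps, dual: {dual_gpu:.1f} fps")
# prints "single: 55.6 fps, dual: 70.6 fps"
```

A dual-GPU result of roughly 70.6fps is consistent with the fastest configuration "nudging past 70fps".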

Digging deeper, what we find is that even at 4K we’re actually CPU limited according to the benchmark data. Across all four multi-GPU configurations, our hex-core overclocked Core i7-4960X can only set up frames at roughly 70fps, versus 100fps+ for a single-GPU configuration.

Top: Fury X. Bottom: Fury X + 980 Ti

The increased CPU load from utilizing multi-GPU is to be expected, as the CPU now needs to spend time synchronizing the GPUs and waiting on them to transfer data between each other. However dropping to 70fps means that Ashes has become a surprisingly heavy CPU test as well, and that 4K at high quality alone isn’t enough to max out our dual GPU configurations.
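One simple way to reason about this kind of cap is to treat the delivered frame rate as the lower of what the CPU can set up and what the GPUs can render. This is a simplified model for illustration, not how the Ashes benchmark computes its numbers. The CPU figures (roughly 70fps and 100fps) come from the text above; the GPU figures are hypothetical.

```python
def delivered_fps(cpu_fps: float, gpu_fps: float) -> float:
    """Frame rate under a simple bottleneck model: the slower stage wins."""
    return min(cpu_fps, gpu_fps)


def limiting_stage(cpu_fps: float, gpu_fps: float) -> str:
    """Name the stage that caps the frame rate in this model."""
    return "CPU" if cpu_fps < gpu_fps else "GPU"


# Dual GPU: CPU sets up ~70fps (from the text); GPU rate of 90 is hypothetical.
print(delivered_fps(70, 90), limiting_stage(70, 90))

# Single GPU: CPU sets up ~100fps (from the text); GPU rate of 55 is hypothetical.
print(delivered_fps(100, 55), limiting_stage(100, 55))
```

In this model, adding a second GPU raises the GPU-side ceiling while the extra synchronization work lowers the CPU-side one, which is how a dual-GPU configuration can end up CPU limited even at 4K.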

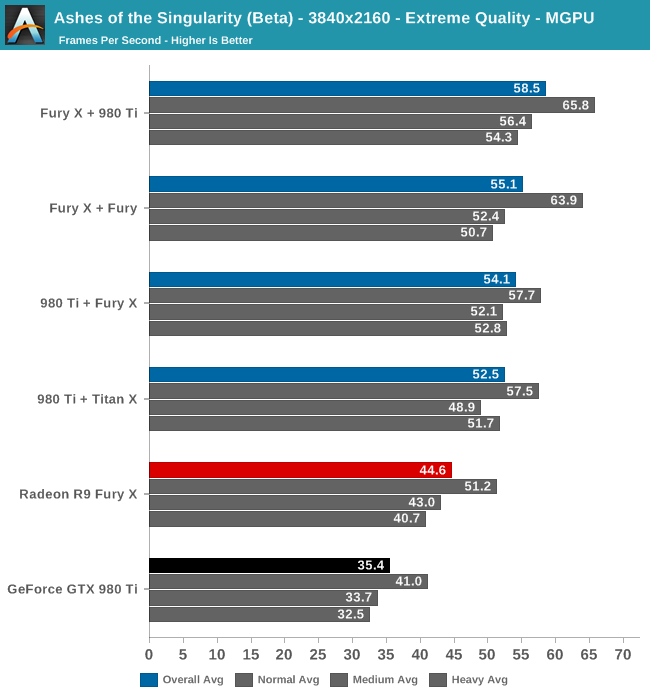

Cranking up the quality setting to Extreme finally gives our dual-GPU configurations enough of a workload to back off from the CPU performance cap. Once again the fastest configuration is the Fury X + 980 Ti, which lands just short of 60fps, followed by the Fury X + Fury configuration at 55.1fps. In our first look at Ashes multi-GPU scaling we found that having a Fury X card as the lead card resulted in better performance, and this has not changed for the newest beta. The Fury continues to be faster at reading data off of other cards. Still, the gap between the Fury X + 980 Ti configuration and the 980 Ti + Fury X configuration has closed some as compared to last time, and now stands at 11%.

Backing off from the CPU limit has also put the multi-GPU configurations well ahead of the single-GPU configurations. We’re now looking at upwards of a 65% performance boost versus a single GTX 980, and a smaller 31% performance boost versus a single Fury X. These are smaller gains for multi-GPU configurations than we first saw last year, but it’s also very much a consequence of Ashes’ improved performance across the board. Though we didn’t have time to test it, Ashes does have one higher quality setting – Crazy – which may drive a bit of a larger wedge between the multi-GPU configurations and the Fury X, though the overhead of synchronization will always present a roadblock.

153 Comments

Kouin325 - Friday, February 26, 2016 - link

yes indeed they will be patching DX12 into the game, AFTER all the PR damage from the low benchmark scores is done. Nvidia waved some cash at the publisher/dev to make it a gameworks title, make it DX11, and to lock AMD out of making a day 1 patch. This was done to keep the general gaming public from learning that the Nvidia performance crown will all but disappear or worse under DX12. So they can keep selling their cards like hotcakes for another month or two.

Also, Xbox hasn't been moved over to DX12 proper YET, but the DX11.x that the Xbox one has always used is by far closer to DX12 than DX11 for the PC. I think we'll know for sure what the game was developed for after the patch comes out. If the game gets a big performance increase after the DX12 patch then it was developed for DX12, and NV possibly had a hand in the DX11 for PC release. If the increase is small then it was developed for DX11.

Reason being that getting the true performance of DX12 takes a major refactor of how assets are handled and pretty major changes to the rendering pipeline. Things that CANNOT be done in a month or two or how long this patch is taking to come out after release.

Saying "we support DirectX12" is fairly easy and only takes changing a few lines of code, but you won't get the performance increases that DX12 can bring.

Madpacket - Friday, February 26, 2016 - link

With the lack of ethics Nvidia has displayed, this wouldn't surprise me in the least. Gameworks is a sham - https://www.youtube.com/watch?v=O7fA_JC_R5s

keeepcool - Monday, February 29, 2016 - link

Finally!..I can't even grasp the concept of how low rez and crappy the graphics look on this thing and everybody is praising this "game" and its benchmarks of dubious accuracy.

It looks BAD, it's choppy and pixelated, there is a simple terrain and small units that look like sprites from Dune 2000 and this thing makes a high end GPU cry to run at 60fps??....

hpglow - Wednesday, February 24, 2016 - link

No insults in his post. Sorry you get your butt hurt whenever someone points out the facts. There are few Direct X 12 pieces of software outside of tech demos and canned benchmarks available. Nvidia has better things to do than appease the arm-chair quarterbacks of the comments section. Like optimize for games we are playing right now. Whether Nvidia cards are getting poor or equal performance in DX 12 titles to their DX 11 counterparts is irrelevant right now. We can talk all we want but until there is a DX 12 title worth putting $60 down on and that title actually gains enough FPS to increase the gameplay quality then the conversation is moot.

Your first post was trolling and you know it.

at80eighty - Wednesday, February 24, 2016 - link

there is definitely a disproportion in responses - in the exact inverse you described. review your own post for more chuckles.

Flunk - Thursday, February 25, 2016 - link

What? How dare you suggest that the fans of the great Nvidia might share some of the blame! Guards arrest this man for treason!

Mondozai - Thursday, February 25, 2016 - link

"No insults in his post."

Yeah, except that one part where he called him a fanboy. Yeah, totally no insults.

Seriously, is the Anandtech comment section devolving into Wccftech now? Is it still possible to have intelligent arguments about tech on the internet without idiots crawling all over the place? Thanks.

Mr Perfect - Thursday, February 25, 2016 - link

Arguments are rarely intelligent.

MattKa - Thursday, February 25, 2016 - link

If fanboy is an insult you are the biggest pussy in the world.

IKeelU - Thursday, February 25, 2016 - link

"Trolling" usually implies deliberate obtuseness in order to annoy. Itchypoot's posts read like a newb's or fanboy's (likely a bit of both) who simply doesn't understand how evidence and logic factor into civilized debate.