Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

DirectX 12 vs. DirectX 11

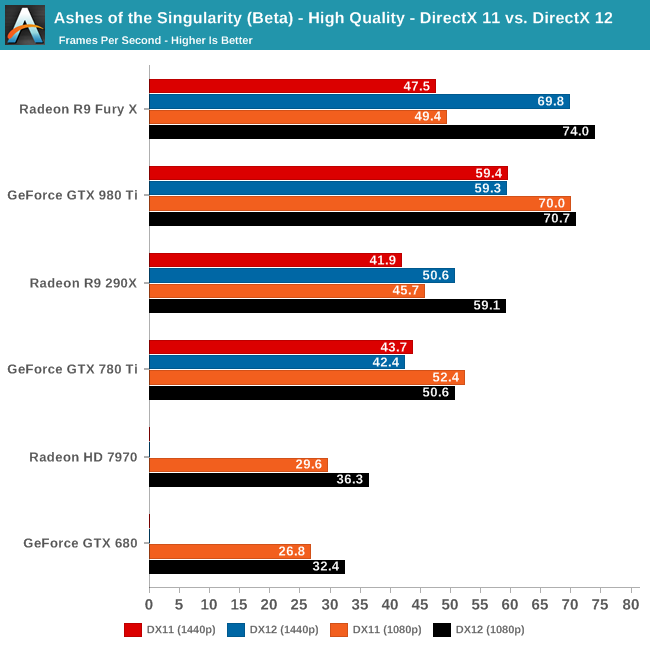

Now that we’ve had the chance to look at DirectX 12 performance, let’s take a look at things with DirectX 11 thrown into the mix. As a reminder, while the two rendering paths are graphically identical, the DirectX 12 path brings that API’s multi-core scalability along with asynchronous shading functionality. The game and the underlying Nitrous engine are designed to take advantage of both, but particularly the multi-core functionality, as the game pushes some very high batch counts.

Given that we had never benchmarked Ashes under DirectX 11 before, what we had been expecting was a significant performance regression when switching to it. Instead what we found was far more surprising.

On the RTG side of matters, there is a large performance gap between DX11 and DX12 at all resolutions, and the gap grows with the overall performance of the video card being tested. Even on the R9 290X and the 7970, using DX12 is a no-brainer, as it improves performance by 20% or more.

The big surprise, however, is with the NVIDIA cards. For the more powerful GTX 980 Ti and GTX 780 Ti, NVIDIA doesn’t gain anything from the DX12 rendering path; in fact they lose a percent or two in performance. This means that they have very good performance under DX11 (particularly the GTX 980 Ti), but it’s not doing them any favors under DX12, where as we’ve seen RTG has a rather consistent performance lead. In the past NVIDIA has gone to some pretty extreme lengths to optimize the CPU usage of their DX11 driver, so this may be the payoff from general optimizations, or even a round of Ashes-specific optimizations.

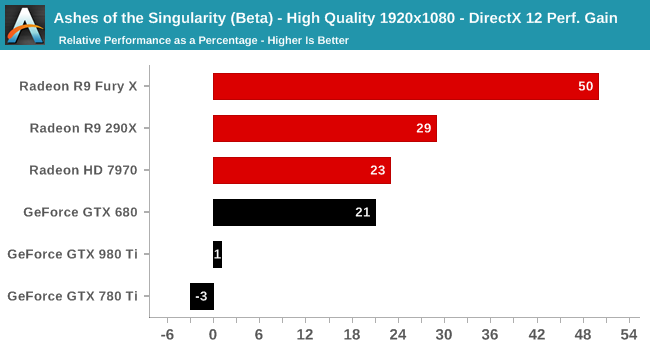

Breaking down the gains on a percentage basis at 1080p, the most CPU-demanding resolution, we find that the Fury X picks up a full 50% from DX12, followed by 29% and 23% for the R9 290X and 7970 respectively. Meanwhile at the opposite end of the spectrum are the GTX 980 Ti and GTX 780 Ti, which lose 1% and 3% respectively.

Finally, right in the middle of all of this is the GTX 680. Given what happens to the architecturally similar GTX 780 Ti, this may be a case of GPU memory limitations (this is the only 2GB NVIDIA card in this set), as there’s otherwise no reason to expect the weakest NVIDIA GPU to benefit the most from DX12.
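For readers who want to reproduce this kind of breakdown from their own benchmark runs, the percentage figures above are presumably the relative change in average frame rate between the two rendering paths. A minimal sketch of that arithmetic follows; the function name `percent_gain` and the frame rates are hypothetical and chosen only for illustration, not taken from our results.

```python
def percent_gain(dx11_fps: float, dx12_fps: float) -> float:
    """Relative change in frame rate when moving from DX11 to DX12, in percent.

    Positive values mean DX12 is faster; negative values mean it is slower.
    """
    if dx11_fps <= 0:
        raise ValueError("DX11 frame rate must be positive")
    return (dx12_fps - dx11_fps) * 100.0 / dx11_fps


# Hypothetical frame rates, chosen only to illustrate the arithmetic.
# They are NOT measurements from this article.
examples = {
    "card_a": (40.0, 60.0),   # faster under DX12
    "card_b": (100.0, 97.0),  # slightly slower under DX12
}

for name, (dx11, dx12) in examples.items():
    print(f"{name}: {percent_gain(dx11, dx12):+.1f}%")
```

Note that the DX11 result is the baseline, so a card with a weak DX11 score shows a larger percentage gain for the same absolute DX12 frame rate.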

Overall then this neatly illustrates why RTG in particular has been so gung-ho about DX12, as Ashes’ DX12 path has netted them a very significant increase in performance. To some degree however what this means is a glass half full/half empty situation; RTG gains so much from DX12 in large part because of their poorer DX11 performance (especially on the faster cards), but on the other hand a “simple” API change has unlocked a great deal of GPU power that wasn’t otherwise being used and vaulted them well into the lead. As for NVIDIA, is it that their cards don’t benefit from DX12, or is it that their DX11 driver stack is that good to begin with? At the end of the day Ashes is just a single game – and a beta game at that – but it will be interesting to see if this is a one-off situation or if it becomes recurring.

153 Comments

Kouin325 - Friday, February 26, 2016 - link

yes indeed they will be patching DX12 into the game, AFTER all the PR damage from the low benchmark scores is done. Nvidia waved some cash at the publisher/dev to make it a gameworks title, make it DX11, and to lock AMD out of making a day 1 patch.

This was done to keep the general gaming public from learning that the Nvidia performance crown will all but disappear or worse under DX12. So they can keep selling their cards like hotcakes for another month or two.

Also, Xbox hasn't been moved over to DX12 proper YET, but the DX11.x that the Xbox one has always used is by far closer to DX12 than DX11 for the PC. I think we'll know for sure what the game was developed for after the patch comes out. If the game gets a big performance increase after the DX12 patch then it was developed for DX12, and NV possibly had a hand in the DX11 for PC release. If the increase is small then it was developed for DX11.

Reason being that getting the true performance of DX12 takes a major refactor of how assets are handled and pretty major changes to the rendering pipeline. Things that CANNOT be done in a month or two or how long this patch is taking to come out after release.

Saying "we support DirectX12" is fairly easy and only takes changing a few lines of code, but you won't get the performance increases that DX12 can bring.

Kouin325 - Friday, February 26, 2016 - link

ugh, I think Firefox had a brainfart, sorry for the TRIPLE post.... *facepalm*

Gothmoth - Friday, February 26, 2016 - link

it´s a crap game anyway so who cares?

honestly even when nvidia should be 20% worse i would not buy ATI.

not because im a fanboy.. but i use my GPU´s for more than games and ATI GPUs suck big time when it comes to driver stability in pro applications.

D. Lister - Friday, February 26, 2016 - link

Oxide and their so called "benchmarks" are a joke. Anyone who takes the aforementioned seriously, is just another unwitting victim of AMD's typical underhanded marketing.

https://scalibq.wordpress.com/2015/09/02/directx-1...

"And don’t get me started on Oxide… First they had their Star Swarm benchmark, which was made only to promote Mantle (AMD sponsors them via the Gaming Evolved program). By showing that bad DX11 code is bad. Really, they show DX11 code which runs single-digit framerates on most systems, while not exactly producing world-class graphics. Why isn’t the first response of most people as sane as: “But wait, we’ve seen tons of games doing similar stuff in DX11 or even older APIs, running much faster than this. You must be doing it wrong!”?

But here Oxide is again, in the news… This time they have another ‘benchmark’ (do these guys actually ever make any actual games?), namely “Ashes of the Singularity”.

And, surprise surprise, again it performs like a dog on nVidia hardware. Again, in a way that doesn’t make sense at all… The figures show it is actually *slower* in DX12 than in DX11. But somehow this is spun into a DX12 hardware deficiency on nVidia’s side. Now, if the game can get a certain level of performance in DX11, clearly that is the baseline of performance that you should also get in DX12, because that is simply what the hardware is capable of, using only DX11-level features. Using the newer API, and optionally using new features should only make things faster, never slower. That’s just common sense."

Th-z - Saturday, February 27, 2016 - link

“But wait, we’ve seen tons of games doing similar stuff in DX11 or even older APIs..."

Doing similar stuff in DX11? What stuff and what games?

"The figures show it is actually *slower* in DX12 than in DX11. But somehow this is spun into a DX12 hardware deficiency on nVidia’s side."

Which figure?

This is Anandtech, we need to be more specific and provide solid evidence to back up your claims in order to avoid sounding like an astroturfer.

D. Lister - Saturday, February 27, 2016 - link

You see my post? You see that there is this underlined text in blue? Well my friend, it is called a URL, which is an acronym for "Uniform Resource Locator", long story short it is this internet thingy that you go clickity-clickity with your mouse and it opens another page, where you can find the rest of the information.

Don't worry, the process of opening a new webpage by using a URL may APPEAR quite daunting at first, but with very little practice you could be clicking away like a pro. This is after all "The AnandTech", and everybody is here to help. Heck, who knows if there are more like you out there, I might even make a video tutorial - "Open new webpages in 3 easy steps", or something.

PS: Another pro tip, there is no such thing as "solid evidence" outside of a court of law. On the internet, you have information resources and reference material, and you have to use your own first-hand knowledge, experience and commonsense to differentiate the right from wrong.

Th-z - Sunday, May 29, 2016 - link

Your blabbering is as useful as your link. I have a pro tip for you: you gave yourself away.

EugenM - Tuesday, June 7, 2016 - link

@Th-z Dont feed the troll.

GeneralTom - Saturday, February 27, 2016 - link

I hope Metal will be supported, too.

HollyDOL - Monday, February 29, 2016 - link

Hm, from the screenshots posted I honestly can't see why would there be a need to run Dx12 with so "low performance" even on the most elite cards. While I give these guys credits for having the guts to go and develop in completely new API, the graphics looks more like early Dx9 games.

Just a note this opinion is based on screenshots, not actual live render, but still from what I see there I'd expect FPS hitting 120+ with Dx11...