Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

DirectX 12 Single-GPU Performance

We’ll start things off with a look at single-GPU performance. For this, we’ve grabbed a collection of RTG and NVIDIA GPUs covering the entire DX12 generation, from GCN 1.0 and Kepler to GCN 1.2 and Maxwell. This will give us a good idea of both how the game performs across a wide span of GPU performance levels and how (if at all) the various GPU generational changes play a role.

Meanwhile, unless otherwise noted, we’re using Ashes’ High quality setting, which turns up a number of graphical features and also utilizes 2x MSAA. It’s also worth mentioning that while Ashes does allow async shading to be turned off and on, this option is on by default unless turned off in the game’s INI file.

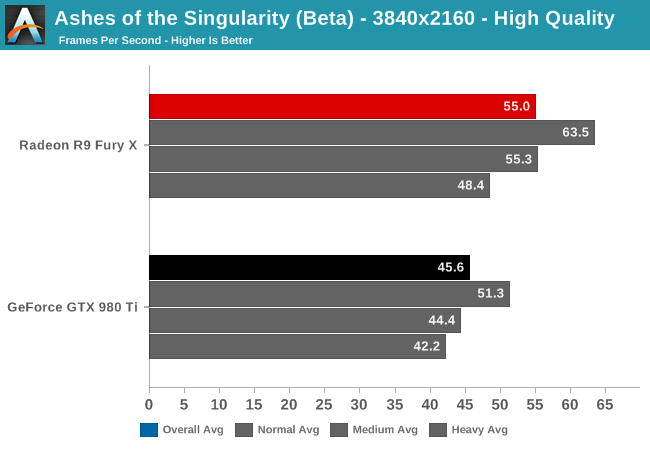

Starting at 4K, we have the GeForce GTX 980 Ti and Radeon R9 Fury X. On the latest beta the Fury X has a strong lead over the normally faster GTX 980 Ti, beating it by 20% and coming close to hitting 60fps.

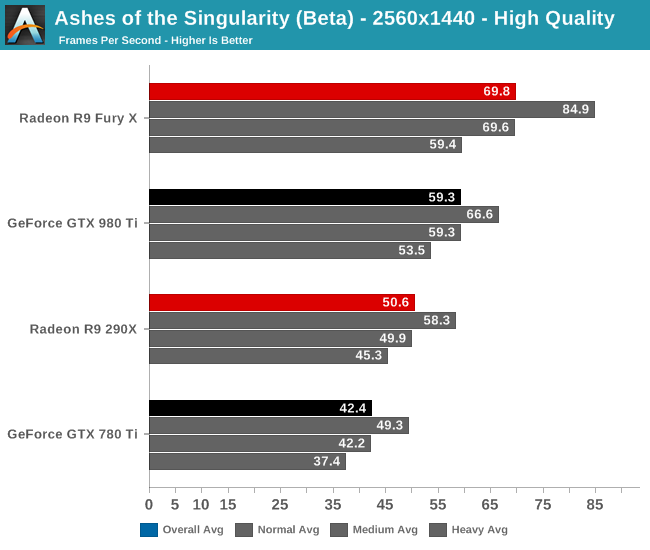

When we drop down to 1440p and introduce last generation’s flagship video cards, the GeForce GTX 780 Ti and Radeon R9 290X, the story is much the same. The Fury X continues to hold a 10fps lead over the GTX 980 Ti, giving it an 18% lead. Similarly, the R9 290X has an 8fps lead over the 780 Ti, translating into a 19% performance lead. This is a significant turnabout from where we normally see these cards, as the 780 Ti traditionally holds a lead over the 290X.

Meanwhile, looking at the average framerates with different batch count intensities, there admittedly isn’t much remarkable here. All cards take roughly the same performance hit as batch counts grow larger.
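For readers who want to sanity-check how an absolute lead in fps relates to a percentage lead, the arithmetic can be sketched as below. This is a minimal illustration and not part of the benchmark tooling: the function names `percent_lead` and `implied_baseline` are hypothetical, and because the figures quoted in the text are rounded, any baseline derived from them is only approximate.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how far fps_a is ahead of fps_b, as a percentage of fps_b."""
    if fps_b <= 0:
        raise ValueError("baseline framerate must be positive")
    return (fps_a - fps_b) / fps_b * 100.0


def implied_baseline(lead_fps: float, lead_percent: float) -> float:
    """Estimate the slower card's framerate from a lead given in fps and in percent.

    Both inputs are rounded figures from the text, so the result is a rough estimate.
    """
    if lead_percent <= 0:
        raise ValueError("percentage lead must be positive")
    return lead_fps / (lead_percent / 100.0)


if __name__ == "__main__":
    # Rounded figures quoted in the text for the 1440p results.
    gtx_980_ti = implied_baseline(lead_fps=10, lead_percent=18)
    gtx_780_ti = implied_baseline(lead_fps=8, lead_percent=19)
    print(f"Implied GTX 980 Ti average: ~{gtx_980_ti:.0f} fps")
    print(f"Implied GTX 780 Ti average: ~{gtx_780_ti:.0f} fps")
```

Under these rounded inputs, a 10fps lead at 18% implies the GTX 980 Ti is averaging somewhere in the mid-50s, and an 8fps lead at 19% puts the 780 Ti in the low 40s; the charts remain the authoritative source for the exact values.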

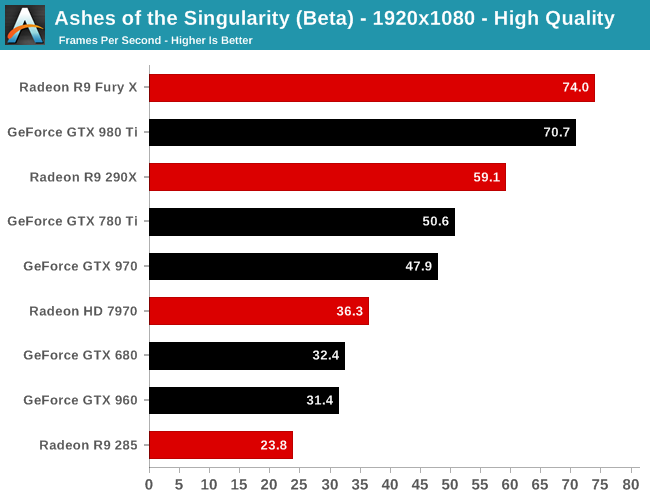

Finally, at 1080p with our full lineup of cards, we can see that RTG’s lead in this latest beta is nearly absolute. The 2012 flagship battle between the 7970 and the GTX 680 puts the 7970 in the lead by 12%, or just shy of 4fps. Elsewhere the GTX 980 Ti does close on the Fury X, but RTG’s current-gen flagship remains in the lead.

The one outlier here is the Radeon R9 285, which is the only 2GB RTG card in our collection. At this point we suspect it’s VRAM limited, but it would require further investigation.

153 Comments

knightspawn1138 - Thursday, February 25, 2016 - link

I think that Radeon's advantage in DX12 comes from the fact that most of DX12's new features were similar to features AMD wrote into the Mantle API. They've been designing their recent cards to take advantage of the features they built for Mantle, and now that DX12 includes many of those features, their cards essentially get a head-start in optimization.

If Radeon and NVidia were running a 100-yard dash, it just means that Radeon's starting block is about 5 yards ahead of NVidia's. I (personally) think that NVidia's still the stronger runner, and they easily have the potential to catch up to Radeon's head start if they optimize their drivers some more. And, honestly, a 4fps gap should not be enough of a reason to walk away from whichever brand you already prefer.

I still prefer NVidia due to the lower power consumption, friendlier drivers, 3D glasses, and game streaming they've had for a few years. I used to like ATI cards, but when the Catalyst Control Center started sucking more cycles out of my CPU than the 3D games, I switched to NVidia.

I would also like to have seen the GTX 970 in some of these benchmarks. I understand benchmarking the highest-end cards, but I hope that when the game is out of beta and being used as an official DX12 benchmark, we get some numbers from the more affordable cards.

Shadowmaster625 - Thursday, February 25, 2016 - link

Of course Nvidia's performance doesn't go up under DX12. That is no doubt intentional. Why would they improve their current cards when they can sell all new ones to the same gullible fools that fell for that trick the last time around?

Denithor - Thursday, February 25, 2016 - link

I had exactly the same thought. AMD may have shot themselves in the foot. Everyone using their cards is going to see a 10-20% boost in performance, meaning they may not need an upgrade this cycle.

silverblue - Thursday, February 25, 2016 - link

Perhaps, but DX12's lower overhead would just encourage devs to make even more complex scenes. Result: same performance, better visuals.

All the people jumping on NVIDIA need to be careful, as there are parts of the DX12 spec that they support better than AMD. Give it a year to eighteen months and we'll see how this pans out.

K_Space - Sunday, February 28, 2016 - link

Having read all 13 pages of comments I was surprised no one mentioned this either. I'll certainly be keeping my 295x2 for at least the next 18 months if not 24 months. With AFR and VR coming up, dual GPUs are the way to go.

Drake O - Thursday, February 25, 2016 - link

I was really hoping to see the benefits of sharing workload with the iGPU. Not everyone has multiple GPUs (but I do) but most people have a CPU with onboard graphics. If people with graphics cards can finally start using this resource that would be a very good thing for a tremendous number of users. Please follow this article up as soon as possible with one on this area. Maybe one percent of users have different brand video cards lying around, maybe five percent have multiple similar GPUs, but almost everyone has a video card and an unused iGPU on their CPU. This is the obvious first direction to take.

rhysiam - Thursday, February 25, 2016 - link

This is (sort of) covered in the article and covered clearly in the comments above. This particular game is only using AFR, and the devs have clearly said (as noted in the article) that you'll never get more than double the performance of the slower graphics solution. Just about any discrete GPU worthy of the name will be more than double the performance of an IGP and therefore is better (for this game) run on its own.

DX12 opens up a raft of possibilities to use any and all available graphics resources, including IGPs, but it leaves the responsibility entirely with the game developers. For this game at this time, the devs aren't looking to make use of onboard graphics unless it's paired with a similarly anaemic GPU.
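The AFR ceiling described in this comment can be sketched in a few lines. This is an idealized model under stated assumptions, not the game's actual scheduler: the function names and the example framerates are hypothetical, and the only rule taken from the discussion is that the pair never exceeds double the slower device.

```python
def afr_upper_bound(fps_a: float, fps_b: float) -> float:
    """Best-case AFR framerate: the pair cannot exceed double the slower device."""
    if fps_a <= 0 or fps_b <= 0:
        raise ValueError("framerates must be positive")
    return 2.0 * min(fps_a, fps_b)


def afr_can_help(fps_a: float, fps_b: float) -> bool:
    """True if the AFR ceiling is higher than simply running the faster device alone."""
    return afr_upper_bound(fps_a, fps_b) > max(fps_a, fps_b)


if __name__ == "__main__":
    # Hypothetical framerates chosen only to illustrate the rule.
    discrete_fps, igp_fps = 60.0, 15.0
    print("AFR ceiling:", afr_upper_bound(discrete_fps, igp_fps))
    print("Worth pairing:", afr_can_help(discrete_fps, igp_fps))
```

With these made-up numbers the ceiling for the pair is 30fps, half of what the discrete card manages alone, which is why pairing only makes sense when the two devices are within a factor of two of each other.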

Drake O - Thursday, February 25, 2016 - link

If virtually everyone can boost their framerate by 20% at no cost then it is a big thing. Most people want one processor and one video card. Multiple video cards offer a very real performance boost but there is a downside: more power, more heat and frequent compatibility issues. With DX12 developers can send the post processing to the iGPU and let the video card handle the rest. Again a 20% performance boost for free. Only then should you think about the much smaller market that wants to run with multiple video cards.

Kouin325 - Friday, February 26, 2016 - link

Yes indeed they will be patching DX12 into the game, AFTER all the PR damage from the low benchmark scores is done. Nvidia waved some cash at the publisher/dev to make it a gameworks title, make it DX11, and to lock AMD out of making a day 1 patch.

This was done to keep the general gaming public from learning that the Nvidia performance crown will all but disappear or worse under DX12. So they can keep selling their cards like hotcakes for another month or two.

Also, Xbox hasn't been moved over to DX12 proper YET, but the DX11.x that the Xbox One has always used is by far closer to DX12 than DX11 for the PC. I think we'll know for sure what the game was developed for after the patch comes out. If the game gets a big performance increase after the DX12 patch then it was developed for DX12, and NV possibly had a hand in the DX11 for PC release. If the increase is small then it was developed for DX11.

Reason being that getting the true performance of DX12 takes a major refactor of how assets are handled and pretty major changes to the rendering pipeline. Things that CANNOT be done in a month or two, or however long this patch is taking to come out after release.

Saying "we support DirectX12" is fairly easy and only takes changing a few lines of code, but you won't get the performance increases that DX12 can bring.