Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

DirectX 12 vs. DirectX 11

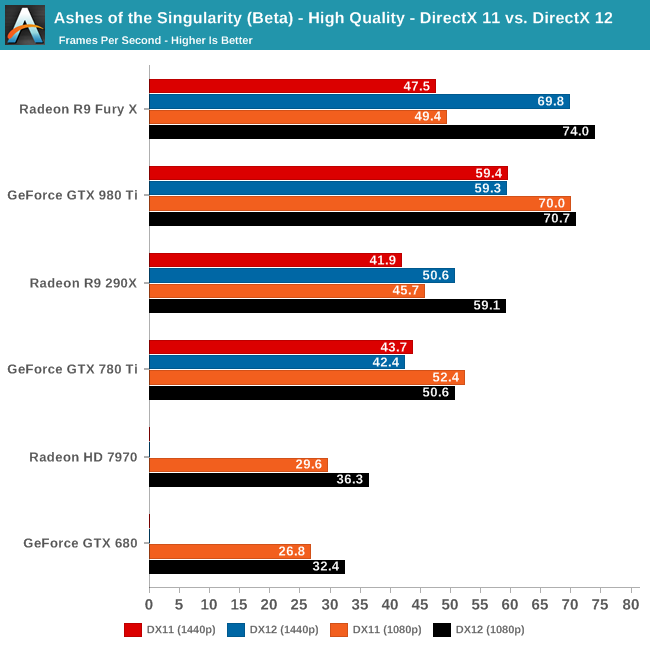

Now that we’ve had the chance to look at DirectX 12 performance, let’s take a look at things with DirectX 11 thrown into the mix. As a reminder, while the two rendering paths are graphically identical, the DirectX 12 path introduces that API’s multi-core scalability along with asynchronous shading functionality. The game and the underlying Nitrous engine are designed to take advantage of both, but particularly the multi-core functionality, as the game pushes some very high batch counts.
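As a rough illustration of why high batch counts favor a multi-core-friendly API, the sketch below splits a frame's batches across worker threads, has each worker record its own command list, and then submits the lists in their original order. It is a conceptual model only: `record_command_list` and `build_frame` are hypothetical names, the "commands" are plain strings, and nothing here is taken from the Nitrous engine or the Direct3D 12 API.

```python
from concurrent.futures import ThreadPoolExecutor


def record_command_list(batches):
    """Stand-in for recording one command list from a chunk of batches."""
    return [f"draw({b})" for b in batches]


def build_frame(batches, workers=4):
    """Record command lists on several threads, then submit them in order."""
    # Ceiling division so every batch lands in exactly one contiguous chunk.
    size = max(1, -(-len(batches) // workers))
    chunks = [batches[i:i + size] for i in range(0, len(batches), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order, regardless of which
        # worker finishes first, so submission order stays deterministic.
        command_lists = list(pool.map(record_command_list, chunks))
    # "Submission": concatenate the recorded lists in their original order.
    return [cmd for command_list in command_lists for cmd in command_list]


if __name__ == "__main__":
    frame = build_frame(list(range(10)), workers=3)
    print(len(frame), "commands submitted")
```

Python threads will not actually speed up this toy workload; the point is only the structure, in which recording is spread across threads while the submission order stays fixed.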

Given that we had never benchmarked Ashes under DirectX 11 before, what we had been expecting was a significant performance regression when switching to it. Instead what we found was far more surprising.

On the RTG side of matters, there is a large performance gap between DX11 and DX12 at all resolutions, increasing with the overall performance of the video card being tested. Even on the R9 290X and the 7970, using DX12 is a no-brainer, as it improves performance by 20% or more.

The big surprise, however, is with the NVIDIA cards. For the more powerful GTX 980 Ti and GTX 780 Ti, NVIDIA doesn’t gain anything from the DX12 rendering path; in fact they lose a percent or two in performance. This means that they have very good performance under DX11 (particularly the GTX 980 Ti), but it’s not doing them any favors under DX12, where as we’ve seen RTG has a rather consistent performance lead. In the past NVIDIA has gone to some pretty extreme lengths to optimize the CPU usage of their DX11 driver, so this may be the payoff from general optimizations, or even a round of Ashes-specific optimizations.

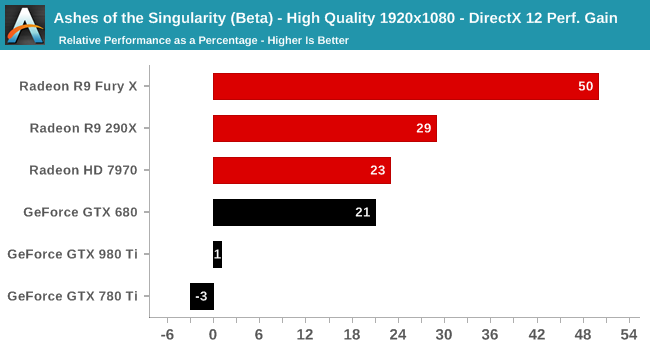

Breaking down the gains on a percentage basis at 1080p, the most CPU-demanding resolution, we find that the Fury X picks up a full 50% from DX12, followed by 29% and 23% for the R9 290X and 7970 respectively. Meanwhile at the opposite end of the spectrum are the GTX 980 Ti and GTX 780 Ti, which lose 1% and 3% respectively.
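For readers who want to reproduce this kind of breakdown from their own runs, the percentage is simply the change in average frame rate relative to the DX11 result. The sketch below assumes that definition; the function name `percent_change` and the frame rates are hypothetical placeholders, not the measured numbers behind the chart.

```python
def percent_change(dx11_fps: float, dx12_fps: float) -> float:
    """Relative frame-rate change going from DX11 to DX12, in percent."""
    if dx11_fps <= 0:
        raise ValueError("DX11 frame rate must be positive")
    return (dx12_fps - dx11_fps) / dx11_fps * 100.0


if __name__ == "__main__":
    # Hypothetical average frame rates, for illustration only.
    examples = {
        "card A": (40.0, 60.0),  # a large gain under DX12
        "card B": (50.0, 49.5),  # a slight regression under DX12
    }
    for name, (dx11, dx12) in examples.items():
        print(f"{name}: {percent_change(dx11, dx12):+.1f}%")
```

A positive value means the DX12 path is faster; a negative value means the card loses performance when switching away from DX11.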

Finally, right in the middle of all of this is the GTX 680. Given what happens to the architecturally similar GTX 780 Ti, this may be a case of GPU memory limitations (this is the only 2GB NVIDIA card in this set), as there’s otherwise no reason to expect the weakest NVIDIA GPU to benefit the most from DX12.

Overall then this neatly illustrates why RTG in particular has been so gung-ho about DX12, as Ashes’ DX12 path has netted them a very significant increase in performance. To some degree however what this means is a glass half full/half empty situation; RTG gains so much from DX12 in large part because of their poorer DX11 performance (especially on the faster cards), but on the other hand a “simple” API change has unlocked a great deal of GPU power that wasn’t otherwise being used and vaulted them well into the lead. As for NVIDIA, is it that their cards don’t benefit from DX12, or is it that their DX11 driver stack is that good to begin with? At the end of the day Ashes is just a single game – and a beta game at that – but it will be interesting to see if this is a one-off situation or if it becomes recurring.

153 Comments


Koenig168 - Wednesday, February 24, 2016

There is a brief mention of GTX 680 2GB "CPU memory limitations". I take it you mean "VRAM memory limitations". It would be interesting to know if this can be overcome by DX12 memory stacking, either a pair of GTX 680s or the GTX 690.

Ryan Smith - Wednesday, February 24, 2016

That was meant to be "GPU memory limitations", thanks for the catch.

B3an - Wednesday, February 24, 2016

Why is Beta 2 still not available on Steam? Have the media got early access? At the time of posting this there's still only Beta 1 available.

Ryan Smith - Wednesday, February 24, 2016

It's out to the public tomorrow.

hemipepsis5p - Wednesday, February 24, 2016

Hey, so I'm confused by the mixed GPU testing. I thought that both cards had to be the same in order to run them in SLI/Crossfire? How did they test a Fury X + 980Ti?

Ext3h - Wednesday, February 24, 2016

That's no longer the case with DX12. It used to be like this with DX11 and earlier versions, when the driver decided if/how to split the workload onto multiple GPUs, but with DX12 that choice is now up to the application.

So if the developer chooses to support asymmetric configurations, even cross vendor or exotic combinations like Intel IGP + AMD dGPU, then it can be made to work.

anubis44 - Thursday, February 25, 2016

I'm willing to bet that nVidia's Maxwell cards can't use DX12's async compute at all, and they're falling back to the DX11 code path, even when you 'enable' DX12 for them.

Ext3h - Thursday, February 25, 2016

You lose that bet.

The asynchronous compute term only defines how tasks are synchronized against each other, whereby the "asynchronous" term only states tasks won't block while waiting for each other. The default of doing that in software, in order to create a sequential schedule, is perfectly legit and fulfills the specification in whole.

Hardware support isn't required for this feature at all, even though you *can* optionally use hardware to perform much better than the software solution. Parallel execution does require hardware support and can bring a huge performance boost, but "asynchronous compute" does not specify that parallel execution would be required.

BradGrenz - Thursday, February 25, 2016

The whole point of async compute is to take advantage of parallel execution. It doesn't matter what nVidia's drivers tell an application; if it accepts these commands but is forced to reorder them for serial execution because the hardware can do nothing else, then it doesn't really support the technology at all. It'd be like claiming support for texture compression even though your driver has to decompress every texture to an uncompressed format before the GPU can read it. It doesn't matter if the application thinks compressed textures are being used if the hardware actually provides none of the benefits the technology intended (in this case more/larger textures in a given amount of VRAM, and in the case of async compute, more efficient utilization of shader ALUs).

Sajin - Thursday, February 25, 2016

"Update 02/24: NVIDIA sent a note over this afternoon letting us know that asynchronous shading is not enabled in their current drivers, hence the performance we are seeing here. Unfortunately they are not providing an ETA for when this feature will be enabled."

Source: http://www.anandtech.com/show/10067/ashes-of-the-s...