Examining Soft Machines' Architecture: An Element of VISC to Improving IPC

by Ian Cutress on February 12, 2016 8:00 AM EST

Posted in: CPUs, Arm, x86, Architecture, Soft Machines, IPC

The Data: Soft Machines' Proof

Ever since the initial announcement of the VISC architecture in 2014 there has been an element of it sounding too good to be true, and Soft Machines' 2016 announcements have come with yet more questions as well. Aside from questions requiring more information about the architecture and ISA, the big money questions relate to performance. We mentioned a couple of pages back that the original 28nm design made its way to silicon at 500 MHz and was shown as a proof of concept. At the 2014 conference, the platform was compared to both ARM and x86 and offered better scores on Denbench compared to both while also using less power. Now that Shasta is on the 16nm node, the big question is how the new design at a 2 GHz frequency compares, and whether the increase in frequency has upset some of the IPC gains.

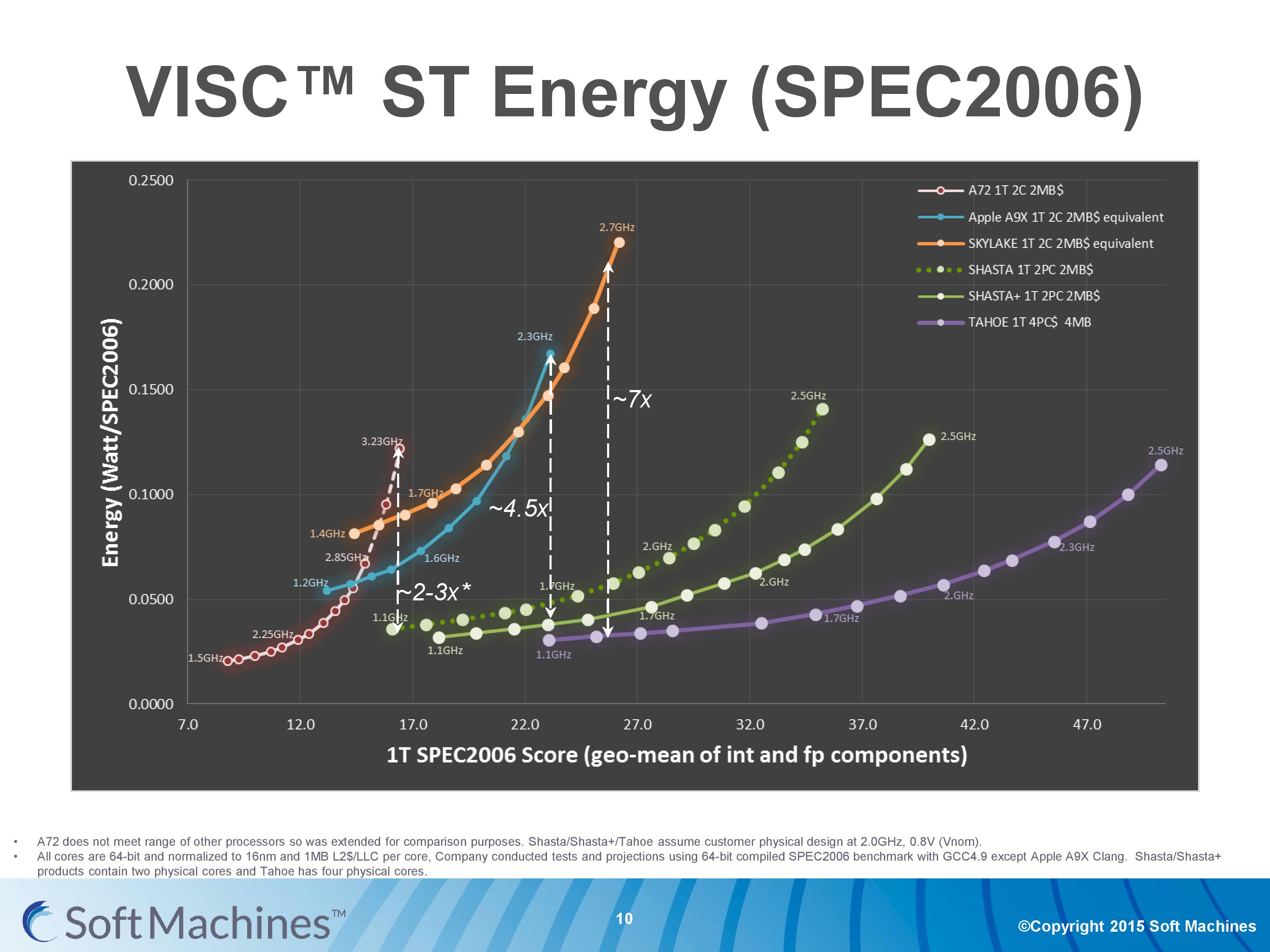

So it’s at this point that we have to show this graph before we can progress any further. This is a graph which has caused a lot of commotion among the analyst community, because it can be a very difficult graph to digest and work out what is going on. I’ll take you through it. But to start, ignore the vertical dashed lines.

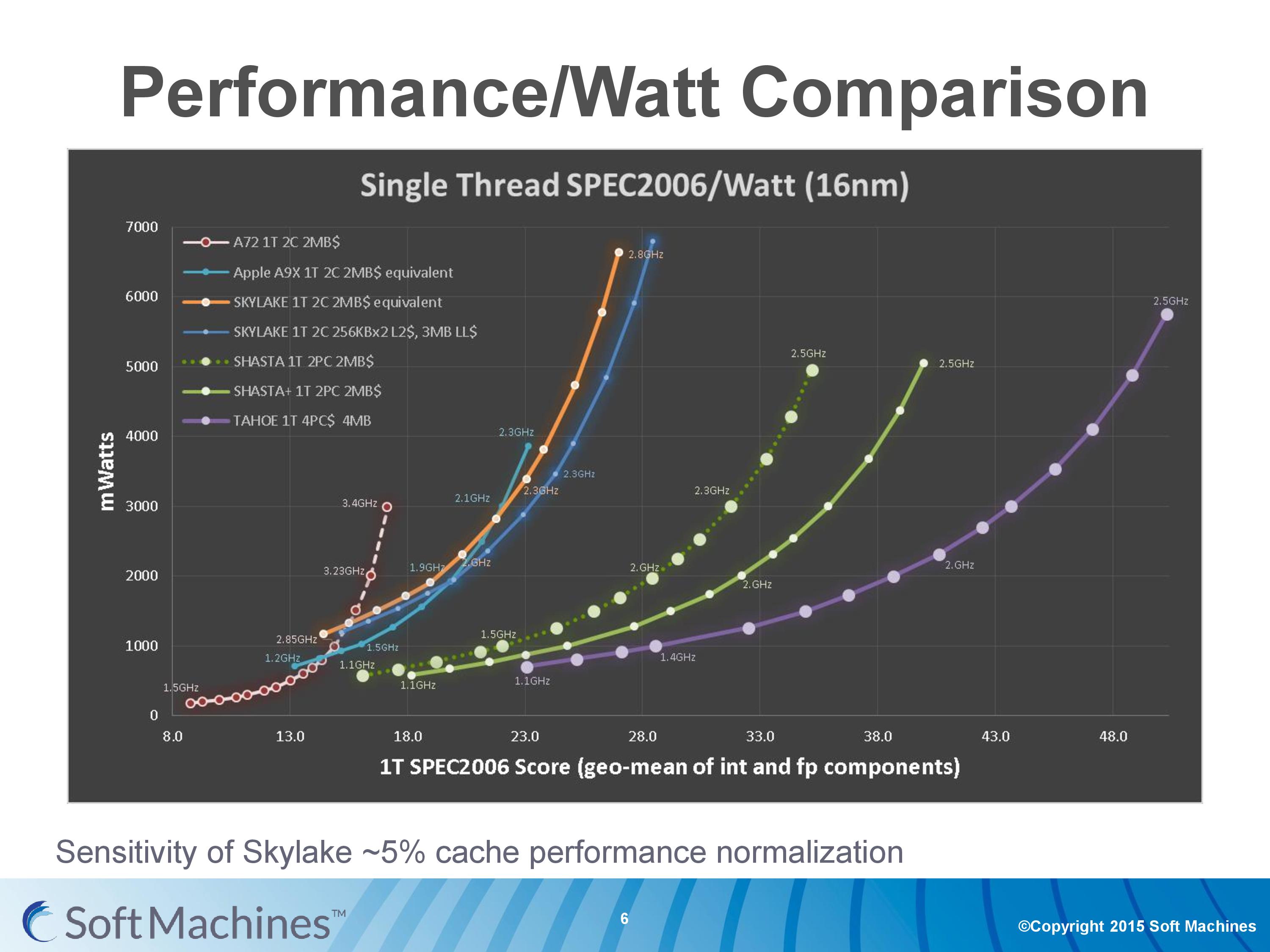

This is a graph from Soft Machines attempting to show the efficiency of several CPU cores: Cortex-A72, Apple’s Twister (A9X), Skylake, Shasta, Shasta+ (2017), and Tahoe (2018). It is a graph of the average power consumed per unit SPEC2006 score plotted against the SPEC2006 score, and each dot on a line shows the relative score of each CPU at a given frequency.
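To make the axes concrete, here is a minimal sketch of how one point on such a graph could be derived from a measurement. The function name `efficiency_points` and the sample values are invented for illustration; they are not Soft Machines' data or method.

```python
# Illustrative only: the sample values below are invented, not measured data.
def efficiency_points(samples):
    """Turn (frequency_ghz, spec_score, avg_power_w) samples into graph points.

    Returns (x, y) pairs where x is the SPEC2006 score and y is the average
    power consumed per unit of score, matching the graph's two axes.
    """
    return [(score, power / score) for _freq, score, power in samples]


# A hypothetical core measured at two frequencies.
samples = [(1.0, 8.0, 2.0), (2.0, 16.0, 8.0)]
points = efficiency_points(samples)
for score, watts_per_point in points:
    print(f"score={score:.1f}  power/score={watts_per_point:.3f} W")
```

On a graph built this way, a core that is further right scores higher, and one that is lower on the vertical axis spends less power per point of score.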

The reason why this graph has caused a lot of commotion is that it shows a lot of data based on a lot of assumptions displayed in a very odd way. The following points are worth mentioning:

| Issue | Detail |
|---|---|
| The Scores #1 | This graph shows the geometric mean of SPEC2006int and SPEC2006fp, the integer and floating point parts of the SPEC2006 set of benchmark tools. Because different architectures focus on integer and floating point performance to differing degrees (more units focused on INT or FP), these results are typically given separately, with individual subtest scores. Practically no-one in the industry puts them together as a geometric mean, which has some analysts wondering if there are certain subtests where VISC scores particularly low. |
| The Scores #2 | This graph shows only single-threaded results: each CPU is listed in the data as having two cores, but runs a single thread. This puts the Soft Machines cores in the best light, as a single thread has access to all the ports on two cores as well as two re-order buffers and two cores' worth of L2 cache. |
| Conversion #1 | All the results have been converted as if each CPU design has 1MB of last level cache per core. This means that designs such as the A9X and Skylake CPUs have had their cache reduced, and scores have been adjusted by ambiguous ‘industry standard techniques’ according to SMI. A number of analysts say that this is not a fair conversion, as an A9X core or Skylake core with less cache would be arranged differently in silicon to take advantage of more space for other things or lower latencies. |
| Conversion #2 | All the results have been converted to 16nm FinFET+ on TSMC, again by ‘industry standard techniques’. This is a hard one to grasp, because core designs are not simply ‘shrunk’ from one node to another. Similar to the cache situation, each process node can be optimized for metal layers and arrangement for latency and bandwidth optimizations. Each conversion, such as Intel’s 14nm to TSMC 16nm, or TSMC’s 28nm -> 16nm, would have to be thoroughly examined. Extrapolating accurately from 28nm to 16nm would be an exasperating task (and this level of extrapolation wouldn’t be acceptable even in a high school classroom as I pointed out). |
| Testing #1 | Not all points on the graph come from direct data. Each line has had several points taken from data and the rest are interpolated given basic power formulas. |
| Testing #2 | The platforms used are not all what they appear to be. So for example, the best Cortex-A72 16nm data point would be the Kirin 950 in the Huawei Mate 8, but instead a dual A72 was used from the Amazon Fire TV, which has a 28nm MediaTek MT8173 running at 1.98 GHz. One could argue that A72 is new enough and only recently on 28nm that it isn’t fully optimized for the process yet and this is probably a low end version of that silicon. The Apple A9X numbers are actually taken from a 14nm A9 and the assumption was made that the dynamic power in a cold environment was similar to the A9X. The Skylake numbers were a mid-range Core i5-6200U in a Dell laptop, which could be prone to variable turbo modes or overheating, and that specific SKU is hardly the most power efficient model in Intel’s Skylake lineup. |
| Compilers | In order to ‘normalize’ the data, each of the actual data points was taken with SPEC2006 compiled on GCC 4.9 (or Clang for Apple). For SPEC we normally consider the peak numbers possible with the best compiler, and as pointed out by some analysts, Intel’s results on their compiler can get scores more than double that of GCC, which can put a negative bent on Intel’s numbers. |
| Simulations | Almost all of SMI’s numbers come from internal RTL simulation of their IP designs. With the 28nm proof-of-concept chip, we were told that the difference between simulation and physical was around 5-10% on performance and power, but some chip designers have pointed out that performance on a simulated processor can be anywhere from 33-50% inaccurate from the peak theoretical performance when you actually put it into silicon. |
| Optimizations | The data shown in this graph for the VISC processors is based on assumptions relating to process optimization. The way the design is to be sold means that licensees can work with the foundries to optimize the metal stack layers or other design characteristics to get better power or higher frequencies. I was told that this was put into the graph at an assumed value around 10%, and the data in the graph includes this. |

Typically any one of these points in most contexts would be grounds to be apprehensive about the results. The fact that there are nine salient points listed here (and I may even have missed one or two) means that the data should be thrown out entirely.
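To illustrate the concern behind the first point on scores, here is a small sketch of how a combined geometric mean can hide a lopsided result. The two score sets are hypothetical and chosen only to make the arithmetic obvious; they say nothing about how VISC or any other core actually performs.

```python
import math


def geometric_mean(values):
    """Geometric mean of positive numbers, computed via logarithms."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical INT/FP scores, invented for illustration only.
balanced = {"int": 20.0, "fp": 20.0}
lopsided = {"int": 10.0, "fp": 40.0}

balanced_mean = geometric_mean(balanced.values())
lopsided_mean = geometric_mean(lopsided.values())

print(f"balanced: {balanced_mean:.2f}")
print(f"lopsided: {lopsided_mean:.2f}")
```

Both sets collapse to the same combined figure even though one design is half as fast on integer work, which is why separate INT and FP results (and subtest scores) are the norm.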

Clarification on the Data from Soft Machines

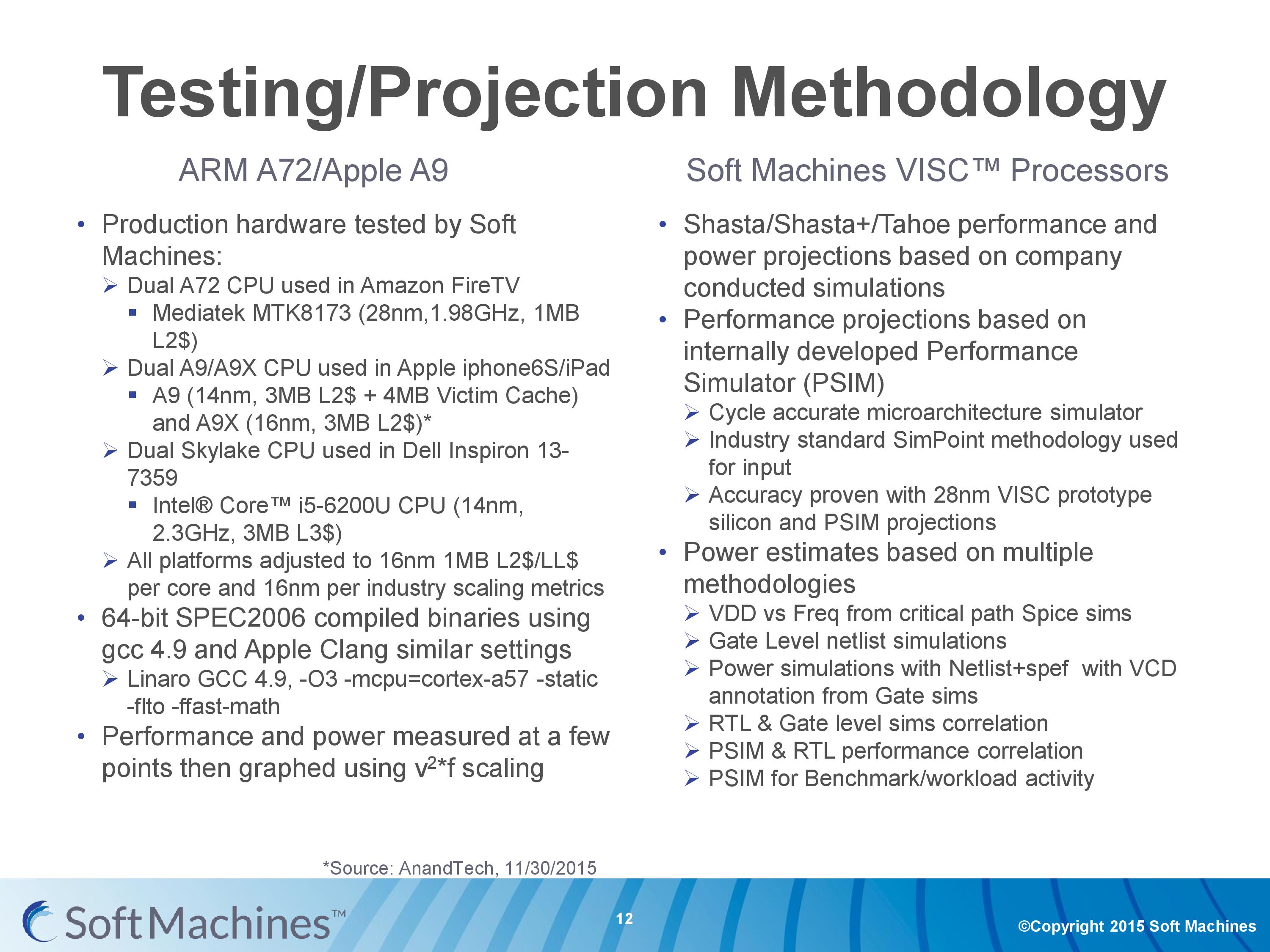

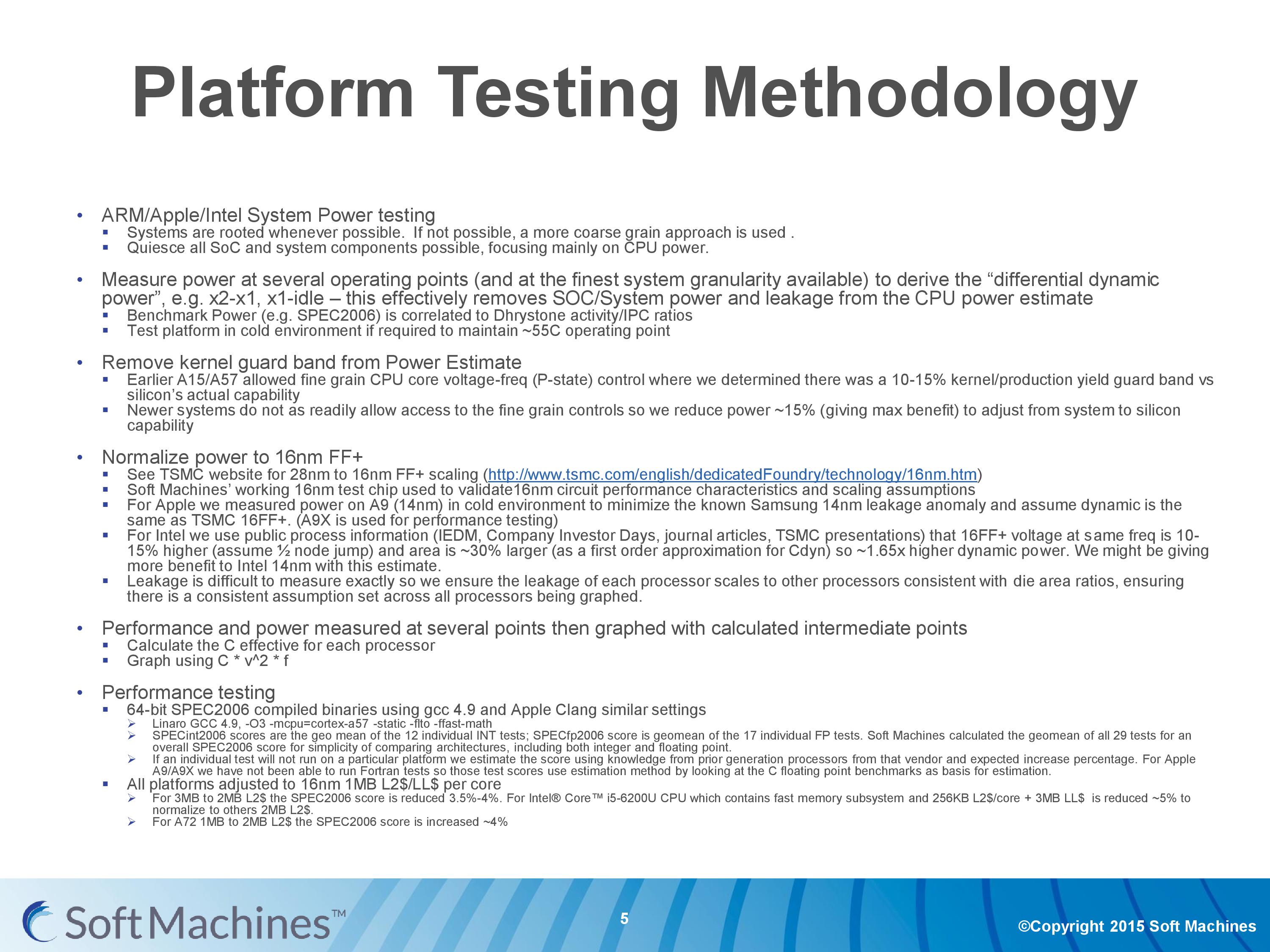

Because we were one of the last media outlets to speak with Soft Machines, and I had already seen some discussion around these points, I posed the issues back to them, as well as a few questions of my own. Because of the response that had been presented, we managed to get a lot of details around the simulation and assumptions aspects. So to start, here’s the testing methodology that everyone was provided with:

For clarity on the VISC processors, simulations were done to be both signal accurate and cycle accurate, and data taken from 16nm design configurations. Both power and results were taken from these.

For the power on the other parts, the power consumption was taken at the wall. To remove system power from the equation, the system was run in 2C vs 1C modes and 1C vs idle modes at various frequencies to find the dynamic power. Each platform was tested in a cold environment to ensure the maximum temperature did not go above 55C. Each of the power numbers is an estimate that has had the production yield ‘guard’ (i.e. protection overestimate) removed, which was about 15%, giving benefits to the non-VISC core results.
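A minimal sketch of the differencing idea described above, assuming the per-core dynamic power is simply the difference between two wall readings at the same frequency. The function name and the wattages are hypothetical, and the roughly 15% guard-band removal is not modeled because the source does not say how it was applied.

```python
# Illustrative only: wall-power readings below are invented.
def dynamic_core_power(wall_idle_w, wall_1c_w, wall_2c_w):
    """Estimate per-core dynamic power from wall measurements.

    Subtracting readings taken on the same system cancels the power drawn by
    everything that stays constant (display, memory, regulators and so on).
    Returns two estimates: first core (1C vs idle) and second core (2C vs 1C).
    """
    first_core = wall_1c_w - wall_idle_w
    second_core = wall_2c_w - wall_1c_w
    return first_core, second_core


first, second = dynamic_core_power(wall_idle_w=5.0, wall_1c_w=7.5, wall_2c_w=9.5)
print(f"1C vs idle: {first:.2f} W, 2C vs 1C: {second:.2f} W")
```

The two estimates will generally differ, since waking the first core also wakes shared logic such as caches and interconnect, which is one reason both comparisons are taken.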

For the power conversion to 16nm FinFET+ on TSMC:

- The A72 28nm TSMC used TSMC’s numbers for scaling.

- For the A9X numbers, the A9 numbers were taken in a cool environment to minimize Samsung 14nm leakage and the dynamic power for the A9X is assumed the same as the A9.

- For Intel, using public data it was assumed that 16FF+ voltage is 10-15% higher and area is 30% larger, giving ~1.65x power on 16FF+.

- Leakage scales with die area.
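The ~1.65x figure for Intel can be roughly reproduced with the standard dynamic power relation, where power is proportional to capacitance times voltage squared times frequency. The sketch below assumes that switched capacitance scales with area and that frequency is held constant; the source does not state that this is how SMI derived the number, so treat it as a plausibility check only.

```python
def scaled_power_factor(voltage_ratio, area_ratio):
    """Relative dynamic power after a process conversion at fixed frequency.

    Assumes P is proportional to C * V^2 * f, with capacitance C taken to be
    proportional to area (an assumption, not something the source states).
    """
    return area_ratio * voltage_ratio ** 2


# Voltage 10-15% higher and area 30% larger, as quoted for 14nm -> 16FF+.
low = scaled_power_factor(voltage_ratio=1.10, area_ratio=1.30)
high = scaled_power_factor(voltage_ratio=1.15, area_ratio=1.30)
print(f"power factor range: {low:.2f}x to {high:.2f}x")
```

Under these assumptions the range works out to roughly 1.57x to 1.72x, which brackets the ~1.65x quoted.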

For performance testing:

- Linaro GCC 4.9 with -O3 -mcpu=cortex-a57 -static -flto -ffast-math

- If any test failed, results were taken from previous generations and expected percentage increases. For example, FORTRAN on A9 failed, so estimates were taken from the floating point numbers in C.

- For the cache adjustments, the score for moving from 3MB to 2MB was reduced by 3.5-4%. Because Intel has L2+L3, this was reduced by a further 5%. For the A72, moving from 1MB L2 to 2MB L2 in total, the score was increased by 4%.
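As a rough sketch of what the cache adjustments above do to a score, the following applies the quoted percentages to a baseline of 100. It assumes the adjustments compound multiplicatively; the source does not say whether they were compounded or simply summed, and `adjust_score` is a hypothetical helper.

```python
def adjust_score(score, pct_changes):
    """Apply a sequence of percentage changes to a score, compounding each one.

    Compounding is an assumption; the adjustments may have been summed instead.
    """
    for pct in pct_changes:
        score *= 1.0 + pct / 100.0
    return score


baseline = 100.0  # arbitrary baseline, not a real SPEC2006 result

# Skylake: 3MB -> 2MB (3.5-4% reduction) plus a further 5% for L2+L3.
skylake_best = adjust_score(baseline, [-3.5, -5.0])
skylake_worst = adjust_score(baseline, [-4.0, -5.0])

# A72: 1MB L2 -> 2MB L2 in total, a 4% increase.
a72 = adjust_score(baseline, [4.0])

print(f"Skylake: {skylake_worst:.1f} to {skylake_best:.1f}, A72: {a72:.1f}")
```

Under these assumptions the cache normalization alone moves Skylake down by roughly 8-9% while moving the A72 up by 4%, before any process or compiler adjustment.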

For a full rundown, this was the slide provided to us:

As part of these assumptions, I did ask about the raw data collected and if that would ever be presented. I did mention that they really need to split up INT and FP results, and I was told that it may happen at a later date but not right now. What I was given though was the effect of the cache adjustment on Skylake.

The orange line and the blue line next to it represent the movement from a multi-cache hierarchy of L2+L3 to 1MB of L2 cache per core only. The blue line still has the assumption of moving from 14nm to 16FF+, and using the GCC compiler, but the orange line has that extra assumption.

Personally, these assumptions make me uneasy. Conversions like this are typically only done as back-of-the-envelope types of calculations during the early stages of design, because they are very rough and do not take into account things like silicon floor-plan optimization that would occur if you chose a smaller/larger cache arrangement, or changing from 8-way to 4-way associativity in the caches and so on. Typically we see companies in similar positions to SMI provide the raw or semi-modified data, using one assumption at most, with a split between FP and INT results - e.g. taking all the results as-is with GCC. The reason why it all comes into one graph is brevity and simplicity, which doesn't particularly endear it to any reader or investor who might decide to invest heavily in this project.

We pointed out a lot of concerns with this data to Soft Machines, including the list above of assumptions and how some of them simply do not make sense and should be restricted to those quick rough calculations, especially when presenting at a conference. They gave us the graph above showing the effect of cache changes on Skylake, but I have asked them in the future to display the data in a less complicated way, using standard industry metrics (such as INT or FP). Ideally the graphs are also kept to two or three data sets without requiring a 9-point interpretation scheme to understand what is happening - we typically get a dozen or so graphs from Huawei, ARM, AMD or Intel when they are describing their latest architecture or microarchitecture designs. This allows more understanding of what is happening under the hood and can be used to validate the results - as it stands, it is difficult to validate anything due to the assumptions and conversions made.

97 Comments


vladx - Sunday, February 14, 2016

If it works, Intel or ARM won't be abke to copy them because they've already patented the techniques used.

vladx - Sunday, February 14, 2016

*able to

valinor89 - Saturday, February 13, 2016

AMD, Samsung and Globalfoundries are chief investors so it is doubtful Intel or Nvidia will be able to acquire this company.

xthetenth - Friday, February 12, 2016

Why is that such a red flag? They show the optimal part of the curve for A72, and they show the suboptimal tail for all of them, although they extend it farther for the A72 to show what it takes to get it up to the same performance level (basically it's non-viable and that they're in a different class if accurate), and they say as much. There's a huge list of objections the article raises and that isn't on it for pretty good reason. It's just not nearly as big a deal as the rest.

Andrei Frumusanu - Friday, February 12, 2016

It's incorrect to simply extend a curve of an existing design beyond its design operating range. It's perfectly possible to design the physical implementation to be optimized at very high frequencies - in such a case the curve would be less steep but consume higher power at the low frequencies. Extending the curve of a low-power design is relatively misleading in this case.

extide - Friday, February 12, 2016

Yeah, and I don't think they should adjust the Intel cores at all. Intel chips come as Intel makes them, that's it. You will never see the skylake arch on TSMC or GF foundry processes. You should take the results from the Intel chip as they are because that is what you will be competing against, not some made up adjusted result that will never exist in the wild.

As for adjusting the OTHER chips, well, ok I see what they are going at here, but I still think they took it a bit too far, like adjusting for more or less cache. Although you can see those other chips on various processes, from GF and TSMC, so the process correction isn't really as big of a red flag to me.

name99 - Saturday, February 13, 2016

The curve is not illegitimate because you're missing the point. The goal of the curve is not to show how great their CPU is, it is to show how great their TECHNOLOGY is (ie their microarchitecture). This is best done by comparisons that hold all else equal (ie same process, same compiler, same caches, etc; only different microarchitecture).

If you're going to criticize the presentation, criticize it on grounds that actually make sense:

- their "performance" score is garbage because they claim to be in the business of speeding up SINGLE-THREADED code, but then mix in a number of benchmarks that are very naturally parallelized. This is much like comparing an ARMv8 CPU with NEON switched off to an x86 using AVX-512, to test matrix multiplication speed --- it's simply NOT telling you anything about single-threaded performance.

- the robustness of their normalizations is dodgy and they provide little evidence that the ways in which they have normalized are legit.

gamerk2 - Friday, February 12, 2016

This is where CPUs are eventually going to go, since it's really the only way to get maximized CPU performance without adding a lot of power-hungry components onto the die.

That being said, the likely outcome is someone (Intel most likely, possibly NVIDIA) acquires Soft Machines and integrates their IP onto their own chips.

vladx - Sunday, February 14, 2016

Doubt it, the only chance for NVidia would be to license it and Intel would most likely be blocked from buying such a company.

Avendit - Friday, February 12, 2016

How does this all compare to the Transmeta/Crusoe parts? That had a different purpose but did have the translation abstraction layer approach, but didn't seem to go anywhere unfortunately. Are there any parallels or learnings to be had?