The AMD Radeon R9 380X Review, Feat. ASUS STRIX

by Ryan Smith on November 23, 2015 8:30 AM EST

Posted in: GPUs, AMD, Radeon, Asus, Radeon 300

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

Unfortunately we don’t have any tools that can read the GPU voltage on the ASUS card, so we’ll jump right into average clockspeeds.

Radeon R9 380X Average Clockspeeds

| Game | ASUS R9 380X (OC) | ASUS R9 380X (Ref) |
| --- | --- | --- |
| Max Boost Clock | 1030MHz | 970MHz |
| Battlefield 4 | 1030MHz | 970MHz |
| Crysis 3 | 1030MHz | 970MHz |
| Mordor | 1030MHz | 970MHz |
| Dragon Age | 1030MHz | 970MHz |
| Talos Principle | 1030MHz | 970MHz |
| Total War: Attila | 1030MHz | 970MHz |
| GRID Autosport | 1030MHz | 970MHz |
| Grand Theft Auto V | 1030MHz | 970MHz |

The ASUS R9 380X has no problem holding its full boost clockspeed in games, both at its stock speed of 1030MHz and when downclocked to 970MHz.

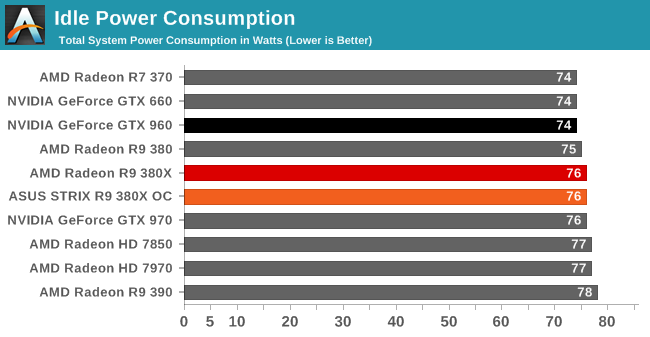

Starting with idle power consumption, the ASUS card comes in right where we’d expect it. 75-76W is typical for a Tonga card on our GPU testbed.

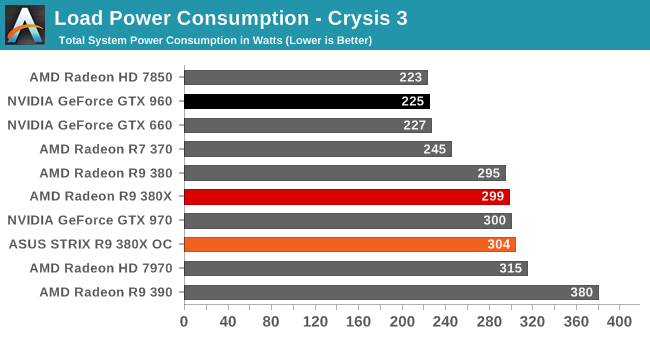

Moving on to power consumption under Crysis 3, like so many other aspects of R9 380X, its performance here is very close to the original R9 380. Power consumption is up slightly thanks to the additional CUs and the additional CPU load from the higher framerate, with the reference clocked R9 380X coming in at 299W, while ASUS’s factory overclock pushes that to 304W.

The problem for AMD is that this is smack-dab in GTX 970 territory. Meanwhile the GTX 960, though slightly slower, is drawing 74W less at the wall. R9 380 just wasn’t very competitive on power consumption compared to Maxwell, and R9 380X doesn’t do anything to change this. AMD’s power draw under games is essentially one class worse than NVIDIA’s – the R9 380X draws power like a GTX 970, but delivers performance only slightly ahead of a GTX 960.
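The arithmetic behind these comparisons can be sketched in a few lines of Python. This is only an illustration built from the figures quoted in this section; the variable names are made up for the example, and the GTX 960 figure assumes the 74W gap is measured against the reference-clocked R9 380X, which the text does not state explicitly.

```python
# Wall-power figures quoted in the text (total system draw under Crysis 3, watts).
R9_380X_REF_W = 299
R9_380X_OC_W = 304
GTX_960_GAP_W = 74  # the GTX 960 system draws this much less at the wall

# Boost clocks from the clockspeed table (MHz).
REF_CLOCK_MHZ = 970
OC_CLOCK_MHZ = 1030


def percent_increase(base, new):
    """Relative increase of `new` over `base`, in percent."""
    return (new - base) / base * 100.0


# How much the factory overclock raises clocks versus wall power.
clock_uplift = percent_increase(REF_CLOCK_MHZ, OC_CLOCK_MHZ)
power_uplift = percent_increase(R9_380X_REF_W, R9_380X_OC_W)

# ASSUMPTION: the 74W gap is relative to the reference-clocked R9 380X.
gtx_960_w = R9_380X_REF_W - GTX_960_GAP_W

print(f"Clock uplift from factory OC: {clock_uplift:.1f}%")
print(f"Wall power uplift from factory OC: {power_uplift:.1f}%")
print(f"Implied GTX 960 system draw: {gtx_960_w}W")
```

Under those assumptions the factory overclock is roughly a 6.2% clock increase for about a 1.7% increase in total system power, and the implied GTX 960 system draw is 225W. Keep in mind that these are wall measurements of the whole testbed, so the percentages understate the change in the card's own power draw.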

The one bit of good news here for AMD is that while the power consumption of the R9 380X isn’t great, it’s still better balanced than the R9 390. AMD opted to push the envelope on the R9 390 to maintain price/performance parity with the GTX 970, so while the R9 380X is a fair bit slower than the R9 390, it saves a lot of power in the process. And for that matter the R9 380X shows a slight edge over the 7970, delivering similar gaming performance for around 16W less at the wall.

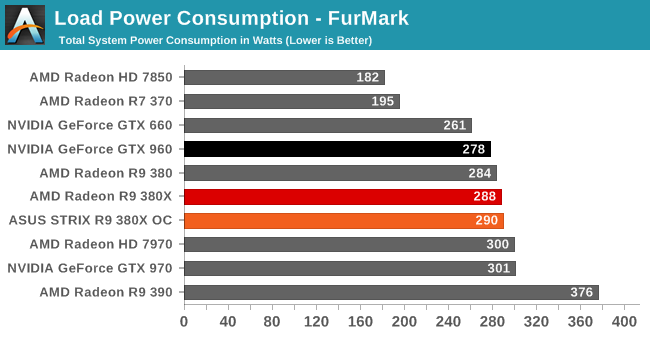

Moving over to FurMark our results get compressed by quite a bit (we’re using a GTX 960 with a fairly high power limit), but even then the R9 380X’s power consumption isn’t in AMD’s favor. At best we can say it’s between the GTX 960 and GTX 970, with the former offering performance not too far off for less power.

Otherwise as was the case with Crysis 3, the R9 380X holds a slight edge over the 7970 on power consumption. This despite the fact that the R9 380X uses AMD’s newer throttling technology, and consequently it gets closer to its true board limit than the 7970 ever did.

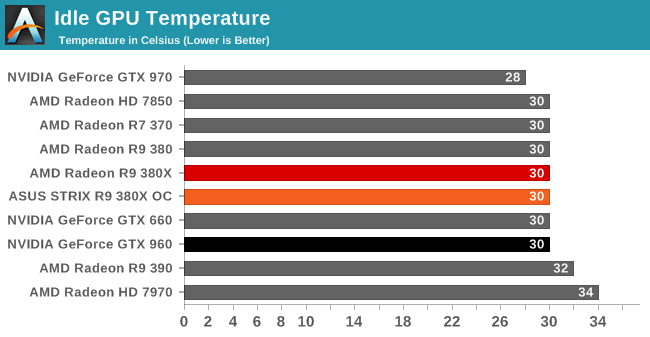

With idle temperatures ASUS’s 0dB Fan technology doesn’t hamper the R9 380X at all. Even without any direct fan airflow the STRIX R9 380X holds at 30C.

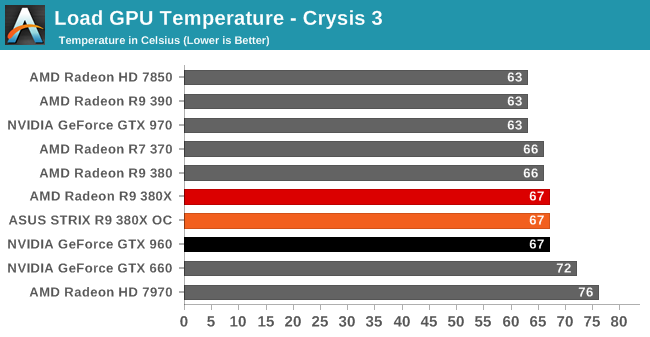

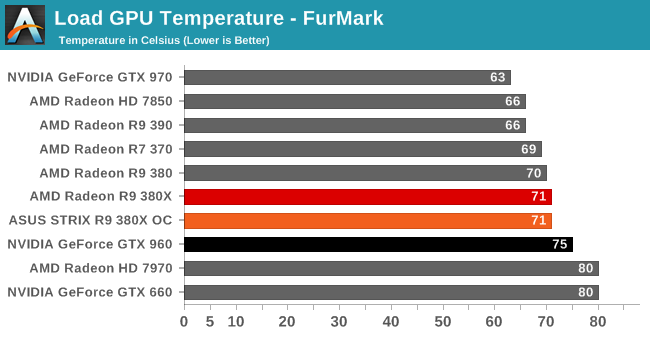

Load temperatures also look good. ASUS’s sweet spot seems to be around 70C – right where we like to see it for an open air cooled card – with the R9 380X reaching equilibrium at 67C for Crysis 3 and 71C for FurMark.

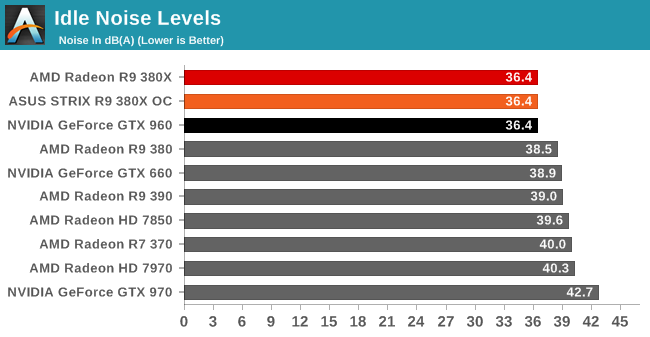

Finally with idle noise, the zero fan speed idle technology on the STRIX lineup means that the STRIX R9 380X gets top marks here. At 36.4dB the only noise coming from our system is the closed loop liquid cooler for the CPU. The video card is completely silent.

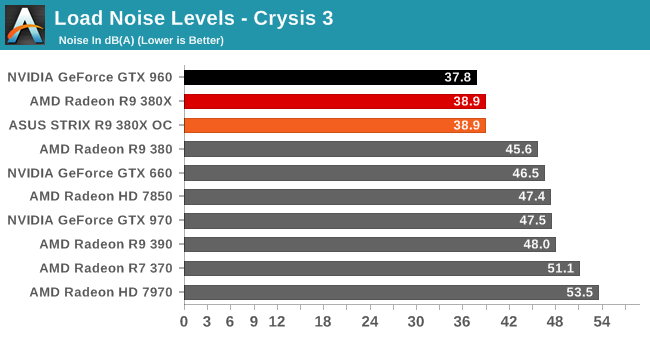

Shifting over to load noise levels then, the STRIX R9 380X continues to impress. With Crysis 3 the card tops out at 38.9dB – less than 3dB off of our noise floor – and that goes for both when the card is operating at AMD’s reference clocks and ASUS’s factory overclock. At this point the STRIX R9 380X is next-to-silent; it would be hard to do too much better without using an entirely passive cooling setup. So for ASUS to dissipate what we estimate to be 175W or so of heat while making this little noise is nothing short of impressive.

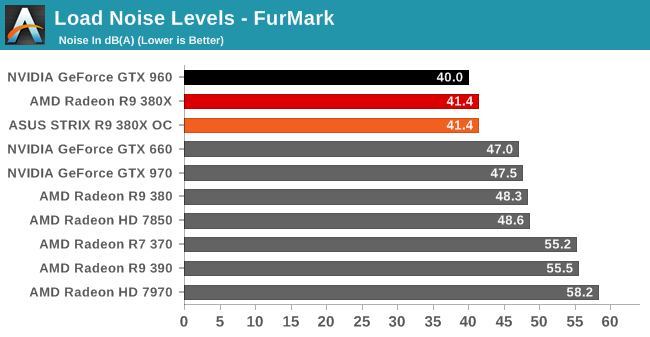

Meanwhile with FurMark the ASUS card needs to work a bit harder, but it still offers very good results. Even with the card maxed out we’re looking at just 41.4dB. The STRIX R9 380X isn’t silent, but it gets surprisingly close for such a powerful card.
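Because decibels are logarithmic, the gap between a load reading and the noise floor is not the same thing as the card's own noise level. A rough sketch of the usual power-domain subtraction follows. It is an illustration only and not part of the review's methodology: it assumes the card and the rest of the system are uncorrelated sources whose sound powers simply add, and that the 36.4dB floor (the CPU's liquid cooler) stays constant under load. The function name is hypothetical.

```python
import math

# Sound levels quoted in the text (dB, measured for the whole system).
NOISE_FLOOR_DB = 36.4  # idle, card fans stopped
CRYSIS3_DB = 38.9
FURMARK_DB = 41.4


def subtract_floor(total_db, floor_db):
    """Estimate the level of the added source alone.

    ASSUMPTION: the sources are uncorrelated, so their sound powers add
    and the floor can be subtracted in the linear power domain.
    """
    total_power = 10 ** (total_db / 10)
    floor_power = 10 ** (floor_db / 10)
    return 10 * math.log10(total_power - floor_power)


card_crysis3_db = subtract_floor(CRYSIS3_DB, NOISE_FLOOR_DB)
card_furmark_db = subtract_floor(FURMARK_DB, NOISE_FLOOR_DB)

print(f"Gap to floor under Crysis 3: {CRYSIS3_DB - NOISE_FLOOR_DB:.1f}dB")
print(f"Estimated card-only level, Crysis 3: {card_crysis3_db:.1f}dB")
print(f"Estimated card-only level, FurMark: {card_furmark_db:.1f}dB")
```

Under these assumptions the card by itself would come out at roughly 35.3dB in Crysis 3 and 39.7dB in FurMark, which means that in Crysis 3 it would actually be a little quieter than the rest of the testbed.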

101 Comments


FriendlyUser - Monday, November 23, 2015 - link

This is not a bad product. It does have all the nice Tonga features (especially FreeSync) and good tessellation performance, whatever this is worth. But the price is a little bit higher than what would make a great deal. At $190, for example, this card would be the best card in middle territory, in my opinion. We'll have to see how it plays, but I suspect this card will probably find its place in a few months and after a price drop.

Samus - Monday, November 23, 2015 - link

Yeah, it's like every AMD GPU...overpriced for what it is. They need to drop the prices across the entire line about 15% just to become competitive. The OC versions of the 380X are selling for dollars less than some GTX970's, which use less power, are more efficient, are around 30% faster, and you could argue have better drivers and compatibility.

SunnyNW - Monday, November 23, 2015 - link

To my understanding, the most significant reason for the decreased power consumption of Maxwell 2 cards (the 950-60-70 etc.) was due to the lack of certain hardware in the chips themselves specifically pertaining to double precision. Nvidia seems to recommend Titan X for single precision but Titan Z for DP workloads. I bring this up because so many criticize AMD for being "inefficient" in terms of power consumption but if AMD did the same thing would they not see similar results? Or am I simply wrong in my assumption? I do believe AMD may not be able to do this currently due to the way their hardware and architecture is configured for GCN but I may be wrong about that as well, since I believe their 32 bit and 64 bit "blocks" are "coupled" together. Obviously I am not a chip designer or any sort of expert in this area so please forgive my lack of total knowledge and therefore the reason for me asking in hopes of someone with greater knowledge on the subject educating myself and the many others interested.

CrazyElf - Monday, November 23, 2015 - link

It's more complex than that (AMD has used high density libraries and has very aggressively clocked its GPUs), but yes reducing DP performance could improve performance per watt. I will note however that was done on the Fury X; it's just that it was bottlenecked elsewhere.

Samus - Tuesday, November 24, 2015 - link

At the end of the day, is AMD making GPU's for gaming or GPU's for floating point\double precision professional applications?

The answer is both. The problem is, they have multiple mainstream architectures with multiple GPU designs\capabilities in each. Fury is the only card that is truly built for gaming, but I don't see any sub-$400 Fury cards, so it's mostly irrelevant since the vast majority (90%) of GPU sales are in the $100-$300 range. Every pre-Fury GPU incarnation focused too much on professional applications than they should have.

NVidia has one mainstream architecture with three distinctly different GPU dies. The most enabled design focuses on FP64\Double Precision, while the others eliminate the FP64 die-space for more practical, mainstream applications.

BurntMyBacon - Tuesday, November 24, 2015 - link

@Samus: "At the end of the day, is AMD making GPU's for gaming or GPU's for floating point\double precision professional applications?"

Both

@Samus: "The answer is both."

$#1+

@Samus: " Fury is the only card that is truly built for gaming, but I don't see any sub-$400 Fury cards, so it's mostly irrelevant since the vast majority (90%) of GPU sales are in the $100-$300 range. Every pre-Fury GPU incarnation focused too much on professional applications than they should have."

They tried the gaming only route with the 6xxx series. They went back to compute oriented in the 7xxx series. Which of these had more success for them?

@Samus: "NVidia has one mainstream architecture with three distinctly different GPU dies. The most enabled design focuses on FP64\Double Precision, while the others eliminate the FP64 die-space for more practical, mainstream applications."

This would make a lot of sense save for one major issue. AMD wants the compute capability in their graphics cards to support HSA. They need most of the market to be HSA compatible to incentivize developers to make applications that use it.

CiccioB - Tuesday, November 24, 2015 - link

HSA and DP64 capacity have nothing in common.

People constantly confuse GPGPU capability with DP64 support.

nvidia GPUs have been perfectly GPGPU capable and in fact they are even better than AMD ones for consumer calculations (FP32).

I would like you to name a single GPGPU application that you can use at home that makes use of 64bit math.

Rexolaboy - Sunday, January 3, 2016 - link

You asked a question that's been answered in the post you reply to. AMD wants to influence the market to support fp64 compute because it's ultimately more capable. No consumer programs using fp64 compute is exactly why AMD is trying so hard to release cards capable of it, to influence the market.

FriendlyUser - Tuesday, November 24, 2015 - link

It's not just DP, it's also a lot of bits that go towards enabling HSA. Stuff for memory mapping, async compute etc. AMD is not just building a gaming GPU, they want something that plays well in compute contexts. Nvidia is only being competitive thanks to the CUDA dominance they have built and their aggressive driver tuning for pro applications.

BurntMyBacon - Tuesday, November 24, 2015 - link

@FriendlyUser: "It's not just DP, it's also a lot of bits that go towards enabling HSA. Stuff for memory mapping, async compute etc. AMD is not just building a gaming GPU, they want something that plays well in compute contexts."

This. AMD has a vision where GPU's are far more important to compute workloads than they are now. Their end goal is still fusion. They want the graphics functions to be integrated into the CPU so completely that you can't draw a circle around it and you access it with CPU commands. When this happens, they believe that they'll be able to leverage the superior graphics on their APUs to close the performance gap with Intel's CPU compute capabilities. If Intel releases better GPU compute, they can still lean on discrete cards.

Their problem is that there isn't a lot of buy-in to HSA. In general, there isn't a lot of buy-in to GPU compute on the desktop. Sure there are a few standouts and more than a few professional applications, but nothing making the average non-gaming user start wishing for a discrete graphics card. Still, they have to include the HSA (including DP compute) capabilities in their graphics cards if they ever expect it to take off.

HSA in and of itself is a great concept and eventually I expect it will gain favor and come to market (perhaps by another name). However, it may be ARM chip manufacturers and phones/tablets that gain the most benefit from it. There are already some ARM manufacturers who have announced plans to build chips that are HSA compatible. If HSA does get market penetration in phones/tablets first as it looks like may happen, I have to wonder where all the innovative PC programmers went that they couldn't think of a good use for it with several years head start.