Stepping Into the Display: Experiencing HTC Vive

by Joshua Ho on September 14, 2015 8:00 AM EST

Almost every day, we hear about revolutions in technology. A smartphone is often said to have a revolutionary camera, display, or design. This kind of marketing hyperbole is present throughout the tech industry, to the point where parodies of it have appeared on TV.

We often hear the same language when discussing VR as well. For the most part, I haven’t seen anything to show that VR is worthy of such hyperbole, but I've only experienced mobile VR while covering mobile devices. Almost every mobile VR headset like Gear VR or Google Cardboard just doesn’t work with glasses very well, if at all. In the case of Gear VR, pretty much any time I’ve tried it I found it to be a cool experience, but not really anything that would be life-changing. I just didn’t see the value of a video in which I have to be the cameraman. Probably the best example of this was the OnePlus 2 launch app, in which I would miss bits of information in the video just because I wasn’t looking at the right place when something appeared and disappeared.

A week ago, I was discussing my thoughts on VR with HTC when they realized that I had never tried their VR headset, the HTC Vive. A few days later, I stepped into one of HTC’s offices in San Francisco, expecting the experience to be similar in feeling to what I had seen before.

The room I was in was relatively simple. A desktop PC ran the whole system. It clearly had a good amount of computing power, judging by the 10-12" long video card (single GPU) and what looked like an aftermarket heatsink and fan on the CPU, though I wasn’t able to get any details about the specific components. Other than this, two Lighthouse tracking devices were mounted on top of some shelves in the corners of the VR space.

Sitting in the middle of the VR space was an HTC Vive. There were cables running out of the headset, but the two controllers were completely wireless. The display is said to be 1080x1200 per eye, refreshing at 90 Hz with a field of view of 110 degrees or greater. The headset itself has one HDMI port and two USB ports for connecting it to the PC. The motion tracking is also supposed to have sub-millimeter precision, with angular precision to a tenth of a degree. With the two tracking stations, the maximum area for interactivity is a 15 foot square.

Putting the headset on was simple. There were some adjustable straps that hold the display component to the eyes, and there was more than enough space in the headset to allow me to wear my glasses and see the sharpness of the display. Right away, I noticed that it was important to make sure the straps were tight. If I pushed down on the display, it would lose clarity until I pushed the headset up again to keep it in the right position. I also noticed that the subpixels of the display were subtly visible when looking at a pure white background, which suggests that there is room to improve in the resolution department.
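To put the quoted figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers stated above (1080x1200 per eye, 90 Hz, 110 degrees). The function names are illustrative, and the pixels-per-degree figure assumes that the 110 degree field of view is horizontal and that pixels are spread evenly across it, which real lenses do not do, so treat it as an approximation rather than a measurement.

```python
# Back-of-the-envelope numbers from the quoted Vive specs.
# Assumption: the 110-degree field of view is horizontal and pixels are
# spread evenly across it (real headset lenses distort this).

WIDTH_PX = 1080    # horizontal pixels per eye (quoted)
HEIGHT_PX = 1200   # vertical pixels per eye (quoted)
EYES = 2
REFRESH_HZ = 90    # quoted refresh rate
FOV_DEG = 110      # quoted field of view ("110 degrees or greater")


def pixels_per_second() -> int:
    """Total pixels the PC must deliver each second for both eyes."""
    return WIDTH_PX * HEIGHT_PX * EYES * REFRESH_HZ


def frame_budget_ms() -> float:
    """Time available to render one frame at the quoted refresh rate."""
    return 1000 / REFRESH_HZ


def pixels_per_degree() -> float:
    """Approximate horizontal angular resolution per eye."""
    return WIDTH_PX / FOV_DEG


if __name__ == "__main__":
    print(f"{pixels_per_second():,} pixels per second")
    print(f"{frame_budget_ms():.2f} ms per frame")
    print(f"{pixels_per_degree():.1f} pixels per degree")
```

Under these assumptions the headset needs roughly 233 million pixels per second, leaves about 11 ms to render each frame, and spreads only around 10 pixels across each degree of view, which is consistent with subpixels being visible on a white background.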

At this point, the person managing the demo held out the controllers. One of them had a color palette on the touchpad, but the color of the controller was otherwise grey in this virtual world. I reached out and grabbed both controllers on the first attempt. I couldn’t see my hands, but the controllers were moving with my arms. I could walk around in this area. If I tried, I could inflate a balloon and hit it with my hands. The balloon bounced in reaction, as a balloon should.

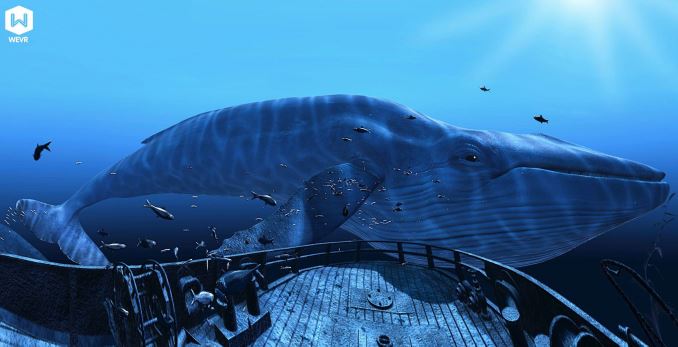

The demo loop started after this small tutorial. These demos were the same ones that Ian had seen before, but I was now seeing them for myself. I was standing on a wrecked ship on the bottom of the ocean. Fish were swimming around me, and if I walked around the ship or flailed my arms, the fish and the water around me would react. An enormous whale swam by and then everything went black.

The next demo was a simulation of a job. There were various ingredients in the kitchen that surrounded me, ranging from eggs to tomatoes. A robotic voice read out a list of ingredients, and I turned to look at where the voice came from. The robot had a display, with a checklist of the previously described ingredients. I instinctively looked around and reached towards some tomatoes and picked them up to drop them in the pot, repeating this until a soup can appeared. I picked it up and dropped it in the pan. The next task was to make a sandwich. I placed an egg and other ingredients between two slices of bread, which made the sandwich.

The final demo was familiar. A robotic voice asked me to place each controller in a receptacle of some sort to be charged, then asked me to open various drawers before closing them. A robot then stumbled unpredictably into the room, and because it was much bigger than I was, I stepped away from it. I was told to press some buttons and pull some levers to open it for repair, and I cautiously walked towards it to do so. Some directions to fix the robot were spoken so quickly that it was impossible to keep up. Eventually, the entire robot fell apart onto the floor, and the robot was removed. The walls and floor of the room began to disappear until a single platform remained, and I stepped away to avoid falling into the abyss. Eventually, GLaDOS appeared to criticize the work done, and the room was completely sealed.

That was the end of my experience. I took off the headset and headphones and set the controllers down. In some ways, I felt a bit groggy, as if I had just woken up from a dream. I was reflecting upon what had happened when I tried to look at my phone closely. I immediately got a sense of vertigo and had to sit down to regain my bearings. The room wasn't spinning, but I was definitely disoriented.

In some ways, the fact that I got vertigo is a bad sign. When I thought about it, I realized the problem that I had was that HTC Vive isn’t a perfect simulation of human vision. In the underwater boat demo, my eyes were always focusing on the display, which appeared to be distant. However, fish swam by extremely close to my eyes staying perfectly in focus with no double vision effects. When I tried to do something similar after the demo, I was disoriented because the real world didn’t work the same as Vive.

Tiltbrush on HTC Vive with Glen Keane

In my mind, Vive had already become my reality. HTC Vive, even in this state, was so incredibly convincing that it had become my reality for half an hour. I was fully aware that this wasn’t real and that I could take off the headset at any time, but at the same time it was so thoroughly convincing that at a subconscious level I was reacting to what I saw as if it was real. In that sense, HTC Vive is almost dream-like. It feels real when you’re interacting within the world that is contained within the headset, but when you take it off you realize what was strange about it. Unlike a dream, you can go back just by putting the headset on again.

In a lot of ways, HTC Vive is hard to describe because of its rarity. I’ve always been around personal computers, and while the modern smartphone was a great innovation it’s always been a connected mobile computer to me. There are other VR headsets out there to be sure, and these headsets were all neat to use, but HTC Vive is life-changing. It is a revolution.

27 Comments

Shadowmaster625 - Monday, September 14, 2015 - link

That oscilloscope smile is beyond creepy.

user3311 - Monday, September 14, 2015 - link

"In the underwater boat demo, my eyes were always focusing on the display, which appeared to be distant. However, fish swam by extremely close to my eyes staying perfectly in focus with no double vision effects. When I tried to do something similar after the demo, I was disoriented because the real world didn’t work the same as Vive."

Great article, I just wanted to clarify on that one thing. It's actually the accommodation cue that is missing that you noticed. Double vision doesn't really have to do with it though, the vergence/convergence works exactly as it would in real life on the Vive and Rift. Accommodation, which is missing, is the blur when you aren't directly focused on an object that is not there yet, basically depth of field (what I think you are describing).

So if you focus on something near with even only one eye, things behind it blur and vice-versa. (It's a weak visual cue anyway, though.) The Vive and Rift can emulate all of the other visual cues, I think there are 7 or 8 of them in total? It may eventually benefit from variable focus lenses also, I had read, but I don't know what that would add exactly.

Happy to hear you enjoyed it btw. I tried it on the HTC Vive tour and it was amazing. Very dream-like as you describe.

user3311 - Monday, September 14, 2015 - link

Oops, typos and grammar errors in parts of my post but I couldn't edit.

user3311 - Monday, September 14, 2015 - link

Sorry, I forgot to mention if the fish got really close to your face like inches away it would affect vergence and give you double vision due to the accommodation/vergence conflict. But the overall scene shouldn't be affected by double vision.

scbundy - Monday, September 14, 2015 - link

I know of some VR tech coming down the pipe (can't recall by exactly who), that has eye tracking built in, and then can simulate depth of focus blur in software cause it knows exactly what you're looking at. Hopefully we'll see this soon.

Friendly0Fire - Monday, September 14, 2015 - link

The most promising technology for this is light field displays, which were demo'd a few times before already. They can reconstruct a good approximation of the full light field (position and direction) instead of merely two flat planes, so you get accommodation naturally.

CryingCyclops - Monday, September 14, 2015 - link

I think you might be thinking of the Fove. http://www.getfove.com/

crim3 - Wednesday, September 16, 2015 - link

The Fove tracks your sight to blur the image accordingly, but there is no real eye-lens accommodation, unlike with the light field displays referred to by Friendly0Fire.

bji - Monday, September 14, 2015 - link

I've been following VR development closely for some time now and I think I've read just about every review that's been posted for these devices. To be frank, this review was extremely light on detail and interest compared to so many other reviews that go into much greater detail about the experience of the Vive and its features. I encourage anyone interested in this tech to search for more thorough reviews if you're truly interested.

That being said, the one unique aspect to this review that I found very interesting was the description at the end about the perceptual shift that you underwent when taking the headset off and your description of the experience as seeming "dream-like" on reflection afterwards. That is an insight that I had not read before. I have not used the Vive myself but I have wondered about the lack of focal blurring/double-imaging as you described and couldn't understand why more wasn't said about it in other reviews. Is it really unnoticeable? was my continual question. But I think you've really provided a key insight here: it's not unnoticeable, but you can adapt to it pretty quickly. The result being that there is a perceptual shift when going into and out of this VR experience that can be disconcerting. Very interesting.

user3311 - Monday, September 14, 2015 - link

Unless the fish got really really close to his face he should still have seen things exactly as they were in real life throughout the entire scene. But due to the lack of accommodation it's a little hard to focus on things right in front of your face, it's minor. So it's accommodation he is describing, but it only has an impact on vergence when things are really close like that. I couldn't tell you exactly why though, something to do with accommodation/vergence conflict. There are papers on it.

Lack of accommodation is overall extremely subtle. Some argue that in VR it can be better than real life vision for the overall scene because nothing blurs in the foreground or background when you focus on something.

I think if it were added with fast enough eye tracking and some sort of layered or lightfield type display it would add a subtle immersive factor.