Update on GPU Optimizations in Galaxy S 4

by Anand Lal Shimpi & Brian Klug on July 31, 2013 10:11 AM EST

Posted in: Smartphones, Samsung, Android, Mobile, Benchmarks

Yesterday we posted our analysis of the Exynos 5 Octa's behavior in certain benchmarks on international versions of the Galaxy S 4, behavior first discovered by Beyond3D user @Andreif7. Samsung addressed the issue on their blog earlier today:

Under ordinary conditions, the GALAXY S4 has been designed to allow a maximum GPU frequency of 533MHz. However, the maximum GPU frequency is lowered to 480MHz for certain gaming apps that may cause an overload, when they are used for a prolonged period of time in full-screen mode. Meanwhile, a maximum GPU frequency of 533MHz is applicable for running apps that are usually used in full-screen mode, such as the S Browser, Gallery, Camera, Video Player, and certain benchmarking apps, which also demand substantial performance.

The maximum GPU frequencies for the GALAXY S4 have been varied to provide optimal user experience for our customers, and were not intended to improve certain benchmark results.

Samsung Electronics remains committed to providing our customers with the best possible user experience.

The blog post seems to confirm our finding that the 533MHz GPU frequency is available for certain benchmarks ("a maximum GPU frequency of 533MHz is applicable for running apps that are usually used in full-screen mode, such as the S Browser, Gallery, Camera, Video Player, and certain benchmarking apps"). The full screen statement doesn't make a ton of sense (both GLBenchmark 2.5.1 and 2.7.0 are full screen apps, but with different GPU behavior). Samsung claims, however, that a number of its first party apps (S Browser, Gallery, Camera and Video Player) can also run the GPU at up to 532MHz, which actually explains something else we saw while digging around.

Looking at resources.arsc inside TwDVFSApp.apk we find the following reference to pre-loaded Samsung apps:

This is what we originally assumed was happening with GLBenchmark 2.5.1: that it was broadcasting a boost intent to Samsung. When we asked, however, Kishonti told us that wasn't the case.

As we mentioned in our original piece, there's a flag that's set whenever this boost mode is activated: /sys/class/thermal/thermal_zone0/boost_mode. None of the first party apps get that flag set, only the specific benchmarks we pointed out in the original article. Of those first party apps, S Browser, Gallery and Video Player all top out at a GPU frequency of 266MHz (which makes sense, as none of the apps are particularly GPU intensive). I tried running WebGL content in S Browser to confirm (I ran Aquarium with 500 fish), as well as edited some photos in Gallery. In both cases 266MHz was the max observed GPU frequency.

| Max Observed GPU Frequency | S Browser | Gallery | Video Player | Camera | Modern Combat 4 | AnTuTu |
|---|---|---|---|---|---|---|
| Samsung GT-I9500 | 266MHz | 266MHz | 266MHz | 532MHz | 480MHz | 532MHz |
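For readers who want to check the boost flag themselves, the following is a minimal sketch of how the sysfs node mentioned above could be read. The path is the one quoted in the article; the assumption that the node holds a single integer, with nonzero meaning boost mode is active, is ours and is not documented anywhere we know of. The function names are hypothetical.

```python
from pathlib import Path

# Path quoted in the article. It is specific to this Samsung kernel and is
# unlikely to exist on other devices.
BOOST_MODE_PATH = "/sys/class/thermal/thermal_zone0/boost_mode"


def read_boost_mode(path=BOOST_MODE_PATH):
    """Return the flag as an int, or None if it cannot be read or parsed.

    Assumption: the node contains a single integer. The exact format is
    not documented in the article.
    """
    try:
        text = Path(path).read_text().strip()
    except OSError:
        # Node is missing or not readable (for example, no root access).
        return None
    try:
        return int(text)
    except ValueError:
        return None


def boost_active(path=BOOST_MODE_PATH):
    """True only when the flag is readable and nonzero (assumed meaning)."""
    value = read_boost_mode(path)
    return value is not None and value != 0
```

Polling `boost_active()` while launching different apps would be one way to reproduce the observation that only specific benchmarks set the flag.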

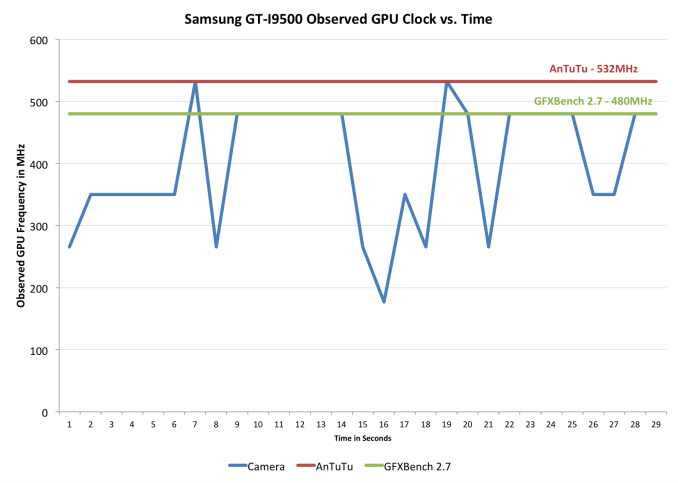

The camera app, on the other hand, is a unique example. Here we see short blips up to 532MHz if you play around with the live filters aggressively (I just quickly tapped between enabling all of the filters; the rugged/oil pastel/fish eye filters seemed to be the most likely to trigger a 532MHz excursion). I never saw 532MHz for more than a second though. The chart below plots GPU frequency vs. sampling time to illustrate what I saw there:

What appears to be happening here is that the boost routine grants access to raised thermal limits, which makes it possible to sustain the 532MHz GPU frequency for the duration of certain benchmarks. Since the camera app doesn't get the boost mode flag, it doesn't seem to sustain 532MHz, only 480MHz.
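The difference between a brief excursion and a sustained clock can be made concrete with a small sketch. It takes a list of (timestamp, frequency) samples and reports the maximum observed frequency and the longest continuous stretch at a given frequency. The trace below is made up for illustration and is not our measured data; the function names and the sample-and-hold assumption are ours.

```python
def max_frequency(samples):
    """Highest frequency seen in a list of (time_s, freq_mhz) samples."""
    return max(freq for _, freq in samples)


def longest_run_seconds(samples, target_mhz):
    """Longest continuous time, in seconds, spent at target_mhz.

    Assumptions: samples are sorted by time, and each sample holds until
    the next one. The final sample therefore contributes zero duration.
    """
    longest = 0.0
    run_start = None
    for t, freq in samples:
        if freq == target_mhz:
            if run_start is None:
                run_start = t  # a new run begins here
        elif run_start is not None:
            longest = max(longest, t - run_start)
            run_start = None
    if run_start is not None:
        # The trace ended while still at the target frequency.
        longest = max(longest, samples[-1][0] - run_start)
    return longest


# Hypothetical trace sampled every 0.5 s (illustrative values only).
trace = [
    (0.0, 266), (0.5, 480), (1.0, 532), (1.5, 480),
    (2.0, 480), (2.5, 532), (3.0, 532), (3.5, 266),
]

peak = max_frequency(trace)
longest_at_532 = longest_run_seconds(trace, 532)
longest_at_480 = longest_run_seconds(trace, 480)
```

On a trace like this, a short `longest_at_532` alongside a 532MHz peak is the signature we describe for the camera app: the top clock is reachable but not held.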

Otherwise the Samsung response is consistent with our findings and we're generally in agreement. Games aren't given access to the 532MHz GPU frequency, while certain benchmarks are. Samsung's pre-loaded apps can send boost intent to the DVFS (dynamic voltage and frequency scaling) controller, but they don't appear to be given the same thermal boost ability as we see in the benchmarks. Samsung's reasoning for not giving games access to the higher frequency makes sense as well. Higher frequencies typically require higher voltage to reach, and power scales quadratically with voltage, so raising clocks is typically not the best way of increasing performance, especially at the limits of one's frequency/voltage curve. In short, you wouldn't want to run games at the higher frequency for thermal (and battery life) reasons. The debate is ultimately about what happens within those specified benchmarks.
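To put rough numbers on the voltage argument, here is a back-of-the-envelope sketch using the standard first-order model for dynamic power, P ∝ C·V²·f. The 480MHz and 532MHz clocks come from our measurements; the 5% voltage increase is a purely hypothetical figure chosen for illustration, since we don't know the actual voltages at each GPU operating point.

```python
def relative_dynamic_power(freq_ratio, voltage_ratio):
    """Dynamic power relative to a baseline, using P ~ C * V^2 * f.

    Capacitance is assumed constant, so it cancels out of the ratio.
    Leakage power is ignored in this first-order model.
    """
    return freq_ratio * voltage_ratio ** 2


# Clock ratio between the boosted and capped GPU frequencies.
freq_ratio = 532 / 480  # roughly a 10.8% higher clock

# Same voltage: power rises only linearly with frequency.
same_voltage = relative_dynamic_power(freq_ratio, 1.0)

# Hypothetical 5% voltage increase to reach the higher clock.
with_voltage_bump = relative_dynamic_power(freq_ratio, 1.05)

print(f"clock uplift:           {freq_ratio - 1:.1%}")
print(f"power at same voltage:  {same_voltage - 1:.1%} higher")
print(f"power with +5% voltage: {with_voltage_bump - 1:.1%} higher")
```

Under these assumptions, an uplift of about 11% in clock costs about 22% more dynamic power, which is why the top of the frequency/voltage curve is a poor place to look for sustained performance.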

Note that we're ultimately talking about an optimization that would increase GPU performance in certain benchmarks by around 10%. It doesn't sound like a whole lot, but in a very thermally limited scenario it's likely the best you can do.

I stand by the right solution here being to either allow the end user to toggle this boost mode on/off (for all apps) or to remove the optimization entirely. I suspect the reason why Samsung wouldn't want to do the former is because you honestly don't want to run in a less thermally constrained mode for extended periods of time in a phone. Long term I'm guessing we'll either see the optimization removed or we'll see the ability to view the current GPU clock obscured. I really hope it's not the latter, as we as an industry need more insight into what's going on underneath the hood of our mobile devices, not less. Furthermore, I think this also highlights a real issue with the way DVFS management is done presently in the mobile space. Software is absolutely the wrong place to handle DVFS; it needs to be done in hardware.

Since our post yesterday we've started finding other devices that exhibit the same CPU frequency behavior that we reported on the SGS4. This isn't really the big part of the discovery, since the CPU frequencies offered are available to all apps (not just specific benchmarks). We'll be posting our findings there in the near future.

38 Comments


jeffkibuule - Wednesday, July 31, 2013 - link

Samsung is clearly violating the reason of benchmarking, which is to test system performance under similar conditions a game would cause. Results from performance tests unachievable during a game are meaningless. And it's not that Samsung lets every app run at max clocks if needed until it needs to be thermally throttled down, they are specifically "boosting" benchmarking apps because it ultimately makes their phone look better. It's just some shady stuff.

twotwotwo - Wednesday, July 31, 2013 - link

Right--the frequency cap may have made sense, but if so it ought to be on everywhere.

MKBL - Wednesday, July 31, 2013 - link

Unfortunately, many, if not the most, big corporations tend to try such sloppy tricks on consumers every so often. Not necessarily because they are axis of evil, but rather because of unlimited pressure on worker bees from the top to produce superb results no matter what. Executives are smart, so they usually don't explicitly direct R&D and marketing to cheat on consumers, but when pressed for outstanding performance in tight time windows, engineers and product managers have limited choice. If the trick is busted, executives can deny their involvement, and engineers will be scapegoated, if needed. This is the side effect of bad capitalism and profit maximization.

Shadowself - Thursday, August 1, 2013 - link

"... many, if not the most, big corporations tend to try such sloppy tricks..."

In a word, "BULL!"

The vast majority of corporations do not play such shady games. The vast majority do not setup -- and keep hidden until caught -- one set of capabilities for the vast majority of uses while utilizing a different set of capabilities for benchmarks and their own applications.

By saying "everyone's doing it" you seem to be saying "Everyone does it so it's OK to do." It's not and never should be.

Yes, it does happen. (I remember such things as far back as the Dhrystone fiasco years ago.) However, every time it comes to light the perpetrators should be treated very, very harshly. No one should have to stand for this crap.

MKBL - Thursday, August 1, 2013 - link

If my posting offended you, I'm sorry, but seriously I don't understand how you could interpret it such a way. I suggest you to take Reading Comprehension 101 at a local community college.

Dman23 - Thursday, August 1, 2013 - link

Nice... attack someones character just because you don't agree with his statement. Maybe you should take a class at YOUR local community college in Debate to realize that turning to character-assination in a reply to an argument is the first sign of a weak and pathetic argument. SEE how easy that is

RadarTheKat - Sunday, August 4, 2013 - link

I suggest MKBL also consider an Ethics class.

chuchurocket - Saturday, August 3, 2013 - link

nvidia and apple both got caught doing similar benchmark boosting, that 2 big corporations that i can immediately recall, I'm sure you can google a bit and find out more.

RadarTheKat - Sunday, August 4, 2013 - link

And they should have been castigated and publicly exposed for doing so. Now its Samsung and so we need to expose this and get them to cease this deplorable activity.

edward kuebler - Tuesday, October 1, 2013 - link

Apple? When did that happen?