Inside AnandTech 2013: CPU Performance

by Anand Lal Shimpi on March 15, 2013 1:56 PM EST

Posted in: IT Computing, CPUs, Intel, Enterprise

Last week I kicked off a short series on the hardware behind our current network infrastructure. In the first post I presented a high-level overview of the hardware that runs AnandTech, while in the second post I detailed our All-SSD Architecture. Today I want to do some rough CPU comparisons to show how much faster the new hardware is, and on Monday I'll conclude the series with a discussion of power savings.

Our old infrastructure was built in the mid-2000s, mostly around dual-core processors. For our most compute-heavy systems, we had to rely on multiple dual-core processors to deliver the CPU performance we needed. Once again I'm using our old Forums database server, an HP DL585, as an example here.

This old 4U box used AMD's Opteron 880 CPUs. The Opteron 880 was a 90nm 2.4GHz part with 2 x 1MB L2 caches and a 95W TDP. Our DL585 configuration had four of these 880s, each on its own processor card, bringing the total core count up to 8.

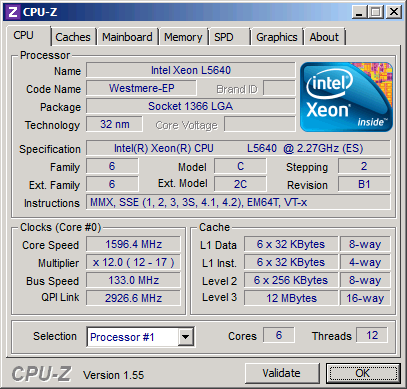

We replaced it (and all of our other servers) with 2U dual-socket systems based on Intel's Xeon L5640 processors. Each L5640 has 6 cores, runs at 2.26GHz and carries a 60W max TDP, making each server a 12-core machine.

The spec comparison is pretty interesting:

AnandTech Server CPU Comparison

| | AT Forums DB Server (2006) | AT Forums DB Server (2013) |
|---|---|---|
| Server Size | 4U | 2U |
| CPU | 4 x AMD Opteron 880 | 2 x Intel Xeon L5640 |
| Total Cores / Threads | 8 / 8 | 12 / 24 |
| Manufacturing Process | 90nm | 32nm |
| Release Year | 2005 | 2010 |
| Number of Cores per Chip | 2 | 6 |
| L1 / L2 / L3 Cache per Chip | 2 x 64KB / 2 x 1MB / 0MB | 6 x 64KB / 6 x 256KB / 12MB |
| On-die Memory Interface | 2 x 64-bit DDR-400 | 2 x 64-bit DDR3-1333 |
| Max Frequency (Non-Turbo) | 2.40GHz | 2.26GHz |
| Max Turbo Frequency | - | 2.80GHz |
| Max TDP per Chip | 95W | 60W |
| Die Size per Chip | 199 mm² | 240 mm² |
| Transistor Count per Chip | 233M | 1.17B |
| Launch Price per Chip | $2649 | $996 |

Although die area per chip has gone up a bit, each chip gives you 3x the number of cores, a lot more cache and much more memory bandwidth. The transistor count alone shows you how much things have improved from 2005 to 2010. It's also far more affordable to deliver this sort of compute. Although I won't touch on it here (saving that for the final installment), you get all of this with a nice reduction in power consumption.
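As a rough check on those per-chip ratios, here is a minimal Python sketch that works them out from the spec table. The dictionary keys and the `ratio` and `density` helpers are names made up for this illustration; the figures themselves are copied from the table, and the density number is simply transistor count divided by die area.

```python
# Per-chip figures copied from the spec table above.
# The field names are illustrative, not from any library.
opteron_880 = {"cores": 2, "die_mm2": 199, "transistors_m": 233, "price_usd": 2649}
xeon_l5640 = {"cores": 6, "die_mm2": 240, "transistors_m": 1170, "price_usd": 996}


def ratio(key):
    """New-to-old ratio for one spec-table field."""
    return xeon_l5640[key] / opteron_880[key]


def density(chip):
    """Millions of transistors per square millimeter of die."""
    return chip["transistors_m"] / chip["die_mm2"]


for key in ("cores", "die_mm2", "transistors_m", "price_usd"):
    print(f"{key}: {ratio(key):.2f}x")

print(
    f"density: {density(opteron_880):.2f} -> "
    f"{density(xeon_l5640):.2f} M transistors/mm2"
)
```

The ratios come out to roughly 3x the cores and 5x the transistors in about 1.2x the die area, at well under half the launch price per chip.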

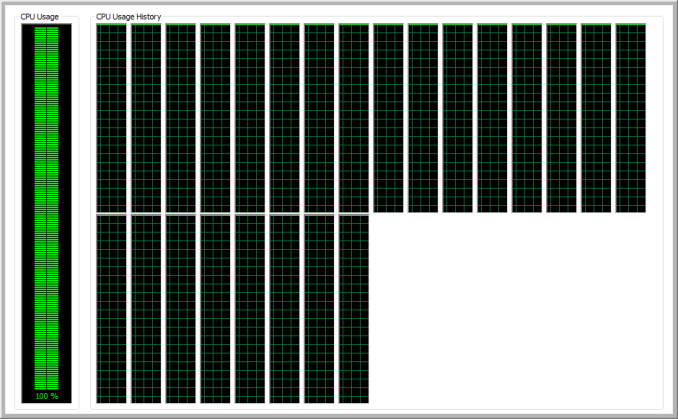

Now the question is: how much has performance improved? Simulating our live workload on a single box without the infrastructure that goes along with it is a bit difficult, so I turned to some quick Integer and FP benchmarks to give a general idea of the magnitude of improvement:

AnandTech Server CPU Performance Comparison

| | 4 x AMD Opteron 880 | 2 x Intel Xeon L5640 | Speedup |
|---|---|---|---|
| 7-Zip Benchmark (1 Thread) | 2194 | 3053 | 39% |
| 7-Zip Benchmark (Multithreaded) | 16130 | 38764 | 140% |
| Cinebench 11.5 (Multithreaded) | 4.5 | 11.43 | 154% |

Unlike what we saw in our SSD vs. HDD comparison, there are no 19x gains here. Single-threaded performance only improves by 39% over a span of 5 years at roughly similar base clocks (the per-clock gain is a little smaller once you account for the L5640's turbo boost). The days of huge, easy improvements in single-threaded performance ended a while ago. Multithreaded performance shows much better gains thanks to the fact that we have 50% more cores and 3x the number of threads in the new server.
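The speedup column is just the new score divided by the old score, minus one. A minimal Python sketch that reproduces it from the benchmark table is below; the `scores` dictionary and the `speedup_percent` helper are names chosen for this example. The last two lines also divide the multithreaded 7-Zip score by the single-thread score on each box, which is only a rough indicator of scaling because turbo boost raises the L5640's single-thread clock.

```python
# (old score, new score) pairs copied from the benchmark table above.
scores = {
    "7-Zip (1 thread)": (2194, 3053),
    "7-Zip (multithreaded)": (16130, 38764),
    "Cinebench 11.5 (multithreaded)": (4.5, 11.43),
}


def speedup_percent(old, new):
    """Percentage improvement of the new score over the old one."""
    return (new / old - 1) * 100


speedups = {name: speedup_percent(old, new) for name, (old, new) in scores.items()}
for name, pct in speedups.items():
    print(f"{name}: {pct:.0f}%")

# Rough multithreaded scaling: multithreaded score / single-thread score.
old_scaling = scores["7-Zip (multithreaded)"][0] / scores["7-Zip (1 thread)"][0]
new_scaling = scores["7-Zip (multithreaded)"][1] / scores["7-Zip (1 thread)"][1]
print(f"7-Zip scaling: {old_scaling:.1f}x on 8 threads, {new_scaling:.1f}x on 24 threads")
```

The scaling figures suggest the old box gets close to linear scaling across its 8 cores, while the new box's 24 threads (12 cores with Hyper-Threading) deliver well under 24x, which is expected since the extra threads share physical cores.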

All of this comes at a lower TDP and lower price point, despite the 20% larger die area. The market was very different back when the Opteron 880 launched.

If you're still running on large, old and outdated hardware, you can easily double performance by moving to something more modern - all while reducing power and rackspace. If your workload remained the same (or very similar), you could theoretically replace multiple 4U servers with half as many 2U servers given the sort of performance/density improvements we saw here. And 2U boxes aren't even as dense as they get. If space/rack density is a concern, there are options in the 1U space or by going to blades.
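To put numbers on that consolidation argument, here is a minimal Python sketch. The `consolidate` function and its parameters are hypothetical names for this illustration, and the default 2x speedup is the conservative "double performance" figure from the paragraph above rather than a measured value for any particular workload.

```python
import math


def consolidate(old_servers, speedup=2.0, old_u=4, new_u=2):
    """Estimate the new server count and rack space for the same throughput.

    Assumes the workload scales like the benchmarks above, which is an
    assumption, not something measured on a live workload.
    Returns (new server count, old rack units, new rack units).
    """
    new_servers = math.ceil(old_servers / speedup)
    return new_servers, old_servers * old_u, new_servers * new_u


new_count, old_rack_u, new_rack_u = consolidate(10)
print(f"10 x 4U servers ({old_rack_u}U) -> {new_count} x 2U servers ({new_rack_u}U)")
```

With those assumptions, ten 4U servers occupying 40U collapse to five 2U servers in 10U, a 4x reduction in rack space before considering 1U systems or blades.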

Looking back at what we had almost 7 years ago makes me wonder about what the enterprise market will look like 5 - 7 years from now. Presumably we'd be able to deliver similar performance to what we have deployed today with a much smaller, even more power efficient platform (~10nm). There's a lot of talk of going to many smaller cores vs. the big beefy Xeon cores we have here. There's also the question of how much headway the ARM players will make into the enterprise space over the coming years. I suspect, at a minimum, we'll see substantially increased price pressure on Intel. AMD was headed in that direction prior to its recent struggles, but the ARM folks should be able to deliver once they navigate their own 64-bit transition.

This will be a very interesting post to revisit around 2017 - 2018, when our L5640s will be 7+ years old...

21 Comments


skuban - Friday, March 15, 2013

Some per core comparisons would make a nice addition to the 1st table.

Casper42 - Friday, March 15, 2013

So along those lines, why the heck did you compare a well spec'd Opteron 880 against a Low Power L5640? Why not compare it to something like an X5650 that was the bottom proc of the high end family at the time? Seems like a real Apples:Oranges type comparison.

aruisdante - Friday, March 15, 2013

Because they're comparing what they had then to what they have now. It's apples to apples in terms of application, not processor.

Mumrik - Sunday, March 17, 2013

That would make absolutely no sense. This is an article about Anandtech's upgrade.

Casper42 - Monday, March 18, 2013

I get that, but go back and read the article and you see there are several generalized statements about "processors from then vs processors from now". When making such comparisons they should have pointed out that the differences seen are against a Low Power model and that a normal or high power model would have even more impact on then vs now.

bryanb - Friday, March 15, 2013

Your "new" Xeon L5640 is already 3 years old. In comparison, you could have gone with the much newer Xeon E3 v2 that uses 22nm Ivy Bridge. You would get even better power efficiency at a much lower cost (currently $200-300) per chip. However, memory capacity may be a problem as you would have to rely on LGA 1155 server boards, which typically have fewer ECC RAM slots.

MartinT - Friday, March 15, 2013

You have to remember (from the other articles) that these upgrades were done a long time ago, IIRC before Ivy Bridge Xeons even launched.

DanNeely - Friday, March 15, 2013

LGA1155 doesn't support dual socket boards; so until about a year ago the older LGA1366 chips (LGA1567 was also available but AT didn't need quad-socket any longer) were the only viable Intel option for their performance target.

Casper42 - Friday, March 15, 2013

Right, I want to run my enterprise on a desktop chip with a Xeon badge. A Xeon E3 v2 is nothing more than a Core i7 with Xeon badging and ECC support.

You want at least an E5 before I will even remotely consider it an Enterprise server.

In that space everything is currently on Sandy Bridge EP/EN with Ivy Bridge EP coming around Sept, Ivy EN (why bother really) a few months later, and then Ivy EX (The E7 big boys) either late 2013 or early 2014.

Casper42 - Friday, March 15, 2013

PS: Xeon E3 v2 = Dual Channel memory and 16 lanes of PCIe 3.0 (Single socket only)

Xeon E5 v1 = Quad Channel memory and 40 lanes of PCIe 3.0 per socket, 80 lanes in a standard 2P server similar to their "new" DB server.

RAID Controllers (especially with SSDs behind them) and Network cards and such need some decent I/O.