Intel Core i5 3470 Review: HD 2500 Graphics Tested

by Anand Lal Shimpi on May 31, 2012 12:00 AM EST. Posted in CPUs, Intel, Ivy Bridge, GPUs

HD 2500: Compute & Synthetics

While compute functionality could technically be shoehorned into DirectX 10 GPUs such as Sandy Bridge through DirectCompute 4.x, neither Intel's nor AMD's DX10 GPUs were really meant for the task, and even NVIDIA's DX10 GPUs paled in comparison to what they've achieved with their DX11 generation GPUs. As a result, Ivy Bridge is the first truly compute-capable GPU from Intel. This marks an interesting step in the evolution of Intel's GPUs, as projects such as Larrabee Prime were originally supposed to help Intel bring together CPU and GPU computing by creating an x86-based GPU. With Larrabee Prime canceled, however, that task falls to the latest rendition of Intel's GPU architecture.

With Ivy Bridge, Intel will be supporting both DirectCompute 5 (which is dictated by DX11) and the more general compute-focused OpenCL 1.1. Intel has backed OpenCL development for some time and currently offers an OpenCL 1.1 runtime that runs across multiple generations of CPUs, and now on Ivy Bridge GPUs.

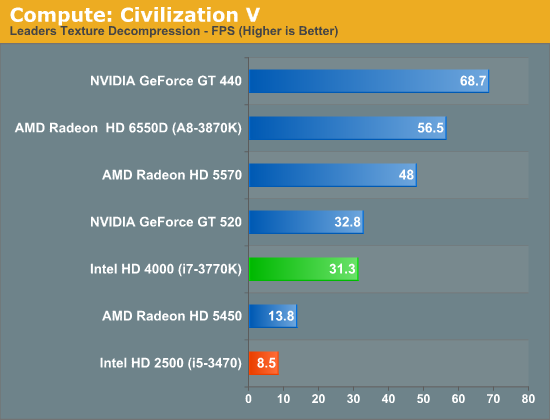

Our first compute benchmark comes from Civilization V, which uses DirectCompute 5 to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of its texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. While games that use GPU compute functionality for texture decompression are still rare, the technique is becoming more common because it's a practical way to pack textures in the most suitable manner for shipping rather than being limited to DX texture compression.

These compute results are mostly academic as I don't expect anyone to really rely on the HD 2500 for a lot of GPU compute work. With under 40% of the EUs of the HD 4000, we get under 30% of the performance from the HD 2500.
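As a quick sanity check on that scaling claim, the arithmetic can be sketched as follows. The EU counts (6 for the HD 2500, 16 for the HD 4000) are assumptions taken from Intel's published specifications rather than from the text above, and the 30% figure is only the upper bound quoted here, not a measured score.

```python
# Assumed EU counts from Intel's public specs (not stated in this section).
HD4000_EUS = 16
HD2500_EUS = 6

# Fraction of the HD 4000's execution units that the HD 2500 has.
eu_fraction = HD2500_EUS / HD4000_EUS

# Upper bound on relative performance quoted in the text ("under 30%").
perf_fraction_bound = 0.30

# If performance scaled linearly with EUs this ratio would be 1.0.
per_eu_bound = perf_fraction_bound / eu_fraction

print(f"EU fraction: {eu_fraction:.1%}")
print(f"Per-EU throughput vs. HD 4000: under {per_eu_bound:.0%}")
```

In other words, the HD 2500 scales worse than its EU count alone would suggest in this test.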

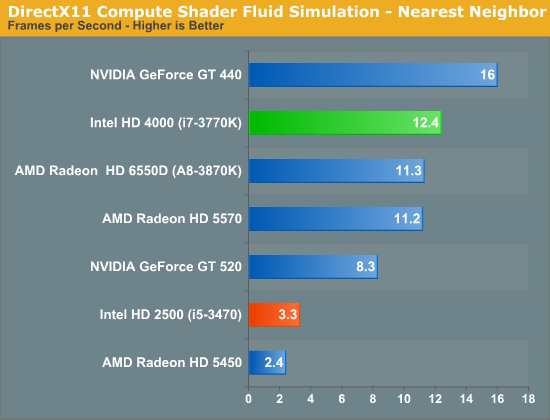

Our second compute test is the Fluid Simulation Sample in the DirectX 11 SDK. This program simulates the motion and interactions of a 16k particle fluid using a compute shader, with a choice of several different algorithms. In this case we’re using an O(n^2) nearest neighbor method that is optimized by using shared memory to cache data.

Thanks to its large shared L3 cache, Intel's HD 4000 did exceptionally well here. With significantly fewer EUs, Intel's HD 2500 does much worse by comparison.
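For readers unfamiliar with the approach, here is a minimal CPU-side sketch of an O(n^2) neighbor search that processes particles one tile at a time. It is not the SDK sample's shader code: the function name, the 2D positions, and the tile size are all hypothetical, and it simply counts neighbors instead of evaluating the fluid's density and force kernels. The tile stands in for the block of particle data a GPU thread group would cache in shared memory and reuse.

```python
def count_neighbors_tiled(positions, radius, tile_size=64):
    """Count neighbors within `radius` of every particle by testing all pairs."""
    n = len(positions)
    r2 = radius * radius
    counts = [0] * n
    # Walk the particle list one tile at a time. On a GPU, a thread group
    # would load each tile into shared memory once and reuse it for every
    # thread in the group; here a list slice plays that role.
    for start in range(0, n, tile_size):
        tile = positions[start:start + tile_size]
        for i, (xi, yi) in enumerate(positions):
            for j, (xj, yj) in enumerate(tile, start):
                if i == j:
                    continue  # a particle is not its own neighbor
                dx, dy = xi - xj, yi - yj
                if dx * dx + dy * dy < r2:
                    counts[i] += 1
    return counts


# Tiny usage example: two nearby particles and one far away.
example = [(0.0, 0.0), (0.5, 0.0), (10.0, 10.0)]
example_counts = count_neighbors_tiled(example, radius=1.0, tile_size=2)
print(example_counts)
```

Every particle is still compared against every other particle, so the work remains quadratic; tiling only changes where the data is read from, which is why a large, fast cache helps so much in this test.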

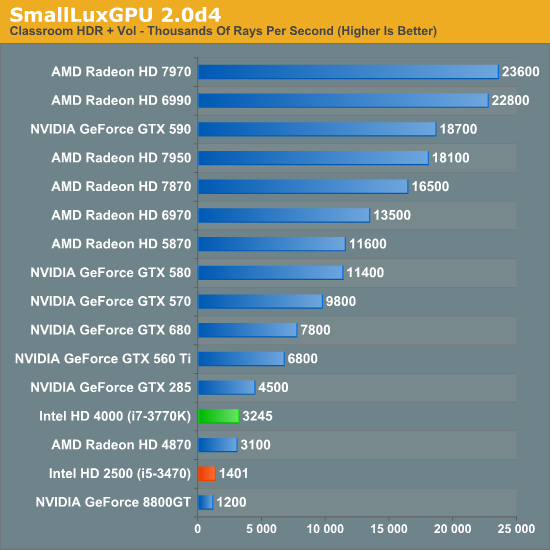

Our last compute test and first OpenCL benchmark, SmallLuxGPU, is the GPU ray tracing branch of the open source LuxRender renderer. We’re now using a development build from the version 2.0 branch, and we’ve moved on to a more complex scene that hopefully will provide a greater challenge to our GPUs.

Intel's HD 4000 does well here for processor graphics, delivering over 70% of the performance of NVIDIA's GeForce GTX 285. The HD 2500 takes a big step backwards though, with less than half the performance of the HD 4000.

Synthetic Performance

Moving on, we'll take a few moments to look at synthetic performance. Synthetic performance is a poor tool to rank GPUs—what really matters is the games—but by breaking down workloads into discrete tasks it can sometimes tell us things that we don't see in games.

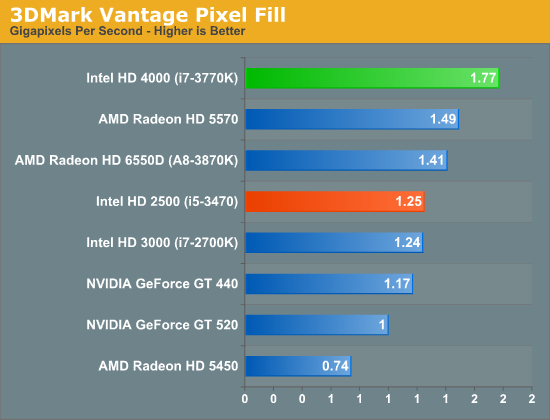

Our first synthetic test is 3DMark Vantage’s pixel fill test. Typically this test is memory bandwidth bound as the nature of the test has the ROPs pushing as many pixels as possible with as little overhead as possible, which in turn shifts the bottleneck to memory bandwidth so long as there's enough ROP throughput in the first place.

It's interesting to note here that as DDR3 clock speeds have crept up over time, IVB now has as much memory bandwidth as most entry-level to mainstream video cards, where 128-bit DDR3 is equally common. On a historical basis, that is roughly half the bandwidth of powerhouse video cards of yesteryear such as the 256-bit GDDR3-based GeForce 8800 GT.
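To put rough numbers on the bandwidth comparison above, peak theoretical bandwidth is simply bus width times transfer rate. The sketch below assumes dual-channel DDR3-1600 for Ivy Bridge and 1800MT/s GDDR3 for the GeForce 8800 GT. Neither figure is given in the text, so treat both as illustrative assumptions; the helper name is hypothetical as well.

```python
def peak_bandwidth_gbs(bus_width_bits, mega_transfers_per_sec):
    """Peak theoretical bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mega_transfers_per_sec * 1e6 / 1e9


# Assumption: two 64-bit DDR3-1600 channels, i.e. a 128-bit interface.
ivb_bw = peak_bandwidth_gbs(128, 1600)

# Assumption: 256-bit GDDR3 at 1800MT/s effective on the 8800 GT.
g8800gt_bw = peak_bandwidth_gbs(256, 1800)

ratio = ivb_bw / g8800gt_bw

print(f"Ivy Bridge: {ivb_bw:.1f} GB/s")        # 25.6 GB/s
print(f"8800 GT:    {g8800gt_bw:.1f} GB/s")    # 57.6 GB/s
print(f"Ratio:      {ratio:.0%}")              # 44%
```

Faster system memory would push the ratio closer to an even half, and keep in mind that the iGPU shares this bandwidth with the CPU cores.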

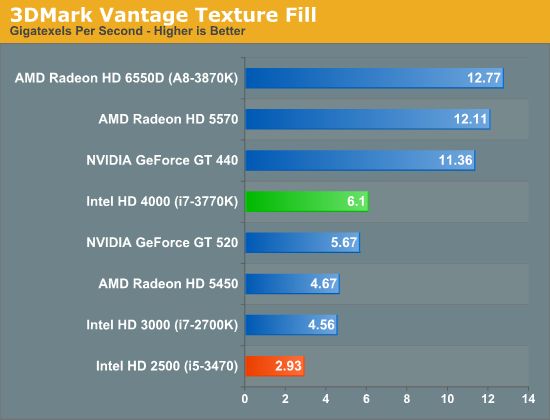

Moving on, our second synthetic test is 3DMark Vantage’s texture fill test, which provides a simple FP16 texture throughput test. FP16 textures are still fairly rare, but it's a good look at worst case scenario texturing performance.

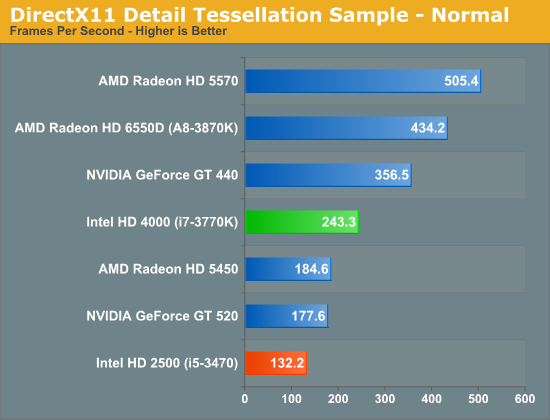

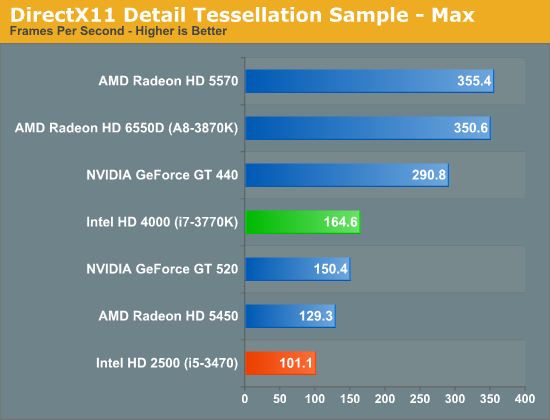

Our final synthetic test is the set of settings we use with Microsoft’s Detail Tessellation sample program out of the DX11 SDK. Since IVB is the first Intel iGPU with tessellation capabilities, it will be interesting to see how well IVB does here, as IVB is going to be the de facto baseline for DX11+ games in the future. Ideally we want to have enough tessellation performance here so that tessellation can be used on a global level, allowing developers to efficiently simulate their worlds with fewer polygons while still using many polygons on the final render.

The results here are as expected. With far fewer EUs, the HD 2500 falls behind even some of the cheapest discrete GPUs.

GPU Power Consumption

As you'd expect, power consumption with the HD 2500 is tangibly lower than HD 4000 equipped parts:

GPU Power Consumption Comparison under Load (Metro 2033)

| | Intel HD 2500 (i5-3470) | Intel HD 4000 (i7-3770K) |
|---|---|---|
| Intel DZ77GA-70K | 76.2W | 98.9W |

Running our Metro 2033 test, the HD 4000 based Core i7 system drew nearly 30% more power at the wall than the HD 2500 based Core i5 system.
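The percentage follows directly from the two wall power readings in the table; a short sketch of the arithmetic, with variable names chosen purely for illustration:

```python
# Wall power under load (Metro 2033), taken from the table above.
hd2500_system_w = 76.2   # Core i5-3470 (HD 2500)
hd4000_system_w = 98.9   # Core i7-3770K (HD 4000)

delta_w = hd4000_system_w - hd2500_system_w
increase = delta_w / hd2500_system_w

print(f"Difference: {delta_w:.1f}W")   # 22.7W
print(f"Increase:   {increase:.1%}")   # 29.8%
```

Since these are total system measurements from two different CPUs, the gap reflects more than the GPU alone.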

67 Comments

etamin - Thursday, May 31, 2012 - link

I just glossed through the charts (will read article tomorrow), but I noticed there are no Nehalems in the comparisons. It would be nice if both a Bloomfield and a Gulftown were thrown in. If Phenom IIs are still there, Nehalem shouldn't be THAT outdated right? Anyways, I'm sure the article is great. Thanks for your hard work and I look forward to reading this at work tomorrow :)

SK8TRBOI - Thursday, May 31, 2012 - link

I agree with etamin - if Phenom is in there, a great Intel benchmark cpu would be the Nehalem i7-920 D0 OC'd to 3.6Ghz - I'd wager a significant percentage of Anand's readers (myself included!) still have this technological wonder in our everyday rigs. The i7-920 would be a good 'reference' for us all when evaluating/comparing performance.

Thanks, and awesome article, as always!

CeriseCogburn - Thursday, May 31, 2012 - link

It's always "best" here to forget about other Intel and nVidia - as if they suddenly don't exist anymore - it makes amd appear to shine.

Happens all the time. Every time.

I suppose it's amd's evil control - or the yearly two week island vacation (research for reviewers of course)

LancerVI - Thursday, May 31, 2012 - link

Throw me into the list that agrees.

Still running a i7 920 C0. the Nehalems being in the chart would've been nice.

jordanclock - Thursday, May 31, 2012 - link

That's what Bench is for.

HanzNFranzen - Thursday, May 31, 2012 - link

I have to agree as well. I have an i7 920 C0 and often wonder how it stacks up today against Ivy Bridge. I'm thinking that holding off for Haswell is a safe bet even though I have the upgrade itch! It's been 3 years, which is great to have gotten this much mileage out of my current system, but I wanna BUILD SOMETHING!!

CeriseCogburn - Monday, June 11, 2012 - link

It's whole video card tier of frame rate difference in games, plus you have sata 6 and usb 3 to think about not to mention pci-e 3.0 to be ready for when needed.

Buy your stuff and sell your old parts to keep it worth it.

jwcalla - Thursday, May 31, 2012 - link

I'm guessing the stock idle power consumption number is with EIST disabled?

I've been waiting for some of these lower-powered IVB chips to come out to build a NAS. Was thinking a Core i3 (if they ever get around to releasing it), or maybe the lowest Xeon. Though at this point I might just bite the bullet and wait for 64-bit ARM... or go with a Cortex-A15 maybe.

ShieTar - Thursday, May 31, 2012 - link

If a Cortex-A15 would give you enough computing power, you should also be happy with a Pentium or even a Celeron. The i3 is already rather overkill for a simple NAS.

I have a fileserver with Windows Server Home running on a Pentium G620, and it has absolutely no problem to push 120 MB/s over a GBit Ethernet switch from a RAID-0 pack of HDDs while running µtorrent, Thunderbird, Miranda and Firefox on the side. Power consumption of the complete system is around 40-50W in idle, and I haven't even shopped for specifically low-power components but used a lot of leftovers.

BSMonitor - Thursday, May 31, 2012 - link

Yeah, the CPU is just 1 part of the power consumption puzzle.. And since in "file sharing" mode, it will almost always be in a low power/idle state... An ARM CPU would show little improvement..

But if you ever offloaded any kind of work to that box, you'd have wasted your money with an ARM box, as no ARM processor will ever match real task performance of any of the x86 processors.