EVGA's GeForce GTX 560 Ti 2Win: The Raw Power Of Two GPUs

by Ryan Smith on November 4, 2011 11:00 PM EST

The Test, Power, Temperature, & Noise

| CPU: | Intel Core i7-920 @ 3.33GHz |
| Motherboard: | Asus Rampage II Extreme |
| Chipset Drivers: | Intel 9.1.1.1015 (Intel) |
| Hard Disk: | OCZ Summit (120GB) |
| Memory: | Patriot Viper DDR3-1333 3x2GB (7-7-7-20) |
| Video Cards: | AMD Radeon HD 6970, AMD Radeon HD 6950, NVIDIA GeForce GTX 580, NVIDIA GeForce GTX 570, NVIDIA GeForce GTX 560 Ti, EVGA GeForce GTX 560 Ti 2Win |
| Video Drivers: | NVIDIA GeForce Driver 285.62, AMD Catalyst 11.9 |
| OS: | Windows 7 Ultimate 64-bit |

Because the gaming performance of the GTX 560 Ti 2Win is going to be rather straightforward (it’s a slightly overclocked GTX 560 Ti SLI setup), we’re going to mix things up and start with a look at the unique aspects of the card. The 2Win is the only dual-GPU GTX 560 Ti on the market, so there is nothing else quite like it when it comes to power, noise, and thermals.

GeForce GTX 560 Ti Series Voltage

| 2Win GPU 1 Load | 2Win GPU 2 Load | GTX 560 Ti Load |
|---|---|---|
| 1.025v | 1.05v | 0.95v |

It’s interesting to note that the 2Win does not have a common GPU voltage like other dual-GPU cards. EVGA wanted it to be 2 GTX 560 Tis in a single card and it truly is, right down to different voltages for each GPU. One of the GPUs on our sample runs at 1.025v, while the other runs at 1.05v. The latter is a bit higher than any other GF114 product we’ve seen, which indicates that EVGA may be goosing the 2Win a bit. The lower power consumption of GF114 (versus GF110 in the GTX 590) means that the 2Win doesn’t need to adhere to a strict voltage requirement to make spec.

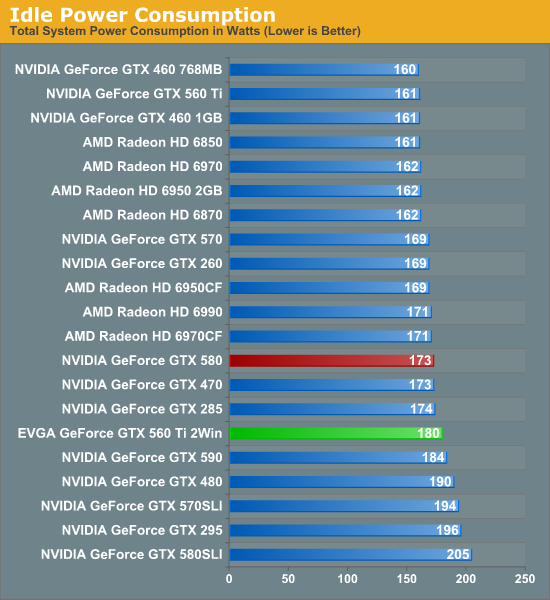

Kicking things off as always is idle power. Unfortunately we don’t have a second reference GTX 560 Ti on hand, so we can’t draw immediate comparisons to a GTX 560 Ti SLI setup. However we believe that the 2Win should draw a bit less power in virtually all cases.

In any case, as is to be expected with 2 GPUs, the 2Win draws more power than any single GPU, even with GF114’s low idle power consumption. At 180W it’s 7W over the GTX 580, and actually 9W over the otherwise more powerful Radeon HD 6990. But at the same time it’s below any true dual-card setup.

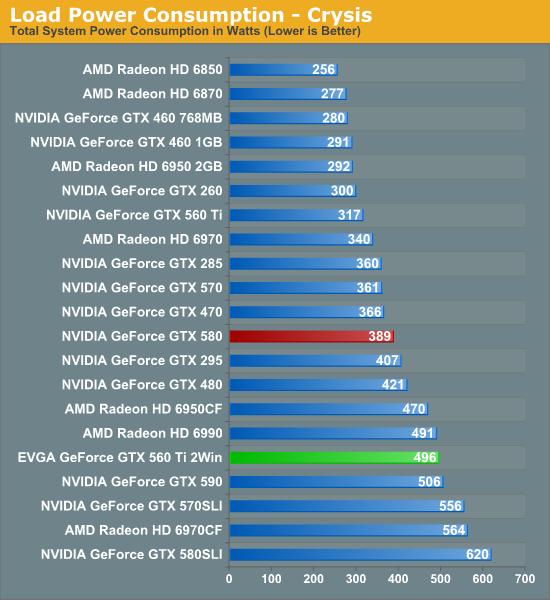

Moving on to load power, we start with the venerable Crysis. As the 2Win is priced against the GTX 580, that's the card to watch for both power characteristics and gaming performance. Starting there, we can see that the 2Win setup draws significantly more power under Crysis – 496W versus 389W – which again is to be expected for a dual-GPU card. As we’ll see in the gaming performance section the 2Win is going to be notably faster than the GTX 580, but the cost will be power.
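To put the Crysis gap in proportion, the two readings quoted above can be compared directly. This is a minimal sketch that uses only the 496W and 389W figures from the text; the `power_delta` helper is a name made up for illustration.

```python
def power_delta(card_w, baseline_w):
    """Return (difference in watts, percent increase over the baseline)."""
    diff = card_w - baseline_w
    return diff, diff / baseline_w * 100.0


# Crysis load power figures quoted in the text: 2Win vs. GTX 580.
diff_w, pct = power_delta(496, 389)
print(f"2Win draws {diff_w:.0f}W more than the GTX 580 ({pct:.1f}% higher)")
# → 2Win draws 107W more than the GTX 580 (27.5% higher)
```

In other words, the extra performance comes at a cost of roughly a quarter more power than the GTX 580 setup in this test.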

One thing that caught us off guard here was that power consumption is almost identical to the GTX 590 and the Radeon HD 6990. At the end of the day those cards are a story about the benefits of aggressive chip binning, but the result is that the 2Win draws a similar amount of power while delivering nowhere near their performance. Given the 2Win’s much cheaper pricing these cards aren’t direct competitors, but it means the 2Win doesn’t have the same aggressive performance-per-watt profile we see in most other dual-GPU cards.

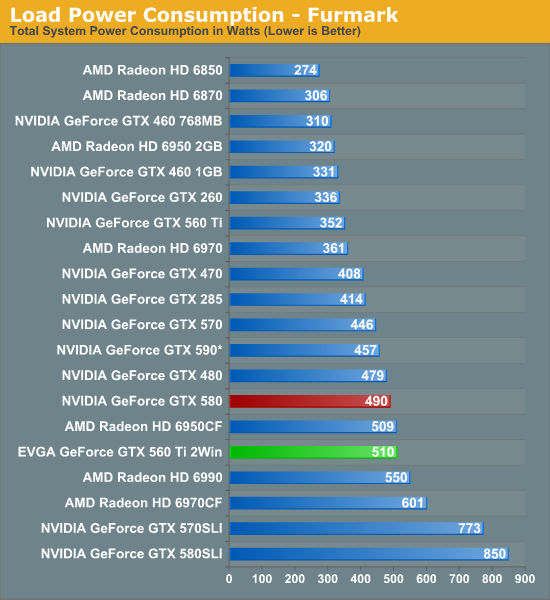

As NVIDIA continues to use OverCurrent Protection (OCP) for their GTX 500 series, FurMark is largely hit or miss depending on how it’s being throttled. In this case we’ve seen an interesting throttle profile that we haven’t experienced in past reviews: the 2Win would quickly peak at over 500W, retreat to anywhere between 450W and 480W, and then rise to over 500W once more before coming back down, with the framerate fluctuating along with the power draw. This is in contrast to a hard cap, where we’d see power draw stay constant. 510W was the highest wattage we saw that was sustained for over 10 seconds. In this case it’s 40W less than the Radeon HD 6990, 50W more than the heavily capped GTX 590, and only 20W off of the GTX 580. In essence we can see the throttle working to keep power consumption not much higher than what we see with the games in our benchmark suite.
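The "sustained for over 10 seconds" criterion can be sketched in code. The following is a minimal illustration, not the tooling used for this review: it assumes power is logged once per second and treats 10 consecutive samples as the threshold. Both the `highest_sustained` helper and the sample log are hypothetical, invented only to mimic the spiky profile described above.

```python
def highest_sustained(samples_w, window):
    """Return the highest draw (in watts) held for `window` consecutive samples.

    For every run of `window` back-to-back samples, take the lowest reading in
    the run (the level the card never dropped below), then keep the best run.
    Returns None if the log is shorter than the window.
    """
    if window <= 0 or len(samples_w) < window:
        return None
    return max(
        min(samples_w[i:i + window])
        for i in range(len(samples_w) - window + 1)
    )


# HYPOTHETICAL 1 Hz log in watts (not measured data): brief spikes above
# 510W, a plateau at 510W, a throttled dip, then another short spike.
log = [505, 520, 515] + [510] * 11 + [470, 455, 480, 465] + [512] * 4 + [460, 475]

print(highest_sustained(log, window=10))  # → 510
```

Taking the minimum of each window is what filters out the momentary spikes: a 520W blip counts for nothing unless the card holds that level for the whole window.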

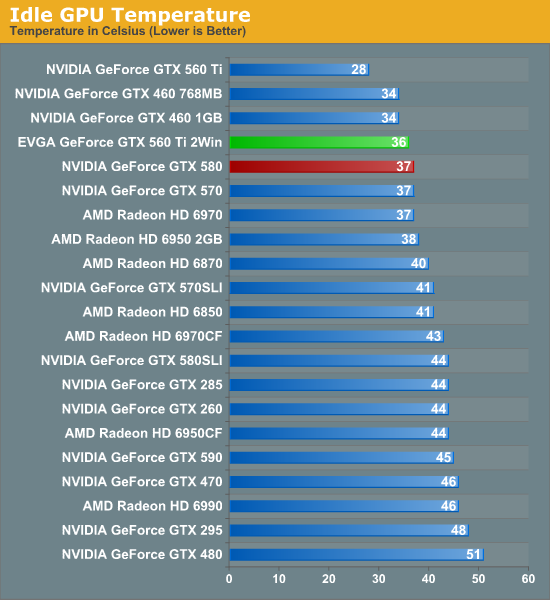

Thanks to the open air design of the 2Win, idle temperatures are quite good. It can’t match the GTX 560 Ti of course, but even with the cramped design of a dual-GPU card our warmest GPU only idles at 36C, below the GTX 580, and just about every other card for that matter.

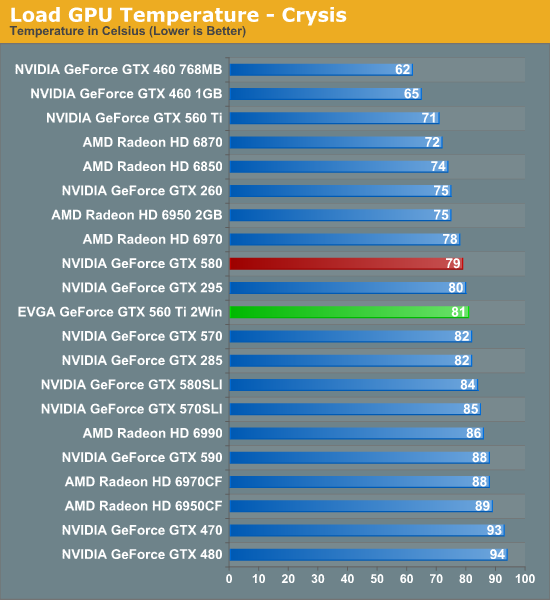

Looking at GPU temperatures while running Crysis, the open air design again makes itself felt. Our warmest GPU hits 81C, 10C warmer than a single GTX 560 Ti, but only 2C warmer than a GTX 580 in spite of the extra heat being generated. This also ends up being several degrees cooler than the 6990 and GTX 590, which again shows the open air design at work. The tradeoff is that 300W+ of heat is being dumped into the case, whereas the other dual-GPU cards dump only roughly half that. If we haven’t made it clear before we’ll make it clear now: you’ll need good case ventilation to keep the 2Win this cool.

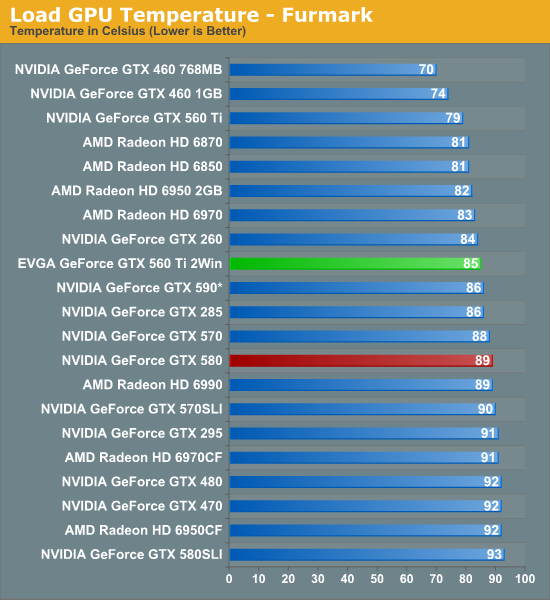

Because of the OCP throttling keeping power consumption under FurMark so close to what it was under Crysis, our temperatures don’t change a great deal when looking at FurMark. Just as with the power situation the temperature situation is spiky; 85C was the hottest spike before temperatures dropped back down to the low 80s. As a result 85C is in good company when it comes to FurMark.

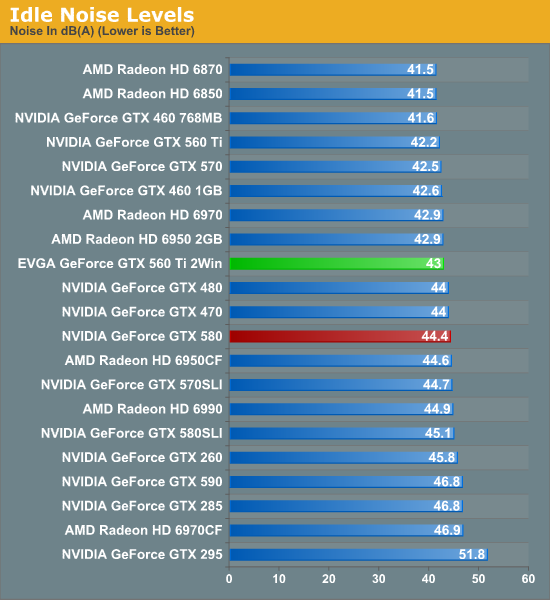

We haven’t reviewed very many video cards with 3 (or more) fans, but generally speaking more fans result in more noise. The 2Win adheres to this rule of thumb, humming along at 43dB. This is slightly louder than a number of single-GPU cards, but still quiet enough that at least on our testbed it doesn’t make much of a difference. All the same it goes without saying that the 2Win is not for those of you seeking silence.

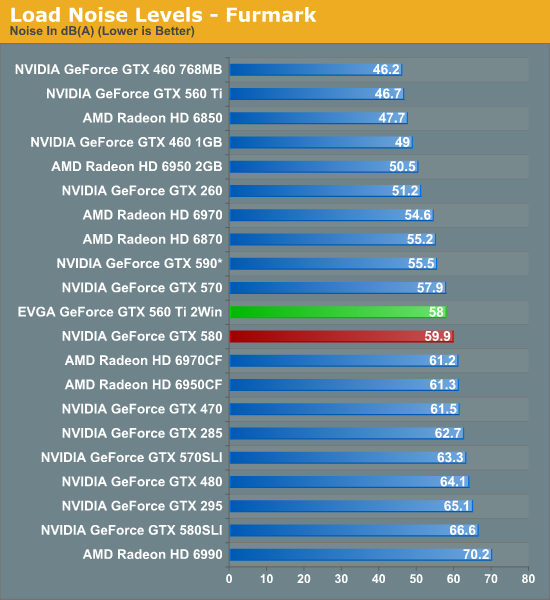

At 56dB the load noise chart makes the 2Win look very good, and to be honest I don’t entirely agree with the numbers. Objectively the 2Win is quieter than the GTX 580, but subjectively it has a slight whine to it that blowers simply don’t have. The 2Win may technically be quieter, but I’d say it’s more noticeable than the GTX 580 or similar cards. With that said it’s definitely still quieter and less noticeable than our lineup of multi-GPU configurations, and of course the poor GTX 480. Ultimately it’s quite quiet for a dual-GPU configuration (let alone one on a single card), but it has to make tradeoffs to properly cool a pair of GPUs.

56 Comments

luv2liv - Friday, November 4, 2011

they cant make it physically bigger than this?im disappointed.

/s

phantom505 - Friday, November 4, 2011

That was so lazy.... it looks like they took 3 case fans and tie strapped them to the top. I think I could have made that look better and I have no design experience whatsoever.

irishScott - Sunday, November 6, 2011

Well, it apparently works. That's good enough for me, but then again I don't have a side window.

Strunf - Monday, November 7, 2011

Side window and mirrors to see the fans...I don't understand why people even comment on aesthetics it's not like they'll spend their time looking at the card.

phantom505 - Monday, November 7, 2011

If they were lazy here, where else were they lazy?

Sabresiberian - Tuesday, November 8, 2011

What is obviously lazy here is your lack of thinking and reading before you made your post.

Velotop - Saturday, November 5, 2011

I still have a GTX580 in shrink wrap for my new system build. Looks like it's a keeper.

pixelstuff - Saturday, November 5, 2011

Seems like they missed the mark on pricing. Shouldn't they have been able to price it at exactly 2x a GTX 560 Ti card, or $460. Theoretically they should be saving money on the PCB material, connectors, and packaging.

Of course we all know that they don't set these price brackets on how much more card costs over the next model down. They set prices based on the maximum they think they could get someone to pay. Oh well. Probably would have sold like hot cakes otherwise.

Kepe - Saturday, November 5, 2011

In addition to just raw materials and manufacturing costs, you must also take in to account the amount of money poured in to the development of the card. This is a custom PCB and as such, takes quite a bit of resources to develop. Also, this is a low volume product that will not sell as many units as a regular 560Ti does, so all those extra R&D costs must be distributed over a small amount of products.

R&D costs on reference designs such as the 560Ti are pretty close to 0 compared to something like the 560Ti 2Win.

Samus - Saturday, November 5, 2011

i've been running a pair of EVGA GTX460 768MB's in SLI with the superclock BIOS for almost 2 years. Still faster than just about any single card you can buy, even now, at a cost of $300 total when I bought them.

I'm the only one of my friends that didn't need to upgrade their videocard for Battlefield 3. I've been completely sold on SLI since buying these cards, and believe me, I'd been avoiding SLI for years for the same reason most people do: compatibility.

But keep in mind, nVidia has been pushing SLI hard for TEN YEARS with excellent drivers, frequent updates, and compatibility with a wide range of motherboards and GPU models.

Micro-stutter is an ATI issue. It's not noticeable (and barely measurable) on nVidia cards.

http://www.tomshardware.com/reviews/radeon-geforce...

In reference to Ryan's conclusion, I'd say consider SLI for nVidia cards without hesitation. If you're in the ATI camp, get one of their beasts or run three-way cross-fire to eliminate micro-stutter.