Verizon 4G LTE: Two Datacards and a WiFi Hotspot Massively Reviewed

by Brian Klug on April 27, 2011 12:11 AM EST

Posted in: Smartphones, Samsung, Verizon, LTE, 4G, Pantech UML290, USB551L, Mobile, MDM9600

LTE Network Tech Explained

If you look at the evolution of wireless networks from generation to generation, one of the clear generational delimiters that sticks out is how multiplexing schemes have changed. Multiplexing of course defines how multiple users share a slice of spectrum, probably the most important core function of a cellular network. Early 2G networks divided access into time slots (TDMA—Time Division Multiple Access); GSM is the most notable for being entirely TDMA.

3G saw the march onwards to CDMA (Code Division Multiple Access), where each user transmits on the entire 5MHz (WCDMA) or 1.25MHz (CDMA2000) channel, but encodes data atop the spectrum with a unique pseudorandom code. The receiver also has this pseudorandom code and decodes the signal with it, and all other signals look like noise. Decode the signal with each user’s pseudorandom code, and you can share the slice of spectrum with many users. As an aside, Qualcomm initially faced strong criticism and disagreement from GSM proponents when CDMA was first proposed because of how it seems to violate physics. Well, here we are with both 3GPP and 3GPP2 using CDMA in 3G tech.
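To make the code-division idea concrete, here is a minimal sketch of several users sharing one channel at the same time. It is an illustration only, not how any particular 3G air interface is implemented: it swaps the long pseudorandom sequences described above for short Walsh (Hadamard) codes, which are exactly orthogonal and keep the arithmetic exact, and it ignores noise, power control, and timing. The function names and the user bit patterns are made up for the example.

```python
def walsh_codes(order):
    """Build 2**order mutually orthogonal +/-1 codes (Sylvester construction)."""
    codes = [[1]]
    for _ in range(order):
        codes = ([row + row for row in codes]
                 + [row + [-c for c in row] for row in codes])
    return codes


def spread(bits, code):
    """Map each bit to a +/-1 symbol and multiply it by the whole code."""
    chips = []
    for bit in bits:
        symbol = 1 if bit else -1
        chips.extend(symbol * c for c in code)
    return chips


def despread(signal, code):
    """Correlate the received chips against one user's code to recover bits."""
    n = len(code)
    bits = []
    for start in range(0, len(signal), n):
        corr = sum(s * c for s, c in zip(signal[start:start + n], code))
        bits.append(1 if corr > 0 else 0)
    return bits


codes = walsh_codes(3)  # eight codes, each eight chips long

# Hypothetical users, keyed by the index of the code each one was assigned.
user_bits = {1: [1, 0, 1, 1], 2: [0, 0, 1, 0], 5: [1, 1, 0, 1]}

# Everyone transmits at once on the same spectrum; the channel simply adds.
transmissions = [spread(bits, codes[user]) for user, bits in user_bits.items()]
channel = [sum(chips) for chips in zip(*transmissions)]

# Each receiver correlates with its own code; other users cancel out.
recovered = {user: despread(channel, codes[user]) for user in user_bits}
print(recovered)
```

With pseudorandom codes the other users would not cancel perfectly; they would show up as a low level of noise-like interference, which is the behavior the paragraph above describes.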

Regardless, virtually all the real 4G options move to yet another multiplexing scheme called OFDMA (Orthogonal Frequency Division Multiple Access). LTE, WiMAX, and now-defunct UMB (the 3GPP2 4G offering) all use OFDMA on the downlink (or forward link). That’s not to say it’s something super new; 802.11a/g/n use OFDM right now. What OFDMA offers over the other multiplexing schemes is slightly higher spectral efficiency, but more importantly a much easier way to use larger slices of spectrum and different size slices of spectrum: from the 5MHz in WCDMA or 1.25MHz in CDMA2000, to 10, 15, and 20MHz channels.

We could spend a lot of time talking about OFDMA alone, but essentially what you need to know is that OFDMA makes using larger channels much easier from an RF perspective. Engineering similarly large channel size CDMA hardware is much more difficult.

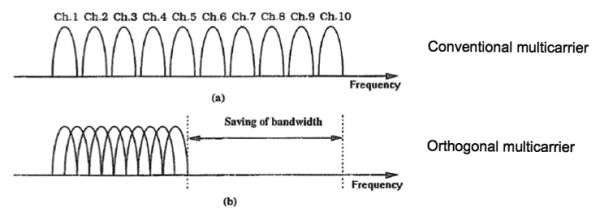

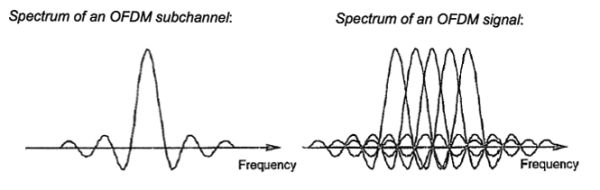

In traditional FDMA, carriers are spaced apart with guard bands large enough to guarantee no inter-carrier interference occurs, and then band-pass filtered. In OFDM, the subcarriers are generated so that inter-carrier interference doesn’t happen. That’s done by picking a symbol duration and placing each subcarrier at an integer multiple of its reciprocal, so every subcarrier completes a whole number of cycles per symbol and adjacent subcarriers differ by exactly one cycle. This relationship guarantees that the overlapping sidebands from each subcarrier have nulls at the center of every other subcarrier. This results in the interference-free OFDM symbol we’re after, and efficient packing of subcarriers. What makes OFDMA awesome is that at the end of the day, all of this can be generated using an IFFT.
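The orthogonality argument is easier to see with numbers. The sketch below is a toy, assuming just eight subcarriers and one QPSK symbol per subcarrier. It uses a deliberately naive inverse DFT rather than a real FFT library, and it leaves out the cyclic prefix, the channel, and noise. All names and the symbol values are hypothetical.

```python
import cmath

N = 8  # number of subcarriers (tiny, for readability)


def idft(freq_bins):
    """Naive inverse DFT: builds the time-domain OFDM symbol."""
    n = len(freq_bins)
    return [sum(x * cmath.exp(2j * cmath.pi * k * t / n)
                for k, x in enumerate(freq_bins)) / n
            for t in range(n)]


def dft(samples):
    """Naive forward DFT: what the receiver does to get the bins back."""
    n = len(samples)
    return [sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                for t, x in enumerate(samples))
            for k in range(n)]


def subcarrier(k, n=N):
    """One symbol period of subcarrier k: exactly k cycles across n samples."""
    return [cmath.exp(2j * cmath.pi * k * t / n) for t in range(n)]


def inner(a, b):
    """Complex inner product over one symbol period."""
    return sum(x * y.conjugate() for x, y in zip(a, b))


# Neighbouring subcarriers differ by one cycle per symbol and are orthogonal.
print(abs(inner(subcarrier(2), subcarrier(3))))  # effectively zero
print(abs(inner(subcarrier(2), subcarrier(2))))  # N

# One QPSK symbol per subcarrier, combined into a single OFDM symbol.
qpsk = [1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j, 1 + 1j, -1 - 1j, 1 - 1j, -1 + 1j]
time_symbol = idft(qpsk)
received = dft(time_symbol)  # each bin comes back without crosstalk
```

Because the subcarriers overlap in frequency but are orthogonal over the symbol period, the receiver's DFT recovers each QPSK value independently, up to floating-point error.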

If that’s a confusing mess, just take away that OFDMA enables very dense packing of subcarriers that data can then be modulated on top of. Each client in the network talks on a specific set of OFDM subcarriers, which are shared among all users on the channel through some pre-arranged hopping pattern. This is opposed to the CDMA scheme, where users encode data across the entire slice of spectrum.

The advantages that OFDMA brings are numerous. If part of the channel suddenly fades or is subject to interference, subcarriers on either side are unaffected and can carry on. User equipment can opportunistically move between subcarriers depending on which have better local propagation characteristics. Even better, each subcarrier can be modulated appropriately for faster performance close to the cell center, and greater link quality at cell edge. That said, there are disadvantages as well: subcarriers need to remain orthogonal at all times or the link will suffer from inter-carrier interference. If frequency offsets aren’t carefully controlled, subcarriers will no longer be orthogonal and will interfere with each other.
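The sensitivity to frequency offset can be sketched the same way. The snippet below measures how much of one subcarrier leaks into its neighbor's bin when the transmitter is off by a fraction of the subcarrier spacing. It is a simplified model that assumes 64 subcarriers, a single tone, and no noise or multipath. The function names and the offsets tried are illustrative, not taken from any specification.

```python
import cmath

N = 64  # subcarriers per symbol (hypothetical)


def tone(k, offset=0.0, n=N):
    """One symbol period of subcarrier k, shifted by `offset` spacings."""
    return [cmath.exp(2j * cmath.pi * (k + offset) * t / n) for t in range(n)]


def leakage(k_tx, k_rx, offset):
    """Share of subcarrier k_tx's amplitude that lands in receiver bin k_rx."""
    tx = tone(k_tx, offset)
    rx = tone(k_rx)
    return abs(sum(a * b.conjugate() for a, b in zip(tx, rx))) / N


# Offset is expressed as a fraction of the subcarrier spacing.
for offset in (0.0, 0.01, 0.05, 0.2):
    print(f"offset {offset:4.2f}: leakage into neighbour = "
          f"{leakage(10, 11, offset):.4f}")
```

With zero offset the neighbor sees nothing; as the offset grows, a growing share of the tone shows up in the adjacent bin, which is the inter-carrier interference described above.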

Again, the real differentiator between evolutionary 3G and true 4G can be boiled down to whether the air interface uses OFDMA as its multiplexing scheme, and thus supports beefy 10 or 20MHz channels. LTE, WiMAX, and UMB all use it. The LTE uplink uses SC-FDMA, which can be thought of as a sort of precoded OFDMA. One area where WiMAX is technically superior to LTE is its use of OFDMA on the uplink, where it in theory offers faster throughput.

There are other important differentiators like MIMO and 64QAM support. HSPA+ also adds optional MIMO (spatial multiplexing) and 64QAM modulation support, but even the fastest HSPA+ incarnation should be differentiated somehow.

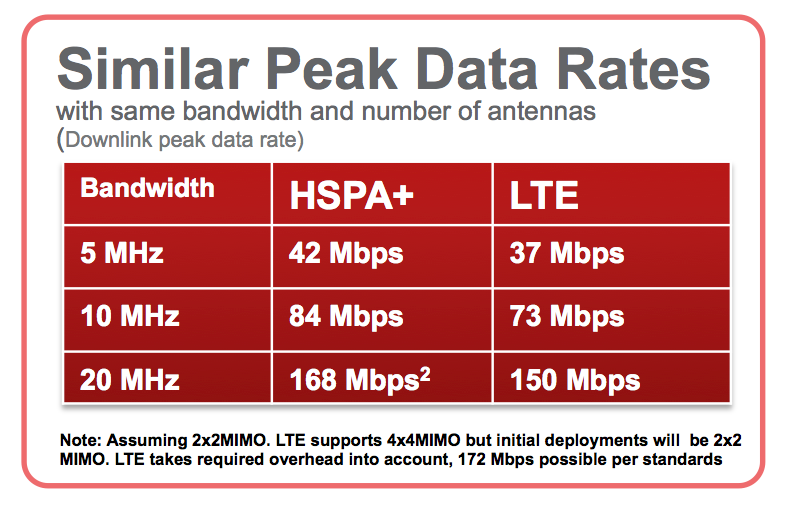

Again, OFDMA doesn’t implicitly equal better spectral efficiency. In fact, with the same higher order modulations, channel size, and MIMO support, OFDMA and CDMA-based systems are relatively similar. The difference is that OFDMA in LTE enables variable channel sizes and much larger ones. This table from Qualcomm says as much:

Keep in mind, the LTE device category here is category 4. Launch LTE devices with MDM9600 are category 3.

LTE heavily leverages MIMO for spatial multiplexing on the downlink, and supports three different modulation schemes: QPSK, 16QAM, and 64QAM. There are a number of different device categories supported with different maximum bitrates. The differences are outlined in the table on the following page, but essentially all the Verizon launch LTE devices are category 2 or 3 per Verizon specifications. Differences between device categories boil down to the multi-antenna scheme supported and internal buffer size. Again, the table shown corresponds to 20MHz channels, while Verizon uses 10MHz channels right now.

One of the limitations of WCDMA UMTS was its requirement of 5MHz channels for operation. LTE mitigates this by allowing a number of different channel sizes: 1.4, 3, 5, 10, 15, and 20MHz are all supported. In addition, the standard supports both time division duplexing (TDD) and frequency division duplexing (FDD) for uplink and downlink. Verizon right now has licenses to the 700MHz Upper C block (band 13) in the US, which is 22MHz of FDD paired spectrum. That works out to 10MHz slices for upstream and downstream, with an odd 1MHz left over on each side whose purpose I’m not entirely certain of.
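For a rough sense of what those channel sizes mean, here is a back-of-the-envelope sketch. It uses the standard LTE numerology as I understand it: 15kHz subcarriers, 12 subcarriers per resource block, and 14 OFDM symbols per millisecond with the normal cyclic prefix. The function names are made up for the example. The resulting bitrates are raw upper bounds that ignore reference signals, control channels, and channel coding, so they are not device-category throughput figures.

```python
SUBCARRIER_SPACING_HZ = 15_000  # LTE subcarrier spacing
SUBCARRIERS_PER_RB = 12         # one resource block = 12 subcarriers (180 kHz)
SYMBOLS_PER_MS = 14             # normal cyclic prefix, per 1 ms subframe

# Resource blocks available for each supported channel size (MHz).
RESOURCE_BLOCKS = {1.4: 6, 3: 15, 5: 25, 10: 50, 15: 75, 20: 100}

BITS_PER_SYMBOL = {"QPSK": 2, "16QAM": 4, "64QAM": 6}


def occupied_mhz(channel_mhz):
    """Spectrum actually filled with subcarriers inside the nominal channel."""
    subcarriers = RESOURCE_BLOCKS[channel_mhz] * SUBCARRIERS_PER_RB
    return subcarriers * SUBCARRIER_SPACING_HZ / 1e6


def raw_peak_mbps(channel_mhz, modulation="64QAM", layers=2):
    """Upper bound: every resource element carries data on every MIMO layer."""
    elements_per_ms = (RESOURCE_BLOCKS[channel_mhz]
                       * SUBCARRIERS_PER_RB * SYMBOLS_PER_MS)
    bits_per_ms = elements_per_ms * BITS_PER_SYMBOL[modulation] * layers
    return bits_per_ms * 1000 / 1e6


for mhz in sorted(RESOURCE_BLOCKS):
    print(f"{mhz:>4} MHz: {occupied_mhz(mhz):5.2f} MHz occupied, "
          f"raw 2x2 64QAM ceiling {raw_peak_mbps(mhz):6.1f} Mbps")
```

For the 10MHz channels Verizon uses, this gives 9MHz of occupied subcarriers and a raw ceiling of about 100Mbps with two spatial streams of 64QAM. Real-world peaks sit well under that once overhead and coding are taken out.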

All of what I’ve described so far is part of LTE’s new air interface, E-UTRAN (Evolved UMTS Terrestrial Radio Access Network). The other half of the picture is the Evolved Packet Core (EPC). The combination of these two forms LTE’s Evolved Packet System. There are a lot of e-for-evolved prefixes floating around inside LTE, and a host of changes.

32 Comments


milan03 - Wednesday, April 27, 2011

With my own testing and research, I've reached and exceeded 50mbps using USB tethered ThunderBolt here in NYC. Latency is also in the 50's and I'm extremely happy with the performance.

Here are a few videos I've made:

http://www.youtube.com/watch?v=aVC10FMD8kg

http://www.youtube.com/watch?v=ccM_rbfVGDU

http://www.youtube.com/watch?v=YYVfZbmv34U

Brian Klug - Wednesday, April 27, 2011

Wow, 50 Mbps is impressive! I've yet to see anywhere near that - highest was around 39 Mbps for me in Phoenix.

-Brian

jigglywiggly - Wednesday, April 27, 2011

WHY IS THIS FASTER THAN MY WIRED DOCSIS CONNECTIONAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAaaaaa

ViRGE - Wednesday, April 27, 2011

Don't worry. Once more than a handful of people are using LTE it won't be...

Shared services are great until you have to start sharing them. And there's no sharing quite like sharing a limited RF spectrum.

milan03 - Wednesday, April 27, 2011

Why should anyone be worried? You sound pissed...

quiksilvr - Wednesday, April 27, 2011

I'm pretty sure if you pay $50-$80 a month on it you will exceed it...unless on a barrel with Time Warner unzipping...

Crazymech - Wednesday, April 27, 2011

Man.. This is kinda.. Embarrassing, really!

I remember thinking "Pff.. Yea, those speeds? Riiiight" like a year, or one and a half year ago.

This is faster than my fiber connection! And it's wireless, and on a cellphone!..

What's next.. Amazing battery tech that's not "3 to 5 years" away?

Well done, LTE, I'm in awe.

Shadowmaster625 - Wednesday, April 27, 2011

What's next? Cancer for everyone. Yay I can't wait.

J_Tarasovic - Friday, May 13, 2011

I am stoked about LTE as well. It really "grinds my gears" that both AT&T and T-Mobile are calling HSPA+ "4G" but I guess that is life, right?

I am really waiting for MDM9600 based miniPCI-e WWAN cards. Any idea on this Brian?

Lord 666 - Wednesday, April 27, 2011

The attention to detail is appreciated along with the scope of products tested.

Completely agreed about the speed and performance numbers as I have all three; the SCH-LC11 was the best balance, followed by Thunderbolt, and then the Pantech 290 (fastest but limited to USB connection).