ZFS - Building, Testing, and Benchmarking

by Matt Breitbach on October 5, 2010 4:33 PM EST - Posted in

- IT Computing

- Linux

- NAS

- Nexenta

- ZFS

Benchmarks

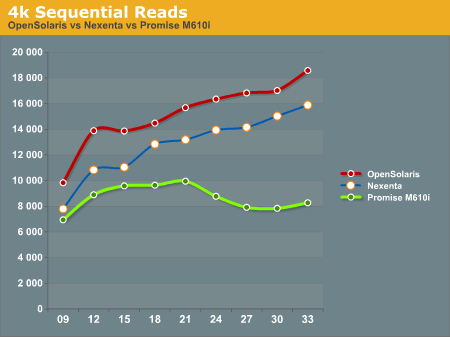

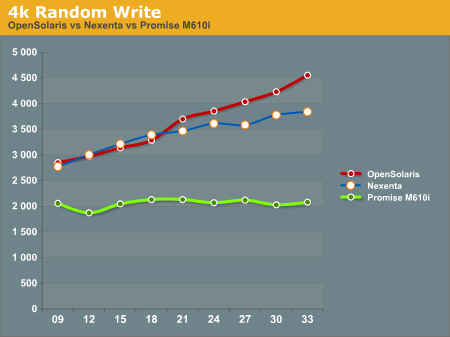

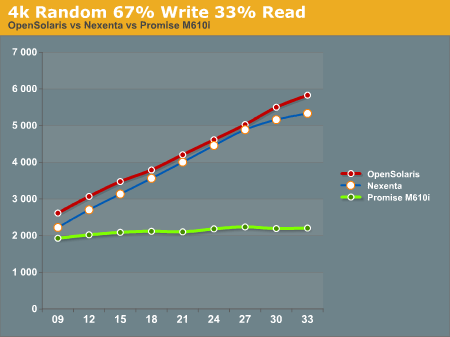

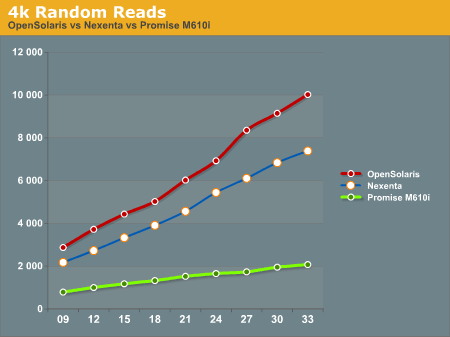

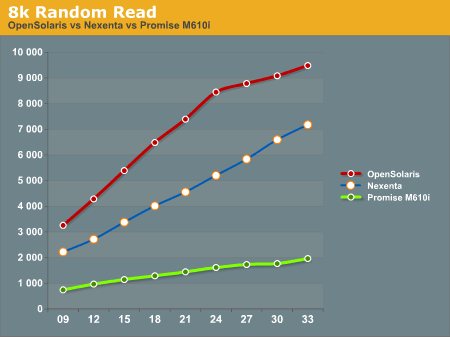

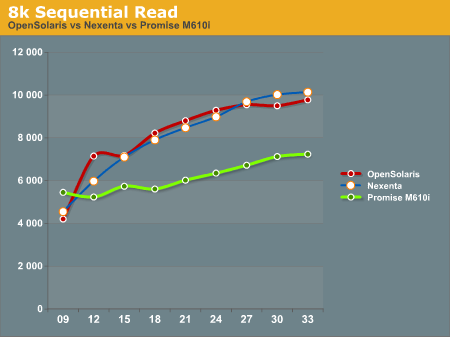

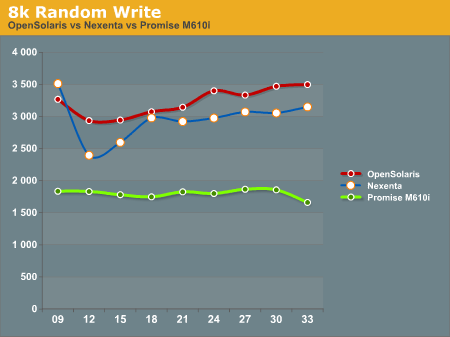

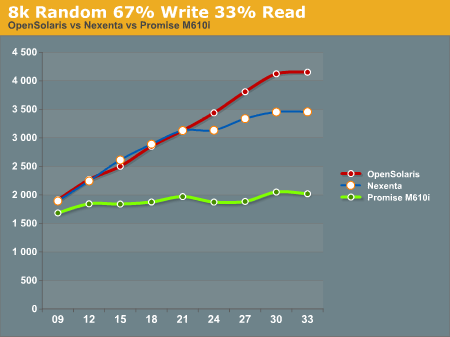

After running our tests on the ZFS system (under both Nexenta and OpenSolaris) and on the Promise M610i, we came up with the following results. All graphs show IOPS on the Y-axis and disk queue length on the X-axis.
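When reading these graphs, it helps to recall Little's Law, which ties the plotted quantities together: average outstanding I/Os (the queue depth) equals IOPS multiplied by average latency. The minimal Python sketch below uses made-up latencies, not anything measured in this article. It shows that a line which keeps climbing with queue depth means latency is holding steady, while a flat line means each extra queued request is simply adding waiting time.

```python
def iops_from_littles_law(outstanding_ios: float, avg_latency_s: float) -> float:
    """Little's Law rearranged: IOPS = outstanding I/Os / average latency (seconds)."""
    if avg_latency_s <= 0:
        raise ValueError("latency must be positive")
    return outstanding_ios / avg_latency_s


QUEUE_DEPTHS = (1, 4, 16, 32)

# Hypothetical numbers, not measurements from this article.
# Device A keeps a steady 5 ms latency as the queue grows (e.g. served from cache).
steady = {qd: iops_from_littles_law(qd, 0.005) for qd in QUEUE_DEPTHS}
# Device B is saturated: every extra queued request adds 5 ms of waiting.
saturated = {qd: iops_from_littles_law(qd, 0.005 * qd) for qd in QUEUE_DEPTHS}

for qd in QUEUE_DEPTHS:
    print(f"QD {qd:>2}: steady latency {steady[qd]:>6.0f} IOPS, saturated {saturated[qd]:>4.0f} IOPS")
```

The saturated device stays at 200 IOPS no matter how deep the queue gets, which is the shape of a "flat lined" curve.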

In the 4k Sequential Read test, both the OpenSolaris and Nexenta systems outperform the Promise M610i by a significant margin as the disk queue increases. This is a direct effect of the L2ARC cache. Interestingly, the OpenSolaris and Nexenta systems seem to trend identically, but the Nexenta system is measurably slower than the OpenSolaris system. We are unsure why this is, as they run on the same hardware and the build of Nexenta we ran was based on the same build of OpenSolaris that we tested. We contacted Nexenta about this performance gap, but they had no explanation. One hypothesis is that the Nexenta software uses more memory for things like the web GUI, leaving less ARC available to the Nexenta solution than to a plain OpenSolaris installation.
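The memory hypothesis can be illustrated with a toy cache simulation. The sketch below replays one hypothetical, skewed block trace through caches of different sizes. It uses a plain LRU policy as a stand-in: the real ZFS ARC is an Adaptive Replacement Cache with a different eviction policy, and the trace and cache sizes here are invented. Because LRU never does worse with more capacity on the same trace, the sketch shows how losing some RAM to other software could lower the hit ratio and push more reads out to the L2ARC and disks.

```python
import random
from collections import OrderedDict


def lru_hit_rate(trace, capacity):
    """Replay a block-address trace through a plain LRU cache; return the hit ratio."""
    cache = OrderedDict()
    hits = 0
    for block in trace:
        if block in cache:
            hits += 1
            cache.move_to_end(block)        # refresh: now most recently used
        else:
            cache[block] = None
            if len(cache) > capacity:
                cache.popitem(last=False)   # evict the least recently used block
    return hits / len(trace)


# Hypothetical skewed read trace: 80% of reads go to a 1,000-block hot set,
# the rest are scattered over 100,000 blocks.
rng = random.Random(42)
trace = [
    rng.randrange(1_000) if rng.random() < 0.8 else rng.randrange(100_000)
    for _ in range(50_000)
]

ratios = {capacity: lru_hit_rate(trace, capacity) for capacity in (500, 800, 1_200)}
for capacity, ratio in ratios.items():
    print(f"cache of {capacity:>5} blocks: hit ratio {ratio:.1%}")
```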

In the 4k Random Write test, the OpenSolaris and Nexenta systems again come out ahead of the Promise M610i. The Promise box is nearly flat, an indicator that it reaches the limits of its hardware quite quickly. The OpenSolaris and Nexenta systems write faster as the disk queue increases, which suggests they are better at re-ordering data to make the writes more sequential on the disks.
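One way to picture why a deeper queue helps random writes: with more requests outstanding, a storage stack can service them in address order rather than arrival order. The sketch below is a generic elevator-style illustration, not ZFS's actual write path (ZFS batches writes into transaction groups and, being copy-on-write, picks new on-disk locations for them). The offsets are hypothetical, and head travel is only a crude proxy that ignores rotational latency.

```python
import random


def head_travel(start, order):
    """Return (total distance moved, final position) when servicing addresses in this order."""
    travel, pos = 0, start
    for lba in order:
        travel += abs(lba - pos)
        pos = lba
    return travel, pos


def sweep_order(pos, batch):
    """Elevator-style order: sort the batch, then sweep starting from the nearer end."""
    ordered = sorted(batch)
    if abs(pos - ordered[-1]) < abs(pos - ordered[0]):
        ordered.reverse()
    return ordered


def travel_per_io(trace, queue_depth, reorder):
    """Average head movement per I/O when the trace is issued queue_depth requests at a time."""
    pos, total = 0, 0
    for i in range(0, len(trace), queue_depth):
        batch = trace[i:i + queue_depth]
        order = sweep_order(pos, batch) if reorder else batch
        moved, pos = head_travel(pos, order)
        total += moved
    return total / len(trace)


# Hypothetical random write offsets (in blocks) on a 1,000,000-block disk.
rng = random.Random(7)
trace = [rng.randrange(1_000_000) for _ in range(4_096)]

results = {}
for qd in (1, 4, 16, 32):
    results[qd] = (travel_per_io(trace, qd, reorder=False), travel_per_io(trace, qd, reorder=True))
    fifo, swept = results[qd]
    print(f"QD {qd:>2}: arrival order {fifo:>9,.0f}, reordered {swept:>9,.0f} blocks of travel per write")
```

At a queue depth of 1 there is nothing to reorder, so both columns match; the gap opens up as the queue deepens.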

The 4k 67% Write 33% Read test again gives the edge to the OpenSolaris and Nexenta systems, while the Promise M610i is nearly flat. This is most likely a result of both write re-ordering and very effective L2ARC caching.
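Each test name in this section combines three parameters: block size, read/write mix, and random versus sequential access. A minimal sketch of a request generator for such a pattern follows. The `AccessSpec` and `generate_ios` names are hypothetical, and this is not the benchmark tool used to produce these results.

```python
import random
from dataclasses import dataclass


@dataclass
class AccessSpec:
    """Hypothetical description of one test pattern, e.g. '4k Random 67% Write 33% Read'."""
    block_size: int      # bytes per I/O
    write_pct: float     # percentage of I/Os that are writes (0-100)
    random_pct: float    # percentage of I/Os sent to a random offset (0-100)


def generate_ios(spec, span_bytes, count, seed=0):
    """Yield (op, byte_offset) pairs; non-random I/Os continue from the previous offset."""
    rng = random.Random(seed)
    blocks = span_bytes // spec.block_size
    next_block = 0
    for _ in range(count):
        op = "write" if rng.random() * 100 < spec.write_pct else "read"
        if rng.random() * 100 < spec.random_pct:
            next_block = rng.randrange(blocks)
        yield op, next_block * spec.block_size
        next_block = (next_block + 1) % blocks


mixed = AccessSpec(block_size=4096, write_pct=67, random_pct=100)
ios = list(generate_ios(mixed, span_bytes=1 << 30, count=10_000, seed=1))
write_share = sum(op == "write" for op, _ in ios) / len(ios)
print(f"{write_share:.1%} writes, first offsets: {[off for _, off in ios[:3]]}")
```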

4k Random Reads again come out in favor of the OpenSolaris and Nexenta systems. While the Promise M610i does increase its performance as the disk queue increases, it's nowhere near the levels of performance that the OpenSolaris and Nexenta systems can deliver with their L2ARC caching.

8k Random Reads indicate a similar trend to the 4k Random Reads with the OpenSolaris and Nexenta systems outperforming the Promise M610i. Again, we see the OpenSolaris and Nexenta systems trending very similarly but with the OpenSolaris system significantly outperforming the Nexenta system.

8k Sequential reads have the OpenSolaris and Nexenta systems trailing at the first data point, and then running away from the Promise M610i at higher disk queues. It's interesting to note that the Nexenta system outperforms the OpenSolaris system at several of the data points in this test.

8k Random writes play out like most of the other tests we've seen with the OpenSolaris and Nexenta systems taking top honors, with the Promise M610i trailing. Again, OpenSolaris beats out Nexenta on the same hardware.

8k Random 67% Write 33% Read again favors the OpenSolaris and Nexenta systems, with the Promise M610i trailing. While the OpenSolaris and Nexenta systems start off nearly identical for the first 5 data points, at a disk queue of 24 or higher the OpenSolaris system steals the show.

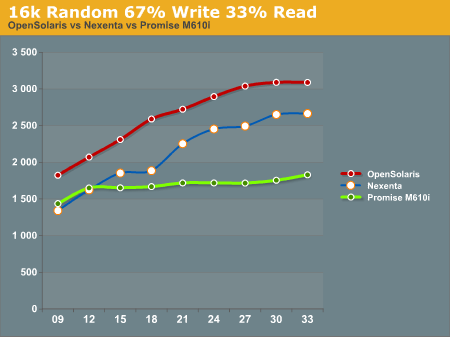

16k Random 67% Write 33% Read gives us a familiar picture. OpenSolaris and Nexenta both soundly beat the Promise M610i at higher disk queue depths. Again we see the OpenSolaris and Nexenta systems trending nearly identically, with the OpenSolaris system outperforming the Nexenta system at all data points.

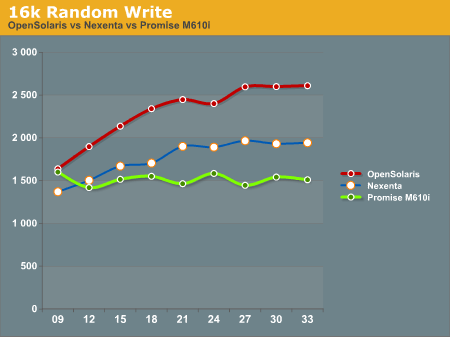

16k Random write shows the Promise M610i starting off faster than the Nexenta system and nearly on par with the OpenSolaris system, but quickly flattening out. The Nexenta box again trends higher, but cannot keep up with the OpenSolaris system.

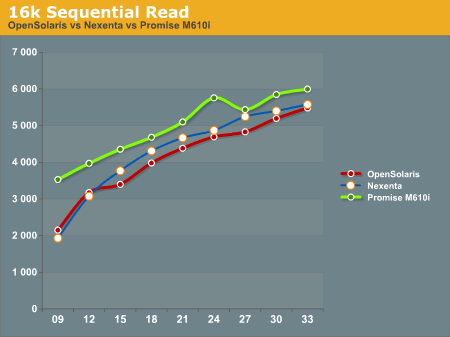

The 16k Sequential Read test is the first test in which the Promise M610i outperforms OpenSolaris and Nexenta at all data points. The OpenSolaris and Nexenta systems both trend upwards at the same rate, but cannot catch the M610i.

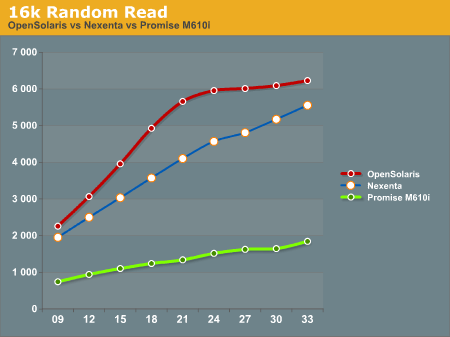

The 16k Random Read test goes back to the same pattern that we've been seeing, with the OpenSolaris and Nexenta systems running away from the Promise M610i. Again we see the OpenSolaris system take top honors with the Nexenta system trending similarly, but never reaching the performance metrics seen on the OpenSolaris system.

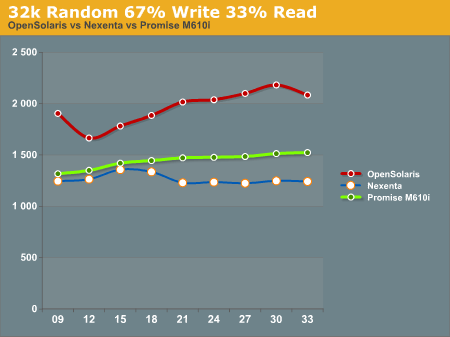

32k Random 67% Write 33% Read has the OpenSolaris system on top, the Promise M610i in second place, and the Nexenta system trailing everything. We're not really sure what to make of this, as we expected the Nexenta system to follow the same patterns we had seen before.

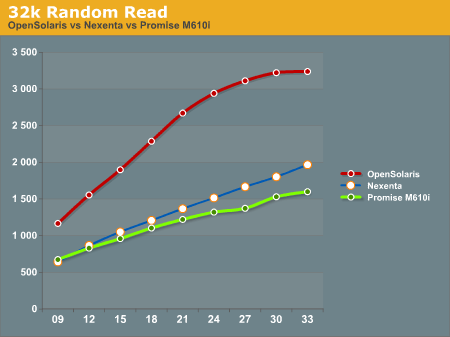

32k Random Read has the OpenSolaris system running away from everything else. On this test the Nexenta system and the Promise M610i are very similar, with the Nexenta system edging out the Promise M610i at the highest queue depths.

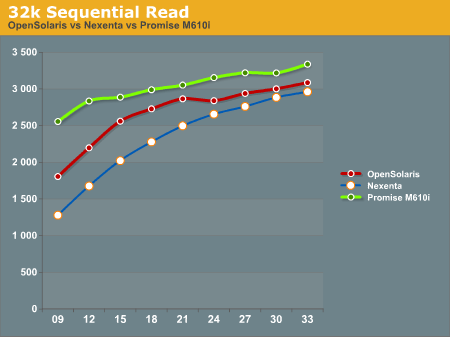

32k Sequential Reads proved to be a strong point for the Promise M610i. It outperformed the OpenSolaris and Nexenta systems at all data points. Clearly there is something in the Promise M610i that helps it excel at 32k Sequential Reads.

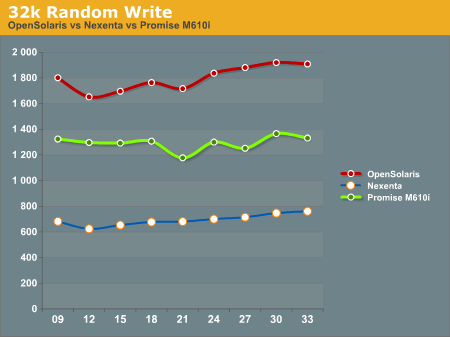

32k Random Writes have the OpenSolaris system on top again, with the Promise M610i in second place and the Nexenta system trailing far behind. All three systems trend similarly, with small dips and rises, but never move far from their initial readings.

After all the tests were done, we had to sit down and take a hard look at the results and try to formulate some ideas about how to interpret this data. We will discuss this in our conclusion.

102 Comments

diamondsw2 - Tuesday, October 5, 2010

You're not doing your readers any favors by conflating the terms NAS and SAN. NAS devices (such as what you've described here) are Network Attached Storage, accessed over Ethernet, and usually via fileshares (NFS, CIFS, even AFP) with file-level access. SAN is Storage Area Network, nearly always implemented with Fibre Channel, and offers block-level access. About the only gray area is that iSCSI allows block-level access to a NAS, but that doesn't magically turn it into a SAN with a storage fabric.

Honestly, given the problems I've seen with NAS devices and the burden a well-designed one will put on a switch backplane, I just don't see the point for anything outside the smallest installations where the storage is tied to a handful of servers. By the time you have a NAS set up *well* you're inevitably going to start taxing your switches, which leads to setting up dedicated storage switches, which means... you might as well have set up a real SAN with 8Gbps fibre channel and been done with it.

NAS is great for home use - no special hardware and cabling, and options as cheap as you want to go - but it's a pretty poor way to handle centralized storage in the datacenter.

cdillon - Tuesday, October 5, 2010

The terms NAS and SAN have become rightfully mixed, because modern storage appliances can do the jobs of both. Add some FC HBAs to the above ZFS storage system and create some FC Targets using Comstar in OpenSolaris or Nexenta and guess what? You've got a "SAN" box. Nexenta can even do active/active failover and everything else that makes it worthy of being called a true "Enterprise SAN" solution.

I like our FC SAN here, but holy cow is it expensive, and it's not getting any cheaper as time goes on. I foresee iSCSI via plain 10G Ethernet and also FCoE (which is 10G Ethernet + FC sharing the same physical HBA and data link) completely taking over the Fibre Channel market within the next decade, which will only serve to completely erase the line between "NAS" and "SAN".

mbreitba - Tuesday, October 5, 2010

The systems as configured in this article are block-level storage devices accessed over a gigabit network using iSCSI. I would strongly consider that a SAN device rather than a NAS device. Also, the storage network is already segregated onto a separate network, isolated from the primary network.

We also backed this device with 20Gbps InfiniBand, but had issues getting the IB network stable, so we did not include it in the article.
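Since these systems were tested over gigabit iSCSI, the link's own ceiling is worth keeping in mind when comparing block sizes. The sketch below is simple arithmetic on the nominal 1 Gb/s line rate, assuming a single link; real Ethernet, TCP/IP and iSCSI overhead would push the practical ceiling lower.

```python
def link_iops_ceiling(link_bits_per_s: float, block_size_bytes: int) -> float:
    """Most I/Os per second a link could carry at this block size, ignoring all protocol overhead."""
    return link_bits_per_s / 8 / block_size_bytes


GIGABIT = 1_000_000_000  # nominal 1 Gb/s line rate

ceilings = {kib: link_iops_ceiling(GIGABIT, kib * 1024) for kib in (4, 8, 16, 32)}
for kib, ceiling in ceilings.items():
    print(f"{kib:>2} KiB blocks: at most {ceiling:>6,.0f} IOPS over one 1 Gb/s link")
```

Because the ceiling is inversely proportional to block size, a single gigabit link leaves eight times less IOPS headroom at 32k than at 4k.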

Maveric007 - Tuesday, October 5, 2010

I find iSCSI is closer to a NAS than a SAN, to be honest. The performance gap between iSCSI and SAN is much wider than the gap between iSCSI and NAS.

Mattbreitbach - Tuesday, October 5, 2010

iSCSI is block-based storage; NAS is file-based. The transport used is irrelevant. We could run iSCSI over 10GbE or over InfiniBand, which would increase performance significantly and probably exceed what the most expensive 8Gb FC offers.

mino - Tuesday, October 5, 2010

You are confusing the NAS vs. SAN terminology with the interconnect terminology, and vice versa.

SAN, NAS, DAS ... are abstract descriptions of how a data client accesses the stored data.

- Network Attached Storage (NAS), by definition, is a file/entity-based data storage solution.
  - It is _usually, but not necessarily,_ connected to a general-purpose data network.
- Storage Area Network (SAN), by definition, is a block-access-based data storage solution.
  - It is _usually, but not necessarily,_ THE dedicated data network.

Ethernet, FC, InfiniBand, ... are physical data conduits; they define which PERFORMANCE class a solution belongs to.

iSCSI, SAS, FC, NFS, CIFS ... are logical conduits; they define which FEATURE CLASS a solution belongs to.

Today, most storage appliances allow multiple ways to access the data, many of them simultaneously.

Therefore, presently:

Calling a storage appliance, of whatever type, a "SAN" is pure jargon.

- It has nothing to do with the device "being" a SAN per se

Calling an appliance, of whatever type, a "NAS" means it is/will be used in the NAS role.

- It has nothing to do with the device "being" a NAS per se.
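The block-versus-file distinction drawn in this thread can be made concrete with a toy model. In the sketch below, whose class names are hypothetical and do not mirror any real protocol API, a SAN-style client reads and writes numbered fixed-size blocks and its own filesystem decides what they mean, while a NAS-style client asks the server for named files and the server owns the on-disk layout. Real iSCSI carries SCSI commands and real NAS traffic uses protocols such as NFS or CIFS; neither is modeled here.

```python
class BlockDevice:
    """SAN-style access: the client reads and writes numbered, fixed-size blocks (LBAs)."""

    def __init__(self, num_blocks: int, block_size: int = 4096):
        self.num_blocks = num_blocks
        self.block_size = block_size
        self._data = bytearray(num_blocks * block_size)

    def _span(self, lba: int) -> slice:
        if not 0 <= lba < self.num_blocks:
            raise IndexError(f"LBA {lba} is outside the device")
        start = lba * self.block_size
        return slice(start, start + self.block_size)

    def write_block(self, lba: int, payload: bytes) -> None:
        if len(payload) != self.block_size:
            raise ValueError("writes must cover exactly one block")
        self._data[self._span(lba)] = payload

    def read_block(self, lba: int) -> bytes:
        return bytes(self._data[self._span(lba)])


class FileShare:
    """NAS-style access: the client names a file; the server decides where its bytes live."""

    def __init__(self):
        self._files = {}  # path -> file contents

    def write_file(self, path: str, content: bytes) -> None:
        self._files[path] = bytes(content)

    def read_file(self, path: str) -> bytes:
        return self._files[path]


lun = BlockDevice(num_blocks=16)
lun.write_block(3, b"x" * 4096)                 # what block 3 means is up to the client's filesystem
share = FileShare()
share.write_file("/reports/q3.txt", b"hello")   # where the bytes land is up to the server
print(lun.read_block(3)[:4], share.read_file("/reports/q3.txt"))
```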

mkruer - Tuesday, October 5, 2010

I think there needs to be a new term called SANNAS, or snaz, short for snazzy.

mmrezaie - Wednesday, October 6, 2010

Thanks, I learned a lot.

signal-lost - Friday, October 8, 2010

Depends on the hardware, sir.

My iSCSI DataCore SAN pushes 20k IOPS for the same reason their ZFS system does (RAM caching).

Fibre Channel SANs will always outperform iSCSI run over crappy switching.

Currently Fibre Channel maxes out at 8Gbps in most arrays. Even with MPIO, you're better off with an iSCSI system and 10/40Gbps Ethernet if you do it right. It's much cheaper, and you don't have to learn an entirely new networking model (Fibre Channel or InfiniBand).

MGSsancho - Tuesday, October 5, 2010

While it's technically a SAN, you can easily make it a NAS with a simple `zfs set sharesmb=on`, as I am sure you are aware.