Beginnings of the Holodeck: AMD's DX11 GPU, Eyefinity and 6 Display Outputs

by Anand Lal Shimpi on September 10, 2009 2:30 PM EST - Posted in GPUs

Wanna see what 24.5 million pixels looks like?

That's six Dell 30" displays, each with an individual resolution of 2560 x 1600. The game is World of Warcraft, and the man crouched in front of the setup is Carrell Killebrew; his name may sound familiar.

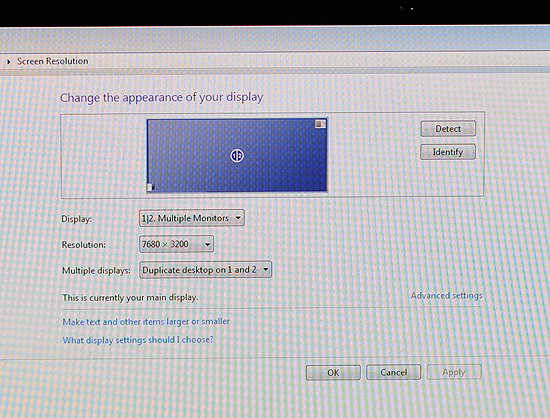

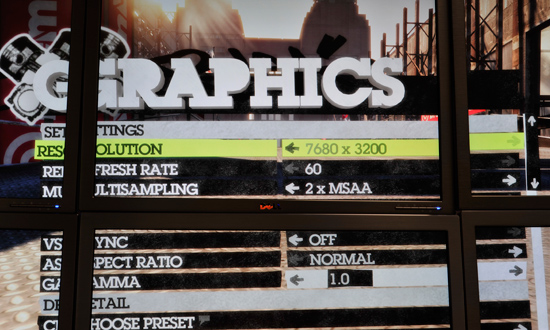

Driving all of this is AMD's next-generation GPU, which will be announced later this month. I didn't leave out any letters: a single GPU is driving all of these panels. The actual rendering resolution is 7680 x 3200, and WoW ran at over 80 fps with the details maxed. This is the successor to the RV770. We can't talk specs yet, but at today's AMD press conference two details were made public: 2.15 billion transistors and over 2.5 TFLOPS of performance. That's as expected, but nice to know regardless.
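As a quick sanity check on the numbers above, the short Python sketch below multiplies out the six 2560 x 1600 panels and the roughly 80 fps WoW result. It only counts pixels that end up on screen; real shading work per frame (overdraw, antialiasing, post-processing) is higher and is not modeled here.

```python
# Six Dell 30" panels, each 2560 x 1600 (figures from the article).
PANELS = 6
PANEL_W, PANEL_H = 2560, 1600

total_pixels = PANELS * PANEL_W * PANEL_H
print(f"{total_pixels:,} pixels")  # 24,576,000 pixels

# Arranged 3 wide by 2 tall, the rendered surface is 7680 x 3200,
# which accounts for exactly the same number of pixels.
assert (3 * PANEL_W) * (2 * PANEL_H) == total_pixels

# Displayed pixels per second at the ~80 fps quoted for WoW
# (a lower bound on real GPU work, since overdraw is ignored).
FPS = 80
print(f"{total_pixels * FPS / 1e9:.2f} billion pixels per second")  # 1.97 billion pixels per second
```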

The technology being demonstrated here is called Eyefinity and it actually all started in notebooks.

Not Multi-Monitor, but Single Large Surface

DisplayPort is gaining popularity. It's a very simple interface and you can expect to see mini-DisplayPort on notebooks and desktops alike in the very near future. Apple was the first to embrace it but others will follow.

The OEMs asked AMD for six possible DisplayPort outputs from their notebook GPUs: up to two internally for notebook panels, up to two externally for connectors on the side of the notebook and up to two for use via a docking station. To fulfill these needs AMD had to build 6 lanes of DisplayPort outputs into its GPUs, all driven by a single display engine. That single display engine could drive any two of the outputs, similar to how graphics cards work today.

Eventually someone looked at all of the outputs and realized that without too much effort you could drive six displays from a single card; you just needed more display engines on the chip. AMD's DX11 GPU family does just that.

At the bare minimum, the lowest end AMD DX11 GPU can support up to 3 displays. At the high end? A single GPU will be able to drive up to 6 displays.

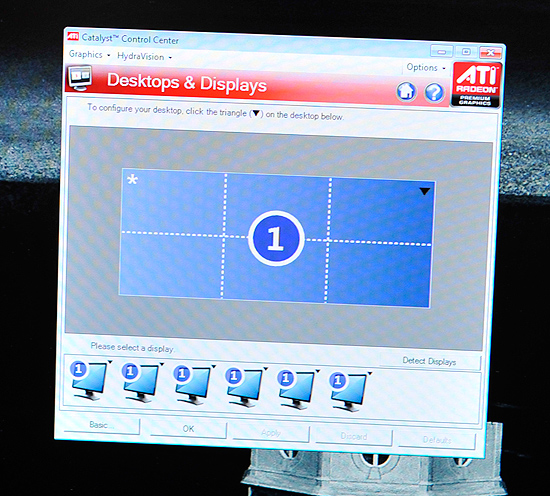

AMD's software makes the displays appear as one. This will work in Vista, Windows 7 and Linux.

The software layer makes it all seamless. The displays appear independent until you turn on SLS (Single Large Surface) mode. When it's on, they appear to Windows and its applications as one large, high-resolution display. There's no multi-monitor mess to deal with; it just works. This is the way to do multi-monitor, both for work and for games.
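To make the "one large display" idea concrete, here is a minimal Python sketch of how a pixel coordinate on a single large surface could map back to the physical panel that shows it. This is purely an illustration of the concept, not AMD's driver logic; the `locate` function and `PanelPoint` type are hypothetical, and the sketch assumes identical panels in a regular grid with no bezel compensation.

```python
from typing import NamedTuple


class PanelPoint(NamedTuple):
    panel: int  # row-major panel index, 0 = top-left
    x: int      # column within that panel
    y: int      # row within that panel


def locate(x: int, y: int, cols: int, rows: int, panel_w: int, panel_h: int) -> PanelPoint:
    """Map a pixel on the single large surface to the panel that displays it.

    Hypothetical illustration: identical panels in a cols x rows grid,
    no bezel compensation.
    """
    if not (0 <= x < cols * panel_w and 0 <= y < rows * panel_h):
        raise ValueError("point lies outside the large surface")
    col, local_x = divmod(x, panel_w)
    row, local_y = divmod(y, panel_h)
    return PanelPoint(row * cols + col, local_x, local_y)


# A 3x2 wall of 2560 x 1600 panels, i.e. a 7680 x 3200 surface.
print(locate(0, 0, 3, 2, 2560, 1600))        # PanelPoint(panel=0, x=0, y=0)
print(locate(3000, 2000, 3, 2, 2560, 1600))  # PanelPoint(panel=4, x=440, y=400)
print(locate(7679, 3199, 3, 2, 2560, 1600))  # PanelPoint(panel=5, x=2559, y=1599)
```

Applications only ever see the 7680 x 3200 coordinates; splitting them across panels happens below the application, which is why SLS needs no per-game multi-monitor support.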

Note the desktop resolution of the 3x2 display setup

I played Dirt 2, a DX11 title, at 7680 x 3200 and saw definitely playable frame rates. I played Left 4 Dead and the experience was much better. Obviously this new GPU is powerful, although I wouldn't expect it to run everything at super-high frame rates at 7680 x 3200.

Left 4 Dead in a 3 monitor configuration, 7680 x 1600

If a game pulls its resolution list from Windows, it'll work perfectly with Eyefinity.

With six 30" panels you're looking at several thousand dollars' worth of displays. That was never the ultimate intention of Eyefinity, despite its overwhelming sweetness. Instead, the idea was to give gamers (and others in need of a single, high-resolution display) the ability to piece together a display that offers more resolution and is more immersive than anything on the market today. The idea isn't to pick up six 30" displays, but perhaps to add a third 20" panel to your existing setup, or to buy five $150 displays to build the ultimate gaming setup. Even using 1680 x 1050 displays in a 5x1 arrangement (apparently ideal for first-person shooters, since you get a nice wraparound effect) still nets you an 8400 x 1050 display. If you want more vertical real estate, switch over to a 3x2 setup and you're at 5040 x 2100. That's more resolution for less money than most high-end 30" panels cost.
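The resolutions quoted above follow from simple multiplication: columns times panel width, rows times panel height. The Python sketch below (with a hypothetical `grid_resolution` helper, ignoring bezels) compares the two 1680 x 1050 arrangements against a single 2560 x 1600 30" panel by total megapixels. It deliberately says nothing about prices beyond what the article states.

```python
def grid_resolution(cols: int, rows: int, panel_w: int, panel_h: int) -> tuple[int, int]:
    """Resolution of a cols x rows grid of identical panels (bezels ignored)."""
    return cols * panel_w, rows * panel_h


setups = {
    "5x1 of 1680 x 1050": grid_resolution(5, 1, 1680, 1050),
    "3x2 of 1680 x 1050": grid_resolution(3, 2, 1680, 1050),
    'single 30" 2560 x 1600': (2560, 1600),
}

for name, (w, h) in setups.items():
    # Megapixels make the comparison with a single 30" panel easy to read.
    print(f"{name}: {w} x {h} ({w * h / 1e6:.2f} MP)")

# 5x1 of 1680 x 1050: 8400 x 1050 (8.82 MP)
# 3x2 of 1680 x 1050: 5040 x 2100 (10.58 MP)
# single 30" 2560 x 1600: 2560 x 1600 (4.10 MP)
```

Either 1680 x 1050 arrangement carries more than twice the pixels of one 30" panel, which is the point the paragraph makes.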


Any configuration is supported, and you can even group displays together. So you could turn a set of six displays into a group of four and a group of two.
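The grouping idea can be sketched in a few lines of Python. This is a hypothetical model, not AMD's configuration software: each group is assumed to be a rectangular grid of identical panels that acts as its own large surface, and the only constraint checked is the six-display ceiling the article gives for the high-end part. The `Group` class, the `plan` function and the group names are illustrative inventions.

```python
from dataclasses import dataclass

MAX_DISPLAYS = 6  # high-end AMD DX11 GPU, per the article


@dataclass
class Group:
    name: str      # hypothetical label for the surface
    cols: int
    rows: int
    panel_w: int
    panel_h: int

    @property
    def displays(self) -> int:
        return self.cols * self.rows

    @property
    def resolution(self) -> tuple[int, int]:
        return self.cols * self.panel_w, self.rows * self.panel_h


def plan(groups: list[Group]) -> dict[str, tuple[int, int]]:
    """Check the display budget and return each group's surface resolution."""
    used = sum(g.displays for g in groups)
    if used > MAX_DISPLAYS:
        raise ValueError(f"{used} displays requested; the card drives at most {MAX_DISPLAYS}")
    return {g.name: g.resolution for g in groups}


# Six 2560 x 1600 panels split into a 2x2 group of four and a 2x1 group of two.
layout = plan([
    Group("game", cols=2, rows=2, panel_w=2560, panel_h=1600),
    Group("desktop", cols=2, rows=1, panel_w=2560, panel_h=1600),
])
print(layout)  # {'game': (5120, 3200), 'desktop': (5120, 1600)}
```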

It all just seems to work, which is arguably the most impressive part of it all. AMD has partnered up with at least one display manufacturer to sell displays with thinner bezels and without distracting LEDs on the front:

A render of what the Samsung Eyefinity optimized displays will look like

We can expect brackets and support from more monitor makers in the future. Building a wall of displays isn't exactly easy.

137 Comments

Deke2009 - Sunday, September 20, 2009

will we be able to align displays similar to Matrox's TripleHead2Go?

TheOtherMrSmith - Saturday, September 19, 2009

If you want to see a really interesting monitor design that could really benefit from this new video card tech, check out NEC's new goods:

- 42.8" diagonal
- 2880 x 900
- 0.02 ms response time
- 12-bit color range

http://www.homotron.net/2009/01/macworld_2009_nec_...

This thing is really amazing!

Deke2009 - Sunday, September 20, 2009

woohoo 900 vertical res, what a piece of crap

vol7ron - Tuesday, September 15, 2009

Once man can harness the capability of rearranging matter (elements, protons, neutrons, etc.), this graphical hologram will be a thing of the past. All that we'll need to do is provide existing matter, and the technology will be able to break it down and reconfigure it into another molecular structure, thus changing trash into food.

In coordination with that, the real holodeck will do the same thing, but instead of making food, it'll make people and inanimate objects. However, a computer program will track what it's made so that it can't "hurt" real humans.

That is the wave of the future, which may very well be possible with metaphysics.

JonnyDough - Monday, September 14, 2009

Samsung was a great choice. I <3 AMD.

I wonder what the power draw on the entire system, including monitors was. There's a gray fuse box right next to the monitors...yikes!

AnnonymousCoward - Monday, September 14, 2009

Here's your damn holodeck. 6-sided fully enclosed cube, 16.8MP per wall, in 3D. http://www.vrac.iastate.edu/c6.php I've been in it.

I'm surprised there's no mention of HDR. That's what's required for images to be indistinguishable from reality. Without HDR, all the new game engines, megapixels, and multi-monitors won't get you there.

ProDigit - Saturday, September 12, 2009

I think instead of focusing on millions upon millions of pixels that the eye will not be able to perceive, why not focus on widescreen, high-resolution glasses? You would see a single image (it does not even need to be 3D) through the glasses, and when you rotate your head, the display rotates too (while the controller, mouse or keyboard controls the direction the in-game character faces).

Why spend precious resources on:

- First, pixels we're not able to perceive with the eye

- Second, when we focus to the right with our eyes, why render high-quality images on the left monitors (when we're not able to see them anyway)?

Makes more sense to go for glasses, or some kind of sensor on the player's head that tells the PC where to focus its graphics.

Images shown in the corner of the eye don't need the highest quality, because we can't perceive images in the corners as well as where we focus our eyes.

Second of all, it would make more sense to spend time researching which monitor is the ultimate gaming monitor.

The ultimate gaming monitor depends on how far you're away from the monitor.

For instance, a 1920x1080 screen might be perfect as a 22" monitor on a desk 2 feet away, while that same resolution might suit an 80" screen 10 feet away.

There is a need to research this optimal resolution/distance calculation, and then to focus on those resolutions.

It makes no sense to put a 16000 by 9000 resolution screen on a desk 3 feet away from you; it would take plenty of unnecessary GPU calculations.

lyeoh - Saturday, September 12, 2009

In practice, the "Single Large Surface" approach will not be as good as the O/S being aware of multiple monitors. Why? Because the monitors do not present a single contiguous display surface; there are gaps. So if the O/S isn't aware, in certain monitor setups it's going to plonk a dialog box across one or more boundaries, making it hard to read. And when you maximize a window it gets ridiculous. I don't think it helps to have your taskbar stretched across 3 screens either.

Nvidia actually does let you treat two displays as one (span mode), but they also allow you to expose them to Windows separately (dual view). I prefer dual view to span, and don't have many problems with it.

I can quickly move windows to different monitors with hotkeys (or just plain mouse dragging). The problem I have is moving "full screen" stuff to different monitors usually doesn't work.

"Single Large Surface"/span mode is actually going to be suboptimal for most users. Just a kludge to support OSes that can't handle 6 displays in various configs.

kekewons - Saturday, September 12, 2009

FWIW, I suspect SLS will incorporate some sort of "hard frame" adjustment...if only because freeware "multimonitor" solutions like SoftTH already do so even now. But, at the same time, I'll also say I wouldn't be surprised to see this driver team miss out completely, re: a version 1.0 release.

Because it's happened before...

snarfbot - Friday, September 11, 2009

um, projectors? buy 6 cheap LED projectors, 800x480 resolution each, and you can easily make a surround setup with them too.

best part is no bezels. that's the ticket.