µATX Part 1: ATI Radeon Xpress 1250 Performance Review

by Gary Key on August 28, 2007 7:00 AM EST. Posted in Motherboards

Power Consumption

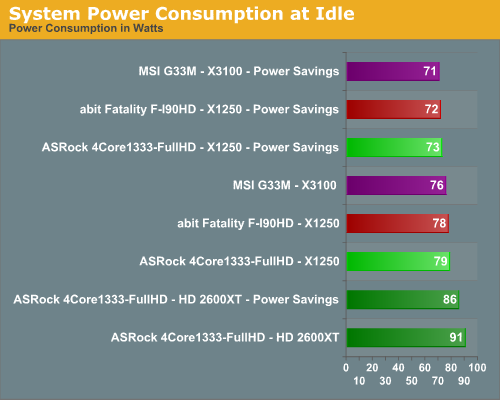

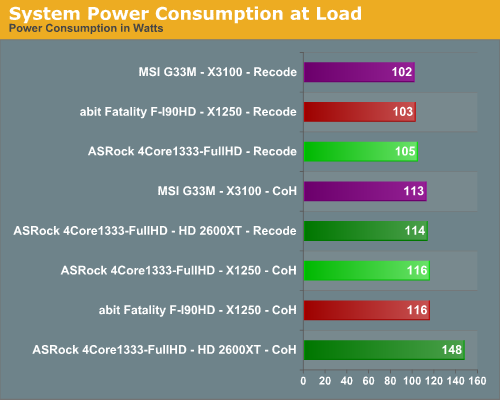

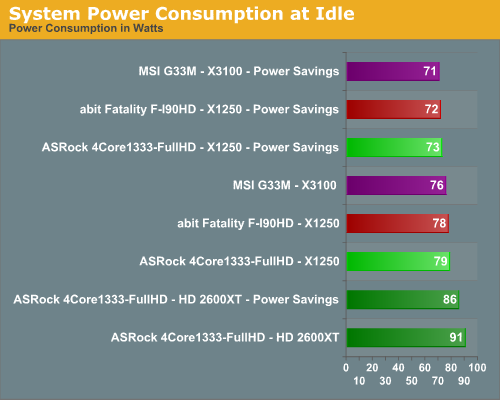

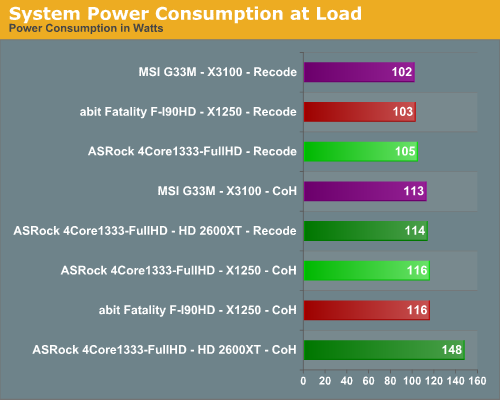

We measured power consumption in three states: at idle, sitting at the Vista desktop after 10 minutes of inactivity, and under load while running our Nero Recode 2 and Company of Heroes benchmarks. At each setting, all available power saving options were enabled in the BIOS and operating system to keep power consumption to a minimum, although the biggest difference obviously occurs at idle.

Our tests surprised us: both Radeon Xpress 1250 platforms consumed slightly more power than the Intel G33 platform, with or without power management turned on. Compared to the X1250, the AMD HD 2600 XT consumes 13W more power at idle in power savings mode and 12W more without power saving features enabled. The 2600 XT also uses 9W more power in Nero Recode 2 and 32W more power under load in Company of Heroes. We will have NVIDIA 8600 GTS results in our next article. Our only problem during testing with the X1250 boards occurred during the 3DMark06 and PCMark05 benchmark runs, when the CPUs remained in a low idle state. This was quickly solved by changing the power state to the Performance setting.

1080p CPU Utilization

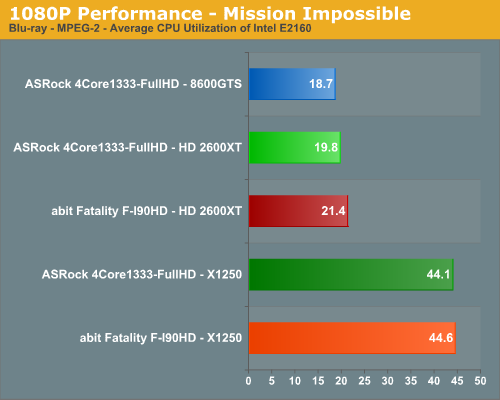

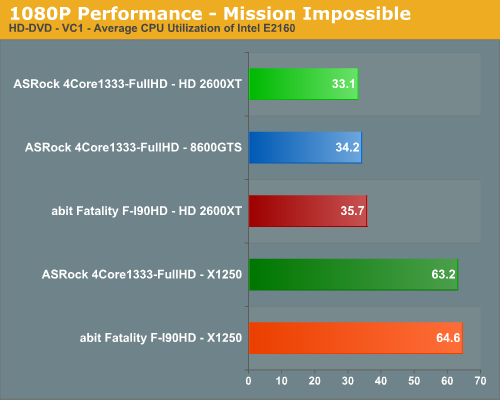

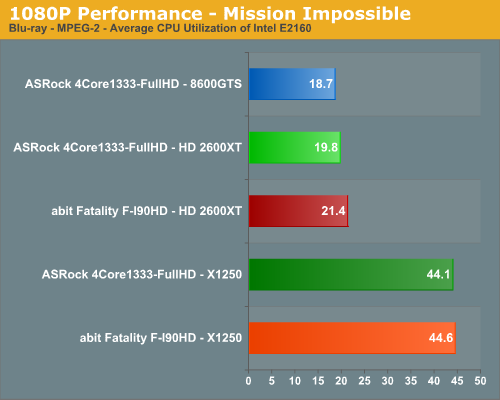

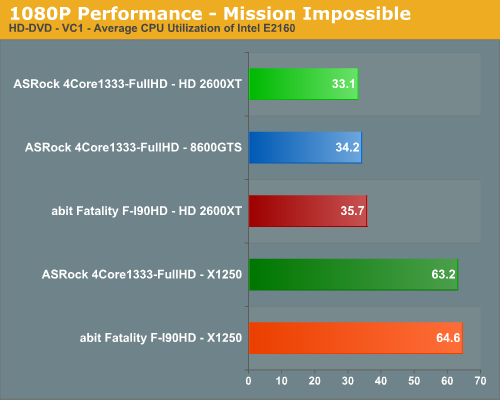

With the release of the Catalyst 7.8 driver, the X1250 graphics core has been touted as being able to play back high definition content at 1080p with an appropriate CPU and CyberLink's PowerDVD player. Using our E2160 processor, we confirmed this claim with our Mission Impossible titles, which are encoded in the VC-1 format on HD-DVD and the less demanding MPEG-2 format on Blu-ray. We also tested Casino Royale, which is encoded in H.264, and found the average CPU utilization rate to be around 89% with the E2160. While the majority of the movie was viewable, certain action sequences drove CPU usage to 100%, which resulted in the movie stopping or PowerDVD locking up.

ASRock recommends an E6550 processor for 1080p playback, and we did not notice any issues with this processor during testing. However, on the ASRock board this means the FSB is automatically overclocked to 333MHz, as the Radeon Xpress 1250 does not officially support the 1333MHz FSB rate required by this processor. The abit board will boot this processor, but the FSB rate is locked at 266MHz, which defeats the purpose of using a 1333MHz FSB capable CPU. We found that an E6420 is perfectly suited for playing back H.264 content at 1080p on either board.
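To put rough numbers on the FSB issue, here is a minimal sketch of the arithmetic. It assumes the quad-pumped FSB used by Core 2 processors (four transfers per base clock) and a 7x multiplier for the E6550, which follows from its 2.33GHz rating on a 1333MHz FSB. The function names are hypothetical, and it further assumes the multiplier stays at 7x when the abit board locks the base clock at 266MHz, which we did not verify.

```python
# Sketch only: relates the base FSB clock to the quad-pumped FSB rating
# and the resulting CPU core clock. Names here are illustrative.

QUAD_PUMP = 4          # Core 2 FSB moves data four times per base clock
E6550_MULTIPLIER = 7   # assumption: 2.33GHz / 333MHz base clock


def effective_fsb(base_mhz):
    """Return the quad-pumped FSB rating for a given base clock in MHz."""
    return base_mhz * QUAD_PUMP


def core_clock(base_mhz, multiplier):
    """Return the CPU core clock in MHz for a base clock and multiplier."""
    return base_mhz * multiplier


if __name__ == "__main__":
    # Base clocks as quoted in the text (rounded from 333.33 and 266.67).
    boards = (("ASRock, FSB overclocked", 333), ("abit, FSB locked", 266))
    for board, base in boards:
        fsb = effective_fsb(base)
        cpu = core_clock(base, E6550_MULTIPLIER)
        print(f"{board}: base {base}MHz, FSB {fsb}MHz, CPU {cpu}MHz")
```

Under these assumptions the E6550 would run roughly 470MHz slower on the abit board than at its rated speed, which is why pairing it with a locked 266MHz FSB makes little sense.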

The playback quality of the X1250 at 1080p was perfectly acceptable on both boards, and we did not see any real differences between the HDMI output of the abit board and the DVI output on the ASRock board. The high definition video output quality is definitely a notch below our HD 2600 XT and NVIDIA 8600 GTS cards, with a fair amount of moiré and banding on the Vatican walls after Tom Cruise drops to the ground and again on the stairs in the next sequence inside the Vatican. Color saturation and contrast are very good, while noise reduction and deinterlacing on the X1250 are acceptable but clearly a step below our 2600 XT and 8600 GTS cards. Unfortunately, we cannot show screenshot comparisons between our graphics solutions under Vista due to the DRM police hounding us; we are working to find a solution that doesn't involve a camera aimed at the display.

22 Comments

Brick88 - Thursday, August 30, 2007 - link

doesn't anyone feel that AMD is cutting itself short? Yes Intel is their primary competitor but by not producing an igp chipset for intel based processors, they are cutting themselves out of a big market. Intel ships the majority of processors and AMD will need every single stream of revenue to compete with Intel.

bunga28 - Wednesday, August 29, 2007 - link

Charles Dickens would roll over in his grave if he saw you comparing these 2 boards by paraphrasing his work.

Myrandex - Tuesday, August 28, 2007 - link

I don't know why they would ever put that name on the board. The fact that it is getting beat by an ASRock motherboard in gaming performance is pathetic, since that name is supposed to be all about gaming (no offense to the ASRockers out there, as they aren't bad boards; I have more experience with them than fatal1ty's anyways).

Etern205 - Tuesday, August 28, 2007 - link

On the "abit Fatality F-I90HD: Feature Set" page, that Abit EQ software interface of a car looks familiar, like one of those real models.

Like this one:

http://img404.imageshack.us/img404/8490/toyotafjhh...

source:

http://www.automobilemag.com/new_car_previews/2006...

strikeback03 - Tuesday, August 28, 2007 - link

I was thinking Hummer, either way...

Etern205 - Tuesday, August 28, 2007 - link

Not really because the face of a Hummer is different than the one from Toyota. The face of a Hummer has vertical grill bars, while the Toyota does not.

strikeback03 - Wednesday, August 29, 2007 - link

However the Hummer has the full-width chrome fascia, the Toyota has a part-width sorta satin chrome thing. I highly doubt they licensed an image of either, so it can't look exactly like any vehicle. I remember a lawsuit between Jeep and Hummer over the 7 vertical slots in each other's grilles several years ago.

eBauer - Tuesday, August 28, 2007 - link

Why are the Xpress 1250 systems running tighter timings (4-4-4-12) where the G33 system is running looser timings (5-5-5-12)?

strikeback03 - Tuesday, August 28, 2007 - link

Top of page 8

Mazen - Tuesday, August 28, 2007 - link

I have a 6000+ (gift) and I am just wondering whether I should go with a 690G or wait for nvidia's upcoming MCP 78. Can't wait for the 690G review... thoughts anyone?