Real World DirectX 10 Performance: It Ain't Pretty

by Derek Wilson on July 5, 2007 9:00 AM EST - Posted in GPUs

Lost Planet: Extreme Condition

Lost Planet: Extreme Condition is a port of an Xbox 360 game. Well, to be honest, it's almost as if they just tacked on support for a keyboard and mouse and recompiled it for Windows on x86 hardware. We haven't done a full review of the game, having played only through the intro and first mission, but our initial assessment is that this is absolutely the worst console port ever.

Unless you have an Xbox 360 controller for your PC, the game is almost unplayable. The menus are clunky and difficult to navigate. Moving in and out of different sections of the main menu requires combinations of left and right clicking, which is patently absurd. If you want to change resolutions during gameplay, you must click the mouse no fewer than a dozen times, and that count doesn't include navigating menus by hovering (who does that?) or the click required to grab the scroll bar in the settings menu.

The console shooter has always had to work hard to compete with the PC. Halo and Halo 2 did quite a good job of stepping up to the plate, and Gears of War really hit one out of the park. But simply porting a mediocre console shooter to the PC does not a great game make.

That said, if you can get past the clunky controls and stunted interface, the visuals in this game are quite stunning. It can also actually be fun and satisfying to shoot up a bunch of Akrid. Our first impression is that a good game could be buried underneath all of the problems inherent in the PC port of Lost Planet, but we'll have to take a closer look to draw a final conclusion on this one.

For now, the important information to take away is what we get from the DirectX 10 version of the game. While we haven't found an explicit list of the differences, our understanding is that the features are generally the same. Under DirectX 10, gamers can choose a "high" shadow quality option, while DX9 is limited to "medium". Other than this, lighting seems slightly different (though not really better) under DX10. From what we've seen reported, Capcom's goal with DX10 on Lost Planet is to increase performance over its DX9 version.

Lost Planet DirectX 9

Lost Planet DirectX 10

To make the comparison as straightforward as possible, we used the same settings under DX9 and DX10: everything on high except shadow quality, which stays at medium because DX9 doesn't offer the high option.

DirectX 9 Tests

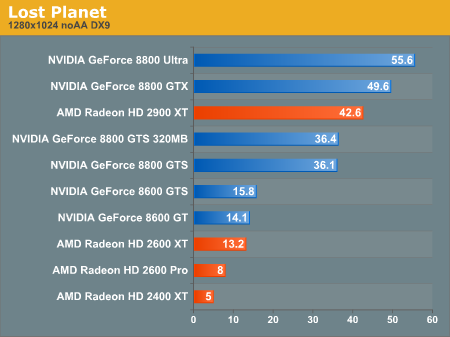

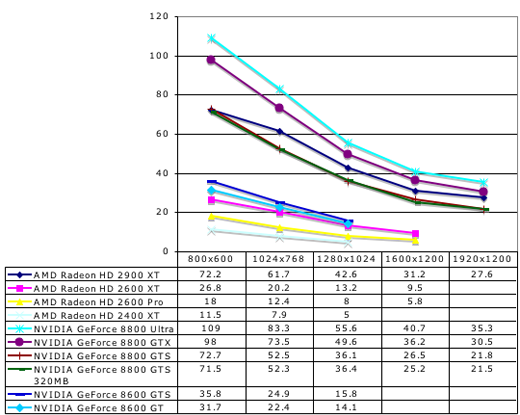

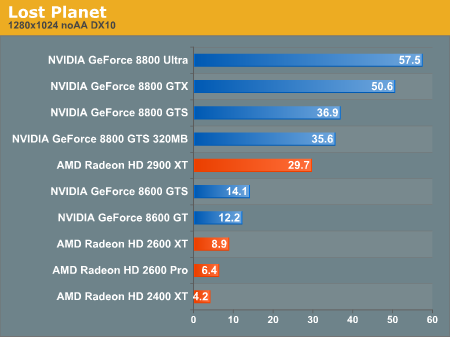

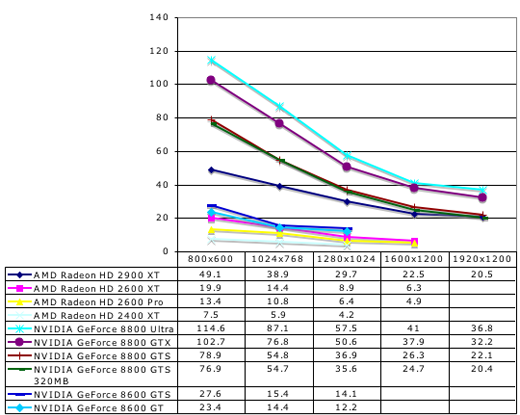

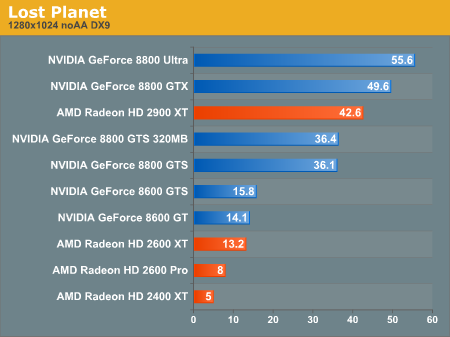

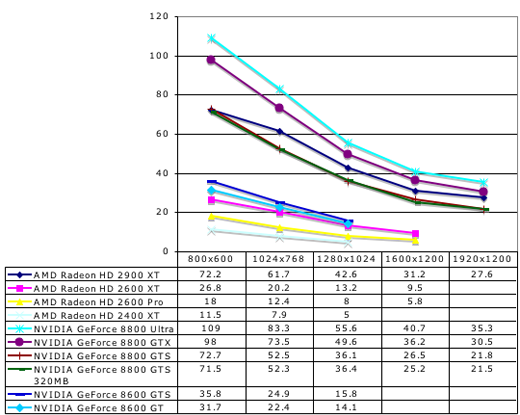

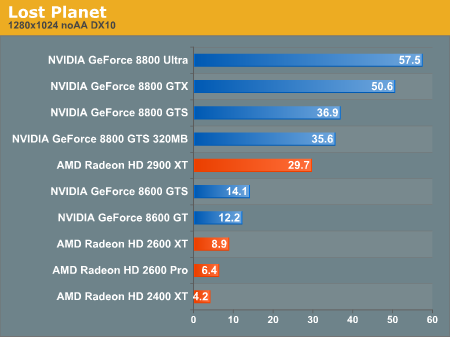

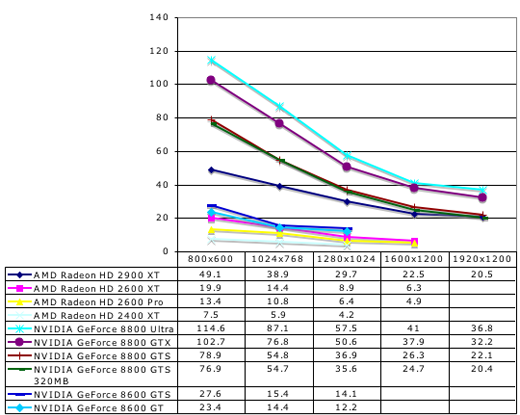

Lost Planet DX9 Performance

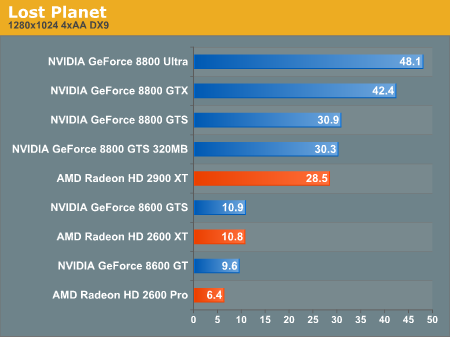

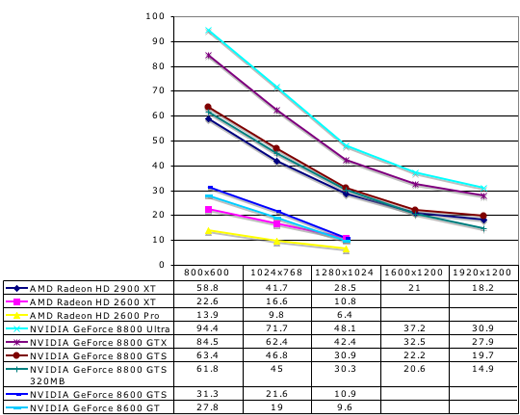

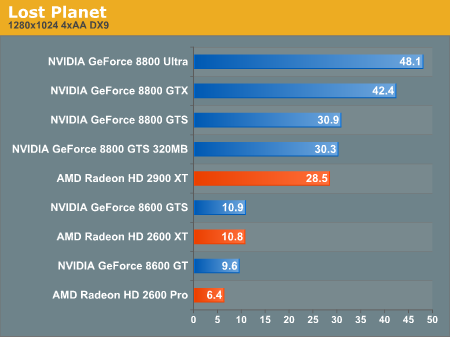

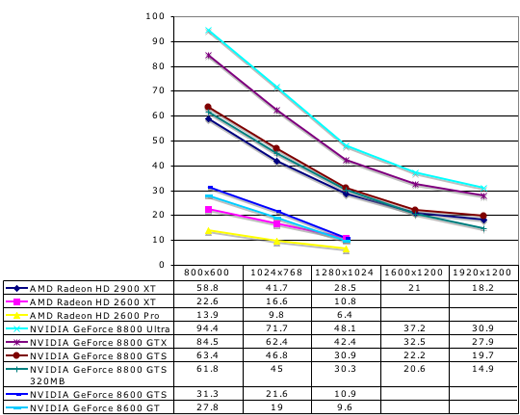

Lost Planet 4xAA DX9 Performance

At the risk of sounding like a broken record, we need to disable quite a few settings to make our low-end cards playable. In this case, even under DX9 without AA enabled, they don't perform well. We plan to test the 8500 and 8400 in the near future, and we'll be sure to go back and see whether we can run DX10 tests at settings low enough to produce interesting results for these budget and mainstream parts.

Once again, the 2900 XT performs well under DX9 but slips a little behind with 4xAA due to its lack of MSAA resolve hardware.
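Without dedicated MSAA resolve hardware, the resolve step (collapsing each pixel's sub-samples into one final color) has to be done elsewhere; as we understand it, on the 2900 XT that work lands on the shader core. As a rough illustration of what that step computes, here is a minimal box-filter resolve in Python. The function name, the nested-list buffer layout, and the plain unweighted average are assumptions for the sake of the sketch; this is not AMD's driver or shader code, and real resolves may weight samples differently or work in a different color space.

```python
from typing import List, Tuple

# One RGB color with channels as floats (assumed layout for this sketch).
Color = Tuple[float, float, float]


def box_resolve(samples: List[List[List[Color]]]) -> List[List[Color]]:
    """Hypothetical MSAA resolve: collapse N sub-samples per pixel into one color.

    samples[y][x] holds the list of sub-sample colors for pixel (x, y);
    with 4xAA each pixel has four entries. Each output pixel is the
    unweighted (box filter) mean of its sub-samples.
    """
    resolved: List[List[Color]] = []
    for row in samples:
        out_row: List[Color] = []
        for pixel_samples in row:
            n = len(pixel_samples)
            # Sum each channel across the sub-samples, then divide by the count.
            r = sum(s[0] for s in pixel_samples) / n
            g = sum(s[1] for s in pixel_samples) / n
            b = sum(s[2] for s in pixel_samples) / n
            out_row.append((r, g, b))
        resolved.append(out_row)
    return resolved
```

The cost scales with width x height x sample count: at 1920x1200 with 4xAA, for instance, a single resolve reads about 9.2 million samples, every frame. Fixed-function resolve units take that load off the shaders; doing it in shader code competes with the rest of the frame's shading work.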

DirectX 10 Tests

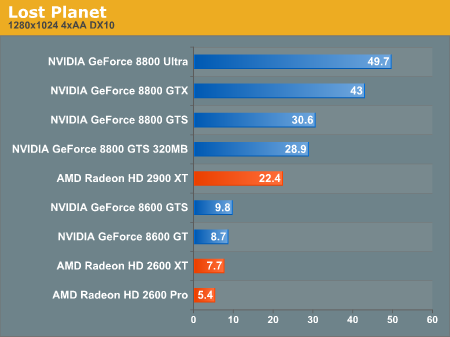

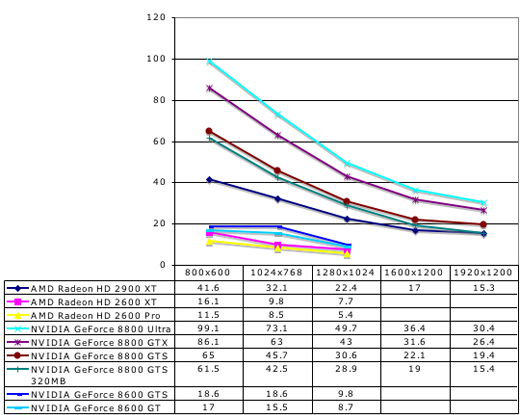

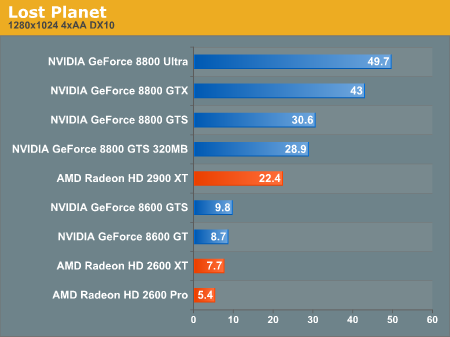

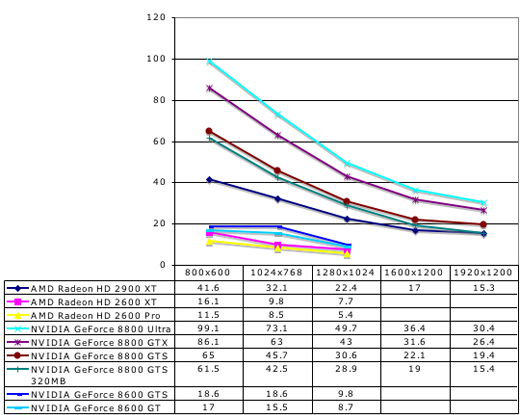

Lost Planet DX10 Performance

Lost Planet 4xAA DX10 Performance

Incredibly, NVIDIA's 162 drivers combined with the retail version of Lost Planet actually deliver roughly equivalent performance under DX9 and DX10. Capcom's goal may be higher performance under DX10, but we would still expect both AMD and NVIDIA to have a long way to go before their DX10 drivers match the quality of their DX9 drivers.
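"Roughly equivalent" can be made concrete by expressing each card's DX10 frame rate as a percentage of its DX9 frame rate at identical settings, where 100% means parity. The sketch below assumes average frame rates as inputs; the function names and the 5% tolerance band are hypothetical choices for illustration, not a threshold used in our testing.

```python
def dx10_percent_of_dx9(dx9_fps: float, dx10_fps: float) -> float:
    """Return the DX10 frame rate as a percentage of the DX9 frame rate."""
    if dx9_fps <= 0:
        raise ValueError("dx9_fps must be positive")
    return 100.0 * dx10_fps / dx9_fps


def roughly_equivalent(dx9_fps: float, dx10_fps: float,
                       tolerance_pct: float = 5.0) -> bool:
    """Hypothetical parity check: DX10 lands within +/- tolerance_pct of 100%."""
    return abs(dx10_percent_of_dx9(dx9_fps, dx10_fps) - 100.0) <= tolerance_pct
```

A result above 100% would mean DX10 is actually faster, which is what Capcom's reported goal implies; values near 100% indicate parity.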

59 Comments

slickr - Monday, July 9, 2007

Great review, that's what we all need to get Nvidia and ATI to stop bitchin' around and stealing our money with slow hardware that can't even outperform last generation's hardware. If you ask me, the 8800 Ultra should be the $150 midrange card here, and the top end should be some graphics card with 320 stream processors, 1GB of GDDR4 clocked at 2.4GHz and a 1000MHz core clock. Same from AMD: they need the X2900XT to be the $150 midrange card, and the top of the line should be some graphics card with 640 stream processors, 1GB of GDDR4 at 2.4GHz and a 1000MHz core clock! More of these kinds of reviews, please, so we can make it clear to ATI and Nvidia that we won't buy their hardware if it's not good!!!

ielmox - Tuesday, July 24, 2007

I really enjoyed this review. I have been agonizing over selecting an affordable graphics card that will give me the kind of value I enjoyed for years from my trusty and cheap GF5900xt (which runs Prey, Oblivion, and EQ2 at decent quality and frame rates), and I am just not seeing it. I'm avoiding ATI until they bring their power use under control and generally get their act together. I'm avoiding nVidia because they're gouging the hell out of the market. And the previous-generation nVidia hardware is still quite costly because nVidia know very well that they've not provided much of an upgrade with the 8xxx family, unless you are willing to pay the high prices for the 8800 series (what possessed them to use a 128-bit bus on everything below the 8800?? Did they WANT their hardware to be crippled?).

As a gamer who doesn't want to be a victim of the "latest and greatest" trends, I want affordable performance and quality and I don't really see that many viable options. I believe we have this half-baked DX10 and Vista introduction to thank for it - system requirements keep rocketing upwards unreasonably but the hardware economics do not seem to be keeping pace.

AnnonymousCoward - Saturday, July 7, 2007

Thanks Derek for the great review. I appreciate the "%DX10 performance of DX9" charts, too.

Aberforth - Thursday, July 5, 2007

This article is ridiculous. Why would Nvidia and other DX10 developers want gamers to buy a G80 card for high DX10 performance? DX10 is all about optimization; the performance depends on how well it is implemented, not on blindly using APIs. Vista's driver model is different and DX10 is different. The present state of Nvidia's drivers is horrible; we can't even think about DX10 performance at this stage. The DX10 version of Lost Planet runs horribly even though it is not graphically different from the DX9 version. So this isn't DX10's or the GPUs' fault, it's all about the code and the drivers. Also, the CEO of Crytek has confirmed that an Nvidia 8800 (possibly an 8800 GTS) and an E6600 CPU can max out Crysis in DX10 mode.

Way back when DX9 came out, I remember reading an article about how it sucked badly. So I'm definitely not gonna buy this one.

titan7 - Thursday, July 12, 2007

No, it's not about sucky code or sucky drivers. It's about shaders. Look at how much faster cards with more shader power are in D3D9. Now, in D3D10, longer, richer, prettier shaders are used that take more power to process. It's not about optimization this time, as the IHVs have already figured out how to write optimized drivers; it's about raw FLOPS for shader performance.

DerekWilson - Thursday, July 5, 2007

DX9 performance did (and does) "suck badly" on early DX9 hardware. DX10 is a good thing, and pushing the limits of hardware is a good thing.

Yes, drivers and game code can be rocky right now, but the 162 drivers from NVIDIA are quite stable, and NV is confident in their performance. Lost Planet shows that NV's drivers are at least getting close to parity with DX9.

This isn't an article about DX10 not being good, it's an article about early DX10 hardware not being capable of delivering all that DX10 has to offer.

Which is as true now as it was about early DX9 hardware.

piroroadkill - Friday, July 6, 2007

Wait, performance on the Radeon 9700 Pro sucked? I seem to remember DirectX 9 games from several years later still being playable...

DerekWilson - Saturday, July 7, 2007

Yeah, the 9700 Pro sucks ... when actually running real-world DX9 code. Try running BF2 at any playable setting (100% view distance, high shadows and lighting). This is really where games started using DX9 (to my knowledge, BF2 was actually the first game to require DX9 support to run).

But many other games still include the ability to run 1.x shaders rather than 2.0. Oblivion, for example, can turn the detail way down to the point where no DX9-heavy features are running. But if you try to enable them on a 9700 Pro, it will not run well at all. I actually haven't tested Oblivion at the lowest quality, so I don't know whether it can be playable on a 9700 Pro, but if it is, it wouldn't even be the same game (visually).

DerekWilson - Saturday, July 7, 2007

BTW, BF2 was released less than 3 years after the 9700 Pro (Aug '02 to June '05).

Aberforth - Thursday, July 5, 2007

Fine... I just want to know why a DX10 game called Crysis was running at 2048x1536 at 60+ FPS on a GeForce 8800 GTX.

crysis-online.com/?id=172